Elastic Agent data streams for Fleet

Elastic Agent uses data streams to store time series data across multiple indices while giving you a single named resource for requests. Data streams are well-suited for logs, metrics, traces, and other continuously generated data. They offer a host of benefits over other indexing strategies:

- Reduced number of fields per index: Indices only need to store a specific subset of your data, meaning no more indices with hundreds or thousands of fields. This leads to better space efficiency and faster queries. As an added bonus, only relevant fields are shown in Discover.

- More granular data control: For example, file system, load, CPU, network, and process metrics are sent to different indices, each potentially with its own rollover, retention, and security permissions.

- Flexible: Use the custom namespace component to divide and organize data in a way that makes sense to your use case or company.

- Fewer ingest permissions required: Data ingestion only requires permissions to append data.

Elastic Agent uses the Elastic data stream naming scheme to name data streams. The naming scheme splits data into different streams based on the following components:

- type: A generic type describing the data, such as logs, metrics, traces, or synthetics.

- dataset: The dataset is defined by the integration and describes the ingested data and its structure for each index. For example, you might have a dataset for process metrics with a field describing whether the process is running or not, and another dataset for disk I/O metrics with a field describing the number of bytes read.

- namespace: A user-configurable arbitrary grouping, such as an environment (dev, prod, or qa), a team, or a strategic business unit. A namespace can be up to 100 bytes in length (multibyte characters will count toward this limit faster). Using a namespace makes it easier to search data from a given source by using a matching pattern. You can also use matching patterns to give users access to data when creating user roles.

By default, the namespace defined for an Elastic Agent policy is propagated to all integrations in that policy. If you’d like to define a more granular namespace for a policy:

- In Kibana, go to Integrations.

- On the Installed integrations tab, select the integration that you’d like to update.

- Open the Integration policies tab.

- From the Actions menu next to the integration, select Edit integration.

- Open the advanced options and update the Namespace field. Data streams from the integration will now use the specified namespace rather than the default namespace inherited from the Elastic Agent policy.

The naming scheme separates each component with a - character:

<type>-<dataset>-<namespace>

For example, if you’ve set up the Nginx integration with a namespace of prod, Elastic Agent uses the logs type, nginx.access dataset, and prod namespace to store data in the following data stream:

logs-nginx.access-prod

Alternatively, if you use the APM integration with a namespace of dev, Elastic Agent stores data in the following data stream:

traces-apm-dev
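The naming scheme above can be sketched in a few lines of Python. This is only an illustration: the helper names are hypothetical, the default namespace value of default is an assumption, and the rule that type and dataset may not contain a - character is assumed from the fact that - is the separator.

```python
# Sketch of the <type>-<dataset>-<namespace> scheme; helper names are hypothetical.
MAX_NAMESPACE_BYTES = 100  # limit stated in the namespace description


def data_stream_name(type_: str, dataset: str, namespace: str = "default") -> str:
    """Join the three components with '-' after basic validation."""
    if "-" in type_ or "-" in dataset:
        # Assumption: '-' is reserved as the separator, so it may not
        # appear inside the type or the dataset.
        raise ValueError("type and dataset must not contain '-'")
    if len(namespace.encode("utf-8")) > MAX_NAMESPACE_BYTES:
        # The limit is in bytes, so multibyte characters reach it sooner.
        raise ValueError("namespace exceeds 100 bytes")
    return f"{type_}-{dataset}-{namespace}"


def split_data_stream_name(name: str) -> tuple:
    """Split a name back into (type, dataset, namespace)."""
    type_, dataset, namespace = name.split("-", 2)
    return type_, dataset, namespace


print(data_stream_name("logs", "nginx.access", "prod"))  # logs-nginx.access-prod
print(split_data_stream_name("traces-apm-dev"))          # ('traces', 'apm', 'dev')
```

Splitting with a maximum of two separators keeps the type and dataset intact and leaves everything after the second - as the namespace.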

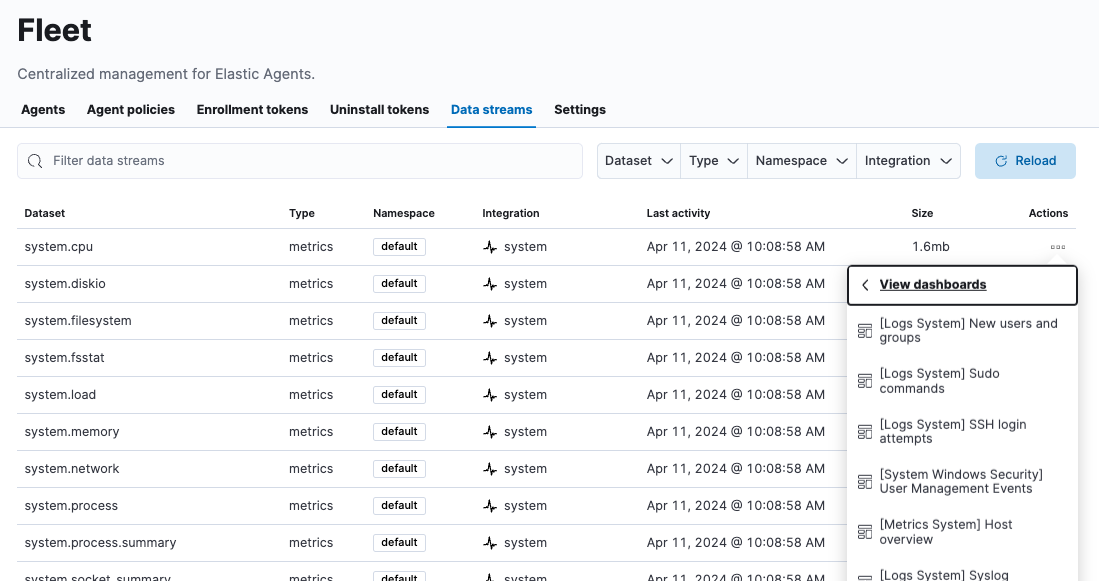

All data streams, and the pre-built dashboards that they ship with, are viewable on the Fleet Data Streams page:

If you’re familiar with the concept of indices, you can think of each data stream as a separate index in Elasticsearch. Under the hood though, things are a bit more complex. All of the juicy details are available in Elasticsearch Data streams.

When searching your data in Kibana, you can use a data view to search across all or some of your data streams.
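To get a feel for how a matching pattern selects data streams, the following sketch uses Python's fnmatch module as a stand-in for wildcard matching. The list of stream names is illustrative, and fnmatch only approximates the way Elasticsearch and Kibana resolve index patterns.

```python
from fnmatch import fnmatchcase

# Illustrative data stream names following <type>-<dataset>-<namespace>.
STREAMS = [
    "logs-nginx.access-prod",
    "logs-nginx.error-prod",
    "logs-nginx.access-dev",
    "metrics-system.cpu-prod",
    "traces-apm-dev",
]


def matching_streams(pattern: str, names: list) -> list:
    """Return the names that match a wildcard pattern, in their original order."""
    return [name for name in names if fnmatchcase(name, pattern)]


print(matching_streams("logs-*-prod", STREAMS))
# ['logs-nginx.access-prod', 'logs-nginx.error-prod']
print(matching_streams("*-*-dev", STREAMS))
# ['logs-nginx.access-dev', 'traces-apm-dev']
```

A pattern such as logs-*-prod narrows a search to production logs, while *-*-dev selects every data stream in the dev namespace regardless of type.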

An index template is a way to tell Elasticsearch how to configure an index when it is created. For data streams, the index template configures the stream’s backing indices as they are created.

Elasticsearch provides the following built-in, ECS-based templates: logs-*-*, metrics-*-*, and synthetics-*-*. Elastic Agent integrations can also provide dataset-specific index templates, like logs-nginx.access-*. These templates are loaded when the integration is installed, and are used to configure the integration’s data streams.
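When more than one index template pattern matches a data stream name, Elasticsearch applies the matching template with the highest priority. The sketch below illustrates that selection; the priority numbers are illustrative assumptions rather than values taken from this guide, and fnmatch only approximates Elasticsearch pattern matching.

```python
from fnmatch import fnmatchcase

# (index pattern, priority) pairs; the priorities are illustrative assumptions.
TEMPLATES = [
    ("logs-*-*", 100),             # built-in template
    ("logs-nginx.access-*", 200),  # dataset-specific template from an integration
]


def select_template(stream: str, templates: list):
    """Return the pattern of the highest-priority matching template, or None."""
    candidates = [t for t in templates if fnmatchcase(stream, t[0])]
    if not candidates:
        return None
    return max(candidates, key=lambda t: t[1])[0]


print(select_template("logs-nginx.access-prod", TEMPLATES))  # logs-nginx.access-*
print(select_template("logs-custom.app-prod", TEMPLATES))    # logs-*-*
```

In this sketch the dataset-specific template wins for its own data streams, and the generic template remains the fallback for any other logs data stream.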

Custom index mappings may conflict with the mappings defined by the integration and may break the integration in Kibana. Do not change or customize any default mappings.

When you install an integration, Fleet creates two default @custom component templates:

- A @custom component template allowing customization across all documents of a given data stream type, named following the pattern: <data_stream_type>@custom.

- A @custom component template for each data stream, named following the pattern: <name_of_data_stream>@custom.

The @custom component template specific to a data stream takes precedence over the data stream type @custom component template.
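One way to picture this precedence is as a recursive merge in which the data stream's settings override the type-level settings key by key. The following is a sketch of that idea, not Elasticsearch's actual merge code, and the setting values (including the policy name logs-default-policy) are hypothetical.

```python
def merge_settings(base: dict, override: dict) -> dict:
    """Recursively merge two settings dicts; values from override win."""
    merged = dict(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_settings(merged[key], value)
        else:
            merged[key] = value
    return merged


# Hypothetical contents of the type-level template (for example logs@custom).
type_custom = {
    "index": {"number_of_replicas": "1", "lifecycle": {"name": "logs-default-policy"}}
}
# Hypothetical contents of the template specific to one data stream.
stream_custom = {"index": {"lifecycle": {"name": "my_policy"}}}

print(merge_settings(type_custom, stream_custom))
# {'index': {'number_of_replicas': '1', 'lifecycle': {'name': 'my_policy'}}}
```

The replica count from the type-level template survives, while the lifecycle policy is taken from the more specific template.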

You can edit a @custom component template to customize your Elasticsearch indices:

- Open Kibana and go to the Index Management page using the navigation menu or the global search field, then open the Data Streams tab.

- Find and click the name of the integration data stream, such as logs-cisco_ise.log-default.

- Click the index template link for the data stream to see the list of associated component templates.

- Go to the Index Management page using the navigation menu or the global search field, and open the Component Templates tab.

- Search for the name of the data stream’s custom component template and click the edit icon.

- Add any custom index settings, metadata, or mappings. For example, you may want to:

  - Customize the index lifecycle policy applied to a data stream. See Configure a custom index lifecycle policy in the APM Guide for a walk-through. Specify the lifecycle name in the index settings:

    { "index": { "lifecycle": { "name": "my_policy" } } }

  - Change the number of replicas per index. Specify the number of replica shards in the index settings:

    { "index": { "number_of_replicas": "2" } }

Changes to component templates are not applied retroactively to existing indices. For changes to take effect, you must create a new write index for the data stream. You can do this with the Elasticsearch Rollover API.
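The same change can also be expressed as two Elasticsearch API requests: one that updates the @custom component template and one that rolls the data stream over. The sketch below only builds and prints the requests and does not contact a cluster. The function name is hypothetical, and the template name logs-nginx.access@custom is an assumption about how the template for this data stream is named.

```python
import json


def custom_template_requests(data_stream: str, custom_template: str, settings: dict) -> list:
    """Return (method, path, body) tuples; nothing is sent to a cluster."""
    return [
        # Update the @custom component template with the new index settings.
        ("PUT", f"_component_template/{custom_template}", {"template": {"settings": settings}}),
        # Roll the data stream over so that a new write index picks up the change.
        ("POST", f"{data_stream}/_rollover", None),
    ]


requests = custom_template_requests(
    data_stream="logs-nginx.access-prod",
    custom_template="logs-nginx.access@custom",  # assumed template name
    settings={"index": {"number_of_replicas": "2"}},
)
for method, path, body in requests:
    print(method, path)
    if body is not None:
        print(json.dumps(body, indent=2))
```

Keep in mind that a PUT to a component template replaces its existing content, so include any settings you want to keep.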

Use the index lifecycle management (ILM) feature in Elasticsearch to manage your Elastic Agent data stream indices as they age. For example, create a new index after a certain period of time, or delete stale indices to enforce data retention standards.

Installed integrations may have one or many associated data streams, each with an associated ILM policy. By default, these data streams use an ILM policy that matches their data type. For example, the data stream metrics-system.logs-* uses the metrics ILM policy as defined in the metrics-system.logs index template.
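As a rough illustration of what such a policy looks like, the sketch below builds a minimal ILM policy body with a rollover action in the hot phase and a delete phase for retention. The function name and the 30d and 90d values are assumptions chosen for the example, and the policy name my_policy is a placeholder.

```python
import json


def ilm_policy(rollover_max_age: str, delete_after: str) -> dict:
    """Build a minimal ILM policy body: roll over by age, then delete old indices."""
    return {
        "policy": {
            "phases": {
                # Create a new backing index once the write index reaches this age.
                "hot": {"actions": {"rollover": {"max_age": rollover_max_age}}},
                # Delete a backing index this long after it was rolled over.
                "delete": {"min_age": delete_after, "actions": {"delete": {}}},
            }
        }
    }


print("PUT _ilm/policy/my_policy")
print(json.dumps(ilm_policy("30d", "90d"), indent=2))
```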

Want to customize your index lifecycle management? See Tutorials: Customize data retention policies.

Elastic Agent integration data streams ship with a default ingest pipeline that preprocesses and enriches data before indexing. The default pipeline should not be directly edited as changes can easily break the functionality of the integration.

Starting in version 8.4, all default ingest pipelines call a non-existent and non-versioned "@custom" ingest pipeline. If left uncreated, this pipeline has no effect on your data. However, if added to a data stream and customized, this pipeline can be used for custom data processing, adding fields, sanitizing data, and more.

Starting in version 8.12, ingest pipelines can be configured to process events at various levels of customization.

If you create a custom index pipeline, Elastic is not responsible for ensuring that it indexes and behaves as expected. Creating a custom pipeline involves custom processing of the incoming data, which should be done with caution and tested carefully.

- global@custom: Apply processing to all events.

  For example, the following pipeline API request adds a new field my-global-field for all events:

  PUT _ingest/pipeline/global@custom

  { "processors": [ { "set": { "description": "Process all events", "field": "my-global-field", "value": "foo" } } ] }

- ${type}: Apply processing to all events of a given data type.

  For example, the following request adds a new field my-logs-field for all log events:

  PUT _ingest/pipeline/logs@custom

  { "processors": [ { "set": { "description": "Process all log events", "field": "my-logs-field", "value": "foo" } } ] }

- ${type}-${package}.integration: Apply processing to all events of a given type in an integration.

  For example, the following request creates a logs-nginx.integration@custom pipeline that adds a new field my-nginx-field for all log events in the Nginx integration:

  PUT _ingest/pipeline/logs-nginx.integration@custom

  { "processors": [ { "set": { "description": "Process all nginx events", "field": "my-nginx-field", "value": "foo" } } ] }

  Note that .integration is included in the pipeline pattern to avoid possible collision with existing dataset pipelines.

- ${type}-${dataset}: Apply processing to a specific dataset.

  For example, the following request creates a metrics-system.cpu@custom pipeline that adds a new field my-system.cpu-field for all CPU metrics events in the System integration:

  PUT _ingest/pipeline/metrics-system.cpu@custom

  { "processors": [ { "set": { "description": "Process all events in the system.cpu dataset", "field": "my-system.cpu-field", "value": "foo" } } ] }

Custom pipelines can directly contain processors or you can use the pipeline processor to call other pipelines that can be shared across multiple data streams or integrations. These pipelines will persist across all version upgrades.
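The four customization levels map onto pipeline names in a predictable way. The sketch below lists the @custom pipeline names that apply to one data stream, from most general to most specific, and builds a pipeline body that delegates to a shared pipeline through the pipeline processor. The function names and the shared pipeline name shared-enrichment are hypothetical, and the package name is passed in explicitly because it is not assumed to be derivable from the dataset.

```python
import json


def custom_pipeline_names(type_: str, package: str, dataset: str) -> list:
    """List the @custom pipeline names for one data stream, general to specific."""
    return [
        "global@custom",
        f"{type_}@custom",
        f"{type_}-{package}.integration@custom",
        f"{type_}-{dataset}@custom",
    ]


def delegating_pipeline(shared_pipeline: str) -> dict:
    """Build a pipeline body that only calls another, shared pipeline."""
    return {"processors": [{"pipeline": {"name": shared_pipeline}}]}


names = custom_pipeline_names("logs", "nginx", "nginx.access")
print(names)
# ['global@custom', 'logs@custom', 'logs-nginx.integration@custom', 'logs-nginx.access@custom']

# Reuse one hypothetical shared pipeline from the dataset-level @custom pipeline.
print(f"PUT _ingest/pipeline/{names[-1]}")
print(json.dumps(delegating_pipeline("shared-enrichment"), indent=2))
```

Keeping the processing logic in a shared pipeline and calling it from several @custom pipelines avoids duplicating processors across data streams.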

If you have a custom pipeline defined that matches the naming scheme used for any Fleet custom ingest pipelines, this can produce unintended results. For example, if you have a pipeline named like one of the following:

- global@custom

- traces@custom

- traces-apm@custom

The pipeline may be unexpectedly called for other data streams in other integrations. To prevent this, do not use the naming schemes defined above when naming your own custom pipelines.

Refer to the breaking change in the 8.12.0 Release Notes for more detail and workaround options.
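A simple check can flag pipeline names that fall into the reserved scheme before you create them. The sketch below is a heuristic only: the function name is hypothetical, and the set of data stream types is an assumption based on the types named in this guide.

```python
# Data stream types named in this guide; treat the set as an assumption.
KNOWN_TYPES = {"logs", "metrics", "traces", "synthetics"}
SUFFIX = "@custom"


def collides_with_fleet_scheme(name: str) -> bool:
    """Return True if a pipeline name looks like a Fleet custom pipeline name."""
    if not name.endswith(SUFFIX):
        return False
    stem = name[: -len(SUFFIX)]
    if stem == "global":
        return True
    # Covers <type>@custom, <type>-<dataset>@custom,
    # and <type>-<package>.integration@custom.
    return stem.split("-", 1)[0] in KNOWN_TYPES


for candidate in ["global@custom", "traces@custom", "traces-apm@custom", "my-enrichment@custom"]:
    print(candidate, collides_with_fleet_scheme(candidate))
```

With this check, the first three names are reported as collisions, while my-enrichment@custom is not, because its first component is not a known data stream type.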

See Tutorial: Transform data with custom ingest pipelines to get started.