Secure your connection to Elasticsearch

The Logstash Elasticsearch output, input, and filter plugins, as well as monitoring and central management, support authentication and encryption over HTTPS.

Elasticsearch clusters are secured by default (starting in 8.0). You need to configure authentication credentials for Logstash in order to establish communication. Logstash throws an exception and the processing pipeline is halted if authentication fails.

In addition to configuring authentication credentials for Logstash, you need to grant authorized users permission to access the Logstash indices.

Security is enabled by default on the Elasticsearch cluster (starting in 8.0). You must enable TLS/SSL in the Elasticsearch output section of the Logstash configuration in order to allow Logstash to communicate with the Elasticsearch cluster.

Elasticsearch generates its own default self-signed Secure Sockets Layer (SSL) certificates at startup.

Logstash must establish a Secure Sockets Layer (SSL) connection before it can transfer data to a secured Elasticsearch cluster. Logstash must have a copy of the certificate authority (CA) that signed the Elasticsearch cluster’s certificates. When a new Elasticsearch cluster is started up without dedicated certificates, it generates its own default self-signed Certificate Authority at startup. See Starting the Elastic Stack with security enabled for more info.

Elasticsearch Service uses certificates signed by standard publicly trusted certificate authorities, so setting an ssl_certificate_authorities value is not necessary.

Elasticsearch Serverless simplifies safe, secure communication between Logstash and Elasticsearch.

Configure the Logstash Elasticsearch output plugin to use cloud_id and an api_key to establish safe, secure communication between Logstash and Elasticsearch Serverless. No additional SSL configuration steps are needed.

Configuration example:

output {
  elasticsearch {
    cloud_id => "<cloud id>"
    api_key => "<api key>"
  }
}

For more details, check out Grant access using API keys.

Our hosted Elasticsearch Service on Elastic Cloud simplifies safe, secure communication between Logstash and Elasticsearch. When you configure the Logstash Elasticsearch output plugin to use cloud_id with either the cloud_auth option or the api_key option, no additional SSL configuration steps are needed. Elastic Cloud Hosted is available on AWS, GCP, and Azure, and you can try it for free at https://cloud.elastic.co/registration.

Configuration example:

# Using cloud_id with cloud_auth
output {
  elasticsearch {
    cloud_id => "<cloud id>"
    cloud_auth => "<cloud auth>"
  }
}

# Using cloud_id with api_key
output {
  elasticsearch {
    cloud_id => "<cloud id>"
    api_key => "<api key>"
  }
}

For more details, check out Grant access using API keys or Sending data to Elastic Cloud Hosted.

If you are running Elasticsearch on your own hardware and using the Elasticsearch cluster’s default self-signed certificates, you need to complete a few more steps to establish secure communication between Logstash and Elasticsearch.

You need to:

- Copy the self-signed CA certificate from Elasticsearch and save it to Logstash.

- Configure the elasticsearch-output plugin to use the certificate.

These steps are not necessary if your cluster is using public trusted certificates.

By default, an on-premises Elasticsearch cluster generates a self-signed CA and creates its own SSL certificates when it starts. Logstash therefore needs its own copy of the self-signed CA from the Elasticsearch cluster to validate the certificate presented by Elasticsearch.

Copy the self-signed CA certificate from the Elasticsearch config/certs directory.

Save it to a location that Logstash can access, such as config/certs on the Logstash instance.

Use the elasticsearch output's ssl_certificate_authorities option to point to the certificate’s location.

Example

output {
  elasticsearch {
    hosts => ["https://..."]
    ssl_certificate_authorities => ['/etc/logstash/config/certs/ca.crt']
  }
}

- Note that the hosts URL must begin with https

- Path to the Logstash copy of the Elasticsearch certificate
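The https requirement on hosts can be checked before Logstash starts. The following is a minimal Python sketch, not part of Logstash; the function name all_hosts_use_https and the example host names are assumptions made for illustration.

```python
from urllib.parse import urlsplit


def all_hosts_use_https(hosts):
    """Return True only if hosts is non-empty and every URL uses the https scheme."""
    return bool(hosts) and all(urlsplit(host).scheme == "https" for host in hosts)


if __name__ == "__main__":
    # Hypothetical host lists; substitute the values from your own hosts setting.
    print(all_hosts_use_https(["https://es01.example.com:9200"]))
    print(all_hosts_use_https(["http://es01.example.com:9200"]))
```

A check like this only inspects the URL scheme. It does not confirm that the certificate presented by Elasticsearch is signed by the CA that Logstash has been given.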

For more information about establishing secure communication with Elasticsearch, see security is on by default.

Logstash needs to be able to manage index templates, create indices, and write and delete documents in the indices it creates.

To set up authentication credentials for Logstash:

Use the Management > Roles UI in Kibana or the role API to create a logstash_writer role. For cluster privileges, add manage_index_templates and monitor. For indices privileges, add write, create, and create_index.

Add manage_ilm for cluster and manage and manage_ilm for indices if you plan to use index lifecycle management.

POST _security/role/logstash_writer
{
  "cluster": ["manage_index_templates", "monitor", "manage_ilm"],
  "indices": [
    {
      "names": [ "logstash-*" ],
      "privileges": ["write","create","create_index","manage","manage_ilm"]
    }
  ]
}

- The cluster needs the manage_ilm privilege if index lifecycle management is enabled.

- If you use a custom Logstash index pattern, specify your custom pattern instead of the default logstash-* pattern.

- If index lifecycle management is enabled, the role requires the manage and manage_ilm privileges to load index lifecycle policies, create rollover aliases, and create and manage rollover indices.

Create a logstash_internal user and assign it the logstash_writer role. You can create users from the Management > Users UI in Kibana or through the user API:

POST _security/user/logstash_internal
{
  "password" : "x-pack-test-password",
  "roles" : [ "logstash_writer"],
  "full_name" : "Internal Logstash User"
}

Configure Logstash to authenticate as the logstash_internal user you just created. You configure credentials separately for each of the Elasticsearch plugins in your Logstash .conf file. For example:

input {
  elasticsearch {
    ...
    user => "logstash_internal"
    password => "x-pack-test-password"
  }
}
filter {
  elasticsearch {
    ...
    user => "logstash_internal"
    password => "x-pack-test-password"
  }
}
output {
  elasticsearch {
    ...
    user => "logstash_internal"
    password => "x-pack-test-password"
  }
}
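The privileges in the logstash_writer role depend on whether index lifecycle management is used and on the index pattern. As a rough illustration, the Python sketch below assembles the role body from those two choices. The function name build_logstash_writer_role and its parameters are hypothetical helpers, not part of any Elastic client library; the privilege names are the ones listed in the steps above.

```python
import json


def build_logstash_writer_role(index_pattern="logstash-*", use_ilm=False):
    """Return a role body for logstash_writer using the privileges listed above."""
    cluster = ["manage_index_templates", "monitor"]
    index_privileges = ["write", "create", "create_index"]
    if use_ilm:
        # Extra privileges needed only when index lifecycle management is enabled.
        cluster.append("manage_ilm")
        index_privileges += ["manage", "manage_ilm"]
    return {
        "cluster": cluster,
        "indices": [{"names": [index_pattern], "privileges": index_privileges}],
    }


if __name__ == "__main__":
    # Print a body that could be sent with POST _security/role/logstash_writer.
    print(json.dumps(build_logstash_writer_role(use_ilm=True), indent=2))
```

Calling the function with use_ilm=True and the default pattern reproduces the request body shown in the role example. Pass a different index_pattern if you use a custom Logstash index pattern.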

To access the indices Logstash creates, users need the read and view_index_metadata privileges:

Create a logstash_reader role that has the read and view_index_metadata privileges for the Logstash indices. You can create roles from the Management > Roles UI in Kibana or through the role API:

POST _security/role/logstash_reader
{
  "cluster": ["manage_logstash_pipelines"],
  "indices": [
    {
      "names": [ "logstash-*" ],
      "privileges": ["read","view_index_metadata"]
    }
  ]
}

Assign your Logstash users the logstash_reader role. If the Logstash user will be using centralized pipeline management, also assign the logstash_system role. You can create and manage users from the Management > Users UI in Kibana or through the user API:

POST _security/user/logstash_user
{
  "password" : "x-pack-test-password",
  "roles" : [ "logstash_reader", "logstash_system"],
  "full_name" : "Kibana User for Logstash"
}

- logstash_system is a built-in role that provides the necessary permissions to check the availability of the supported features of the Elasticsearch cluster.

If TLS encryption is enabled on an on-premises Elasticsearch cluster, you need to configure the ssl_enabled and ssl_certificate_authorities options in your Logstash .conf file:

output {
  elasticsearch {
    ...
    ssl_enabled => true
    ssl_certificate_authorities => '/path/to/cert.pem'
  }
}

- The path to the local .pem file that contains the Certificate Authority’s certificate.

Hosted Elasticsearch Service simplifies security. This configuration step is not necessary for hosted Elasticsearch Service on Elastic Cloud. Elastic Cloud Hosted is available on AWS, GCP, and Azure, and you can try it for free at https://cloud.elastic.co/registration.

The elasticsearch output supports PKI authentication. To use an X.509 client certificate for authentication, you configure the ssl_keystore_path and ssl_keystore_password options in your Logstash .conf file:

output {
  elasticsearch {
    ...
    ssl_keystore_path => "/path/to/keystore.jks"
    ssl_keystore_password => "realpassword"
    ssl_truststore_path => "/path/to/truststore.jks"
    ssl_truststore_password => "realpassword"
  }
}

- If you use a separate truststore, the truststore path and password are also required.

If you want to monitor your Logstash instance with Elastic Stack monitoring features, and store the monitoring data in a secured Elasticsearch cluster, you must configure Logstash with a username and password for a user with the appropriate permissions.

The security features come preconfigured with a logstash_system built-in user for this purpose. This user has the minimum permissions necessary for the monitoring function, and should not be used for any other purpose - it is specifically not intended for use within a Logstash pipeline.

By default, the logstash_system user does not have a password. The user will not be enabled until you set a password. See Setting built-in user passwords.

Then configure the user and password in the logstash.yml configuration file:

xpack.monitoring.elasticsearch.username: logstash_system

xpack.monitoring.elasticsearch.password: t0p.s3cr3t

If you initially installed an older version of X-Pack and then upgraded, the logstash_system user may have defaulted to disabled for security reasons. You can enable the user through the user API:

PUT _security/user/logstash_system/_enable

If you plan to use Logstash centralized pipeline management, you need to configure the username and password that Logstash uses for managing configurations.

You configure the user and password in the logstash.yml configuration file:

xpack.management.elasticsearch.username: logstash_admin_user

xpack.management.elasticsearch.password: t0p.s3cr3t

- The user you specify here must have the built-in logstash_admin role as well as the logstash_writer role that you created earlier.

Instead of using usernames and passwords, you can use API keys to grant access to Elasticsearch resources. You can set API keys to expire at a certain time, and you can explicitly invalidate them. Any user with the manage_api_key or manage_own_api_key cluster privilege can create API keys.

Tips for creating API keys:

API keys are tied to the cluster they are created in. If you are sending output to different clusters, be sure to create the correct kind of API key.

Logstash can send both collected data and monitoring information to Elasticsearch. If you are sending both to the same cluster, you can use the same API key. For different clusters, you need an API key per cluster.

A single cluster can share a key for ingestion and monitoring.

A production cluster and a monitoring cluster require separate keys.

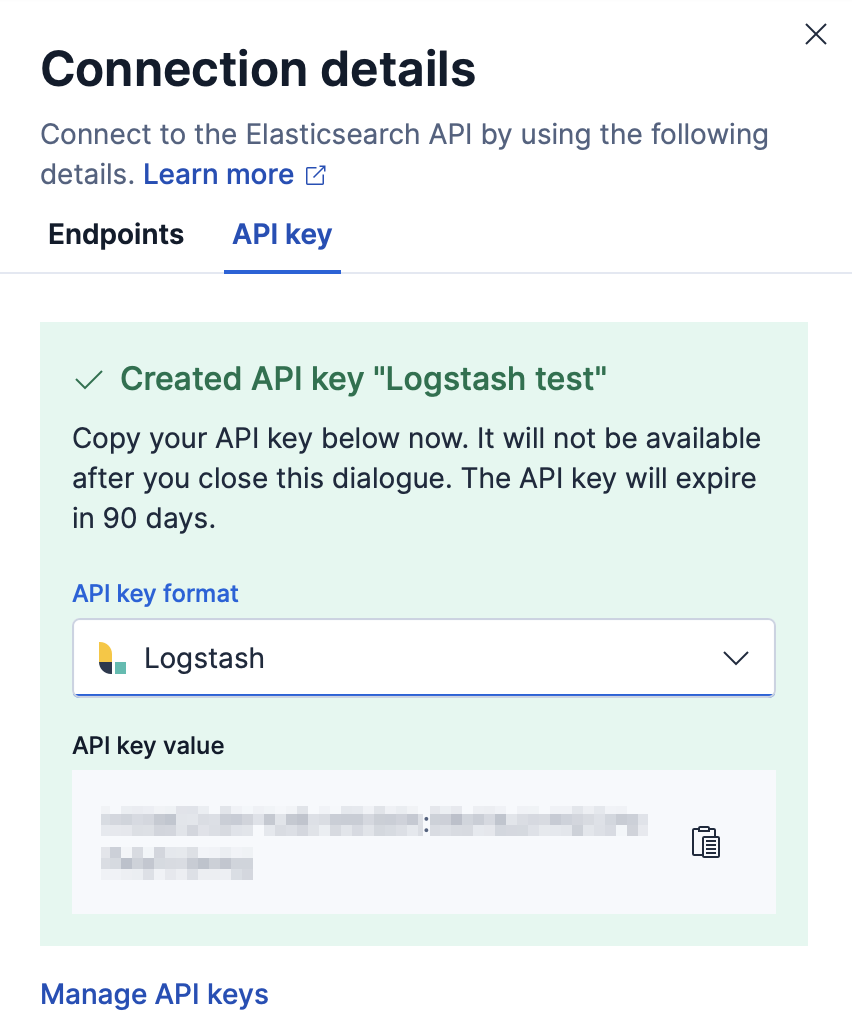

When you create an API key for Logstash, select Logstash from the API key format dropdown. This option formats the API key in the correct id:api_key format required by Logstash.

The UI for API keys may look different depending on the deployment type.

For security reasons, we recommend using a unique API key per Logstash instance. You can create as many API keys per user as necessary.

You can create API keys using either the Create API key API or the Kibana UI. This section walks you through creating an API key using the Create API key API. The privileges needed are the same for either approach.

Here is an example that shows how to create an API key for publishing to Elasticsearch using the Elasticsearch output plugin.

POST /_security/api_key
{
  "name": "logstash_host001",
  "role_descriptors": {
    "logstash_writer": {
      "cluster": ["monitor", "manage_ilm", "read_ilm"],
      "index": [
        {
          "names": ["logstash-*"],
          "privileges": ["view_index_metadata", "create_doc"]
        }
      ]
    }
  }
}

- Name of the API key

- Granted privileges
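Because a unique API key per Logstash instance is recommended, it can help to generate one request body per instance. The Python sketch below does that with the privileges from the request above. The function name build_api_key_request and the second instance name, logstash_host002, are hypothetical and used only for illustration.

```python
import json


def build_api_key_request(name, index_pattern="logstash-*"):
    """Build a Create API key request body with the privileges from the example above."""
    return {
        "name": name,
        "role_descriptors": {
            "logstash_writer": {
                "cluster": ["monitor", "manage_ilm", "read_ilm"],
                "index": [
                    {
                        "names": [index_pattern],
                        "privileges": ["view_index_metadata", "create_doc"],
                    }
                ],
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical instance names: one request body, and so one key, per instance.
    for name in ["logstash_host001", "logstash_host002"]:
        print("POST /_security/api_key")
        print(json.dumps(build_api_key_request(name), indent=2))
```

The sketch only prints the request bodies. Sending them still requires a user with the manage_api_key or manage_own_api_key cluster privilege.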

The return value should look similar to this:

{
  "id":"TiNAGG4BaaMdaH1tRfuU",
  "name":"logstash_host001",
  "api_key":"KnR6yE41RrSowb0kQ0HWoA"
}

- Unique id for this API key

- Generated API key

You’re in luck! The example we used in the Create an API key section creates an API key for publishing to Elasticsearch using the Elasticsearch output plugin.

Here’s an example using the API key in your Elasticsearch output plugin configuration.

output {
  elasticsearch {
    api_key => "TiNAGG4BaaMdaH1tRfuU:KnR6yE41RrSowb0kQ0HWoA"
  }
}

- The format of the value is id:api_key, where id and api_key are the values returned by the Create API key API
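To make the id:api_key format concrete, here is a small Python sketch that builds the value from a Create API key response. The function name format_logstash_api_key is a hypothetical helper, and the sample response is the example return value shown earlier.

```python
def format_logstash_api_key(response):
    """Join the id and api_key fields of a Create API key response as id:api_key."""
    return f"{response['id']}:{response['api_key']}"


if __name__ == "__main__":
    # Sample response copied from the example return value shown earlier.
    response = {
        "id": "TiNAGG4BaaMdaH1tRfuU",
        "name": "logstash_host001",
        "api_key": "KnR6yE41RrSowb0kQ0HWoA",
    }
    print(format_logstash_api_key(response))
```

The resulting string is the value to place in the api_key option of the plugin configuration.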

Creating an API key to use for reading data from Elasticsearch is similar to creating an API key for publishing described earlier. You can use the example in the Create an API key section, granting the appropriate privileges.

Here’s an example using the API key in your Elasticsearch input plugin configuration.

input {
  elasticsearch {
    api_key => "TiNAGG4BaaMdaH1tRfuU:KnR6yE41RrSowb0kQ0HWoA"
  }
}

- The format of the value is id:api_key, where id and api_key are the values returned by the Create API key API

Creating an API key to use for processing data from Elasticsearch is similar to creating an API key for publishing described earlier. You can use the example in the Create an API key section, granting the appropriate privileges.

Here’s an example using the API key in your Elasticsearch filter plugin configuration.

filter {
  elasticsearch {
    api_key => "TiNAGG4BaaMdaH1tRfuU:KnR6yE41RrSowb0kQ0HWoA"
  }
}

- The format of the value is id:api_key, where id and api_key are the values returned by the Create API key API

To create an API key to use for sending monitoring data to Elasticsearch, use the Create API key API. For example:

POST /_security/api_key
{
  "name": "logstash_host001",
  "role_descriptors": {
    "logstash_monitoring": {
      "cluster": ["monitor"],
      "index": [
        {
          "names": [".monitoring-ls-*"],
          "privileges": ["create_index", "create"]
        }
      ]
    }
  }
}

- Name of the API key

- Granted privileges

The return value should look similar to this:

{
  "id":"TiNAGG4BaaMdaH1tRfuU",
  "name":"logstash_host001",
  "api_key":"KnR6yE41RrSowb0kQ0HWoA"
}

- Unique id for this API key

- Generated API key

Now you can use this API key in your logstash.yml configuration file:

xpack.monitoring.elasticsearch.api_key: TiNAGG4BaaMdaH1tRfuU:KnR6yE41RrSowb0kQ0HWoA

- The format of the value is id:api_key, where id and api_key are the values returned by the Create API key API

To create an API key to use for central management, use the Create API key API. For example:

POST /_security/api_key
{
  "name": "logstash_host001",
  "role_descriptors": {
    "logstash_monitoring": {
      "cluster": ["monitor", "manage_logstash_pipelines"]
    }
  }
}

- Name of the API key

- Granted privileges

The return value should look similar to this:

{
  "id":"TiNAGG4BaaMdaH1tRfuU",
  "name":"logstash_host001",
  "api_key":"KnR6yE41RrSowb0kQ0HWoA"
}

- Unique id for this API key

- Generated API key

Now you can use this API key in your logstash.yml configuration file:

xpack.management.elasticsearch.api_key: TiNAGG4BaaMdaH1tRfuU:KnR6yE41RrSowb0kQ0HWoA

- The format of the value is id:api_key, where id and api_key are the values returned by the Create API key API

See the Elasticsearch API key documentation for more information.

See API Keys for info on managing API keys through Kibana.