What is log analytics?

Log analytics definition

Log analytics is the process of searching, investigating, and visualizing data generated by IT systems, which is stored as time-sequenced logs. Log analytics takes log monitoring one step further, allowing observability teams to discover patterns and anomalies across an organization. This can help them resolve application and system issues quickly and provide operational insights to get ahead of future problems. Log analysis can also be applied to historical data in archived logs for additional insights.

Why is log analytics important?

Log data is growing exponentially. Between human-generated and machine-generated data, logging tools need to scale to manage the influx. Traditional analytics tools struggle with the variety and volume of logging data in today’s complex systems. Without a centralized, robust logging platform, challenges (and costs) can increase. That matters because data is the key to understanding how your business processes run now, and it can help you plan for the future.

What is the history of log analytics?

Since the inception of computer-generated records, organizations have been trying to review logs at scale. But logs are generated across your entire IT ecosystem. Many do not contain all the information required, and they are usually not in a consistent format. Modern log analysis tools centralize this information and translate it for easier consumption.

Where is log analytics headed?

Because log data continues to grow, it is critical to think about how to store and access this information in the future. Being able to handle the volume of data makes it easier to use logs for other purposes, such as security, fraud detection, and anomaly detection. Log analytics use cases are continually expanding and now include analyzing how customers navigate websites and where people get frustrated when using applications.

How to perform log analysis

Performing log analysis comes down to a few key steps.

Collect and centralize data

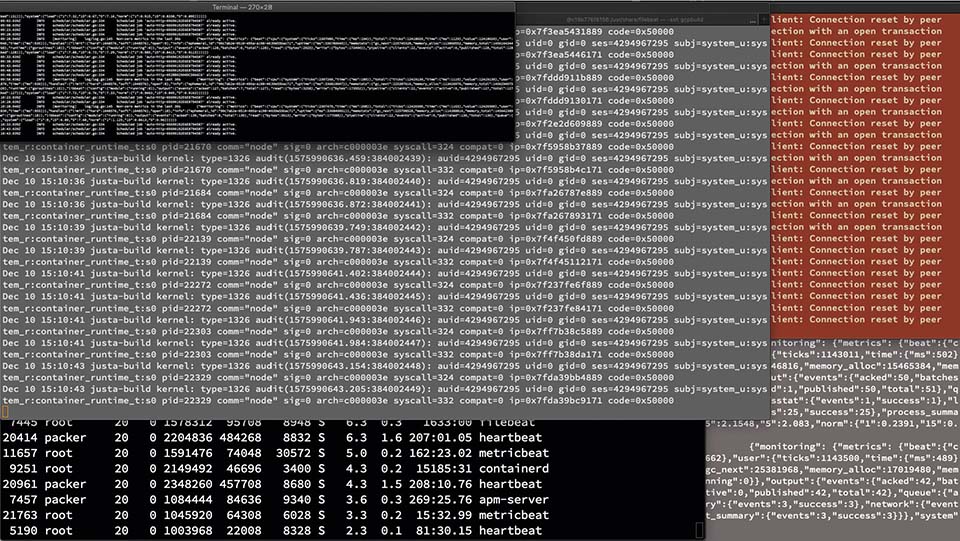

To start, gather all logs in a central location. Having everything together makes analysis easier. Once all logs are centralized, it is important to parse and index them. Logs come from separate systems, so naming conventions, formats, and schemas can differ. Standardizing terminology at the beginning can save hours of confusion (or errors) later in the log analysis process. Learn more about log aggregation.
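
As a rough illustration of the parsing and standardizing step, the sketch below maps two differently shaped log lines onto one shared schema. Both input formats, the field names (`time`, `severity`, `text`), and the `normalize` function are hypothetical and chosen only for this example; real sources will need their own parsers.

```python
import json
import re
from datetime import datetime, timezone

# Hypothetical plain-text format: "2024-05-01 12:00:03 ERROR payment timeout"
TEXT_LINE = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) (?P<level>[A-Z]+) (?P<msg>.*)$"
)


def normalize(raw: str, source: str) -> dict:
    """Map a raw log line onto one schema: timestamp, level, message, source."""
    raw = raw.strip()
    if raw.startswith("{"):
        # Hypothetical JSON format that names its fields differently
        # and stores the time as Unix epoch seconds.
        record = json.loads(raw)
        ts = datetime.fromtimestamp(record["time"], tz=timezone.utc)
        level = record["severity"].upper()
        message = record["text"]
    else:
        match = TEXT_LINE.match(raw)
        if match is None:
            raise ValueError(f"unrecognized log line: {raw!r}")
        # Assumes the plain-text timestamps are already in UTC.
        ts = datetime.strptime(match["ts"], "%Y-%m-%d %H:%M:%S").replace(
            tzinfo=timezone.utc
        )
        level = match["level"]
        message = match["msg"]
    return {
        "timestamp": ts.isoformat(),
        "level": level,
        "message": message,
        "source": source,
    }
```

Once every record carries the same field names and timestamp format, later queries do not need to know which system produced a line.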

Analyze data

Now it is time to search the data and run queries to recognize patterns. Depending on the software, this step could involve a visualization tool. Reporting dashboards can help aggregate data for non-technical users and people outside an organization. Plus, it is easier to see trends and anomalies by reviewing graphs than by reading detailed logs.
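
A query at this stage is often a simple aggregation. The sketch below counts error records per one-minute bucket, which is the kind of series a dashboard would plot. It assumes records shaped as dictionaries with an ISO 8601 `timestamp` and a `level` field; that record shape and the `errors_per_minute` name are assumptions for illustration, not part of any particular tool.

```python
from collections import Counter
from datetime import datetime


def errors_per_minute(records):
    """Count ERROR records in each one-minute bucket, ordered by time."""
    counts = Counter()
    for record in records:
        if record["level"] == "ERROR":
            ts = datetime.fromisoformat(record["timestamp"])
            # Truncate to the start of the minute to form the bucket key.
            bucket = ts.replace(second=0, microsecond=0).isoformat()
            counts[bucket] += 1
    return dict(sorted(counts.items()))


sample = [
    {"timestamp": "2024-05-01T12:00:03", "level": "ERROR"},
    {"timestamp": "2024-05-01T12:00:41", "level": "ERROR"},
    {"timestamp": "2024-05-01T12:00:50", "level": "INFO"},
    {"timestamp": "2024-05-01T12:01:07", "level": "ERROR"},
]
print(errors_per_minute(sample))
```

A sudden jump in one bucket is much easier to spot in this form than in the raw lines.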

Set up monitoring and alerts

Log analysis is critical when you are trying to resolve issues. But organizations can see the biggest ROI by setting up real-time monitoring and alerting. For example, correlation analysis finds messages from different sources that all stem from a single, specific event. Then your system can determine which events need alerts based on patterns identified in logs. Teams can be notified in real time when conditions change. This speeds up recovery by providing instant information about what happened, where, when, why, and how it impacted performance.
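
One simplified way to picture correlation and alerting is sketched below: records from different sources are grouped by a shared identifier, and a group is flagged once its error count reaches a threshold. The `request_id` field, the threshold of two errors, and both function names are hypothetical; production systems typically use richer rules and run them continuously.

```python
from collections import defaultdict


def correlate(records, key="request_id"):
    """Group records from different sources that share the same key."""
    events = defaultdict(list)
    for record in records:
        if key in record:
            events[record[key]].append(record)
    return dict(events)


def find_alerts(events, min_errors=2):
    """Return a summary for each event whose ERROR count reaches the threshold."""
    flagged = []
    for event_id, records in events.items():
        errors = [r for r in records if r["level"] == "ERROR"]
        if len(errors) >= min_errors:
            sources = sorted({r["source"] for r in errors})
            flagged.append(
                {"event": event_id, "errors": len(errors), "sources": sources}
            )
    return flagged


logs = [
    {"request_id": "r1", "source": "gateway", "level": "ERROR"},
    {"request_id": "r1", "source": "payments", "level": "ERROR"},
    {"request_id": "r1", "source": "db", "level": "INFO"},
    {"request_id": "r2", "source": "gateway", "level": "ERROR"},
]
print(find_alerts(correlate(logs)))
```

Here only `r1` is flagged, because errors from two sources trace back to the same request.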

Who uses log analytics?

SREs, IT operations teams, DevOps engineers, and IT enterprise architects are the primary users of log analytics tools. Some organizations must also review logs for compliance audits, which can expand the list of users and stakeholders.

Log analytics vs. APM

Log analytics and Application Performance Monitoring (APM) are closely linked. Where log analytics interprets data from across an organization to resolve issues and make informed business decisions, APM monitors individual applications and their behavior in even more detail. The goal of APM is to ensure positive customer experiences and transactions.

APM tracks performance metrics — like response time, latency, and errors — to help pinpoint issues that could impact users. Once an anomaly or issue is detected by APM, the associated logs (and metrics) are correlated and surfaced for further troubleshooting and root cause analysis. By monitoring users, organizations can understand how information is accessed and make improvements that can reduce customer churn.

What are the benefits of log analytics?

A lot of answers to operational questions can be found in logs. Teams can leverage logs to:

- Improve customer experiences (and reduce churn): Review how users engage with an application to make better decisions that keep them engaged and make navigation easier.

- Decrease resource usage and latency: Identify where in your organization resources are not optimized and resolve performance issues.

- Identify customer behavior: What are your customers interested in? Which customers are the most active, and where are they going? Logs provide an opportunity to gather information so you can personalize your sales and marketing material.

- Spot suspicious activity: Bad actors leave a trail across your organization. Analyzing behavior can help stop them before they gain access to valuable data.

- Comply with audits: For companies that need to adhere to standards and regulations, audits are a regular occurrence. Using log analytics can help ensure you pass them.

What are the challenges of log analytics?

Key challenges for log analytics include:

- Scale: As logs grow, challenges for teams increase. Many log analytics tools struggle to scale when it comes to reviewing enterprise logs, and organizations are increasingly looking at AI for IT Operations (AIOps) to manage that volume of data.

- Centralization: Having a single pane of glass to view what is happening in your organization is one of the biggest benefits of log analytics. But log data is varied and often siloed. Outdated architectures may not be able to integrate with modern tools. Teams need to standardize logs so the information can be easily analyzed.

- Cost: Not all log data needs to be readily available, but when teams need it, they need it now. Cost-effective storage with data tiers reduces the overhead.

- Diverse data: Given the complexity of today’s distributed applications across multiple services and systems, log data is equally diverse. From structured to unstructured logs across infrastructure, applications, and services, the need to normalize and understand your log data for efficient querying is critical.

What are the use cases for log analytics?

From real-time application and performance monitoring to root cause analysis and SIEM, log analytics can help revolutionize your business. But log analysis can help with much more. Organizations can use log data to ensure compliance with security policies, examine online user behavior, and make better business decisions overall.

Where do I store my log data?

Where you store your log data depends on how long you want immediate access to the information and on your data’s volume. For long-term storage, Amazon Simple Storage Service (S3), Amazon S3 Glacier, or other archival storage can work. Another approach is storing logs directly in a distributed storage system, like Elasticsearch, that can deliver different storage tiers. When deciding on a tool, it is important to examine how easy it is to rehydrate data before you can analyze it. With some tools, teams need to wait up to 24 hours before data is searchable.
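
The trade-off between immediate access and cost can be expressed as a simple age-based policy. The sketch below is only an illustration: the tier names and the 7-day and 30-day cutoffs are hypothetical and do not reflect the defaults of any specific product.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention policy: (tier name, maximum age kept in that tier).
TIERS = [
    ("hot", timedelta(days=7)),    # fast storage, searchable immediately
    ("warm", timedelta(days=30)),  # cheaper storage, slower queries
]
ARCHIVE = "archive"  # lowest cost, may need rehydration before analysis


def storage_tier(log_time: datetime, now: datetime) -> str:
    """Pick a storage tier for a log record based on its age."""
    age = now - log_time
    for name, max_age in TIERS:
        if age <= max_age:
            return name
    return ARCHIVE


now = datetime(2024, 5, 31, tzinfo=timezone.utc)
print(storage_tier(now - timedelta(days=20), now))
```

In practice the cutoffs would follow from how often older logs are queried and how long a team can wait for archived data to become searchable.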

What your log analytics strategy needs

To get the most out of logs, you need a log analytics tool that can centralize logs and automatically process them for specific events. Then teams can perform a deeper analysis to extract meaningful insights and make predictions based on patterns. The tool will also need both the scale to handle the sheer volume of logs and the speed to deliver the answers you need in milliseconds (instead of minutes).

Elastic’s log analytics solution

As one of the most popular and most deployed log management and search tools, Elastic Observability — built on Elasticsearch — provides powerful and flexible log management and analytics. From on-premises to Elastic Cloud, for observability or security initiatives, Elastic can easily scale to handle petabytes of your log data for troubleshooting and insights.

With Elastic you get:

- Easy deployment that can work for a range of use cases

- Scale and reliability (up to petabytes of data)

- Integrations into tools your teams already use — plus advanced machine learning capabilities built into the platform

- Lower costs with a simple data tier strategy so you only pay for what you consume

- Centralized log management

Whether you want to use log analytics for observability or security, Elastic allows you to ingest once and leverage everywhere.