What is log monitoring?

Log monitoring definition

Log monitoring is the process of collecting, analyzing, and acting on log data from various sources. These sources can include applications and infrastructure (compute, network, and storage). Developers and operations teams monitor logs to find anomalies and issues within a system so that they can troubleshoot those issues as efficiently as possible.

To guarantee performance, availability, and security, logs need to be continuously observed by developers and engineering teams. This process, commonly known as log monitoring, happens in real time as logs are recorded.

Alongside metrics and traces, log monitoring is an important part of observability. Log monitoring, as well as robust log management software, is critical for businesses because it allows teams to uncover, address, and solve issues before they affect customers or other types of users.

Wait, what are logs?

Log data is information that is generated by various systems and applications as they run. This data can include system events, error messages, performance metrics, and user activity. For example, a log can provide a record of a fault and the time that fault occurred, which would then allow you to find errors within your code to troubleshoot issues. Each log is time-stamped and shows an event that happened at a certain point in time.
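As a rough illustration of what a time-stamped log entry looks like in practice, the sketch below parses a single line into its timestamp, severity level, and message. The line layout (`<ISO timestamp> <LEVEL> <message>`) and the `parse_line` helper are assumptions made for this example; real systems use many different layouts.

```python
import re
from datetime import datetime

# Hypothetical layout assumed for this sketch: "<ISO timestamp> <LEVEL> <message>"
LINE_PATTERN = re.compile(
    r"^(?P<timestamp>\S+)\s+(?P<level>[A-Z]+)\s+(?P<message>.*)$"
)


def parse_line(line):
    """Return a dict with timestamp, level, and message, or None if the line does not fit."""
    match = LINE_PATTERN.match(line.strip())
    if match is None:
        return None
    try:
        timestamp = datetime.fromisoformat(match["timestamp"])
    except ValueError:
        # The first token was not a valid ISO 8601 timestamp
        return None
    return {
        "timestamp": timestamp,
        "level": match["level"],
        "message": match["message"],
    }


sample = "2024-05-01T12:30:45 ERROR Connection refused by upstream service"
record = parse_line(sample)
print(record["timestamp"], record["level"], record["message"])
```

Once a line is split into fields like these, it becomes possible to filter by level or search for the moment a fault occurred.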

Logs can show events happening in the operating system – these include such events as connection attempts, errors, and configuration changes. These types of logs are known as system logs.

In contrast, application logs show information about events happening within the application software stack, especially in dedicated proxies, firewalls, and other software applications. These types of logs record software changes, CRUD operations, application authentication, and more.

What is the history of log monitoring?

Log monitoring has a long history. Today, most developers rely on observability tools for their log monitoring needs. In the past, however, logs were reviewed manually. In the earliest days of Unix, developers used text and search tools to review their log files.

Manually reviewing logs is no longer a viable option. The volume of data is simply too large to be understood by humans. Teams need to review logs quickly to resolve issues before they slow down their business. Thankfully, as more and more logs get generated, the industry has also made major strides in its ability to automate parts of log monitoring.

Today’s organizations have a variety of robust tools to help them effectively conduct log monitoring. This is essential, as modern systems have a web of interdependent applications that all need to be tracked. Log monitoring tools now offer consolidated data storage and automation, which is crucial to organizations with distributed cloud apps.

Why is log monitoring important?

Reviewing and monitoring event logs in your system and applications may not seem particularly impactful, especially when everything is running smoothly. But the ability to monitor log files effectively has massive ramifications for organizations.

When issues arise, teams need to be able to dive in and review logs so that they can pinpoint specific events and instances. Once anomalies are identified, teams can then troubleshoot issues so that systems are always secure and end users always have a seamless experience.

Without robust log monitoring, teams are left in the dark, unable to monitor performance and availability of systems and applications or offer a stellar customer experience. This is especially complex and risky due to the distributed nature of cloud applications. With so much data flowing, organizations need robust log monitoring to stay on top of their business.

What are the benefits of log monitoring?

It's clear that log monitoring is important, but why is it particularly important at the enterprise scale? Enterprises tend to process and generate huge amounts of log data. As volume increases, log monitoring needs to be robust enough to track the performance and availability of systems and applications so that the customer experience stays positive.

Here are some of the key benefits of enterprise log monitoring:

Centralize data to give visibility across operations

Today's log monitoring tools offer a centralized experience. Developers can go to one place to monitor all system and application logs. This centralized approach gives immediate visibility into system operation without developers having to dig through different systems. Because everything is centralized, responses can be quicker and more efficient, saving time and resources.

Allow for faster responses to incidents

When logs are automatically monitored and available to view within a centralized solution, it's much easier to review logs, detect issues, and respond quickly to any incident. A fast response is important from an end-user perspective, since no one wants an incident to affect users. But it's also important from a security perspective. Any breach or vulnerability needs to be remediated immediately to reduce risk.

Automate to save time

With so much data being generated, enterprises are inundated with an ever-increasing number of logs that become extraordinarily difficult to parse. Log monitoring tools offer the ability to not only monitor logs but also flag or correct issues automatically. These automated features, which are always improving, save substantial amounts of time for busy teams.

Use the same logs for observability and security operations

When log monitoring is centralized, it can be used for more than one purpose. Modern enterprises can use the same logs for observability and security operations, streamlining workflows and reducing time spent on separate tooling.

What are the challenges of log monitoring?

Even though organizations have been monitoring logs for decades, many organizations have been doing it in a piecemeal fashion, relying on traditional approaches and legacy platforms. However, as the volume of data increases, enterprises that want to remain competitive have to rise to the challenge and embrace new tools for log monitoring.

Here are some of the top challenges of conducting log monitoring:

More complexity than ever before

Technology has grown increasingly complex, with more data being created, processed, and stored than ever before. The cloud has introduced more systems built from virtual and ephemeral resources, each generating its own logs. All of this means more logs and more data, making it harder to monitor logs. Organizations need better, more robust tools to sift through it all.

Increased volume and different formats for logging

The volume of logs has grown dramatically, and logs arrive in many different formats. A variety of systems generate logs, which come in as structured or unstructured data without a common format. This means observability experts have to decipher what each log means, which takes substantial resources. Organizations have to create a standardized format in order to effectively monitor their logs.
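To make the idea of a standardized format more concrete, here is a minimal sketch that maps one structured (JSON) line and one unstructured (plain-text) line onto the same set of fields. The input field names (`time`, `severity`, `msg`), the plain-text layout, and the `normalize` helper are all assumptions for illustration, not a format prescribed by any particular tool.

```python
import json
import re

# Hypothetical plain-text layout assumed here: "<timestamp> [<LEVEL>] <message>"
TEXT_PATTERN = re.compile(
    r"^(?P<timestamp>\S+)\s+\[(?P<level>\w+)\]\s+(?P<message>.*)$"
)


def normalize(raw, source):
    """Map a raw log line onto a common schema: timestamp, level, message, source."""
    raw = raw.strip()

    # Structured case: a JSON object (field names below are assumptions)
    if raw.startswith("{"):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            data = None
        if isinstance(data, dict):
            return {
                "timestamp": data.get("time"),
                "level": str(data.get("severity", "UNKNOWN")).upper(),
                "message": data.get("msg", ""),
                "source": source,
            }

    # Unstructured case: plain text matching the assumed layout
    match = TEXT_PATTERN.match(raw)
    if match:
        return {
            "timestamp": match["timestamp"],
            "level": match["level"].upper(),
            "message": match["message"],
            "source": source,
        }

    # Fallback: keep the raw line rather than dropping it
    return {"timestamp": None, "level": "UNKNOWN", "message": raw, "source": source}


lines = [
    ('{"time": "2024-05-01T12:00:00Z", "severity": "warn", "msg": "disk at 91%"}', "api"),
    ("2024-05-01T12:00:01Z [error] upstream timed out", "proxy"),
]
for raw, source in lines:
    print(normalize(raw, source))
```

Keeping unrecognized lines with an `UNKNOWN` level, rather than discarding them, is a deliberate choice in this sketch so that nothing is silently lost while formats are still being mapped.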

Legacy data silos

Many enterprises continue to keep log data in separate, siloed systems as opposed to a centralized one. Not only does this approach incur high costs because of the volume created, but it also causes extra work for developers and operations teams who could be more efficient when data is centralized.

Legacy manual and piecemeal approaches

Even though major strides have been made in log monitoring platforms, many approaches are still too manual. These manual approaches are time-consuming, vulnerable to human error, and often outdated, which puts the organization at risk. Sometimes these approaches are piecemeal as well, which means issues can go undetected.

Log monitoring use cases

Logs are generated by many different systems and devices. Within an enterprise, log monitoring is most commonly used for the following:

Network monitoring

Network devices like firewalls, switches, routers, and load balancers generate logs that then need to be monitored.

Infrastructure monitoring

Log monitoring for infrastructure tracks on-premises and cloud solutions such as virtual machines, platforms like AWS or Azure, container platforms such as Kubernetes, third-party data sources, and open-source software.

Application monitoring

Log monitoring for applications typically encompasses two types of applications: (1) what the end user ultimately experiences and (2) the services within distributed apps. Application monitoring is important because application performance can affect the customer experience. For example, if services are down, customers might leave the site or app. Databases are considered applications, as well.

Compliance

Businesses are legally required to keep certain types of data. In order to remain compliant, they need to store log data for a certain amount of time. That way they have a historical record to review should any incidents arise.

What to consider when choosing a log monitoring solution

Enterprises that conduct log monitoring need a platform that makes teams more productive and efficient. Here are some things to consider when choosing a log monitoring solution:

Centralized data

With a high volume of logs in different types and coming from a variety of sources, you want to choose a platform that offers centralized data. A centralized view will make it much easier and more efficient to parse log data.

Actionable alerting

Finding error conditions and matching patterns is just the first step. The ability to alert and act on anomalies in logs effectively is crucial to log monitoring. An effective platform needs to filter and prioritize log monitoring alerts to identify what needs to be addressed now and what IT operations teams should investigate. Applying AIOps and machine learning to your monitoring is one way to minimize alert fatigue for the teams receiving those alerts.
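One simple way to keep alerts actionable is to fire only when errors cluster in time, rather than on every individual error. The sketch below uses a sliding time window for that purpose. The `ErrorRateAlert` class, the threshold of three errors, and the 60-second window are hypothetical choices for this example, and the code assumes events arrive in timestamp order. It is a plain threshold rule, not the machine learning approach mentioned above.

```python
from collections import deque
from datetime import datetime, timedelta


class ErrorRateAlert:
    """Signal when at least `threshold` ERROR events fall within `window`."""

    def __init__(self, threshold, window):
        self.threshold = threshold
        self.window = window
        self._error_times = deque()

    def observe(self, timestamp, level):
        """Record one log event and return True if the alert condition is met."""
        if level == "ERROR":
            self._error_times.append(timestamp)
        # Drop errors that have aged out of the window
        cutoff = timestamp - self.window
        while self._error_times and self._error_times[0] <= cutoff:
            self._error_times.popleft()
        return len(self._error_times) >= self.threshold


alert = ErrorRateAlert(threshold=3, window=timedelta(seconds=60))
start = datetime(2024, 5, 1, 12, 0, 0)
# (seconds after start, level) pairs standing in for parsed log events
events = [(0, "ERROR"), (10, "INFO"), (20, "ERROR"), (30, "ERROR"), (120, "INFO")]
for offset, level in events:
    fired = alert.observe(start + timedelta(seconds=offset), level)
    print(offset, level, fired)
```

In this run the condition is met only at the third error (30 seconds in) and clears once the errors age out of the window, so a single stray error never pages anyone.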

Cost-friendly or ROI-proven

You don’t want to choose a tool just because it’s the least expensive, but you do want a platform that is cost-friendly or ROI-proven. It’s worthwhile to conduct a cost analysis to understand the tradeoffs of the log monitoring solutions you’re considering.

Total observability solution — not just log monitoring

Log monitoring is only one component of observability. To get the most value, it’s best to look for a platform that offers a full-suite observability solution. Log monitoring only provides a part of the story.

FAQ about log monitoring

Learn more about log monitoring.

What’s the difference between log monitoring and log analytics?

Log monitoring and log analytics are closely related and work together to make sure systems are running smoothly. Log monitoring focuses on tracking logs as they are recorded and flagging issues, while log analytics delivers more context so that teams can understand the best actions to take.

What are some log monitoring best practices?

Organizations conducting log monitoring at scale need an enterprise system that centralizes log management. These systems need to manage the massive amount of logs created by complex systems and have a tiered approach to logs that helps lower ongoing costs.
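A tiered approach usually means keeping recent logs on fast, more expensive storage and moving older logs to cheaper storage. The sketch below shows one way such a rule might be expressed. The tier names, the age cutoffs, and the `choose_tier` helper are assumptions for illustration; actual cutoffs depend on each organization's cost and retention requirements.

```python
from datetime import datetime, timedelta

# Hypothetical tiers and age cutoffs, ordered from newest to oldest
TIERS = [
    ("hot", timedelta(days=7)),     # recent logs, searched most often
    ("warm", timedelta(days=30)),   # older logs, searched occasionally
    ("cold", timedelta(days=365)),  # kept mainly for historical review
]


def choose_tier(log_time, now):
    """Return the storage tier for a log entry based on its age."""
    age = now - log_time
    for name, max_age in TIERS:
        if age <= max_age:
            return name
    # Older than every cutoff: past the retention period assumed in this sketch
    return "expired"


now = datetime(2024, 6, 1)
for days in (1, 10, 100, 400):
    print(days, choose_tier(now - timedelta(days=days), now))
```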

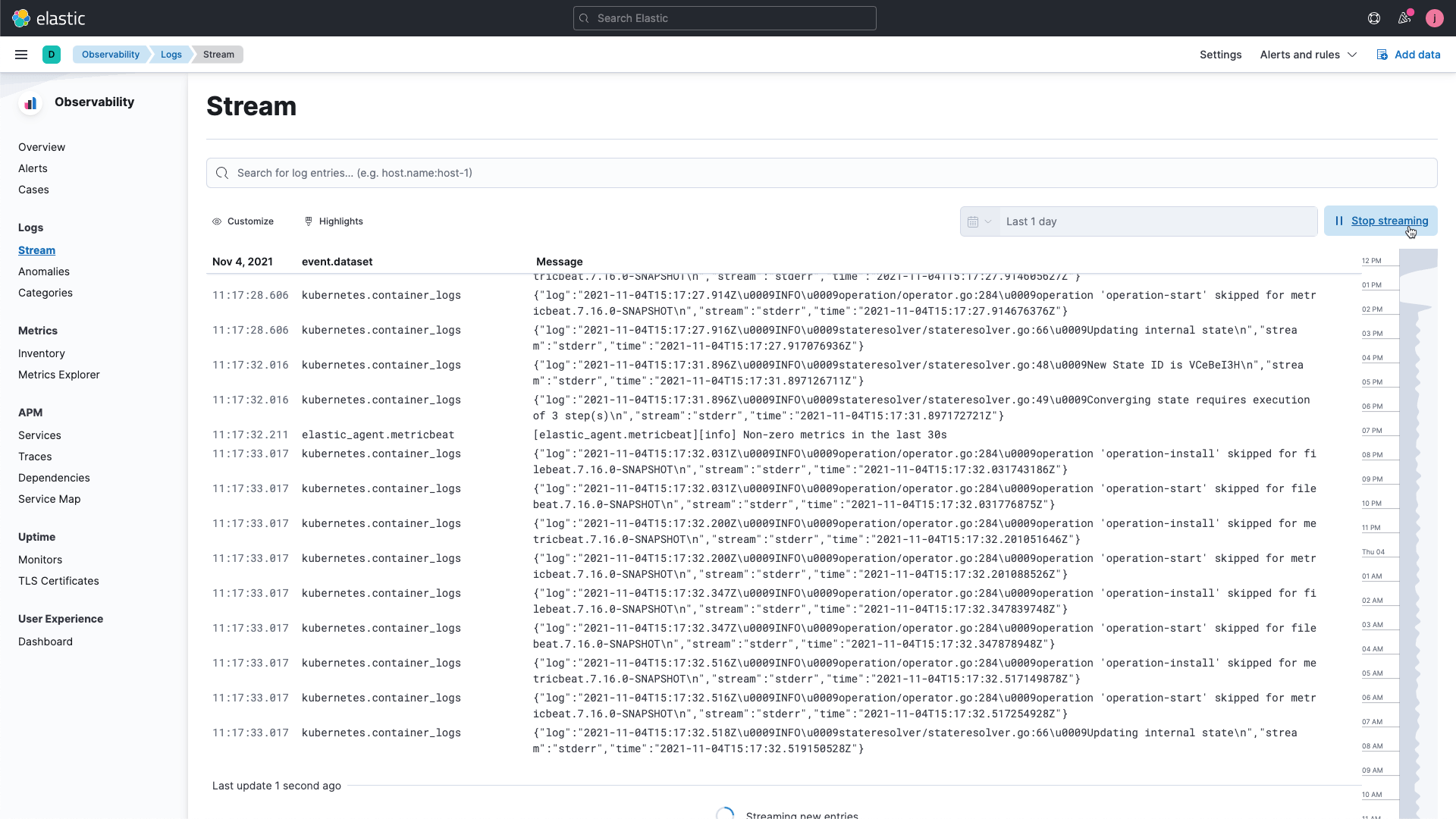

Log monitoring with Elastic

As the recognized leader in log monitoring, Elastic Observability (powered by the Elasticsearch engine) provides powerful and flexible log monitoring features. From on-premises to Elastic Cloud, for observability or security initiatives, Elastic can easily scale to handle petabytes of log data so teams can troubleshoot quickly. With Elastic you get:

- Easy deployment that works for your use cases

- Scale and reliability, no matter your data size

- Integrations into tools teams love — plus built-in machine learning capabilities

- Lower costs with a simple data tier strategy (you only pay for what you consume)

- Centralized log management

Beyond monitoring and alerting, Elastic’s flexible storage capabilities allow you to keep historical log data for future analysis, investigation, and discovery. Whether you are monitoring logs for observability or security, Elastic allows you to ingest once and leverage everywhere.