On-demand webinar

Scaling Log Aggregation at Fitbit

Hosted by:

Breandan Dezendorf

Overview

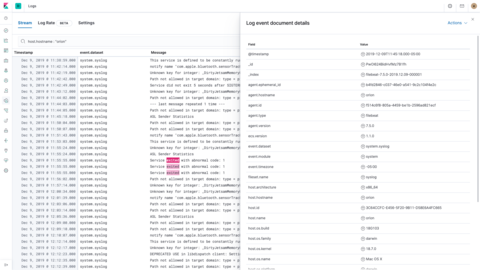

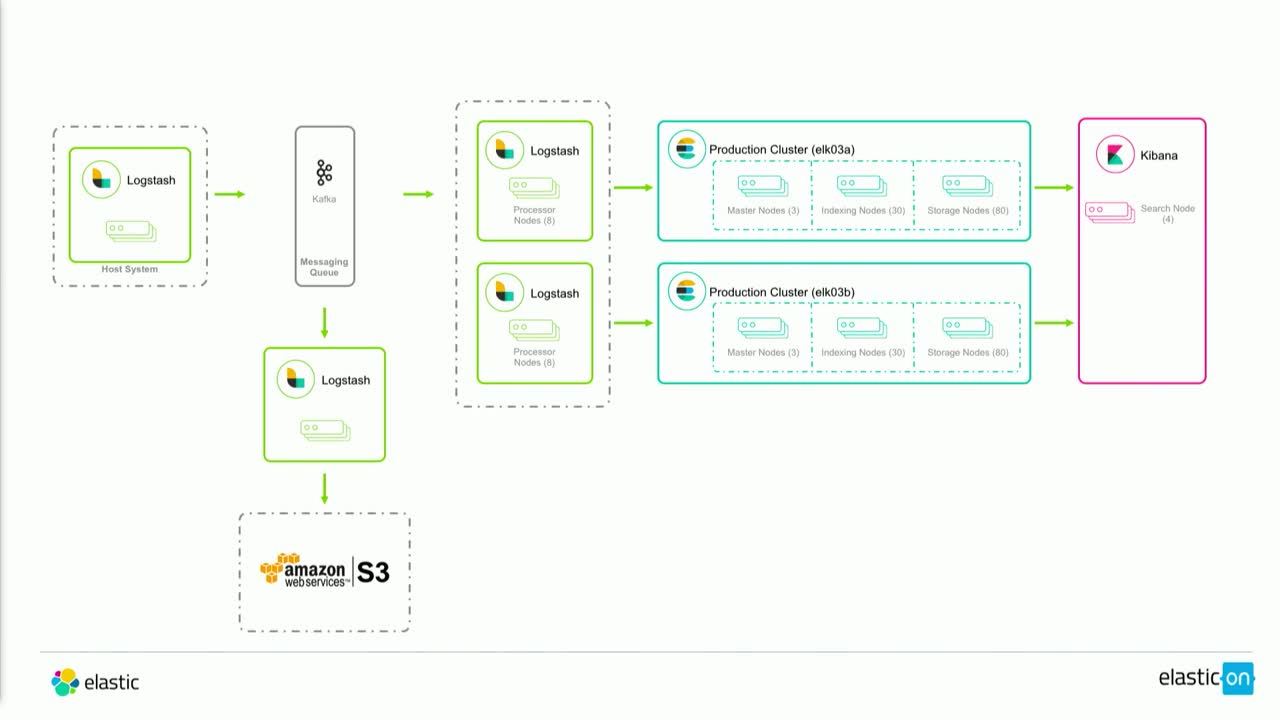

Over the course of its log aggregation journey, Fitbit has scaled from 35,000 to 225,000 logs per second, extended data retention from 5 to 30 days, and upgraded from Elasticsearch 1.5.x to 5.5.x. This talk covers the challenges of scaling a log aggregation pipeline that processes approximately 19 billion messages (about 35 TB of data) per day, and of using every part of the Elastic Stack, from log queuing to field mappings.
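As a rough consistency check on these figures, the sketch below converts the per-second rate into a daily message count and an average message size. It assumes the 225,000 logs/s rate holds for a full day and that "35 TB" means decimal terabytes (10^12 bytes) of raw data per day. The 30-day total ignores compression and replica shards, so it is a back-of-envelope estimate, not a statement about Fitbit's actual disk usage. The function names are hypothetical and chosen only for illustration.

```python
# Back-of-envelope check of the throughput figures quoted in the overview.
# Assumptions (not from the talk): the 225,000 logs/s rate is sustained for a
# full day, and "35 TB" means decimal terabytes (10**12 bytes) of raw data/day.

SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def messages_per_day(logs_per_second: int) -> int:
    """Daily message count at a constant ingest rate (hypothetical helper)."""
    return logs_per_second * SECONDS_PER_DAY


def avg_message_bytes(bytes_per_day: float, msgs_per_day: int) -> float:
    """Mean size of a single log message in bytes (hypothetical helper)."""
    return bytes_per_day / msgs_per_day


daily_msgs = messages_per_day(225_000)
daily_bytes = 35 * 10**12
avg_size = avg_message_bytes(daily_bytes, daily_msgs)
retained_bytes = daily_bytes * 30  # 30-day retention, before compression/replicas

print(f"{daily_msgs:,} messages/day")               # 19,440,000,000 messages/day
print(f"{avg_size:,.0f} bytes/message on average")  # 1,800 bytes/message on average
print(f"{retained_bytes / 10**15:.2f} PB raw over 30 days")  # 1.05 PB raw over 30 days
```

At a sustained 225,000 logs per second, the pipeline handles roughly 19.4 billion messages per day, in line with the "approximately 19 billion" quoted for the talk, and each message averages on the order of 1.8 KB.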

Learn how Fitbit scaled its deployment, and find out how the team uses extensive operational analysis to validate failure scenarios, strengthen disaster recovery, and archive data for each part of the Elastic Stack. See how Fitbit meets the diverse business needs of its internal customers, from software developers to customer support personnel, and how the team delivers useful, cost-effective information while maintaining performance, integrity, and data security.
