Managing My Type 1 Diabetes with Elastic Machine Learning

My name is Andreas Helmer. I am 32 years old and I live in Ontario, Canada. I am a "data geek" and I am using Elastic to help me learn about my personal data. I work at AGFA HealthCare, a global radiology IT and healthcare company, where it is my job to try to work some of the “cold data” out of our systems and software in order to analyze how well our technology is working. My work is highly enjoyable, and it is what introduced me to Elastic. The Elastic Stack allows me to parse data from some of our logs to extract all kinds of statistical information. However, I want more than just “cold data”: I want data that pertains to me.

There are 1.5 million people living in the US and Canada with Type 1 Diabetes, myself included, and analyzing, storing, reading, and understanding data is part of our daily lives. Living with Type 1 Diabetes means that we must constantly evaluate what we do on a daily basis. From eating and counting carbohydrates to exercising and even sleeping, everything we do has an impact on our blood glucose and insulin levels. We could not manage our own lives without looking at, analyzing, and interpreting our personalized data.

I was diagnosed with Type 1 Diabetes approximately 13 years ago and, to be honest, I did not take it too seriously at first. I would carb count, take my insulin, and check my blood sugars (occasionally), just like I was told to. I was managing my condition adequately until about a month before my wedding. My fiancée and I were downtown enjoying the Kitchener Blues Festival, and all I remember is turning to my fiancée and saying that I needed to go get some food. Then, I felt nothing... I had blacked out and fallen right on my face (from a standing position), apparently from hypoglycaemia. Luckily, I was OK, but I cannot really remember much of the incident. I spent the next few hours in the ER and felt fine after some rest and a restoration of my glucose levels. Even so, after having Type 1 Diabetes for almost a decade at that point, this was my first true medical emergency. Honestly, it scared me. It was not until a year or two afterwards that I purchased an insulin pump and my first continuous glucose monitor, a device that checks my blood sugar levels every five minutes. This piece of technology was a life changer, and it is what got me interested in exploring my own personal data.

(Machine) Learning about my Diabetes

Coping with and managing diabetes means interpreting a high volume of data. Each person has their own personal data set to understand, as we have different bodies and intricacies that make management of our blood sugars unique. We must continuously track our data on a daily basis. This includes measuring the carbohydrates we eat, our insulin intake, how much exercise we complete, and how much we sleep. All of this data has to be documented so that we avoid high blood sugars (hyperglycaemia), which, if left untreated, can lead to Diabetic Ketoacidosis, a life-threatening condition. Low blood sugars (hypoglycaemia) are just as dangerous, as our bodies still need sugars to function, and too much insulin can rob our bodies of this basic need. Surfing these rough waves of highs and lows is why I decided to use Elastic. It has been monumentally helpful in monitoring my personal data, and consequently in smoothing out my blood sugar levels.

What I have done is not necessarily "new", as there are a multitude of services, tools, and projects out there that could do similar data analysis. However, that is part of the underlying issue. As I carry multiple devices to help me manage my day-to-day life, I needed a platform that can understand (or that I can configure to understand) all of these different types of data... Logstash and the rest of the Elastic Stack to the rescue!

Devices Used to Gather Diabetes Data

My diabetes solution consists of four major devices: a Medtronic 630G insulin pump, a Dexcom G5 Continuous Glucose Monitor (CGM), an iPhone 8, and an Apple Watch Series 2. Each of these devices outputs data in its own format, which can become convoluted. This is why I decided to use Logstash to parse the various sources of information. Logstash makes it incredibly easy to configure all sorts of input sources (CSV, JSON, HTTP REST) and then output and index the various documents into Elasticsearch, so that I can display the information in my own customized dashboards. I wanted a solution that came from one stack and was eager to try out some of the advanced features, such as the Elastic machine learning UI within Kibana.

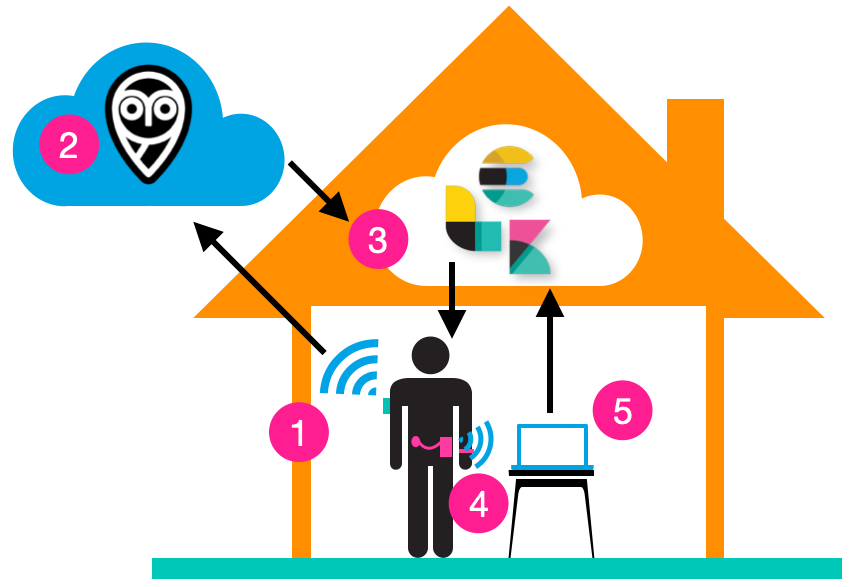

My on-the-go “live” setup consists of the following:

- The Dexcom CGM communicates via BLE every five minutes to my iPhone 8 which uses an app that uploads the information to a cloud service called Nightscout.

- Nightscout, from the Nightscout Foundation, an open source project that started the #WeWillNotWait movement. It is a service that can run on a cloud platform, such as Heroku, and allows for remote monitoring of CGM data. I highly recommend you check them out for more information, as they were the pioneers of opening up the data from these CGMs.

- My own home Elastic Stack server.

- My insulin pump and Apple Watch. Unfortunately, neither uploads data automatically, so I need to sync them manually to my home laptop.

- A MacBook on my home network to download the CSV/JSON files from the other devices.

Below is a sample of the configuration that I am using to parse the CGM. This is not anything fancy as Logstash makes this so easy. However, this allows me to get LIVE data from the sensor on my body into my home server. Within a few moments I am able to see the data in my Kibana dashboard and calculate a set of metrics that can help me stabilize my blood sugars.

    # Logstash Nightscout Poller
    input {
      http_poller {
        # Poll Nightscout every 5 minutes for the last 3 sugar value (sgv) JSON entries (15 minutes' worth of entries)
        urls => {
          nightscoutPollUrl => "https://mynightscout.herokuapp.com/api/v1/entries/sgv.json?count=3"
        }
        request_timeout => 60
        socket_timeout => 60
        schedule => { cron => "*/5 * * * *" }
        codec => "json"
      }
    }

    filter {
      mutate {
        # Manipulate some fields
        add_field => {"mmolL" => 0}
        rename => {"_id" => "id"}
        remove_tag => ["@version","id"]
        remove_field => ["dateString","sysTime","unfiltered","filtered","rssi"]
      }
      ruby {
        # SGV is by default in mg/dL so divide by 18 to get mmol/L
        code => "event.set('mmolL',event.get('sgv')/18.0)"
      }
      date {
        # Match the timestamp to the JSON object timestamp
        match => [ "date", "UNIX_MS" ]
        remove_field => ["date"]
      }
      if [device] == "share2" {
        # Junk from an old integration, don't care.
        drop{}
      }
      if [sgv] < 18 {
        # Most likely errors from a failed sensor, as "sgv < 18" indicates fatally low glucose levels.
        drop{}
      }
    }

    output {
      #stdout{}
      # Index all the things to Elasticsearch (localhost) and rely on auto templating!
      elasticsearch {
        index => "herrcgm-%{+YYYY.MM.dd}"
      }
    }
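For anyone who wants to check the filter logic outside Logstash, the same steps can be sketched in a few lines of Python. This is only an illustration, not part of my pipeline: the function name `transform_entry`, the sample entry, and its device name are hypothetical, while the field names (`sgv`, `date`, `device`, `_id`) are the ones used in the configuration above.

```python
from datetime import datetime, timezone

MGDL_PER_MMOLL = 18.0  # same divisor as the ruby filter above
DROPPED_FIELDS = ("dateString", "sysTime", "unfiltered", "filtered", "rssi")


def transform_entry(entry):
    """Return a cleaned copy of one Nightscout sgv entry, or None if it is dropped."""
    # Entries from the old "share2" integration are discarded.
    if entry.get("device") == "share2":
        return None
    sgv = entry.get("sgv")
    # Readings below 18 mg/dL are treated as sensor errors.
    # Assumption: entries without an sgv value are dropped here as well.
    if sgv is None or sgv < 18:
        return None
    doc = {k: v for k, v in entry.items() if k not in DROPPED_FIELDS}
    if "_id" in doc:
        doc["id"] = doc.pop("_id")
    doc["mmolL"] = sgv / MGDL_PER_MMOLL
    # "date" is a Unix timestamp in milliseconds (UNIX_MS in the date filter).
    doc["@timestamp"] = datetime.fromtimestamp(doc.pop("date") / 1000, tz=timezone.utc)
    return doc


# Hypothetical sample entry, shaped like the fields referenced in the config.
sample = {
    "_id": "abc123",
    "device": "example-uploader",
    "sgv": 108,
    "date": 1538352000000,
    "dateString": "2018-10-01T00:00:00.000Z",
    "rssi": 100,
}
cleaned = transform_entry(sample)
print(cleaned["mmolL"], cleaned["@timestamp"].isoformat())
```

Running a handful of saved entries through a function like this is a quick way to confirm which readings would be dropped before changing the live pipeline.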

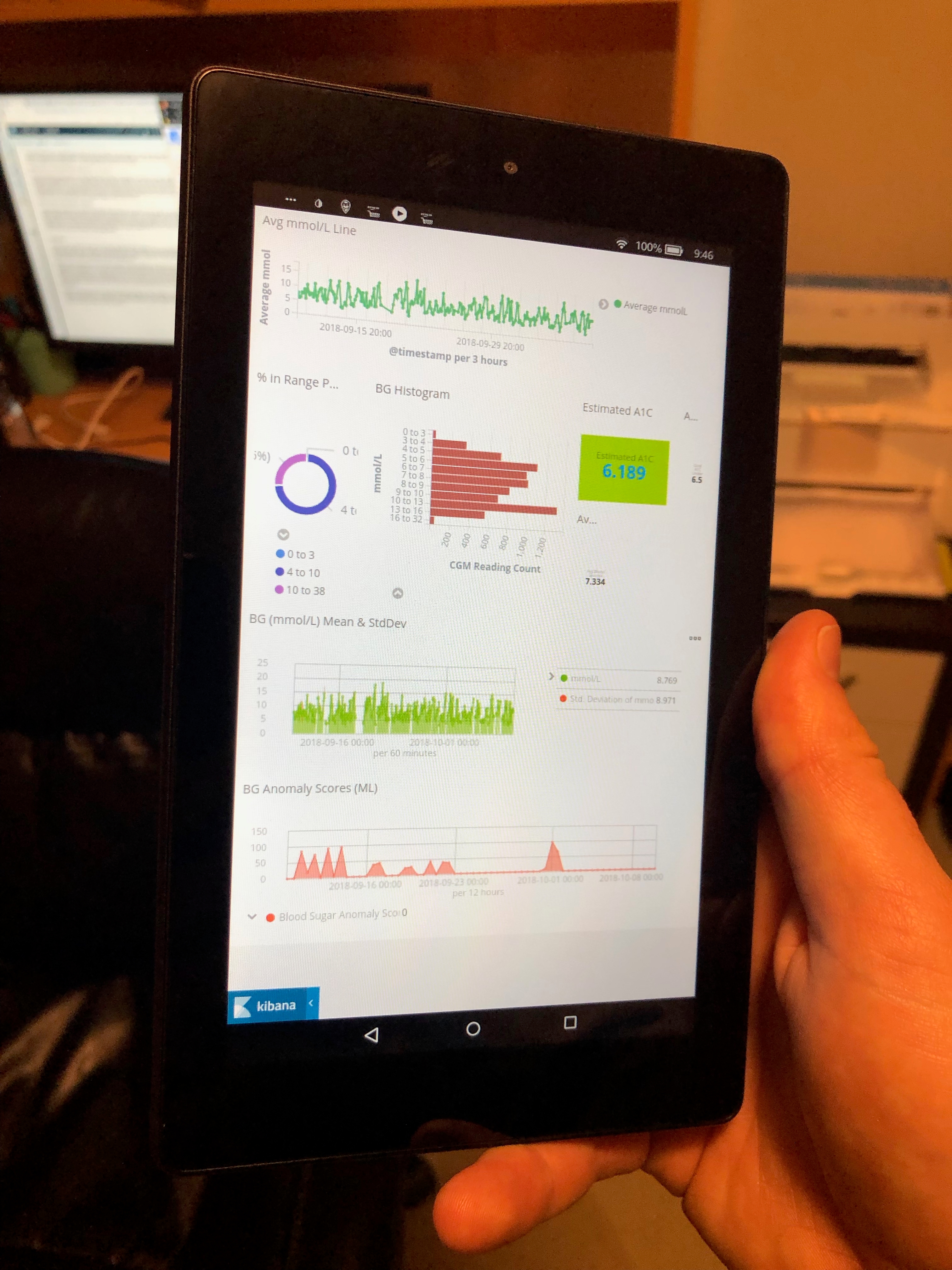

The information from my dashboard helps me predict my Hemoglobin A1C levels (the gold standard test for average blood sugar values), compare my data to the previous day and week, and even see how my overall day is progressing, i.e., live reporting at my fingertips.
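As a rough illustration of how an average glucose value maps to an estimated A1C, here is a minimal Python sketch. It assumes the widely published ADAG regression (average glucose in mg/dL = 28.7 × A1C − 46.7) and the same 18 mg/dL per mmol/L conversion used in the Logstash filter. The function names and sample readings are hypothetical, and the dashboard itself may compute its estimate differently.

```python
from statistics import mean


def estimated_a1c_from_mgdl(avg_mgdl):
    """Estimated A1C (%) from average glucose in mg/dL, using the ADAG regression."""
    return (avg_mgdl + 46.7) / 28.7


def estimated_a1c_from_mmoll(readings_mmoll):
    """Estimated A1C (%) from a list of CGM readings in mmol/L."""
    avg_mgdl = mean(readings_mmoll) * 18.0  # back to mg/dL, as in the pipeline
    return estimated_a1c_from_mgdl(avg_mgdl)


# Hypothetical readings, for illustration only.
readings = [6.5, 8.0, 9.5, 10.2, 8.6]
print(round(estimated_a1c_from_mmoll(readings), 1))
```

An estimate like this only reflects the period covered by the readings, so a longer window of CGM data gives a more meaningful number.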

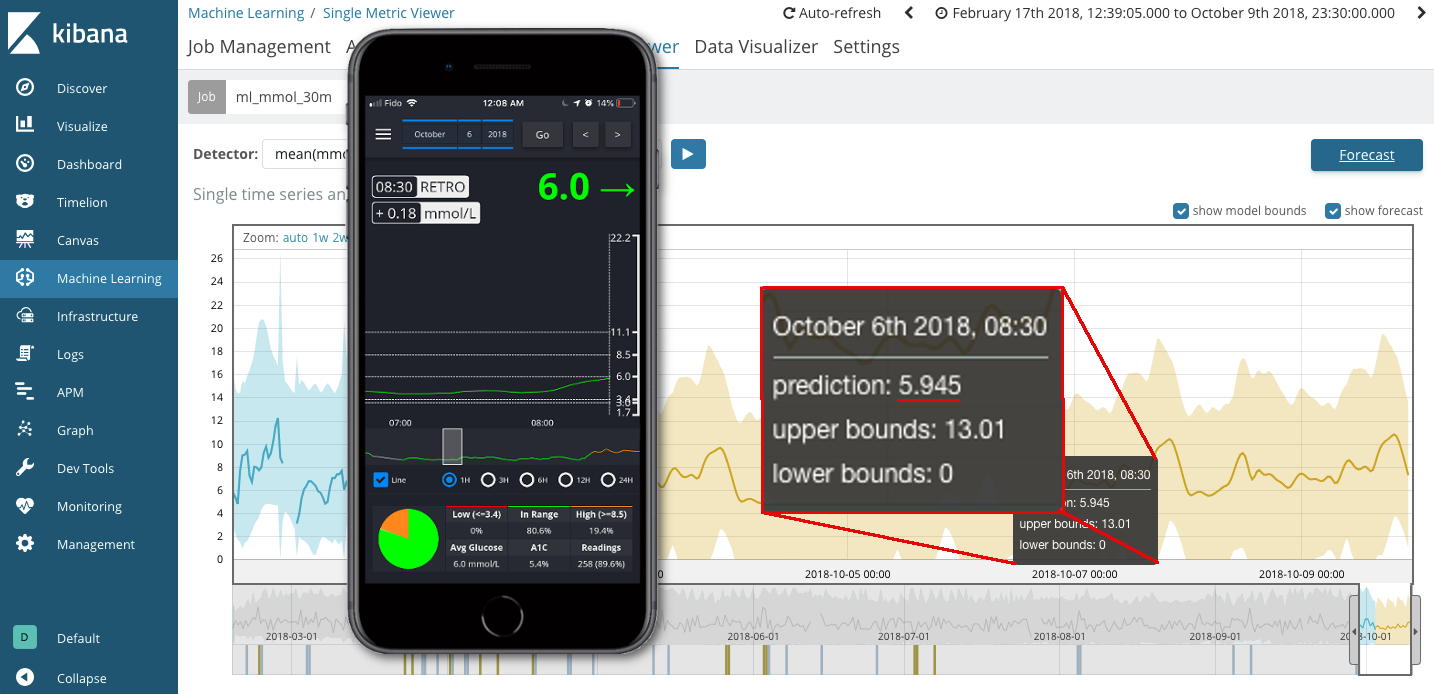

This is not even the best part! The most astonishing function is the ability to combine the Elastic machine learning functionality with all of my CGM data. The real “Aha” moment for me was the day after Elastic{ON} Toronto in October 2018, when I imported eight months' worth of data into ML and had it forecast two weeks ahead. Since I only indexed data up to the end of September, the forecasted data could be compared to actual data that I had already collected. Therefore, I randomly picked a data point from the forecast and compared it to the actual data from my phone. Take a look at what I found:

I was instantly amazed. The random point that I had picked was within a 10% margin of error of the actual reading! By comparison, my regular finger-pricking glucometer has a margin of error of 20%. Could the ML functionality really be that accurate? Of course it cannot predict the future perfectly, as every day is a different day for someone with diabetes, but I was still amazed at how predictable my own blood sugar patterns were (on a good day). What I can do now, however, is set up alerts for when my data ranges outside of the "norm" and make adjustments to help me smooth out my sugar values.
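The comparison itself is simple arithmetic, and the same goes for a basic out-of-range check. The Python sketch below shows both under stated assumptions: the forecast and actual values are made up, the function names are hypothetical, and the 4.0 to 10.0 mmol/L limits are placeholders chosen only for illustration, not the thresholds I use.

```python
def percent_error(forecast, actual):
    """Absolute difference between forecast and actual, as a percentage of actual."""
    return abs(forecast - actual) / actual * 100.0


def out_of_range(readings_mmoll, low=4.0, high=10.0):
    """Return the readings that fall outside the [low, high] band (placeholder limits)."""
    return [r for r in readings_mmoll if r < low or r > high]


# Hypothetical values: a forecast point and the reading actually recorded.
error = percent_error(forecast=6.6, actual=6.0)
print(f"{error:.1f}% off")

# Hypothetical readings checked against the placeholder band.
print(out_of_range([3.5, 5.5, 11.2]))
```

In practice the alerting would live in the stack rather than in a script, but a check like this is handy for sanity-testing thresholds against exported data.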

What's Next?

If Elastic can provide me with such an accurate live analysis of my own CGM data, what more can it help me do? Can it analyze my insulin intake? Can it help to index my food consumption? How does pizza consumption affect me?! (Hint: pizza is the worst.) Is it able to compare my heart rate data to my blood sugar values? Is it able to calculate how exercise affects me?

While I know the answers to a lot of these questions through experience and experimentation, until now I did not have a framework in place to help me analyze and explore this data. All of the work that I am doing to analyze my own health is, of course, at my own risk. By no means is this any sort of endorsement to use Elastic as a medical device. I am attempting to improve the quality of my own life by figuring out the best way to manage my diabetes using the Elastic Stack, and not simply looking at raw CPU, memory, or event ingestion data. This journey has been about turning my personal data into a far more valuable source of information.

Elastic has been paramount in helping me to effectively manage my Type 1 Diabetes. It has given me peace of mind, and it has enabled me to combine the data from all of the healthcare devices that I carry on my person daily and present it in a format that I can easily understand. Hopefully Elastic can ensure that I do not pass out at the next Blues Fest that I attend! If you'd like to ask me any questions, feel free to reach out through LinkedIn.