Rally 1.0.0 Released: Benchmark Elasticsearch Like We Do

After more than two years in the making and more than 1400 commits from 27 contributors, we are proud to announce the 1.0.0 release of Rally, our benchmarking tool for Elasticsearch. To get started with Rally, check the quickstart in the docs. To help you improve your benchmarks, we also recently published seven tips for better Elasticsearch benchmarks.

Our development team uses Rally to run regular nightly and release benchmarks to spot performance issues early during development. We also use it during feature development or to tune Elasticsearch. In this blog post we want to share with you a few highlights of Rally's current feature set.

Geared towards Elasticsearch

Rally consists of two major components:

- A load generator

- An optional component that can configure a cluster for you

While the load generator is mostly agnostic of Elasticsearch and can be extended with custom operations, the setup component is heavily geared towards Elasticsearch. It allows you to build Elasticsearch from the source tree or to set up arbitrary versions from Elasticsearch 1.x up to the latest 6.3 release. If you already use a configuration management solution, like Puppet or Ansible, you can keep using it and use Rally only to generate load and do the reporting.
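To give a rough idea of what a custom operation can look like, here is a minimal sketch. It assumes the plugin convention described in the Rally docs, where a track ships a `track.py` with a `register(registry)` function that calls `registry.register_runner`. The operation name `count-docs`, the `index` parameter, and the two stub classes are hypothetical and exist only so the example runs on its own; the exact runner signature may differ between Rally versions, so check the docs for the version you use.

```python
# Sketch of a custom Rally operation ("runner"). Names are illustrative only.

def count_docs(es, params):
    """Hypothetical custom operation: count the documents in one index.

    `es` is expected to be an Elasticsearch client and `params` the
    operation's parameters from the track (assumption: it has an "index" key).
    """
    es.count(index=params["index"])


def register(registry):
    # Rally is assumed to call this hook when it loads the track's track.py.
    registry.register_runner("count-docs", count_docs)


# --- Stand-ins so the sketch runs without Rally or a cluster ---------------

class StubRegistry:
    """Minimal stand-in for the registry object Rally passes to register()."""
    def __init__(self):
        self.runners = {}

    def register_runner(self, name, runner):
        self.runners[name] = runner


class StubClient:
    """Minimal stand-in for an Elasticsearch client; records count() calls."""
    def __init__(self):
        self.calls = []

    def count(self, index):
        self.calls.append(index)
        return {"count": 0}


registry = StubRegistry()
register(registry)

client = StubClient()
registry.runners["count-docs"](client, {"index": "logs"})
```

In a real track, only `count_docs` and `register` would live in `track.py`; the stubs stand in for what Rally and the Elasticsearch client provide.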

Flexible benchmark scenarios

Rally comes with a default set of benchmark scenarios, so-called Rally "tracks", that we use for our benchmarks. However, you can, and should, define your own tracks to get more realistic results for your specific situation.
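As a sketch of what defining your own track involves: a track is a JSON file that describes the indices, the data set, and a schedule of operations. The Python snippet below builds such a structure and prints it as JSON. The field names follow the track format as we recall it from the Rally docs and may vary between versions; the index name `logs`, the file names, and the helper `build_track` are made up for illustration, and a real track needs further details (for example the size of the data file) that are left out here.

```python
import json


def build_track(index_name, doc_file, doc_count, bulk_size=5000, clients=4):
    """Return a minimal, illustrative track definition as a plain dict.

    All values are placeholders; field names are assumed from the Rally
    track documentation and should be verified against your Rally version.
    """
    return {
        "version": 2,
        "description": "Illustrative custom track",
        "indices": [
            # "index.json" would hold the index settings and mappings.
            {"name": index_name, "body": "index.json"}
        ],
        "corpora": [
            {
                "name": index_name,
                "documents": [
                    {"source-file": doc_file, "document-count": doc_count}
                ],
            }
        ],
        "schedule": [
            {"operation": {"operation-type": "delete-index"}},
            {"operation": {"operation-type": "create-index"}},
            {
                "operation": {"operation-type": "bulk", "bulk-size": bulk_size},
                "clients": clients,
            },
            {
                "operation": {
                    "operation-type": "search",
                    "body": {"query": {"match_all": {}}},
                },
                "clients": 1,
                "iterations": 100,
            },
        ],
    }


track = build_track("logs", "documents.json", 1000)
print(json.dumps(track, indent=2))
```

The point of writing the schedule yourself is that the bulk size, client count, and queries can mirror your production workload rather than our defaults.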

Reporting

While Rally provides basic reporting out of the box, you can also set up a dedicated Elasticsearch metrics store. Rally will then store every sample there along with its metadata. That helps you to better document your benchmarks and allows you to define custom visualizations in Kibana.

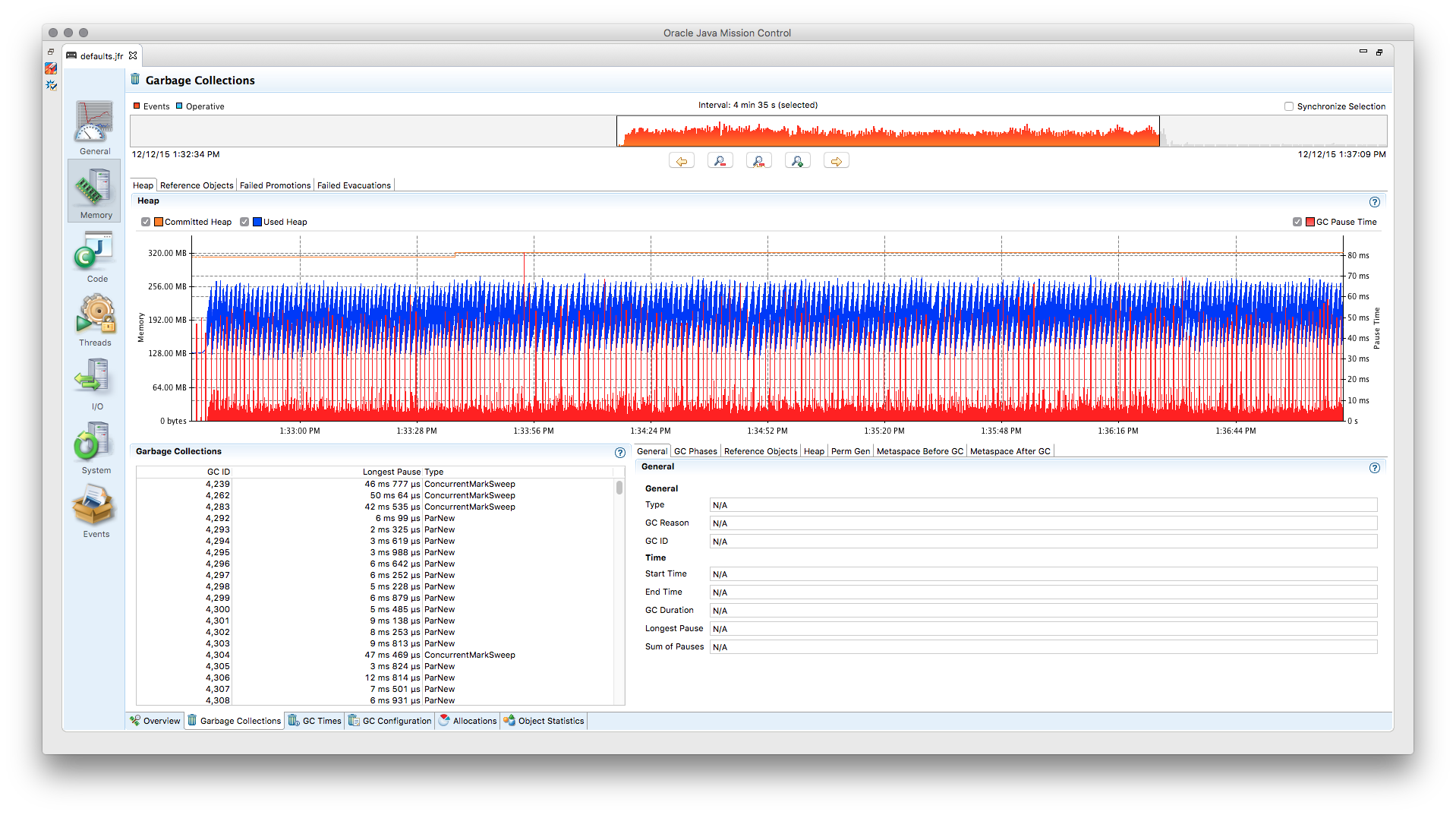

Gathering Insights with Telemetry

Often it is not enough to know that an operation is slow; we need to be able to dig deeper. That's why Rally has built-in telemetry that provides more insight into cluster internals.

Where are we headed?

While the 1.0.0 release marks an important milestone for us, we have many exciting topics ahead:

- We want to simplify creating benchmarks based on your workloads, for example by providing a bulk data file generator or creating benchmarks based on your slow query log.

- We also want to improve reporting capabilities by providing a dashboard generator for your benchmarks.

The image at the top of the post has been provided by Andrew Basterfield under the CC BY-SA license (original source). The image has been colorized differently than the original but is otherwise unaltered.