Elastic App Search allows developers to bring the power of Elasticsearch to mobile apps in a pretuned search experience.

When parsing body text during data ingestion, the App Search crawler extracts all the content from the specified website and distributes it across fields based on the HTML tags it finds. Text within title tags becomes the title field, anchor tags are parsed as links, and the body is parsed as one giant field containing everything else.
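To see why a value like a price disappears into the body, here is a deliberately simplified JavaScript model of that default mapping. It is an illustration under our own assumptions, not the crawler's actual implementation: the function name `naiveExtract` is hypothetical, and regular expressions stand in for a real HTML parser.

```javascript
// Toy model (hypothetical, NOT the App Search crawler's real code) of the
// default mapping: <title> -> title, <a href> -> links, everything else -> body.
function naiveExtract(html) {
  const titleMatch = html.match(/<title>([\s\S]*?)<\/title>/i);
  const title = titleMatch ? titleMatch[1].trim() : "";

  // Collect the href of every anchor tag.
  const links = [...html.matchAll(/<a\s[^>]*href="([^"]*)"/gi)].map((m) => m[1]);

  // Everything inside <body>, with tags stripped, becomes one big text field.
  const bodyMatch = html.match(/<body[^>]*>([\s\S]*?)<\/body>/i);
  const body = (bodyMatch ? bodyMatch[1] : "")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();

  return { title, links, body };
}

const page = `<html><head><title>Printer Page</title></head>
<body><h1>Printer</h1><div class="title">Price</div><div class="value">2.99</div></body></html>`;

console.log(naiveExtract(page).body); // → Printer Price 2.99
```

In this model the price has no field of its own; it is just part of the body text.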

But what if a website has a custom structure — for example, the color, size, and price included on product pages — that you want to capture in specific fields and not as part of a single body field?

App Search allows you to add meta tags to your website to create custom fields, but sometimes making changes to the website is too complicated or simply not possible.

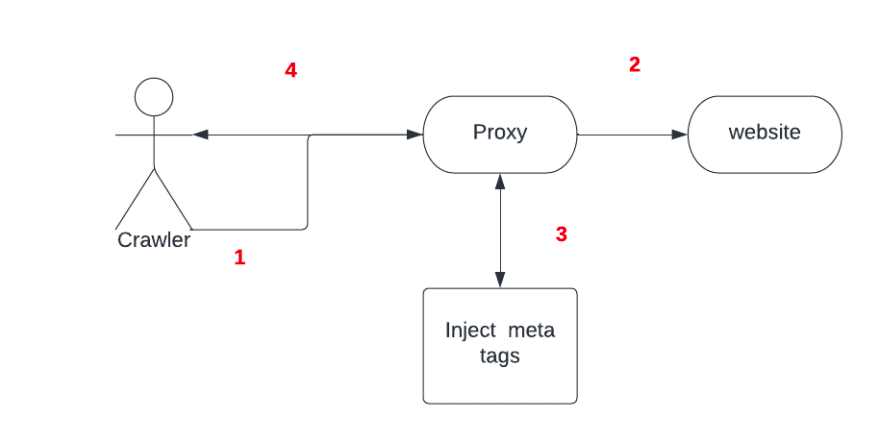

This post walks through the key components of a proxy that sits between the crawler and the website: it extracts the data, creates the needed meta tags, and injects them into the response so App Search can pick up the tags and use them as fields.


The body parsing solution

To solve the body parsing problem, you can create a Node.js server that hosts a product page, plus a proxy that sits in front of it. The proxy receives the crawler's request, fetches the product page, injects the meta tags, and returns the page to the crawler.

Here’s how to add custom fields, either with a meta tag in the `<head>` or with a `data-elastic-name` attribute in the body:

```html
<head>
  <meta class="elastic" name="product_price" content="99.99">
</head>
<body>
  <h1 data-elastic-name="product_name">Printer</h1>
</body>
```

Tools

- Node.js (to create the example page and proxy)
- Ngrok (to expose the local proxy to the internet)
- App Search (to crawl the page)

The page

Following the example, the first step is to serve a page that emulates a product page for a printer.

index.html

```html
<html>
  <head>
    <title>Printer Page</title>
  </head>
  <body>
    <h1>Printer</h1>
    <div class="price-container">
      <div class="title">Price</div>
      <div class="value">2.99</div>
    </div>
  </body>
</html>
```

server.js

```javascript
const express = require("express");

const app = express();

// Serve the product page on port 1337.
app.listen(1337, () => {
  console.log("Application started and listening on port 1337");
});

// Return index.html for requests to the root path.
app.get("/", (req, res) => {
  res.sendFile(__dirname + "/index.html");
});
```

Afterward, use App Search to crawl the page. The data you want as separate fields (like the price) ends up inside the body content field.

Next, create a proxy that recognizes this data and injects field markup into the response, so App Search can recognize it as a field. The proxy below does this by adding a `data-elastic-name="price"` attribute to the price element.

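proxy.js

```javascript
const http = require("http");
const connect = require("connect");
const httpProxy = require("http-proxy");

const app = connect();

// Forward every request to the product page served by server.js.
const proxy = httpProxy.createProxyServer({
  target: "http://localhost:1337",
});

// The rewrite below makes the body longer than the Content-Length header
// the upstream server sent, so drop that header and let Node fall back to
// chunked transfer encoding.
proxy.on("proxyRes", (proxyRes) => {
  delete proxyRes.headers["content-length"];
});

// Wrap res.write so each chunk of proxied HTML is rewritten on its way to
// the crawler. Assumes UTF-8 text and that class="value" is not split
// across chunks, which holds for this small page.
app.use(function (req, res, next) {
  const _write = res.write;

  res.write = function (data) {
    return _write.call(
      res,
      data
        .toString()
        // Tag the price element as a custom App Search field.
        .replace('class="value"', 'class="value" data-elastic-name="price"')
    );
  };

  next();
});

// Hand the request to the proxy.
app.use(function (req, res) {
  proxy.web(req, res);
});

http.createServer(app).listen(8013);

console.log("http proxy server started on port 8013");
```

Finally, start the server and the proxy, expose the proxy with Ngrok, and point the App Search crawler at the Ngrok URL. Voilà! The price is now a separate field.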
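The proxy's `.replace('class="value"', ...)` call is hard-coded to one class and, because it uses a string pattern, only rewrites the first match. As a sketch under our own assumptions, the rewrite can be pulled into a pure function that takes a class-to-field mapping and tags every occurrence. `addFieldAttributes` and the mapping shape are hypothetical, and the regex only matches an exact `class="..."` attribute holding a single class name, so it is not a general HTML parser.

```javascript
// Escape regex metacharacters so class names are matched literally.
function escapeRegExp(text) {
  return text.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Hypothetical helper: for every class -> field pair, add
// data-elastic-name="<field>" to every element whose class attribute is
// exactly class="<className>" (single class, double quotes). Field names
// are assumed to come from trusted configuration, so they are not escaped.
function addFieldAttributes(html, classToField) {
  let result = html;
  for (const [className, field] of Object.entries(classToField)) {
    const pattern = new RegExp(`class="${escapeRegExp(className)}"`, "g");
    result = result.replace(
      pattern,
      () => `class="${className}" data-elastic-name="${field}"`
    );
  }
  return result;
}

const sample = '<div class="title">Price</div><div class="value">2.99</div>';
console.log(addFieldAttributes(sample, { value: "price" }));
// → <div class="title">Price</div><div class="value" data-elastic-name="price">2.99</div>
```

In proxy.js, the middleware could call `addFieldAttributes(data.toString(), { value: "price" })` in place of the hard-coded replace. Each chunk is still rewritten independently, so an attribute split across two chunks would be missed; buffering the whole response before rewriting avoids that.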

The middleware that transforms the response body can be as sophisticated as you need: it can add meta tags based on existing classes, but also based on the content itself.
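As an example of a content-based rule, the sketch below finds the price by the visible word "Price" rather than by any class name, then injects a meta tag into the `<head>`. It assumes the price is a plain number (optionally preceded by a symbol such as `$`) that follows that word in the page text; `injectPriceFromContent` is a hypothetical name, `product_price` is borrowed from the meta tag example above, and a production version would use a real HTML parser instead of regular expressions.

```javascript
// Hypothetical content-based rule: find a number that follows the word
// "Price" in the visible body text and expose it as a meta tag.
function injectPriceFromContent(html) {
  const bodyMatch = html.match(/<body[^>]*>([\s\S]*?)<\/body>/i);
  if (!bodyMatch) return html;

  // Strip tags so the rule works on visible text, whatever the markup.
  const text = bodyMatch[1].replace(/<[^>]+>/g, " ");
  const priceMatch = text.match(/\bprice\b\W*?(\d+(?:\.\d+)?)/i);
  if (!priceMatch) return html;

  // The captured value holds only digits and a dot, so it is safe to place
  // inside the attribute without escaping.
  const meta = `<meta class="elastic" name="product_price" content="${priceMatch[1]}">`;
  return html.replace(/<\/head>/i, () => `${meta}\n</head>`);
}

const page =
  '<html><head><title>Printer Page</title></head><body><h1>Printer</h1>' +
  '<div class="price-container"><div class="title">Price</div>' +
  '<div class="value">2.99</div></div></body></html>';
console.log(injectPriceFromContent(page));
```

Because the rule keys on text rather than markup, it keeps working if the page's class names change, at the cost of depending on the wording of the label.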