Transform overview

editTransform overview

editYou can use transforms to pivot your data into a new entity-centric index. By transforming and summarizing your data, it becomes possible to visualize and analyze it in alternative and interesting ways.

A lot of Elasticsearch indices are organized as a stream of events: each event is an individual document, for example a single item purchase. Transforms enable you to summarize this data, bringing it into an organized, more analysis-friendly format. For example, you can summarize all the purchases of a single customer.

Transforms enable you to define a pivot, which is a set of features that transform the index into a different, more digestible format. Pivoting results in a summary of your data in a new index.

To define a pivot, first you select one or more fields that you will use to group your data. You can select categorical fields (terms) and numerical fields for grouping. If you use numerical fields, the field values are bucketed using an interval that you specify.

The second step is deciding how you want to aggregate the grouped data. When using aggregations, you practically ask questions about the index. There are different types of aggregations, each with its own purpose and output. To learn more about the supported aggregations and group-by fields, see Create transform.

As an optional step, you can also add a query to further limit the scope of the aggregation.

The transform performs a composite aggregation that paginates through all the data defined by the source index query. The output of the aggregation is stored in a destination index. Each time the transform queries the source index, it creates a checkpoint. You can decide whether you want the transform to run once or continuously. A batch transform is a single operation that has a single checkpoint. Continuous transforms continually increment and process checkpoints as new source data is ingested.

Imagine that you run a webshop that sells clothes. Every order creates a document that contains a unique order ID, the name and the category of the ordered product, its price, the ordered quantity, the exact date of the order, and some customer information (name, gender, location, etc). Your dataset contains all the transactions from last year.

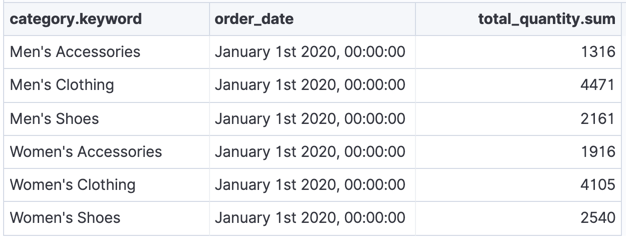

If you want to check the sales in the different categories in your last fiscal year, define a transform that groups the data by the product categories (women’s shoes, men’s clothing, etc.) and the order date. Use the last year as the interval for the order date. Then add a sum aggregation on the ordered quantity. The result is an entity-centric index that shows the number of sold items in every product category in the last year.

The transform leaves your source index intact. It creates a new index that is dedicated to the transformed data.

Performance considerations

editTransforms perform search aggregations on the source indices then index the results into the destination index. Therefore, a transform never takes less time or uses less resources than the aggregation and indexing processes.

If your transform must process a lot of historic data, it has high resource usage initially—particularly during the first checkpoint.

For better performance, make sure that your search aggregations and queries are optimized and that your transform is processing only necessary data. Consider whether you can apply a source query to the transform to reduce the scope of data it processes. Also consider whether the cluster has sufficient resources in place to support both the composite aggregation search and the indexing of its results.

If you prefer to spread out the impact on your cluster (at the cost of a slower

transform), you can throttle the rate at which it performs search and index

requests. Set the docs_per_second limit when you create or

update your transform. If you want to calculate the

current rate, use the following information from the

get transform stats API:

documents_processed / search_time_in_ms * 1000