Transforming observability with the AI Assistant, OTel standardization, continuous profiling, and enhanced log analytics

Elastic Observability delivers precise insights with the AI Assistant, the best OpenTelemetry (OTel)-standardized solution, profiling enhancements, and comprehensive log analytics for faster problem resolution.

New AI and generative AI solutions have ushered in a new era of observability. As these AI technologies become ubiquitous, observability is shifting from a manual, reactive practice to an AI-driven approach that diagnoses and resolves problems automatically.

The days when monolithic applications ran in data centers and were updated only rarely are long gone. Operations teams used to rely on server, network, and storage tools to monitor their technology silos; they had to analyze data manually and coordinate with other teams on conference bridges to identify, assess, and resolve problems. With the arrival of the cloud, with its complexity, abstracted infrastructure, and faster development cycles, operations and SRE teams need observability to get a handle on these new "unknown unknowns." And although observability tools have eased these challenges somewhat, much of the work is still done manually and is hampered by tool silos and exponentially rising costs.

Through years of experience and innovation in areas such as AI and machine learning (ML), vector databases, the Elasticsearch Relevance Engine™ (ESRE), and retrieval augmented generation (RAG), Elastic is well positioned to support IT teams in this new era of AI-assisted observability: delivering metrics, logs, traces, and profiling in a single platform and generating actionable insights.

Kelly Fitzpatrick, Senior Analyst at RedMonk:

Observability in 2023 challenges us to reconcile groundbreaking new technologies and new standards with increasingly complex systems. Elastic helps organizations meet these challenges with tools built specifically for this constantly shifting operational environment. With the AI Assistant for Observability, a renewed commitment to OpenTelemetry, and the general availability of Universal Profiling, SRE teams can better manage the cost and complexity of their systems with Elastic.

Interactive Elastic AI Assistant for comprehensive operational data with contextual, actionable insights

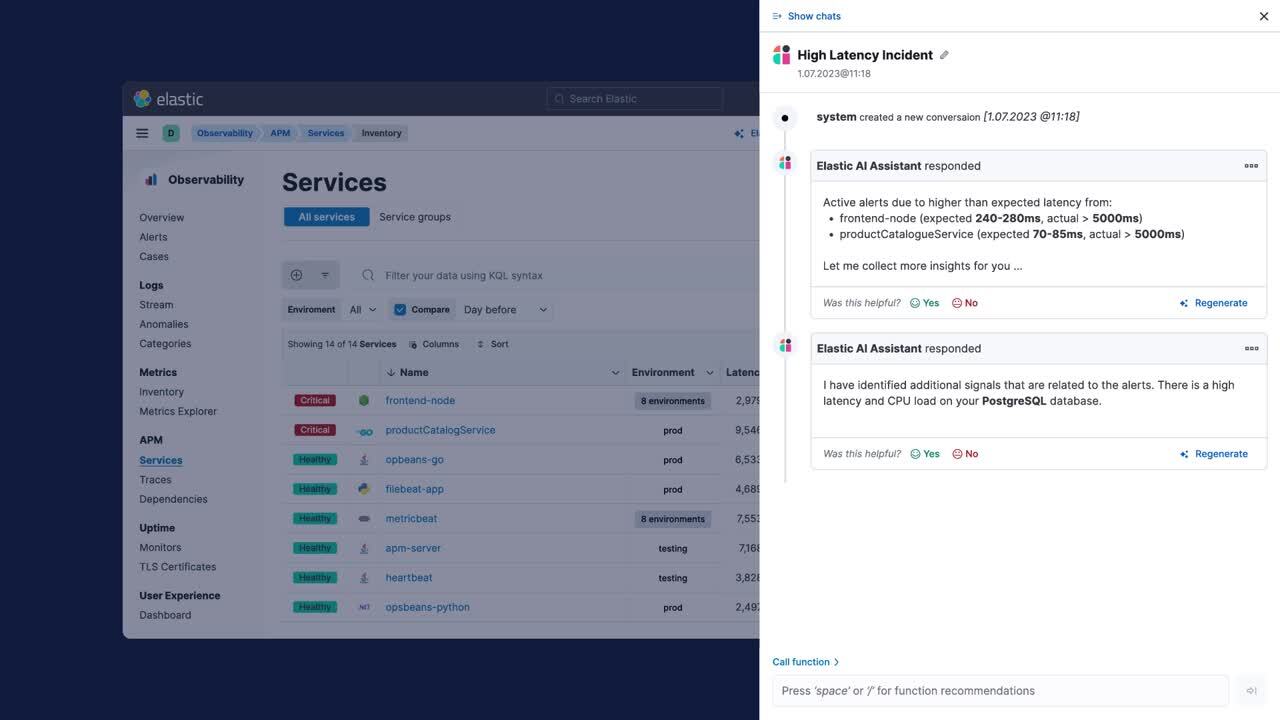

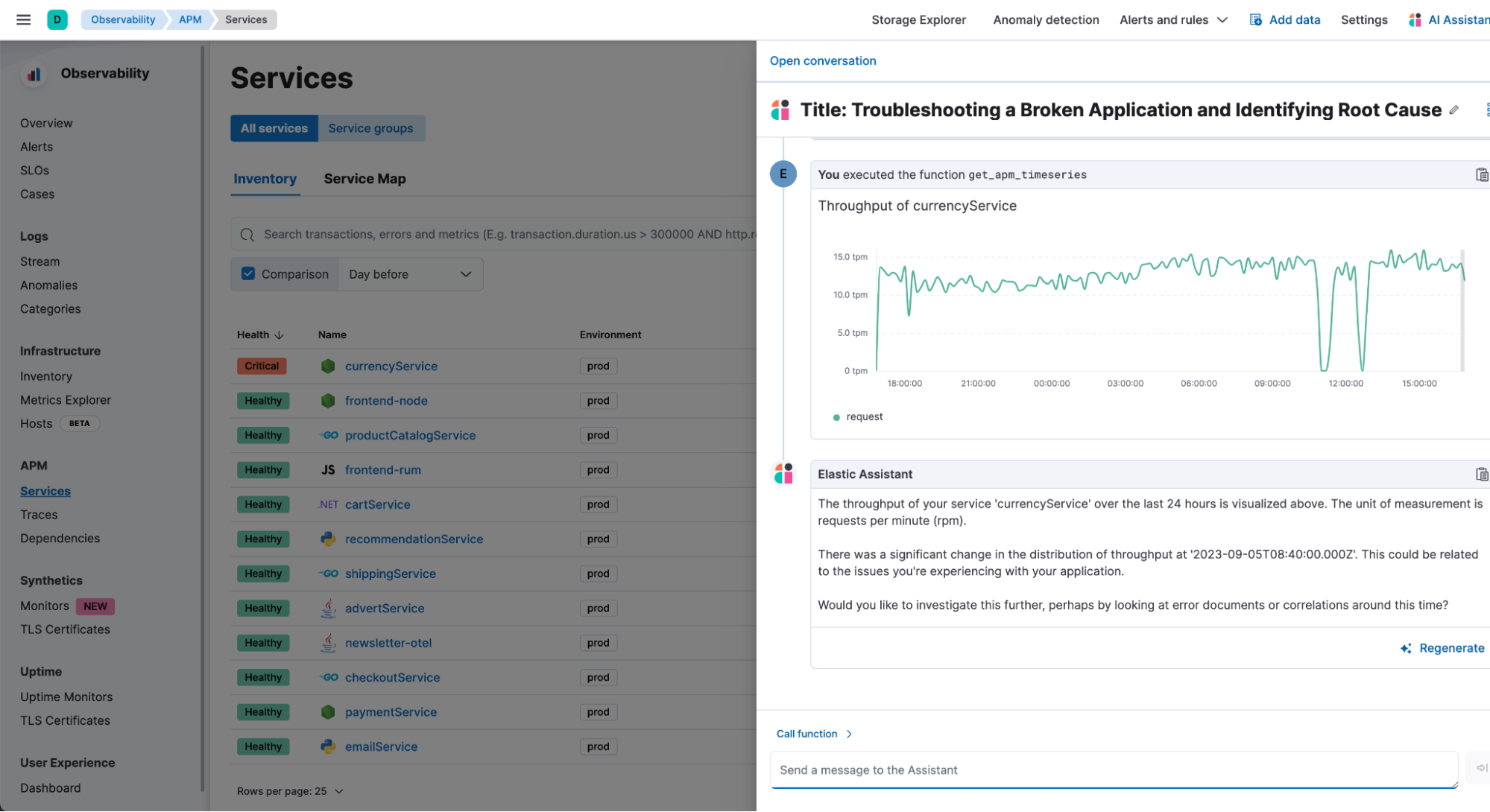

Elastic combines its long-standing machine learning expertise with integrations into generative AI platforms to transform observability with relevant, contextual AI-powered insights. The Elastic AI Assistant for Observability (available as a technical preview) uses the Elasticsearch Relevance Engine (ESRE) to improve understanding of application errors, log messages, and alerts, and to suggest ways to make code more efficient. In addition, SREs can converse with the Elastic AI Assistant through its interactive chat interface, visualize all relevant telemetry in one place, and at the same time draw on proprietary internal information for remediation.

Elastic lets you integrate private data such as runbooks, past incident history, case histories, and more. With an inference processor based on the Elastic Learned Sparse EncodeR (ELSER), the Assistant gets access to the most relevant data for answering questions or completing tasks. The Assistant keeps learning, expanding its knowledge base through continued use and guided learning. SREs can teach the Assistant how to handle specific problems so that it can help with those scenarios in the future, for example by creating outage reports, updating runbooks, and extending automated remediation. By combining the Elastic AI Assistant with machine learning, SREs can identify and resolve problems quickly and proactively, eliminating tedious, manual data gathering across silos.
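ELSER represents text as a sparse set of weighted tokens, and retrieval scores a document roughly by how strongly its tokens overlap with the expanded query. The following is a minimal sketch of that scoring idea in plain Python, assuming hand-written token weights; the `runbooks` data and the `sparse_score` and `top_k` helpers are hypothetical and do not call ELSER or any Elastic API.

```python
from typing import Dict, List, Tuple

# Sparse representation: token -> weight. The weights below are invented for
# illustration; a learned sparse encoder would compute them from the text.
SparseVector = Dict[str, float]

# Hypothetical private knowledge base (for example, runbooks and incident notes).
runbooks: Dict[str, SparseVector] = {
    "restart-payment-service": {"payment": 1.4, "timeout": 0.9, "restart": 1.2},
    "rotate-db-credentials": {"database": 1.3, "credential": 1.5, "auth": 0.8},
    "scale-checkout-pods": {"checkout": 1.1, "latency": 1.0, "scale": 1.3},
}


def sparse_score(query: SparseVector, doc: SparseVector) -> float:
    """Dot product over the tokens that query and document share."""
    return sum(weight * doc[token] for token, weight in query.items() if token in doc)


def top_k(query: SparseVector, docs: Dict[str, SparseVector], k: int = 2) -> List[Tuple[str, float]]:
    """Rank documents by sparse score and keep the k best non-zero matches."""
    scored = [(name, sparse_score(query, vec)) for name, vec in docs.items()]
    scored = [item for item in scored if item[1] > 0]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# An "expanded" query: related terms carry weight too, not just literal words.
query = {"payment": 1.0, "timeout": 0.7, "latency": 0.4}
for name, score in top_k(query, runbooks):
    print(f"{name}: {score:.2f}")
```

In a RAG flow, the top-ranked passages would then be added to the LLM prompt as context, which is how private knowledge can inform an answer without retraining the model.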

By supplying internal, business-specific information to the LLMs, the Elastic AI Assistant delivers highly relevant results that speed up problem identification and resolution, acting as an AIOps turbocharger for your teams.

Learn more about how the Elastic AI Assistant delivers contextual insights for resolving observability issues.

Greater operational efficiency with OpenTelemetry standardization, improved log analytics, and a new signal: Universal Profiling

Elastic's renewed commitment to OpenTelemetry

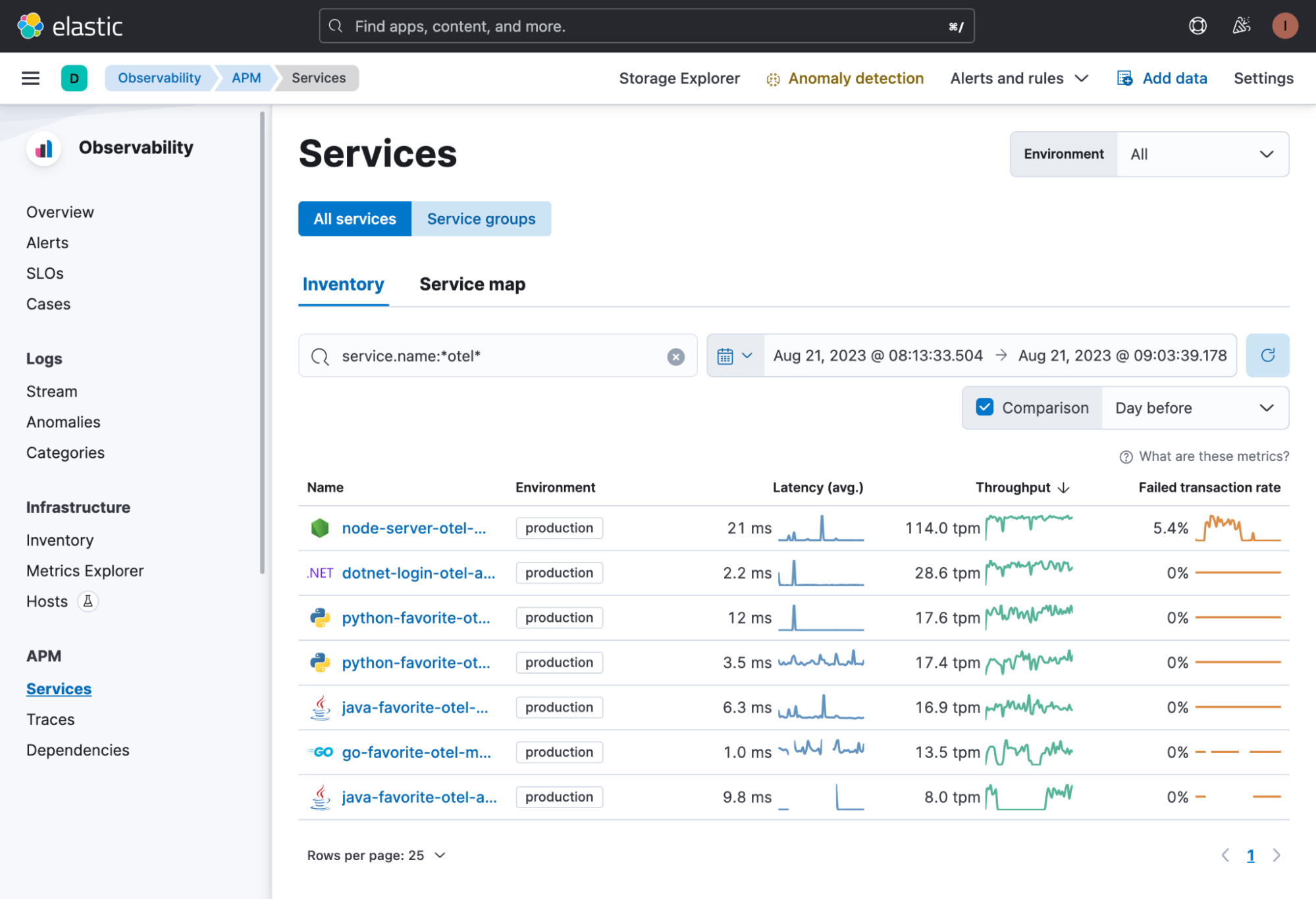

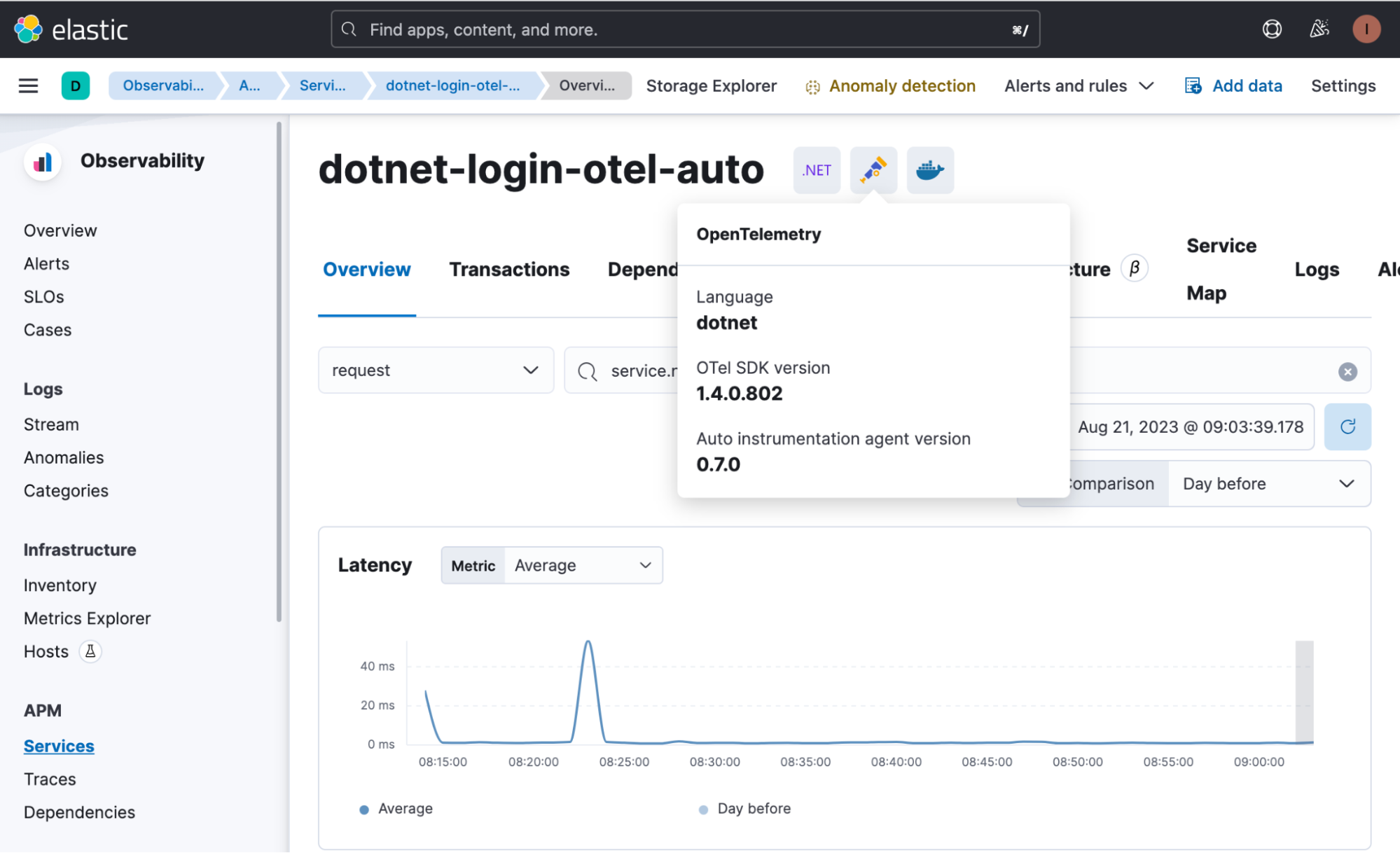

Elastic supports OpenTelemetry natively and is preparing for a future in which OTel is the logical choice of schema and data collection architecture for Elastic Observability and Elastic Security. Elastic contributed the Elastic Common Schema (ECS) to OpenTelemetry (OTel), and with this investment is fully committed to establishing OpenTelemetry as the industry standard. This lets customers adopt open standards and benefit from open ingestion. Elastic continues to contribute to OpenTelemetry to give SREs standardized ways to ingest their metrics, logs, and traces, reduce costs, and increase their visibility and vendor neutrality.

Learn how to use OpenTelemetry and Elastic to instrument popular languages, adopt a standardized, open log format (ECS), and analyze data with AI and ML to achieve vendor neutrality with OpenTelemetry.
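To make the idea of a standardized log format concrete, here is a minimal sketch of a Python `logging.Formatter` that emits one JSON object per line using a handful of ECS field names (`@timestamp`, `log.level`, `log.logger`, `message`, `service.name`, `ecs.version`, `error.stack_trace`). It is a simplified stand-in, not Elastic's official ECS logging libraries; the `EcsJsonFormatter` class, the `checkout` service name, and the `ecs.version` value are assumptions to adapt to your setup.

```python
import json
import logging
from datetime import datetime, timezone


class EcsJsonFormatter(logging.Formatter):
    """Hypothetical formatter that renders records with a few ECS field names.

    Assumption: the field subset and the ecs.version value are illustrative;
    the official ECS logging libraries cover much more of the schema.
    """

    def __init__(self, service_name: str) -> None:
        super().__init__()
        self.service_name = service_name

    def format(self, record: logging.LogRecord) -> str:
        doc = {
            # ISO 8601 timestamp in UTC with millisecond precision.
            "@timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc)
            .isoformat(timespec="milliseconds")
            .replace("+00:00", "Z"),
            "log.level": record.levelname.lower(),
            "log.logger": record.name,
            "message": record.getMessage(),
            "service.name": self.service_name,  # hypothetical service name
            "ecs.version": "1.6.0",             # assumed schema version
        }
        if record.exc_info:
            # Keep the stack trace in one field instead of extra log lines.
            doc["error.stack_trace"] = self.formatException(record.exc_info)
        return json.dumps(doc)


handler = logging.StreamHandler()
handler.setFormatter(EcsJsonFormatter(service_name="checkout"))
logger = logging.getLogger("shop.checkout")
logger.addHandler(handler)
logger.setLevel(logging.INFO)

logger.info("order %s placed", "A-1001")
```

Because every line is a self-describing JSON document with agreed-upon field names, a shipper can ingest it without a custom parsing rule per application.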

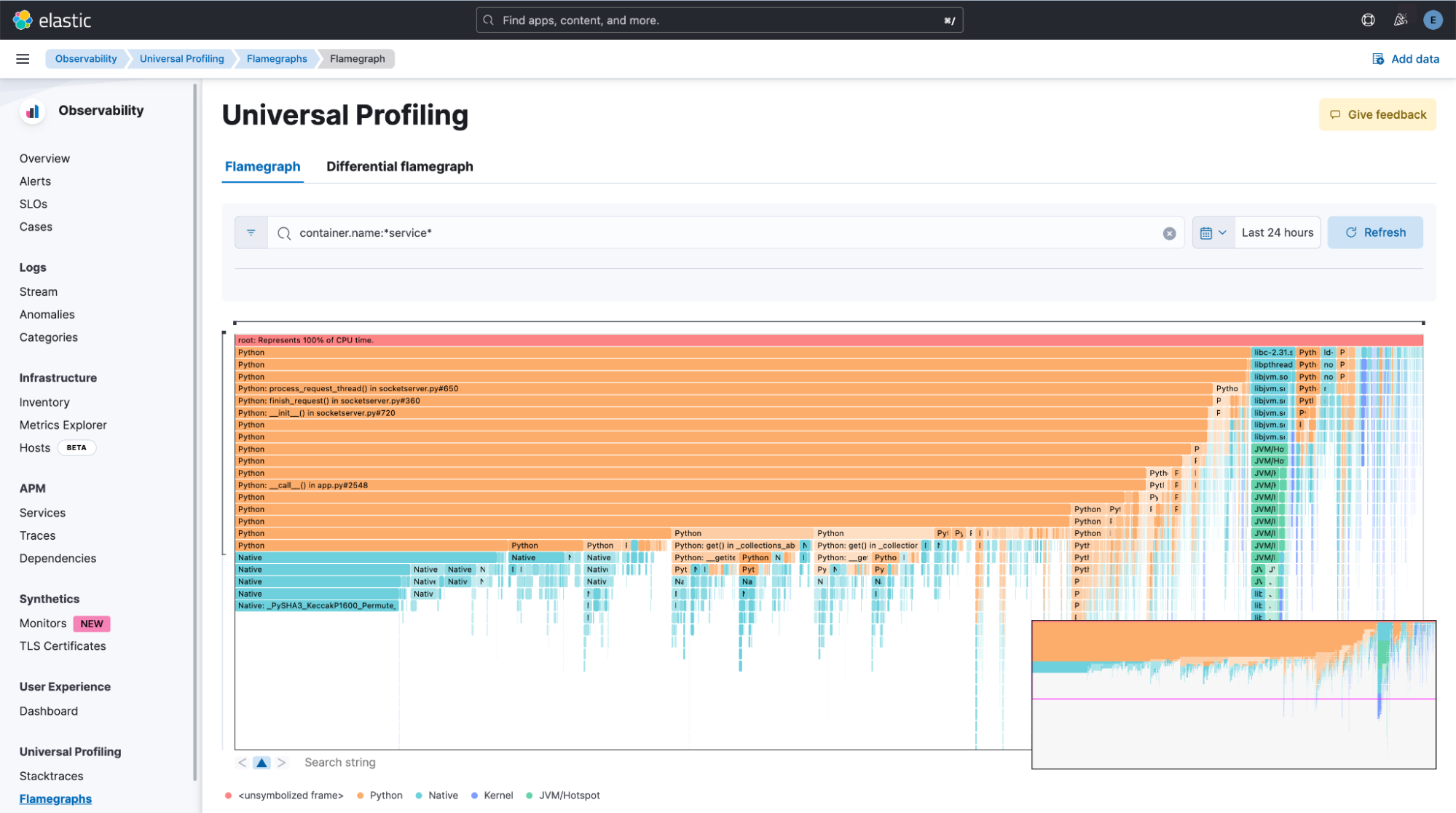

Optimized, efficient computing with Universal Profiling

Elastic Universal Profiling™ is now generally available, enabling organizations to control their costs, optimize their resources, and make their growth sustainable. Complex cloud-native environments often leave SRE teams with blind spots because many components cannot be instrumented. Universal Profiling is always on, requires zero instrumentation, and adds very low overhead; it pinpoints performance bottlenecks and provides visibility into third-party libraries, speeding up problem resolution and helping organizations reduce their cloud costs and the carbon footprint of their infrastructure. Universal Profiling gives SREs a comprehensive view of their most resource-intensive code so they can optimize compute cycles and quickly identify and fix bottlenecks.
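Sampling profilers find bottlenecks by periodically capturing call stacks instead of instrumenting code. As a minimal sketch of why sampling still surfaces hot code, the following aggregates invented stack samples into "self" and "total" counts per function, the numbers a flame graph visualizes. The sample data and the `aggregate` helper are hypothetical; Universal Profiling collects stacks across the whole system using eBPF, which this code does not model.

```python
from collections import Counter
from typing import Iterable, List, Tuple

# Each sample is a call stack from root to leaf, captured at a fixed interval.
# These samples are invented for illustration.
samples: List[Tuple[str, ...]] = [
    ("main", "handle_request", "parse_json"),
    ("main", "handle_request", "parse_json"),
    ("main", "handle_request", "query_db"),
    ("main", "handle_request", "parse_json"),
    ("main", "gc"),
]


def aggregate(stacks: Iterable[Tuple[str, ...]]) -> Tuple[Counter, Counter]:
    """Return (self, total) sample counts per function."""
    self_counts: Counter = Counter()
    total_counts: Counter = Counter()
    for stack in stacks:
        self_counts[stack[-1]] += 1   # the leaf frame was running on the CPU
        for frame in set(stack):      # count each function once per sample
            total_counts[frame] += 1
    return self_counts, total_counts


self_counts, total_counts = aggregate(samples)
n = len(samples)
for func, count in self_counts.most_common():
    print(f"{func:<15} self {count / n:5.0%}  total {total_counts[func] / n:5.0%}")
```

A function with a high self share is usually the most direct optimization target, since that is where CPU cycles are actually spent; a high total share with low self time points at the callers that lead there.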

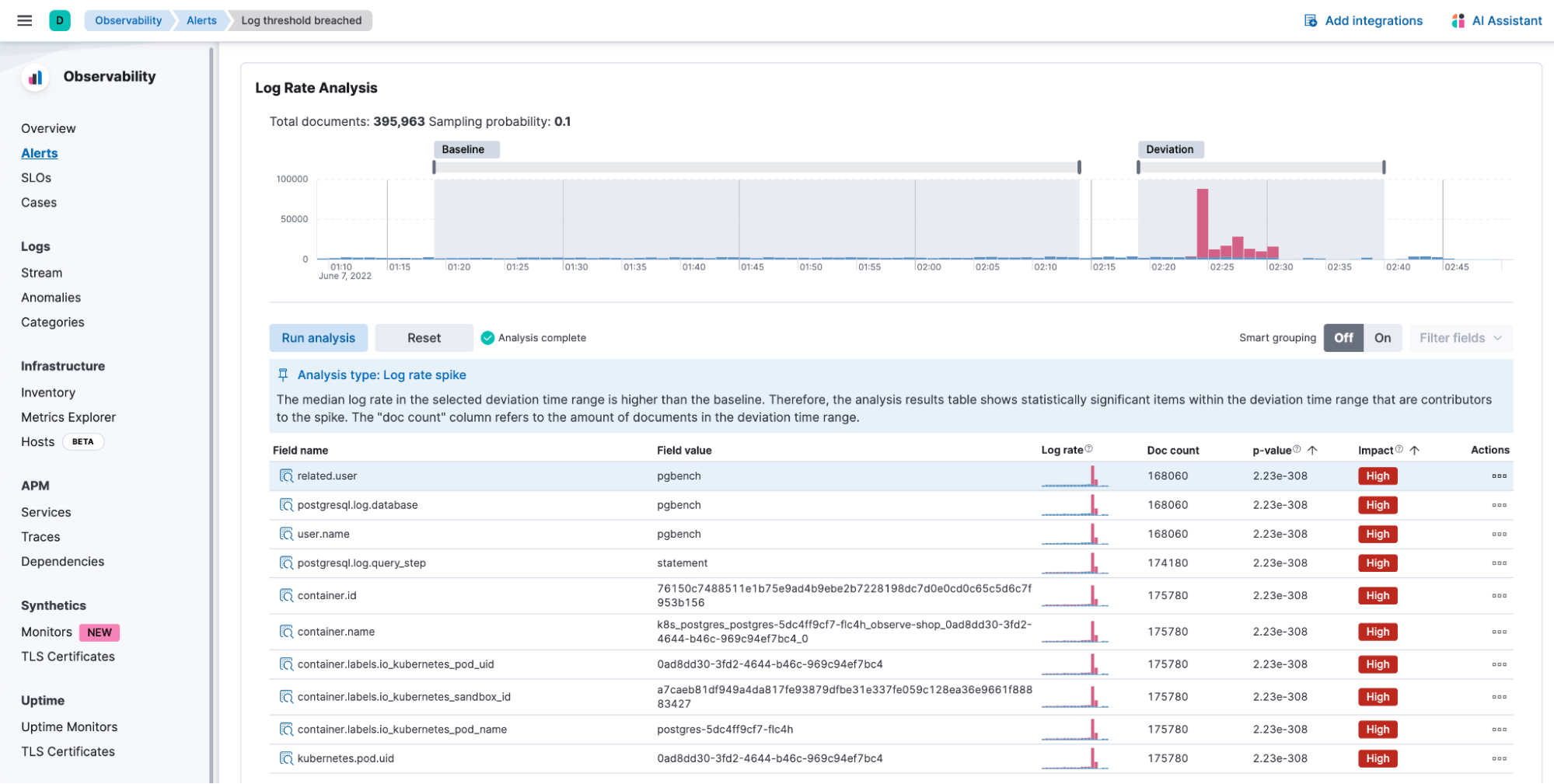

Enhanced log analytics journey

With Elastic's enhanced log analytics journey, SREs can automatically categorize their logs with the unique log routing processor, further process and enrich log data, and augment log analytics with AI. Elastic's machine learning algorithms give SREs automated analysis of log rate spikes, pattern and anomaly detection, and change point detection. Log ingestion in Elastic Observability now supports open ingestion with OpenTelemetry as well as hundreds of out-of-the-box integrations and custom formats, each optimized for cost and performance. Elastic's enhanced log journey enables lower storage costs, greater operational efficiency, and faster problem resolution.
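Log categorization groups messages that differ only in their variable parts. As a minimal sketch of the general idea, and not Elastic's categorization or pattern-analysis algorithm, the following masks a few kinds of variable tokens with regular expressions and counts the resulting templates; the `MASKS` rules, the helper names, and the sample lines are all assumptions.

```python
import re
from collections import Counter
from typing import Iterable

# Masking rules applied in order; more specific patterns come first.
# These rules are illustrative assumptions, not a complete tokenizer.
MASKS = [
    (re.compile(r"\b\d{1,3}(?:\.\d{1,3}){3}\b"), "<IP>"),
    (re.compile(r"\b0x[0-9a-fA-F]+\b"), "<HEX>"),
    (re.compile(r"\b\d+(?:\.\d+)?(?:ms|s)?\b"), "<NUM>"),
]


def to_template(message: str) -> str:
    """Replace variable tokens so similar messages collapse to one template."""
    for pattern, placeholder in MASKS:
        message = pattern.sub(placeholder, message)
    return message


def categorize(messages: Iterable[str]) -> Counter:
    """Count how many messages fall into each template."""
    return Counter(to_template(m) for m in messages)


logs = [
    "Connection from 10.0.0.12 timed out after 3000ms",
    "Connection from 10.0.0.57 timed out after 2500ms",
    "User 4411 logged in",
    "User 982 logged in",
    "Connection from 192.168.1.4 timed out after 3100ms",
]
for template, count in categorize(logs).most_common():
    print(count, template)
```

Once messages collapse into templates, a sudden jump in one template's count over time is the kind of signal that spike and change point analysis can then pick up.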

Learn more about the logging journey and how Elastic supports it.

A vision for the future of observability

Elastic will continue to deliver new innovations and releases of its full-stack observability solution to help SRE teams manage complex hybrid and multi-cloud environments with actionable insights. Elastic's investment in AI and machine learning, together with a unified, open, and flexible platform, continues to meet customer needs and helps transform the future of observability. Learn more about our vision in this conversation with Kelly Fitzpatrick, Senior Analyst at RedMonk.

The release and timing of any features or functionality described in this post remain at Elastic's sole discretion. Any features or functionality not currently available may not be delivered on time or at all.

In this blog post, we may have used or referred to third-party generative AI tools, which are owned and operated by their respective owners. Elastic does not have any control over the third-party tools, and we have no responsibility or liability for their content, operation, or use, nor for any loss or damage that may arise from your use of such tools. Please exercise caution when using AI tools with personal, sensitive, or confidential information. Any data you submit may be used for AI training or other purposes. There is no guarantee that information you provide will be kept secure or confidential. You should familiarize yourself with the privacy practices and terms of use of any generative AI tools prior to use.

Elastic, Elasticsearch, ESRE, Elasticsearch Relevance Engine, and associated marks are trademarks or registered trademarks of Elastic N.V. in the United States and other countries. All other trademarks are registered trademarks of their respective owners.