Context-aware insights with Elastic AI Assistant for Observability

Elastic® is extending Elastic AI Assistant, the open generative AI assistant powered by the Elasticsearch Relevance Engine™ (ESRE), to improve observability analysis and empower users of all skill levels. Elastic AI Assistant (in technical preview for Observability) transforms problem identification and resolution and eliminates manual data hunting across silos through an interactive assistant that gives SREs context-aware information.

Elastic AI Assistant improves the understanding of application errors, the interpretation of log messages, alert analysis, and suggestions for optimal code efficiency. In addition, its interactive chat interface lets SREs chat about and visualize all relevant telemetry data cohesively in one place, while also drawing on private data and runbooks for additional context.

While AI Assistant is configured to connect to the LLM of your choice, such as OpenAI or Azure OpenAI, Elastic also lets users provide private information to AI Assistant. Private information can include items such as runbooks, a history of past incidents, case history, and more. Using an inference processor powered by the Elastic Learned Sparse EncodeR (ELSER), the assistant gets access to the most relevant data for answering specific questions or completing tasks.
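To make the ELSER retrieval step more concrete, here is a minimal sketch of the kind of search body that could be used to find the private documents most relevant to a question. It assumes the documents were already enriched by an ELSER inference processor that wrote its tokens to a field named `ml.tokens`, and that the model ID is `.elser_model_1`. Both names are assumptions for illustration, and the post does not show how AI Assistant builds its queries internally.

```python
from typing import Any


def build_elser_query(question: str,
                      tokens_field: str = "ml.tokens",    # assumed field name
                      model_id: str = ".elser_model_1",   # assumed model ID
                      size: int = 3) -> dict[str, Any]:
    """Build a search body that ranks documents with a text_expansion query."""
    if not question.strip():
        raise ValueError("question must not be empty")
    return {
        "size": size,
        "query": {
            "text_expansion": {
                tokens_field: {
                    "model_id": model_id,
                    "model_text": question,
                }
            }
        },
    }


if __name__ == "__main__":
    import json

    # Print the body that would be sent to the _search endpoint of an
    # index holding runbooks or incident history (index name not shown here).
    body = build_elser_query("How do we recover from a pgbench overload?")
    print(json.dumps(body, indent=2))
```

The top hits from such a query could then be passed to the LLM as extra context alongside the user's question.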

AI Assistant can learn and grow its knowledge base through continued use. SREs can teach the assistant about a specific problem so that it can provide support for that scenario in the future and help compose outage reports, update runbooks, and improve automated root cause analysis. By combining Elastic AI Assistant with machine learning capabilities, SREs can identify and resolve problems faster and more proactively, eliminating manual data retrieval across silos and effectively turbocharging AIOps.

En este blog, proporcionaremos una revisión de algunas de las características iniciales disponibles en la vista previa técnica en la versión 8.10:

- Capacidad de chat disponible en cualquier pantalla en Elastic

- Consultas de LLM específicas prediseñadas a fin de obtener más información contextual para lo siguiente:

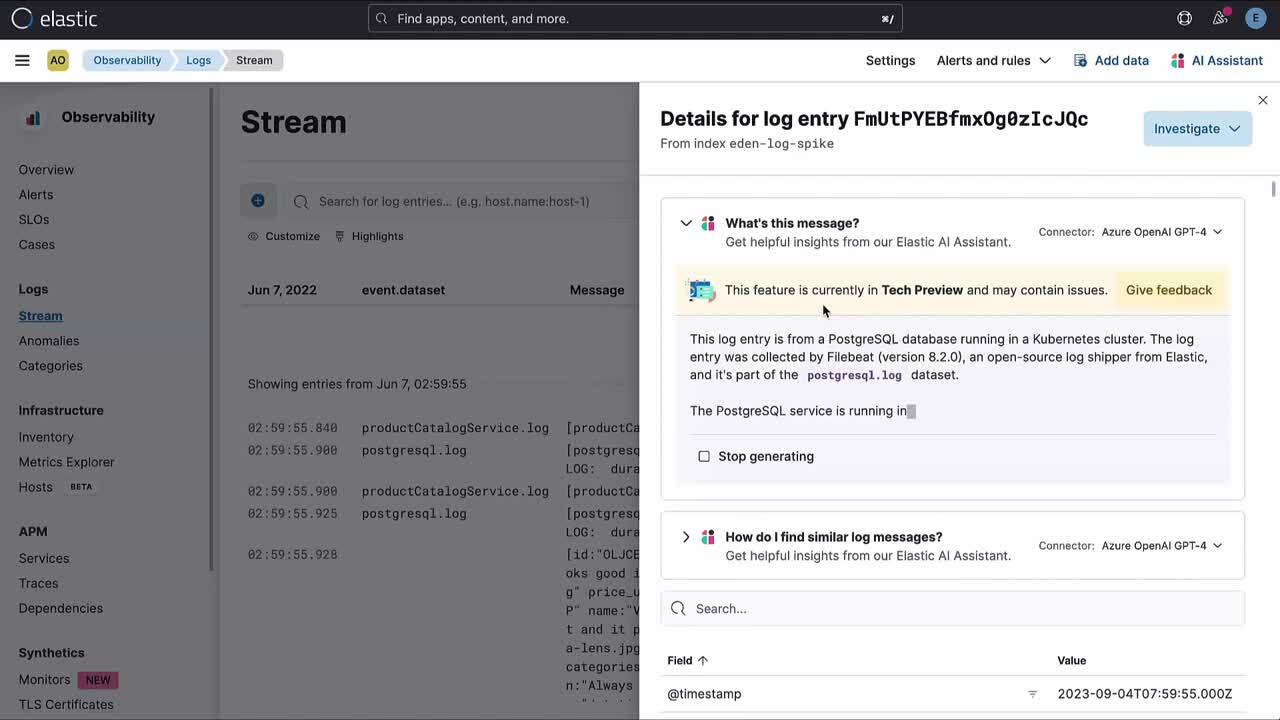

- Comprender el significado de message en un log

- Obtener mejores opciones para la gestión de errores de servicios que está gestionando Elastic APM

- Identificar más contexto y cómo gestionarlo potencialmente para las alertas de logs

- Comprender cómo optimizar el código en Universal Profiling

- Comprender qué son los procesos específicos en los hosts que se monitorean en Elastic

You can also take a look at the following video, which walks through these features in this particular scenario.

Elastic AI Assistant for Observability

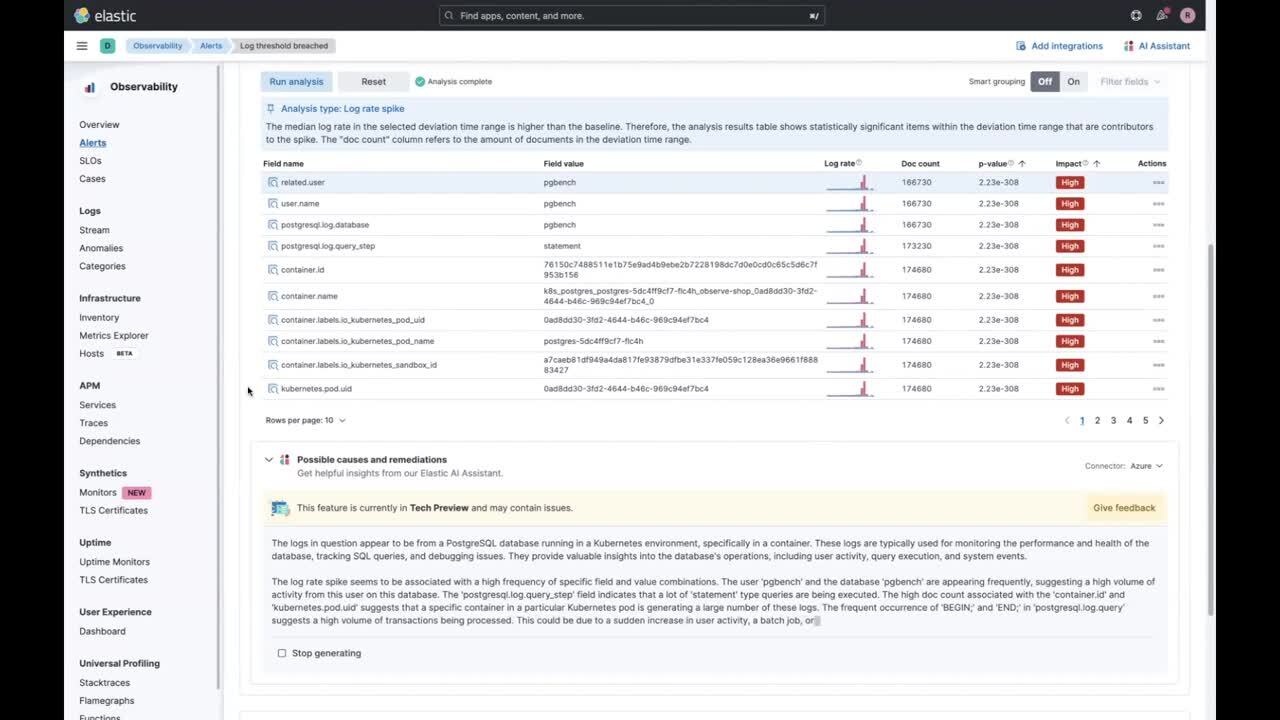

As an SRE, you might receive an alert about a threshold being exceeded for the number of log entries. In Elastic Observability, you not only receive the alert, but Elastic Observability also adds a real-time analysis of the relevant log spike to help you better understand the problem.

Using AI Assistant to analyze a problem

However, as an SRE you don't necessarily have in-depth knowledge of every system you are responsible for, and you need more help interpreting the log spike analysis. This is where AI Assistant can help.

Elastic prebuilds a prompt that is sent to your configured LLM, and you get not only a description and context of the problem but also some recommendations on how to proceed.
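As a rough illustration of what a prebuilt prompt could look like on the wire, the sketch below assembles a request body in the `model`/`messages` shape used by chat completions endpoints. The function name, the prompt wording, and the choice of inputs are all hypothetical. The actual prompt Elastic sends is not shown in this post.

```python
from typing import Any


def build_log_spike_request(alert_reason: str,
                            sample_logs: list[str],
                            model: str = "gpt-4") -> dict[str, Any]:
    """Assemble a chat-completions style request body for a log spike alert."""
    # Hypothetical instructions; the real prebuilt prompt is not published here.
    system_prompt = (
        "You are helping an SRE investigate a log rate spike. "
        "Describe the likely problem, give context, and recommend next steps."
    )
    # Turn the sample log messages into a simple bulleted list for the model.
    log_lines = "\n".join(f"- {line}" for line in sample_logs)
    user_prompt = f"Alert: {alert_reason}\nSample log messages:\n{log_lines}"
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
    }


if __name__ == "__main__":
    import json

    request = build_log_spike_request(
        "Log entry count exceeded the configured threshold",
        ["pgbench: connection to server failed", "too many clients already"],
    )
    print(json.dumps(request, indent=2))
```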

Additionally, you can start a chat with AI Assistant and dig deeper into your investigation.

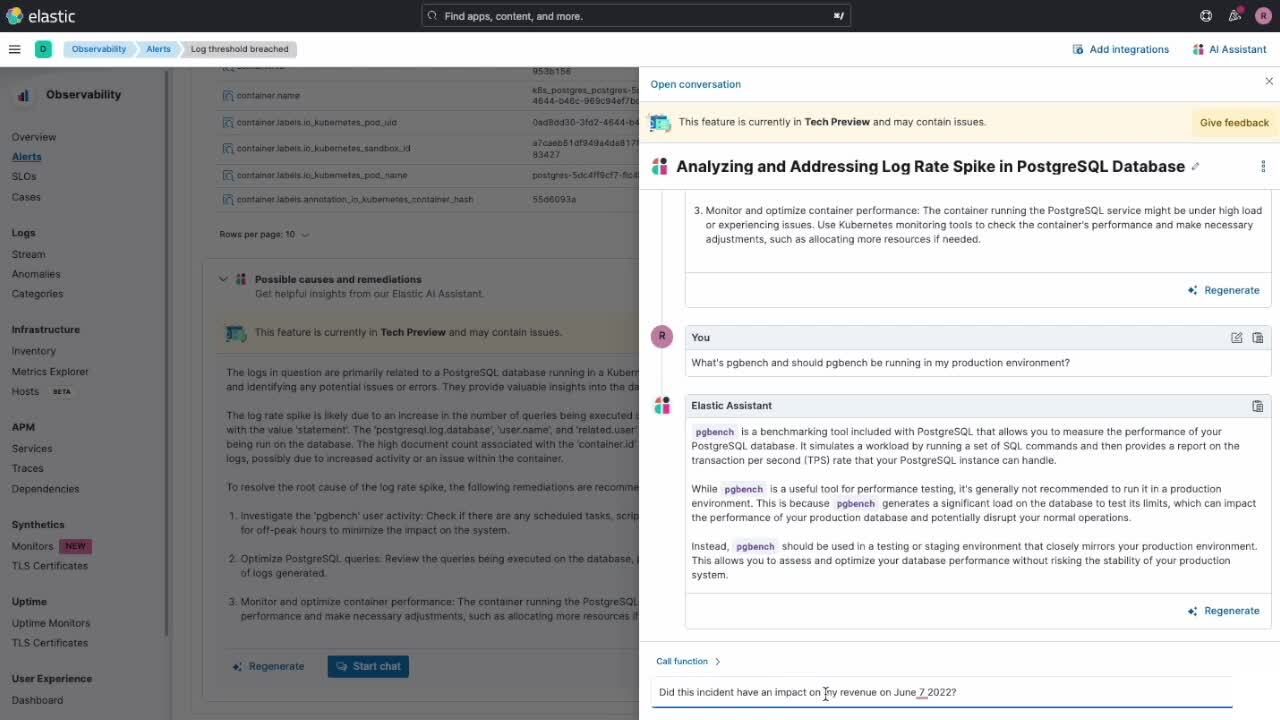

As you saw in the video above, not only can you learn more about the "pgbench" problem, but you can also investigate how it impacts your business by pulling in internal organizational information. In this case, we learned the following:

- How the significant change in transaction throughput can affect our revenue

- How revenue dropped when this log spike occurred

Both pieces of information were obtained through ELSER, which helps retrieve private organizational data to answer specific questions. This is data that the LLMs will never be trained on.

AI Assistant chat interface

So what can you achieve with the new AI Assistant chat interface? The chat capability lets you hold a session or conversation with Elastic AI Assistant, which enables you to do the following:

- Use a natural language interface, such as "Are there any alerts related to this service today?" or "Can you explain what these alerts are?", as part of problem determination and root cause analysis

- Get conclusions and context, along with suggested recommendations and next steps, drawn from your own internal private data (powered by ELSER) and from information available in the connected LLM

- Analyze search responses and the output of analysis performed by Elastic AI Assistant

- Recall and summarize information throughout the conversation

- Generate Lens visualizations through the conversation

- Execute Kibana® and Elasticsearch® APIs on behalf of the user through the chat interface

- Perform root cause analysis using specific APM functions such as get_apm_timeseries, get_apm_service_summary, get_apm_error_document, get_apm_correlations, get_apm_downstream_dependencies, etc.
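The APM functions in the last item rely on LLM function calling: the model replies with the name of a function and a JSON string of arguments, and the application runs that function and returns the result to the model. The sketch below shows this dispatch pattern only. The two handlers are hypothetical stubs that reuse names from the list above, and the `service_name` parameter is an assumption, not the real signature used by Elastic.

```python
import json
from typing import Any, Callable


# Hypothetical stand-ins for real APM lookups; they return canned data only.
def get_apm_service_summary(service_name: str) -> dict[str, Any]:
    return {"service": service_name, "status": "stub"}


def get_apm_downstream_dependencies(service_name: str) -> dict[str, Any]:
    return {"service": service_name, "dependencies": []}


# Registry mapping the function names offered to the LLM to local handlers.
REGISTRY: dict[str, Callable[..., dict[str, Any]]] = {
    "get_apm_service_summary": get_apm_service_summary,
    "get_apm_downstream_dependencies": get_apm_downstream_dependencies,
}


def dispatch_function_call(function_call: dict[str, str]) -> dict[str, Any]:
    """Run the handler named in an LLM function call and return its result."""
    name = function_call["name"]
    handler = REGISTRY.get(name)
    if handler is None:
        raise KeyError(f"unknown function: {name}")
    # The arguments arrive as a JSON-encoded string, so decode them first.
    arguments = json.loads(function_call.get("arguments") or "{}")
    return handler(**arguments)


if __name__ == "__main__":
    call = {
        "name": "get_apm_service_summary",
        "arguments": json.dumps({"service_name": "checkout"}),
    }
    print(dispatch_function_call(call))
```

Keeping an explicit registry means the model can only trigger functions the application has deliberately exposed, and unknown names fail loudly instead of being silently ignored.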

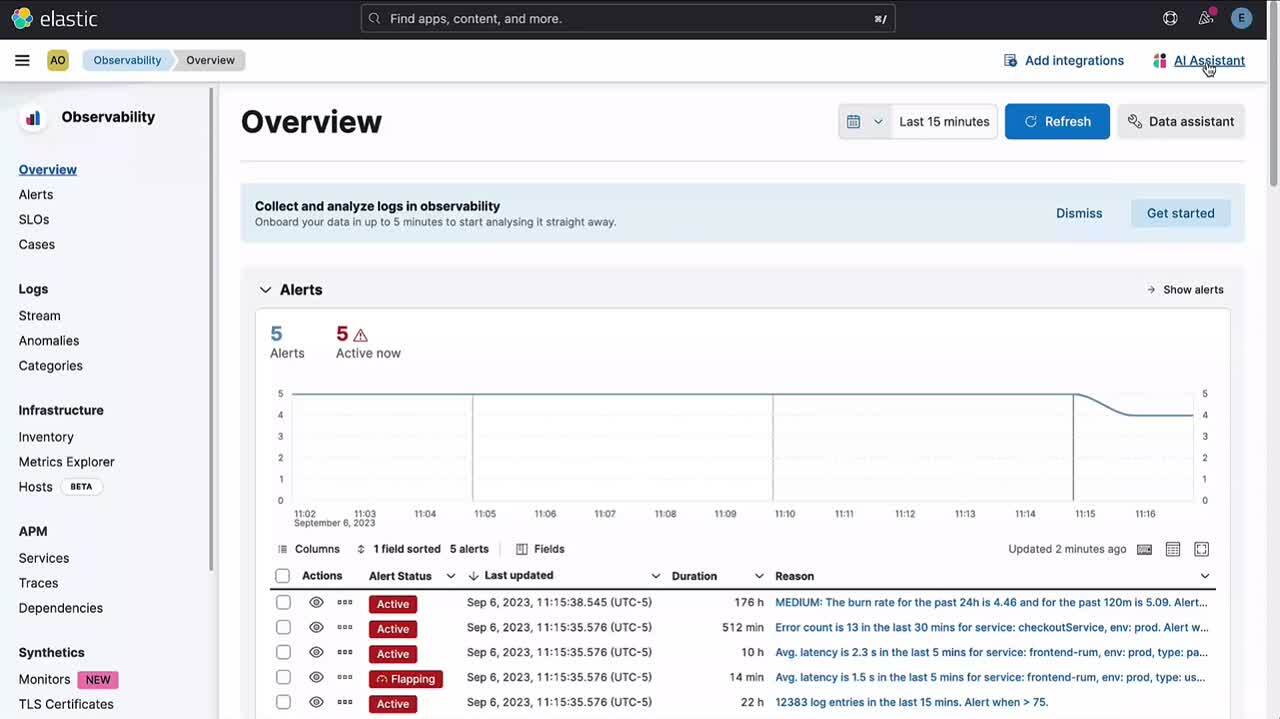

Other places to access AI Assistant

The chat capability in Elastic AI Assistant for Observability will initially be accessible from several locations in Elastic Observability 8.10, and additional access points will be added in future releases.

- All Observability applications have a button in the top action menu to open AI Assistant and start a conversation.

- Users can access existing conversations and create new ones by clicking the Go to conversations link in AI Assistant.

The new chat capability in Elastic AI Assistant for Observability builds on the capabilities introduced in Elastic Observability 8.9 by letting you start a conversation about the information Elastic AI Assistant already provides in APM, logs, hosts, alerts, and profiling.

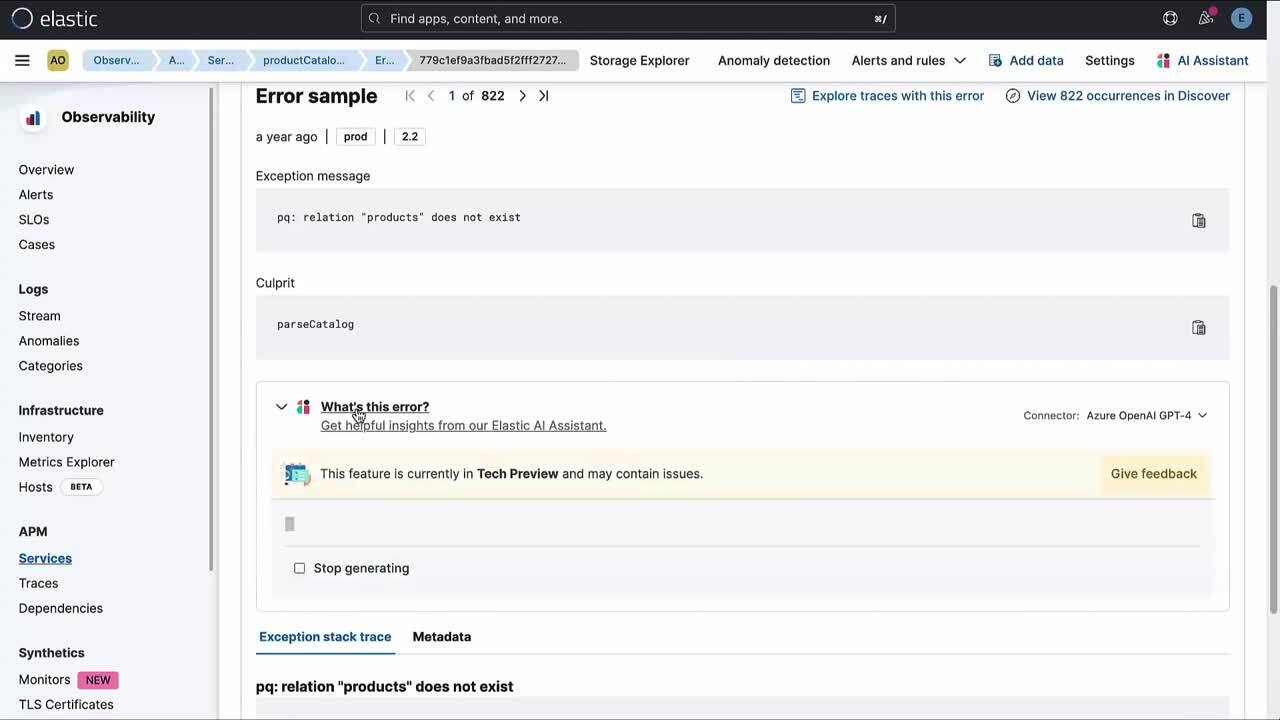

For example:

- In APM, it explains the meaning of a specific error or exception and offers common causes and possible impacts. You can also investigate further through a conversation.

- In logs, AI Assistant takes a log entry and helps you understand the message with contextual information. You can also investigate further through a conversation.

Configuring AI Assistant

You can find details on how to configure Elastic AI Assistant in the Elastic documentation.

The configuration is connector based and initially supports OpenAI and Azure OpenAI. Note that GPT-4 is recommended and that support for function calling is required. If you use OpenAI directly, you get these capabilities automatically. If you use the Azure version, make sure to deploy one of the more recent models as described in the Azure documentation.

Additionally, you can configure it in YAML as a preconfigured connector:

    xpack.actions.preconfigured:
      # Connector for the OpenAI hosted API
      open-ai:
        actionTypeId: .gen-ai
        name: OpenAI
        config:
          apiUrl: https://api.openai.com/v1/chat/completions
          apiProvider: OpenAI
        secrets:
          apiKey: <myApiKey>
      # Connector for an Azure OpenAI deployment
      azure-open-ai:
        actionTypeId: .gen-ai
        name: Azure OpenAI
        config:
          apiUrl: https://<resourceName>.openai.azure.com/openai/deployments/<deploymentName>/chat/completions?api-version=<apiVersion>
          apiProvider: Azure OpenAI
        secrets:
          apiKey: <myApiKey>

Elastic AI Assistant for Observability is in technical preview and is available only to users with an Enterprise license. If you are not an Elastic Enterprise user, upgrade your license to get access to Elastic AI Assistant for Observability.
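Returning to the Azure OpenAI connector above, its `apiUrl` is assembled from three placeholders: `<resourceName>`, `<deploymentName>`, and `<apiVersion>`. The helper below is a small sketch of how that URL could be built and checked before it is pasted into the configuration. The function name and the sample values are hypothetical and should be replaced with the values from your own Azure resource.

```python
from urllib.parse import quote, urlencode


def build_azure_openai_url(resource_name: str,
                           deployment_name: str,
                           api_version: str) -> str:
    """Fill in the Azure OpenAI chat completions URL template."""
    for label, value in (("resource_name", resource_name),
                         ("deployment_name", deployment_name),
                         ("api_version", api_version)):
        if not value:
            raise ValueError(f"{label} must not be empty")
    # Escape the deployment name so it is safe to use as a path segment.
    path = f"/openai/deployments/{quote(deployment_name, safe='')}/chat/completions"
    query = urlencode({"api-version": api_version})
    return f"https://{resource_name}.openai.azure.com{path}?{query}"


if __name__ == "__main__":
    # Sample values for illustration only.
    print(build_azure_openai_url("my-resource", "my-gpt4", "2023-07-01-preview"))
```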

Your feedback is important to us. Use the feedback form available in Elastic AI Assistant, the discussion forums (tagged with AI Assistant or AI operations), or the Elastic Community Slack (#observability-ai-assistant).

Finally, we want to stress that this feature is still in technical preview. Given the nature of large language models, the assistant may occasionally take unexpected actions. It may not react the same way even when the situation is identical. Sometimes it will also tell you it cannot help, even though it should be able to answer the question you asked.

We are working to make the assistant more resilient to failures, more predictable, and better overall, so be sure to keep an eye on future releases.

Try it out

Read about these capabilities and more on our Elastic AI Assistant page or in the release notes.

Existing Elastic Cloud customers can access many of these features directly from the Elastic Cloud console. Not taking advantage of Elastic on the cloud? Start a free trial.

The release and timing of any features or functionality described in this blog remain at Elastic's sole discretion. Any features or functionality not currently available may not be delivered on time or at all.

In this blog, we may have used or referred to third-party generative AI tools, which are owned and operated by their respective owners. Elastic does not have any control over the third-party tools, and we have no responsibility or liability for their content, operation, or use, nor for any loss or damage that may arise from your use of such tools. Exercise caution when using AI tools with personal, sensitive, or confidential information. Any data you submit may be used for AI training or other purposes. There is no guarantee that information you provide will be kept secure or confidential. You should familiarize yourself with the privacy practices and terms of use of any generative AI tools prior to use.

Elastic, Elasticsearch, ESRE, Elasticsearch Relevance Engine, and associated marks are trademarks, logos, or registered trademarks of Elasticsearch N.V. in the United States and other countries. All other company and product names are trademarks, logos, or registered trademarks of their respective owners.