Elastic and NVIDIA help you deploy AI apps faster without draining IT infrastructure

Remove bottlenecks. Scale smarter. Control costs. With Elastic and NVIDIA, you get the power of a GPU-accelerated vector database for high-performance AI.

Unleash AI performance with GPU-accelerated vector search

Elasticsearch is teaming up with NVIDIA to bring GPU power to your search stack. By leveraging the NVIDIA cuVS library and its CAGRA algorithm, Elasticsearch taps into massive GPU parallelism to deliver fast, ultra-low-latency indexing for your most demanding retrieval augmented generation (RAG) pipelines and AI applications.

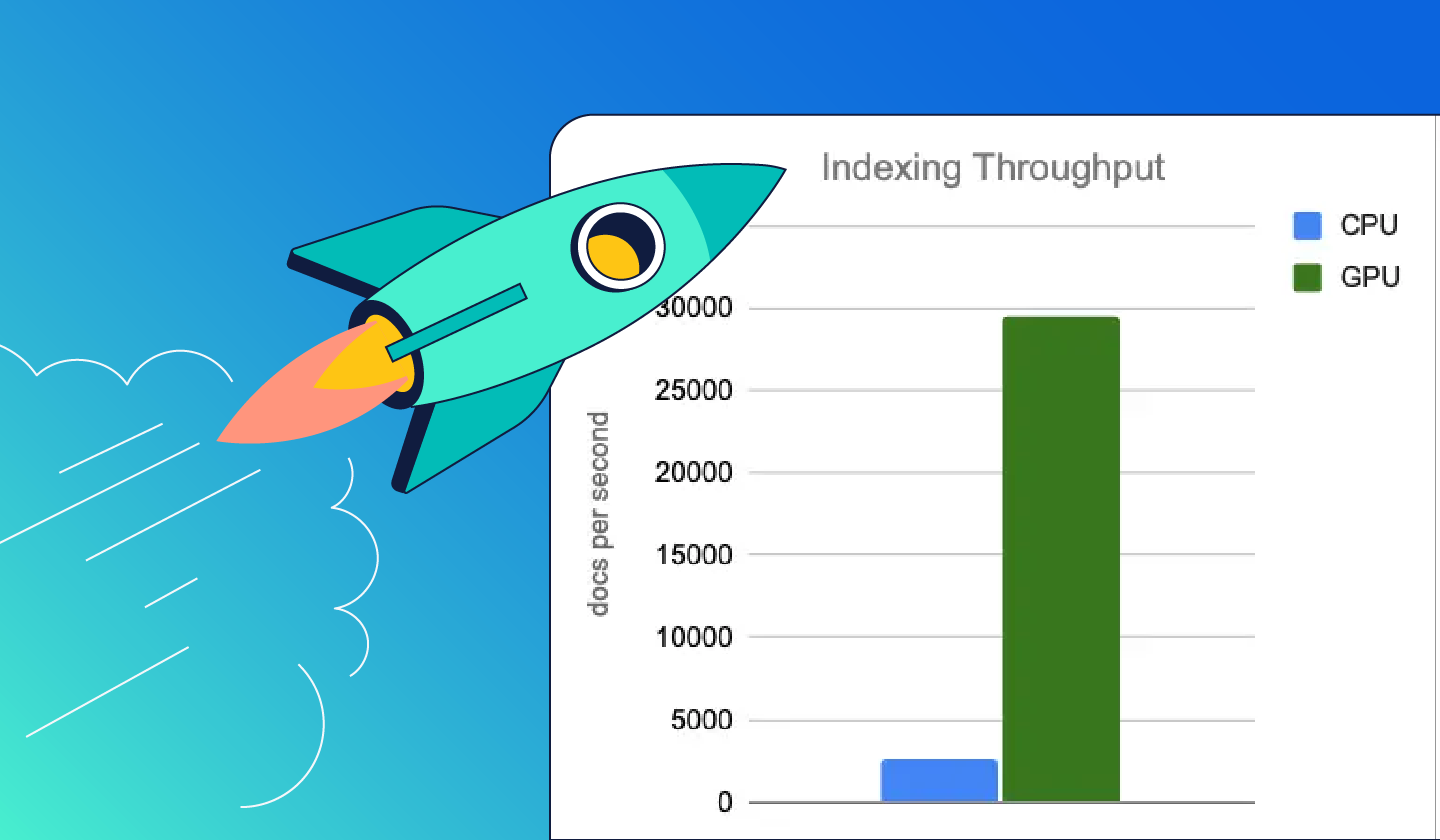

Index on GPUs for maximum throughput. Search on CPUs for cost efficiency. Optimize for both performance and price.

Frequently asked questions

Is GPU-accelerated vector indexing for Elasticsearch available as open source?

Yes. The code implementing GPU-accelerated vector indexing is open source under a dual license (AGPL and ELv2). Elasticsearch exposes this functionality through a plugin that is licensed under ELv2 and available with the Enterprise subscription tier. NVIDIA cuVS, the library that powers the GPU indexing features in Elasticsearch, is also open source under the Apache 2.0 license.

What should I do if I run into issues or have suggestions?

In case of any issues, try our troubleshooting instructions. If your problem persists, create an issue on the Elasticsearch GitHub if it's an Elasticsearch-specific problem. If the problem pertains to NVIDIA cuVS and its dependencies, open an issue on the NVIDIA cuVS GitHub. If you have an Enterprise subscription, reach out to us via Elastic customer support channels for resolution. Use the same channels for suggestions and feature requests.

How do I install NVIDIA cuVS on an Elasticsearch data node to enable GPU vector indexing?

You can install NVIDIA cuVS as a precompiled tarball from NVIDIA channels (typical for database users) or through the pip or conda package managers (typical for data science users). You can also build cuVS from source and maintain the binary yourself. For details, see the NVIDIA cuVS installation page. If you have an NVIDIA AI Enterprise (NVAIE) subscription for your GPUs, a supported cuVS tarball with guaranteed CVE fixes will be available in the NGC catalog in a few months. Contact the NVAIE support team or your NVIDIA sales representative for more information.

Can vector indexing scale across multiple GPUs across one or multiple servers?

Yes. Use a container orchestration system such as Kubernetes to map each Elasticsearch process to one available GPU; each Elasticsearch process should have exclusive use of a single GPU. Scaling to multiple GPUs, whether on one server or many, then becomes a matter of scaling the number of nodes in the cluster.
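For hosts managed by hand rather than by Kubernetes, the one-process-per-GPU rule can be sketched in Python. This is a minimal illustration, not an Elastic tool: it assumes each Elasticsearch node is started with a CUDA_VISIBLE_DEVICES environment variable (a standard CUDA mechanism that restricts which devices a process can see), and the helper name assign_gpus and the node names are hypothetical.

```python
from typing import Dict, List


def assign_gpus(node_names: List[str], gpu_ids: List[int]) -> Dict[str, Dict[str, str]]:
    """Give each Elasticsearch node process exclusive use of one GPU.

    Hypothetical helper: returns a per-node environment override that pins
    the process to a single device via CUDA_VISIBLE_DEVICES. Raises if there
    are more nodes than GPUs or if a GPU id repeats, because two processes
    must never share one device.
    """
    if len(node_names) > len(gpu_ids):
        raise ValueError(
            f"{len(node_names)} nodes but only {len(gpu_ids)} GPUs; "
            "each node needs its own GPU"
        )
    if len(set(gpu_ids)) != len(gpu_ids):
        raise ValueError("duplicate GPU ids would let two nodes share a device")
    # zip stops at the shorter list, so any extra GPUs simply stay unassigned.
    return {
        name: {"CUDA_VISIBLE_DEVICES": str(gpu)}
        for name, gpu in zip(node_names, gpu_ids)
    }


if __name__ == "__main__":
    plan = assign_gpus(["es-data-0", "es-data-1"], [0, 1])
    for node, env in plan.items():
        print(node, env)
```

On Kubernetes, the NVIDIA device plugin usually performs this pinning for you when each Elasticsearch pod requests a limit of nvidia.com/gpu: 1, so a helper like this is only relevant for hand-managed hosts.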

Is the vector index size limited by the available GPU memory?

No. We support building indices larger than GPU memory (a.k.a. out-of-core indexing) by building them in batches. Overall, GPU indexing introduces no limitations beyond those already present in CPU-based indexing.
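The batching idea can be illustrated with back-of-the-envelope arithmetic. The Python sketch below is a simplified, hypothetical model, not Elasticsearch's actual batching logic: it counts only the raw float32 vectors (4 bytes per dimension) against an assumed GPU memory budget and ignores the extra working memory that the graph build needs.

```python
from typing import Tuple


def plan_batches(num_vectors: int, dims: int, gpu_budget_bytes: int,
                 bytes_per_component: int = 4) -> Tuple[int, int]:
    """Return (vectors_per_batch, number_of_batches) for an out-of-core build.

    Hypothetical, simplified model: only the raw vectors (float32 by default,
    4 bytes per dimension) are counted against gpu_budget_bytes. A real build
    also needs working memory for the graph, so the budget should be the
    share of GPU memory you can spare for input vectors.
    """
    bytes_per_vector = dims * bytes_per_component
    vectors_per_batch = gpu_budget_bytes // bytes_per_vector
    if vectors_per_batch == 0:
        raise ValueError("GPU budget is too small to hold a single vector")
    # Integer ceiling division: the last batch may be partially filled.
    num_batches = (num_vectors + vectors_per_batch - 1) // vectors_per_batch
    return vectors_per_batch, num_batches


if __name__ == "__main__":
    print(plan_batches(10_000_000, 768, 8 * 1024**3))
```

For example, plan_batches(10_000_000, 768, 8 * 1024**3) returns (2796202, 4): each 768-dimensional float32 vector takes 3,072 bytes, so an 8 GiB budget holds about 2.8 million vectors per batch, and 10 million vectors need four batches.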

Is GPU acceleration available for vector search?

No. Only HNSW index construction is GPU-accelerated today. The resulting HNSW graph is loaded into host (CPU) memory, and vector retrieval runs on the CPU. This split plays to the GPU's greatest strength, bulk vector operations such as graph construction, while keeping search on cost-efficient CPUs. We will consider extending GPU use further as the technology and use cases evolve.

How do I evaluate performance and cost benefits of GPU vector indexing?

You can use Elastic's Rally benchmarking tool to evaluate the impact of GPUs on indexing throughput, force merge latency, and vector search accuracy, latency, and throughput. See the instructions and best practices for running end-to-end vector indexing benchmarks on GPUs with Rally.
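Once you have benchmark results, comparing cost comes down to simple arithmetic. The Python sketch below assumes you already have an indexing throughput figure (documents per second) from a Rally run on each hardware setup and know the hourly price of that hardware; the class and function names and the sample numbers are hypothetical placeholders, not measured results.

```python
from dataclasses import dataclass


@dataclass
class IndexingRun:
    """Measured indexing throughput and hourly price for one hardware setup."""
    name: str
    docs_per_second: float  # indexing throughput taken from a benchmark run
    price_per_hour: float   # hourly price of the hardware used for that run


def cost_per_million_docs(run: IndexingRun) -> float:
    """Cost (in price_per_hour's currency) to index one million documents."""
    docs_per_hour = run.docs_per_second * 3600
    return run.price_per_hour / docs_per_hour * 1_000_000


def compare(cpu: IndexingRun, gpu: IndexingRun) -> dict:
    """Summarize the throughput gain and the per-document cost of each setup."""
    return {
        "throughput_speedup": gpu.docs_per_second / cpu.docs_per_second,
        "cpu_cost_per_million_docs": cost_per_million_docs(cpu),
        "gpu_cost_per_million_docs": cost_per_million_docs(gpu),
    }


if __name__ == "__main__":
    # Placeholder inputs: replace them with your own Rally results and prices.
    cpu_run = IndexingRun("cpu-only", docs_per_second=1_000, price_per_hour=3.60)
    gpu_run = IndexingRun("gpu-indexing", docs_per_second=5_000, price_per_hour=7.20)
    for key, value in compare(cpu_run, gpu_run).items():
        print(f"{key}: {value:.2f}")
```

A setup that costs more per hour can still be cheaper per indexed document if its throughput rises proportionally more, which is why cost per million documents is a more useful comparison than hourly price alone.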

Which element and index types are supported?

Elasticsearch supports several indexing parameters for dense vector fields, but GPU-accelerated indexing supports only a subset. For the index_options.type parameter, both hnsw and int8_hnsw are supported. For element_type, only float is supported. No other index or element types are supported at this time.
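To check a mapping against these constraints before creating an index, a small Python helper is enough. The field parameters used here (type, dims, index, similarity, element_type, index_options.type) are standard Elasticsearch dense_vector mapping options; the field name embedding, the helper names, and the choice of cosine similarity are illustrative assumptions. The check treats a missing index_options.type as unsupported because the default index type can vary across Elasticsearch versions, so setting it explicitly is the safer choice.

```python
from typing import Any, Dict

# Combinations listed above as supported for GPU-accelerated indexing.
SUPPORTED_INDEX_TYPES = {"hnsw", "int8_hnsw"}
SUPPORTED_ELEMENT_TYPES = {"float"}


def dense_vector_mapping(dims: int, index_type: str = "int8_hnsw") -> Dict[str, Any]:
    """Build an index-creation body with one dense_vector field.

    The field name "embedding" and cosine similarity are illustrative choices.
    """
    return {
        "mappings": {
            "properties": {
                "embedding": {
                    "type": "dense_vector",
                    "dims": dims,
                    "index": True,
                    "similarity": "cosine",
                    "element_type": "float",
                    "index_options": {"type": index_type},
                }
            }
        }
    }


def is_gpu_indexable(field: Dict[str, Any]) -> bool:
    """Check a dense_vector field definition against the supported types.

    element_type defaults to float in Elasticsearch, but index_options.type
    must be set explicitly here, since its default is version-dependent.
    """
    element_type = field.get("element_type", "float")
    index_type = field.get("index_options", {}).get("type")
    return element_type in SUPPORTED_ELEMENT_TYPES and index_type in SUPPORTED_INDEX_TYPES


if __name__ == "__main__":
    body = dense_vector_mapping(768)
    print(is_gpu_indexable(body["mappings"]["properties"]["embedding"]))
```

The resulting body can be sent as the request body when creating an index; the helper only validates the two parameters this FAQ covers and does not check anything else about the mapping.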