Logstash 6.0.0 GA Released

We’re glad to announce that Logstash 6.0.0 has launched! Today marks the first day of 6.0’s inter-planetary mission of making life easier for systems administrators and engineers. Can’t wait another minute to try it? Head over to our downloads page and give it a shot! However, you may want to take a minute to read about breaking changes and release notes first. Read on for what's new in Logstash 6.0!

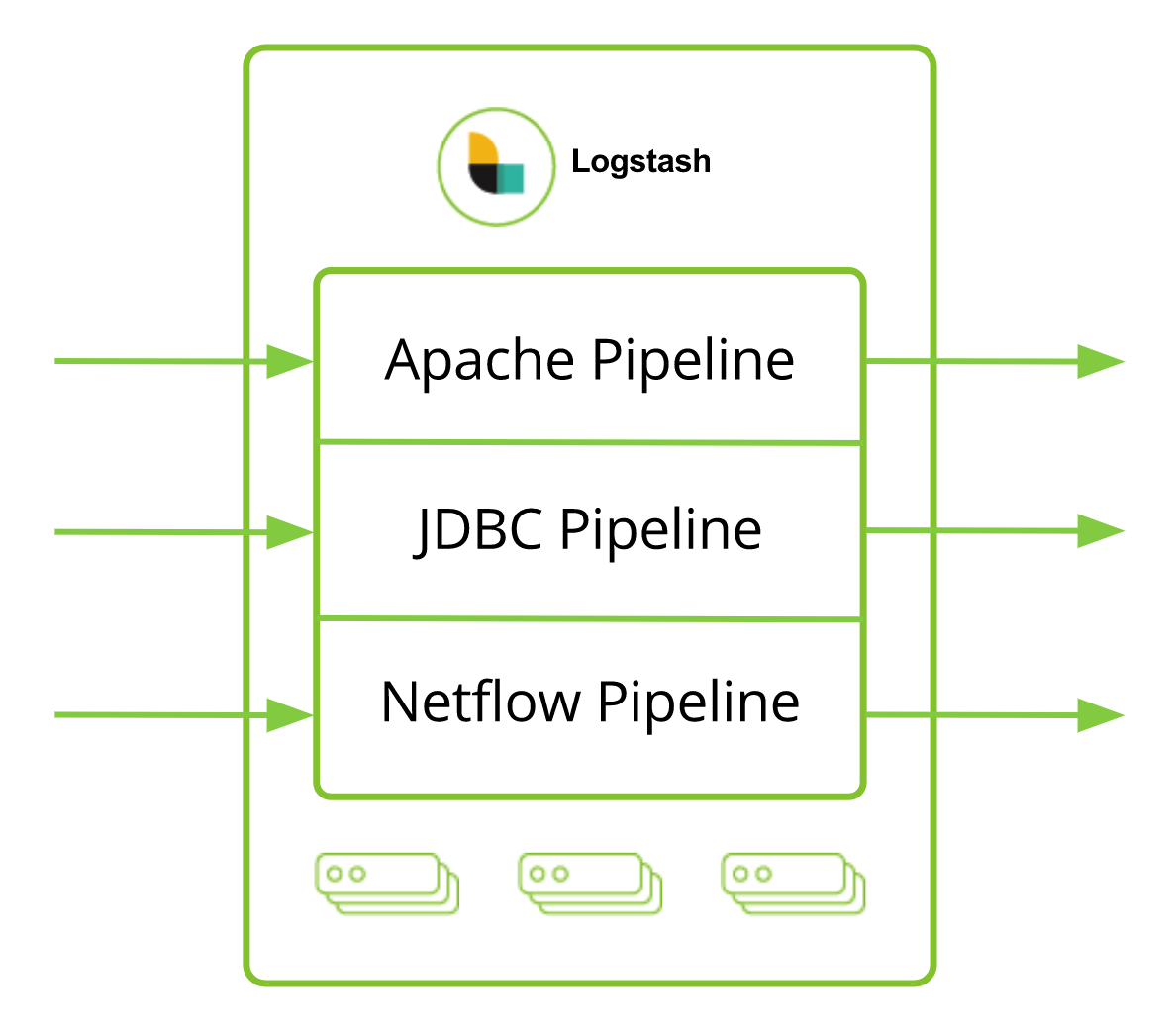

Streamline Processing with Multiple Pipelines

One pipeline no longer rules them all. Logstash 6.0 introduces the ability to run multiple pipelines concurrently for different use cases. The pipelines run together in the same instance, but with independent inputs, filters, and outputs, so you can isolate processing logic per data source. This keeps your pipeline logic focused and concise. Historically, many Logstash users have combined multiple use cases into a single pipeline, which required adding complex conditional logic. With multiple pipelines that’s no longer necessary; now you can organize your config more cleanly and execute it more efficiently by using a dedicated pipeline per use case.

To add to the fun, each pipeline also has its own independent settings and lifecycle, which you can tune to match the workload profile of that data source. For instance, you may want to allocate more pipeline worker threads for a high volume logging pipeline, and throttle back resources for a lower intensity local metrics pipeline. For more details on multiple pipelines, take a peek at the documentation and blog post!
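
As a rough sketch of the per-pipeline tuning described above, multiple pipelines are declared in a `pipelines.yml` file in the Logstash settings directory. The pipeline IDs, config paths, and worker counts here are made-up values for illustration, not recommendations:

```yaml
# pipelines.yml (illustrative values; IDs and paths are hypothetical)

# High volume logging pipeline: give it more worker threads.
- pipeline.id: app_logs
  path.config: "/etc/logstash/conf.d/app_logs.conf"
  pipeline.workers: 8

# Lower intensity local metrics pipeline: throttle resources back.
- pipeline.id: local_metrics
  path.config: "/etc/logstash/conf.d/local_metrics.conf"
  pipeline.workers: 1
```

Each entry points at its own config file, so the inputs, filters, and outputs for one data source stay separate from the others and no cross-use-case conditionals are needed.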

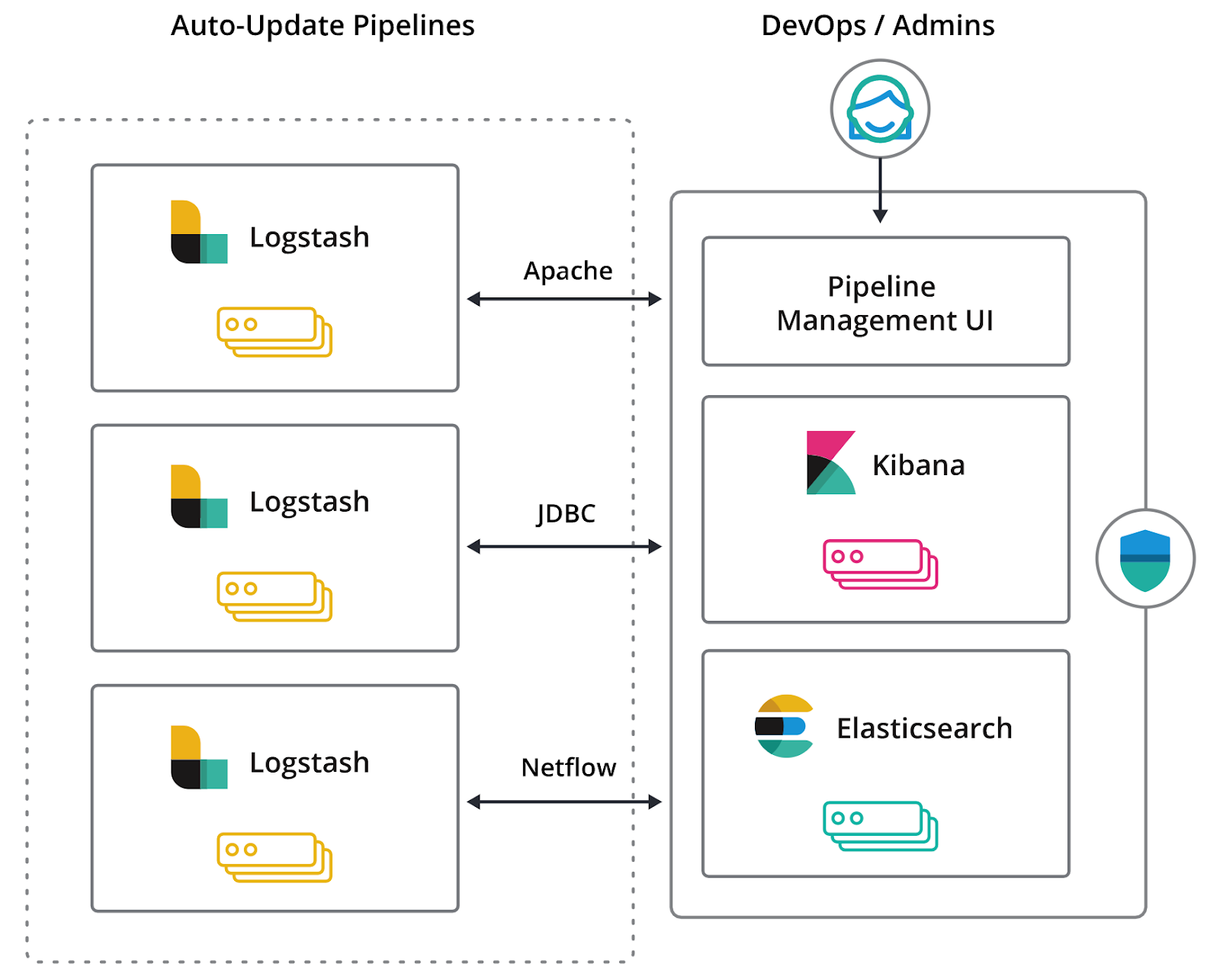

Manage Pipelines Centrally with the Elastic Stack

In the past, managing pipeline configurations meant either doing it by hand or using config management tools like Puppet or Chef to assist with operational automation. With Logstash 6.0, the centralized pipeline management feature now enables you to manage and automatically orchestrate your Logstash deployments directly with the Elastic Stack through the Kibana single pane of glass.

This feature brings a Pipeline Management UI to Kibana, which you can use to create, edit, and delete pipelines. Underneath this UI we use Elasticsearch to store your pipeline configurations. With a few simple settings, your Logstash nodes can be configured to watch for changes on these pipelines, letting you seamlessly push out pipeline changes without additional operational infrastructure beyond the Elastic Stack.
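
To give a feel for those "few simple settings", here is a minimal sketch of what a Logstash node's `logstash.yml` might contain. The setting names reflect our understanding of the 6.0 X-Pack management options and should be checked against the documentation for your version; the URL, credentials, pipeline IDs, and poll interval are placeholder assumptions:

```yaml
# logstash.yml (sketch; verify setting names against the X-Pack docs)

# Turn on centralized pipeline management for this node.
xpack.management.enabled: true

# Elasticsearch instance that stores the pipeline configurations
# (placeholder URL and credentials).
xpack.management.elasticsearch.url: "http://localhost:9200"
xpack.management.elasticsearch.username: "logstash_admin_user"
xpack.management.elasticsearch.password: "changeme"

# Pipelines this node should run, by the ID given in the Kibana UI
# (hypothetical IDs).
xpack.management.pipeline.id: ["app_logs", "local_metrics"]

# How often the node checks for pipeline changes (assumed value).
xpack.management.logstash.poll_interval: 5s
```

With settings along these lines, a pipeline edited in Kibana would be picked up by every node that lists its ID, without a separate deployment step.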

Centralized pipeline management is available as an X-Pack feature. To learn more, take a look at the documentation and the blog post!

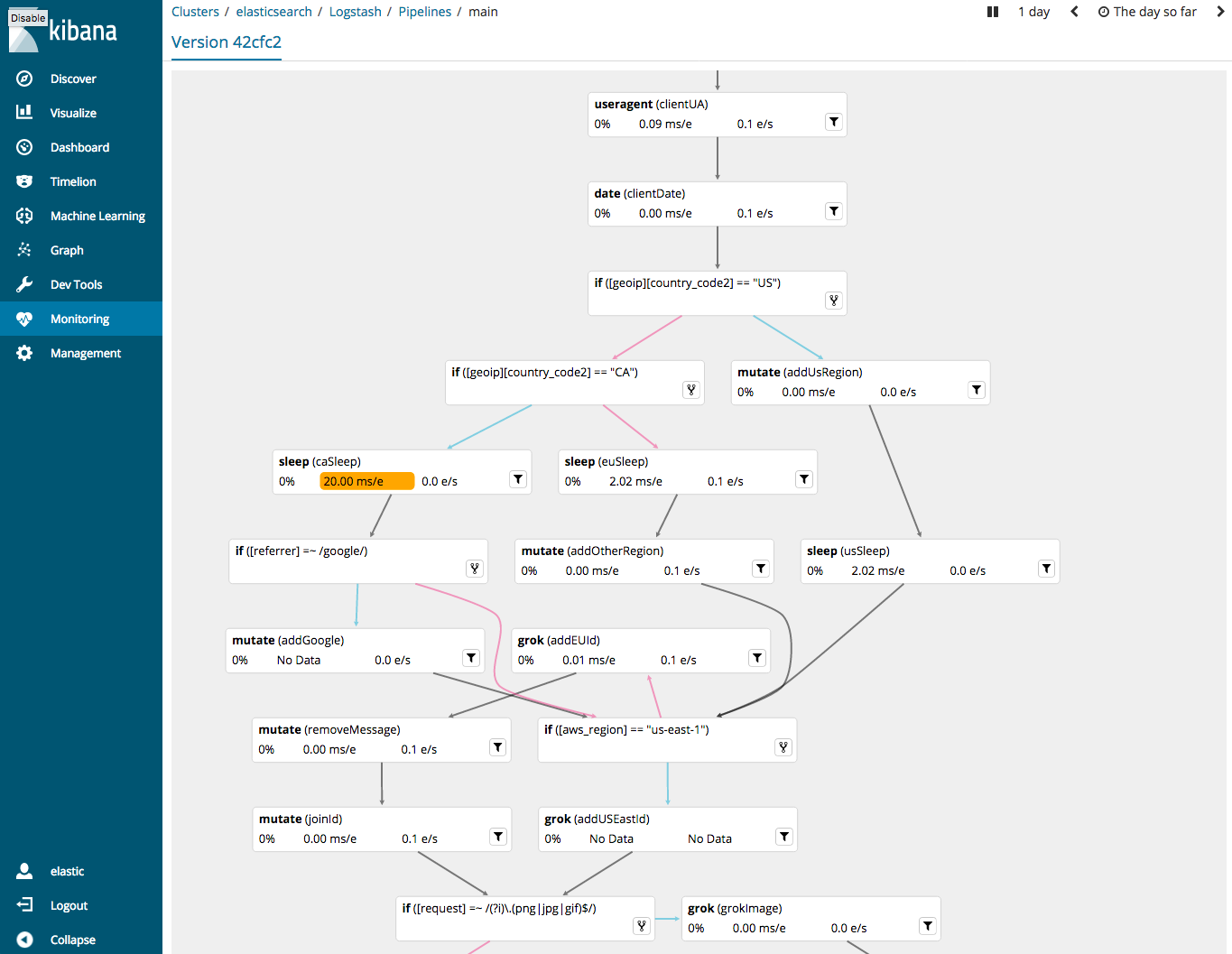

Visualize Pipeline Logic and Performance

We’re also proud to announce the long-awaited Pipeline Viewer in this 6.0 release, which is a welcome addition to the Logstash Monitoring UI. With this new tool you can both visualize your pipeline configuration and troubleshoot performance bottlenecks at the plugin level. The Pipeline Viewer displays your Logstash pipelines as a DAG (Directed Acyclic Graph). Overlaid on this DAG are relevant performance metrics for individual inputs, filters, and outputs. Potential performance problems are highlighted to let you quickly determine which portions of your pipeline may be bottlenecks.

Users should note that this is a beta feature and may be subject to change. One known issue is that very large pipelines do not render cleanly. This is an area of active work, so expect significant improvements as we move the feature toward general availability.

A Smooth Path from Ingest Node to Logstash

Some users enjoy the convenience of Elasticsearch Ingest Nodes when getting started with the Elastic Stack. However, at a certain level of complexity, Ingest Nodes may not have all the features required to solve your problem and a migration to Logstash may be in order. To ease these transitions we’ve created an Ingest pipeline conversion tool that ships with Logstash 6.0. The converter takes an Ingest Node pipeline as input and spits out the corresponding Logstash pipeline. See the blog post for more insight!

Now With JRuby 9k

We’ve spent a lot of time moving the Logstash project over to the latest major version of JRuby: JRuby 9000, which has support for modern Ruby syntax and enhanced internals more amenable to optimization. For plugin developers, this means that your old plugins will continue to work in all but the rarest of cases. This upgrade also means that you can use new Ruby features going forward, as well as Ruby libraries that are only compatible with JRuby 9k.