State of Observability in Financial Services 2026: From implementation to business impact


The demands on financial services companies are intensifying rapidly. They must not only deliver seamless system performance but also control costs, secure sensitive data, and maximize the value of their observability investments.

To navigate these converging pressures, leaders are evolving their approach to system monitoring and telemetry. The 2026 State of Observability in Financial Services research report reveals a fundamental shift in how organizations manage their digital infrastructure. Observability is no longer just a technical requirement for keeping applications running. It has matured into a foundational enterprise strategy that supports cybersecurity, regulatory compliance, and operational resilience.

This transformation requires companies to rethink how they collect, analyze, and act on telemetry data. The focus is shifting from simple metrics collection to extracting unified insights that inform high-stakes business decisions. Read on to discover how your peers are adapting to these changes, optimizing their investments, and preparing for the next wave of AI-driven innovation.

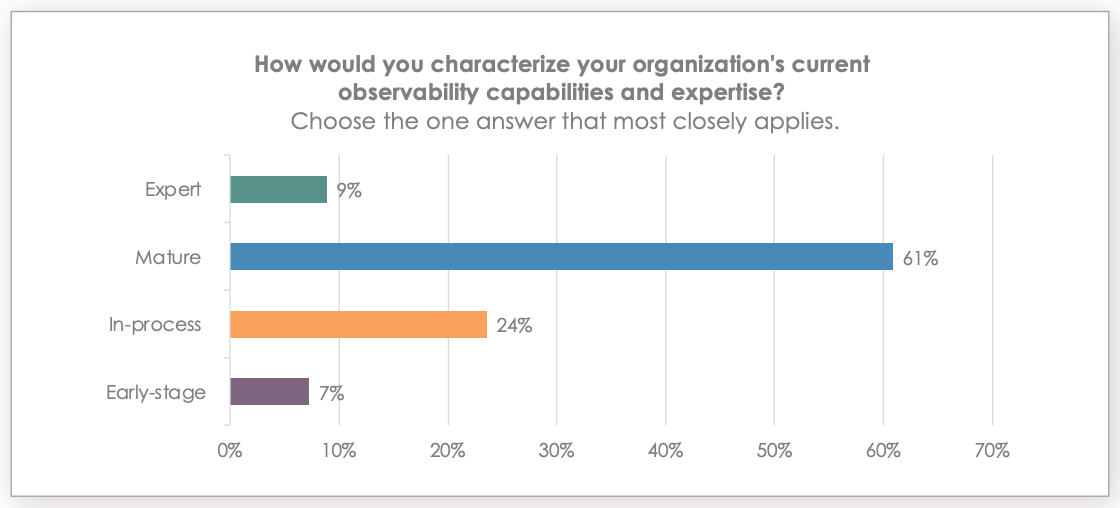

The maturity shift: 70% of teams report mature or expert observability practices

Financial services companies are making rapid advancements in their ability to monitor and understand complex systems. Currently, 70% of IT leaders in the financial sector classify their observability practices as mature or expert, which is a sharp increase from 45% just one year ago. This dramatic leap indicates that organizations are successfully moving past the initial hurdles of implementation and data collection.

Reaching this level of maturity means that technology leaders can now focus on extracting strategic value from their data. Organizations are shifting their attention from tracking basic operational metrics to understanding how system performance impacts overarching business goals. As a result, 89% of teams now use observability data to report directly on business impact.

This evolution has profound implications for chief technology officers and chief information officers. When observability practices mature, teams can correlate technical performance with critical business outcomes, such as transaction success rates and customer satisfaction. This alignment enables leaders to make faster, data-backed decisions that protect revenue streams and improve the overall digital experience.

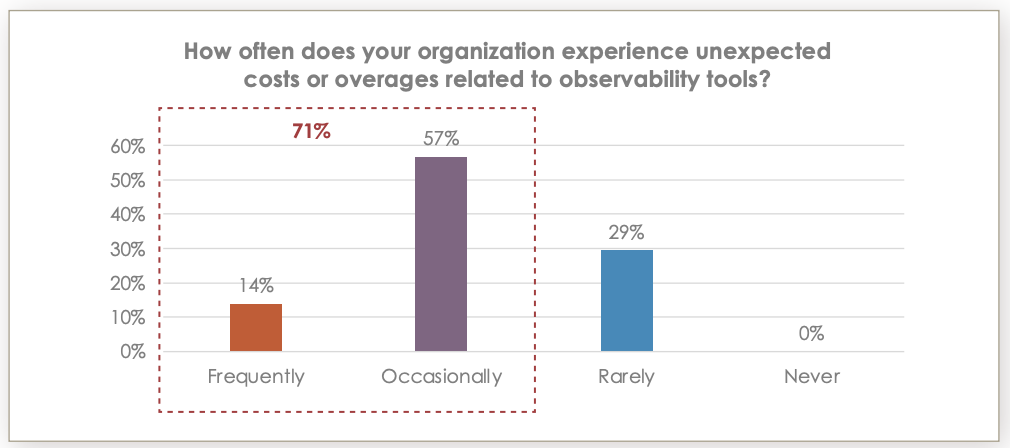

Under growing financial pressure, 99% of organizations are actively taking steps to reduce their observability costs. This near-universal focus on cost optimization reflects a broader mandate from executive leadership. Currently, 65% of teams report that their leadership increasingly requests detailed justification for observability expenses.

However, technology leaders are not simply slashing budgets at the expense of system visibility. Instead, 71% view observability as a prime opportunity to optimize their existing spending and extract more value. To achieve these efficiencies without compromising resilience, organizations are implementing several strategic measures:

They are consolidating fragmented tool sets to eliminate redundant licensing fees.

Teams are deploying data sampling techniques to manage the volume of ingested logs.

Organizations are routing lower-value telemetry data to cost-effective storage solutions.

Leaders are disabling nonessential data collectors in noncritical environments.
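As a rough illustration of two of these measures, sampling and tiered routing, here is a minimal Python sketch. The sample rates, the tier names (`hot` and `cold`), and the record fields (`id`, `level`) are assumptions made for the example, not figures from the report or settings from any particular platform.

```python
import hashlib

# Hypothetical sample rates per log level; real values depend on budget and risk.
SAMPLE_RATES = {"debug": 0.05, "info": 0.25, "warning": 1.0, "error": 1.0}
# Hypothetical rule: only warnings and errors go to fast, expensive storage.
HOT_LEVELS = {"warning", "error"}


def keep_record(record_id: str, level: str) -> bool:
    """Deterministically sample a record using a hash of its ID."""
    rate = SAMPLE_RATES.get(level, 1.0)  # unknown levels are always kept
    digest = hashlib.sha256(record_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a float in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


def storage_tier(level: str) -> str:
    """Route higher-value records to the hot tier and the rest to the cold tier."""
    return "hot" if level in HOT_LEVELS else "cold"


def process(records: list[dict]) -> dict[str, list[dict]]:
    """Sample records, then group the survivors by storage tier."""
    routed: dict[str, list[dict]] = {"hot": [], "cold": []}
    for record in records:
        if keep_record(record["id"], record["level"]):
            routed[storage_tier(record["level"])].append(record)
    return routed
```

Hashing the record ID rather than drawing a random number keeps the decision repeatable, so the same record is kept or dropped consistently wherever the rule is applied.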

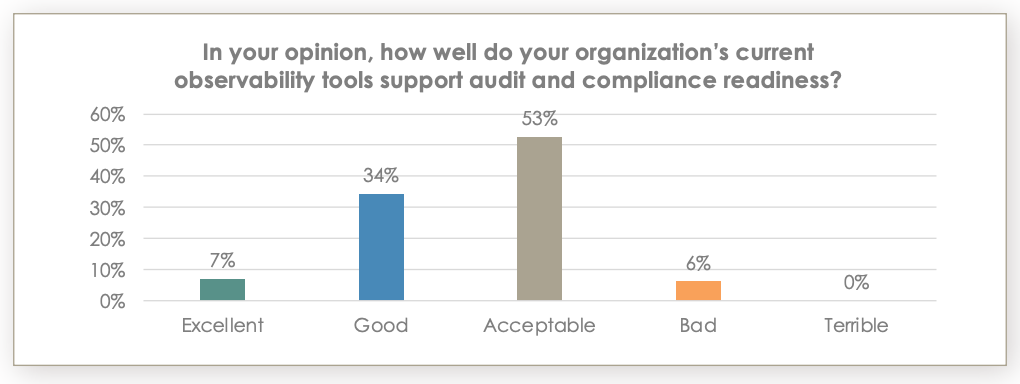

Regulatory pressures and real-time compliance: 95% face regulatory hurdles

Operating in a highly regulated industry means that financial services companies must maintain rigorous oversight of their digital environments. Compliance demands continue to accelerate, pushing organizations to adopt transparent and auditable technology practices. Currently, 95% of leaders report facing significant challenges when complying with regulatory frameworks.

The General Data Protection Regulation (GDPR) remains the most difficult framework to navigate, cited by 67% of respondents as their primary compliance challenge. To meet these stringent requirements, observability strategies in financial services are converging with governance and risk management. Today, 61% of companies use their observability platforms for real-time compliance monitoring and audit trail generation.

Despite this widespread use, existing tools often fall short of meeting complex regulatory needs. More than half of leaders (53%) rate their current observability tools as merely "acceptable" for audit and compliance readiness. This signals a pressing need for platforms that provide deep explainability and seamless data retention. By upgrading their capabilities, compliance officers can reduce the risk of costly penalties and streamline their reporting processes during intensive audits.

The role of AI and generative AI in observability: 94% adoption rates

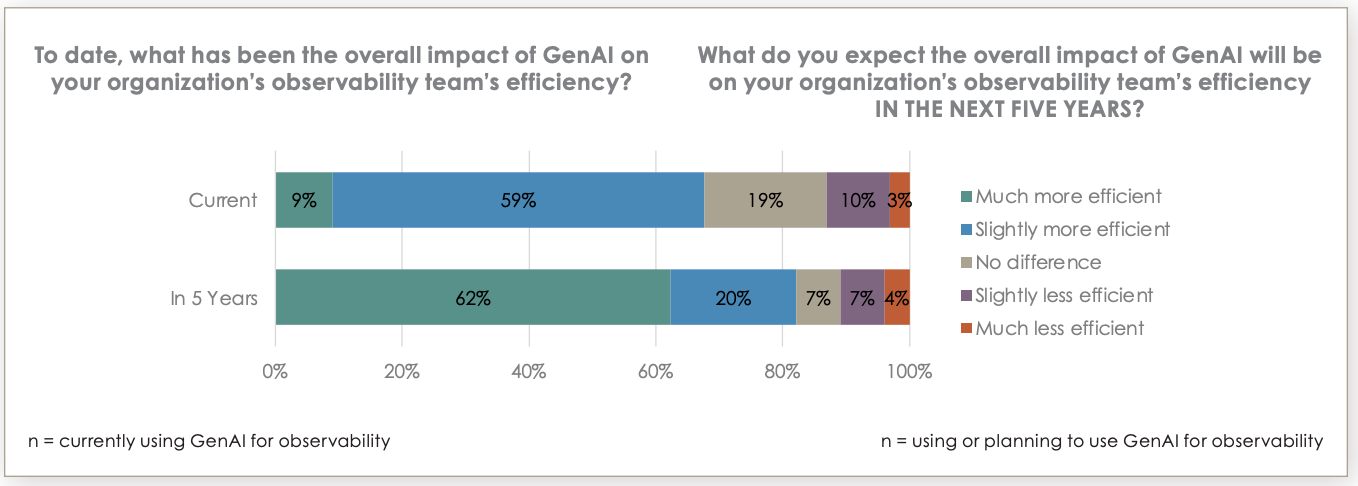

Generative AI (GenAI) is fundamentally transforming how technology teams detect anomalies and investigate system failures. Adoption within the financial sector is nearly universal, with 94% of teams currently using some form of GenAI for observability. This overwhelming adoption rate highlights the urgency with which organizations are pursuing AI-driven automation to manage increasingly complex architectures.

The impact of this technology is already tangible. Currently, 68% of teams report that GenAI has improved their operational efficiency, and expectations are higher still: 82% anticipate significant efficiency gains within five years. The most common use cases driving these improvements include remediation and automated operations, automated correlation, and root cause analysis.

For technology decision-makers, integrating GenAI means dramatically reducing the mean time to resolution during critical incidents. By automating the correlation of logs, metrics, and traces, engineering teams can bypass hours of manual investigation. This allows highly skilled personnel to focus on strategic innovation rather than routine troubleshooting, ultimately improving system uptime and operational resilience.
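To make the idea of correlation concrete, the sketch below groups spans and logs by a shared trace ID and surfaces the traces that contain an error. It is a simplified illustration, not how any specific GenAI feature works. The field names (`trace_id`, `level`, `duration_ms`, `service`) and the sample data are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Incident:
    """All telemetry collected for a single trace ID."""
    trace_id: str
    spans: list = field(default_factory=list)
    logs: list = field(default_factory=list)

    @property
    def has_error(self) -> bool:
        return any(log["level"] == "error" for log in self.logs)

    @property
    def slowest_span(self):
        # Returns None when the trace has no spans.
        return max(self.spans, key=lambda s: s["duration_ms"], default=None)


def correlate(spans: list[dict], logs: list[dict]) -> dict[str, Incident]:
    """Group spans and logs that share a trace ID."""
    incidents: dict[str, Incident] = {}
    for span in spans:
        tid = span["trace_id"]
        incidents.setdefault(tid, Incident(tid)).spans.append(span)
    for log in logs:
        tid = log["trace_id"]
        incidents.setdefault(tid, Incident(tid)).logs.append(log)
    return incidents


# Hypothetical sample data for illustration only.
spans = [
    {"trace_id": "t1", "service": "payments", "duration_ms": 840},
    {"trace_id": "t1", "service": "ledger", "duration_ms": 120},
    {"trace_id": "t2", "service": "payments", "duration_ms": 95},
]
logs = [
    {"trace_id": "t1", "level": "error", "message": "card authorization timed out"},
    {"trace_id": "t2", "level": "info", "message": "payment accepted"},
]
suspects = [i for i in correlate(spans, logs).values() if i.has_error]
```

In this example, `suspects` holds only the trace with the error log, and its `slowest_span` points an engineer at the service to inspect first.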

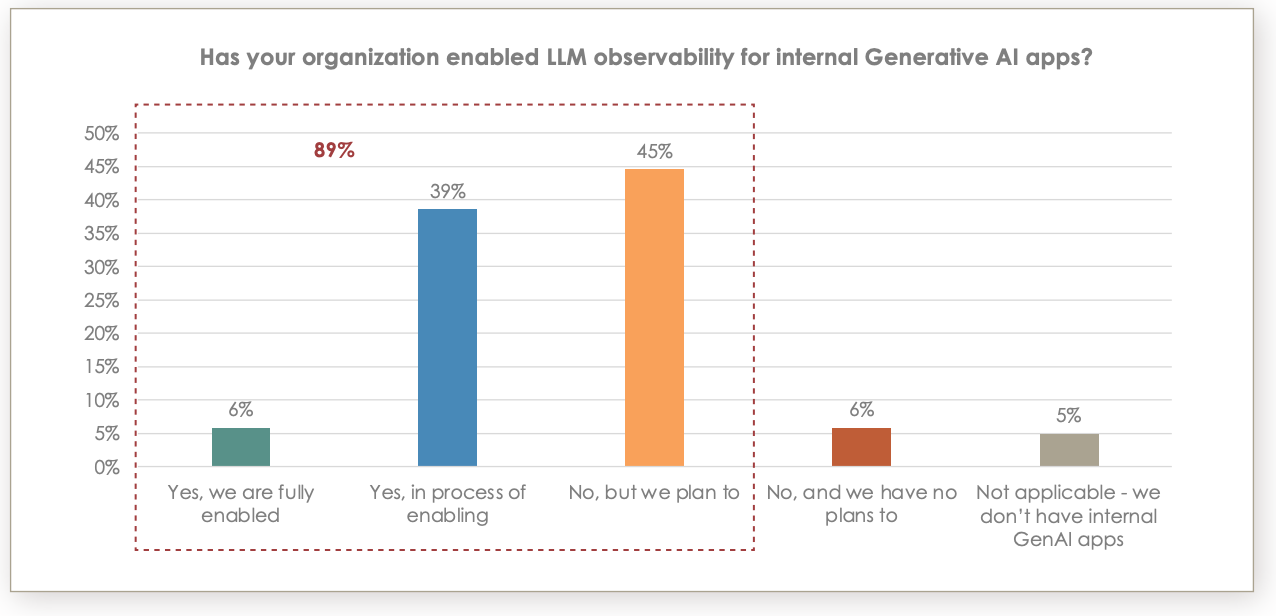

The LLM observability gap: 89% expect it, but only 6% have enabled it

While financial services companies are eager to use GenAI to monitor their infrastructure, they are also building their own internal large language models (LLMs). As these organizations deploy proprietary GenAI applications to serve customers and automate internal workflows, they must ensure these models operate securely and accurately.

This creates a new imperative for monitoring the AI models themselves. While 89% of leaders expect to enable observability for their internal GenAI applications, only 6% have successfully implemented these capabilities today. This massive gap between expectation and reality exposes organizations to significant operational and reputational risks.

Without proper oversight, internal models can suffer from hallucinations, performance degradation, and data leakage. To mitigate these risks, leaders must prioritize LLM observability. By implementing robust monitoring for AI applications, organizations can ensure their models deliver accurate, compliant, and performant results, safeguarding both customer data and brand integrity.
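One way to picture basic LLM observability is a thin wrapper that records telemetry around every model call. The sketch below is a minimal example under stated assumptions: `model` stands in for any callable that takes a prompt and returns text, and `CARD_LIKE` is a deliberately crude, hypothetical pattern for spotting card-like numbers in a response. It does not attempt to detect hallucinations, which requires evaluation beyond simple instrumentation.

```python
import re
import time
from dataclasses import dataclass
from typing import Callable

# Hypothetical pattern: flags runs of 13 to 16 digits that could be card numbers.
CARD_LIKE = re.compile(r"\b\d{13,16}\b")


@dataclass
class LLMCallRecord:
    latency_ms: float
    prompt_chars: int
    response_chars: int
    possible_leak: bool


def observe_llm_call(model: Callable[[str], str], prompt: str) -> tuple[str, LLMCallRecord]:
    """Call the model and capture basic telemetry about the exchange."""
    start = time.perf_counter()
    response = model(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    record = LLMCallRecord(
        latency_ms=latency_ms,
        prompt_chars=len(prompt),
        response_chars=len(response),
        possible_leak=bool(CARD_LIKE.search(response)),
    )
    return response, record
```

Records like these could be shipped alongside existing logs and metrics, so that latency regressions or suspected leaks in a model show up in the same place as other operational signals.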

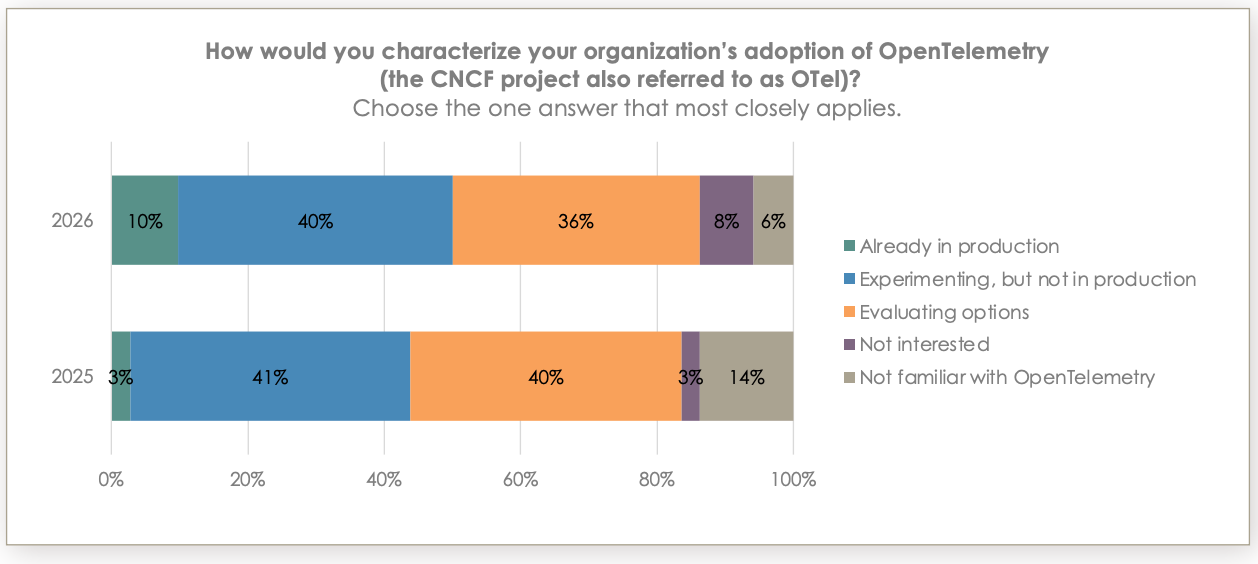

Standardizing on OpenTelemetry (OTel): Production usage triples to 10%

As hybrid cloud environments grow more complex, organizations are seeking ways to standardize how they collect and transmit telemetry data. OpenTelemetry (OTel) has emerged as the industry standard framework for generating and managing this data without being locked into a specific vendor. Production usage of OTel has more than tripled over the past year, rising from 3% to 10%.

This momentum is driven by the need for interoperability and future-proof architectures. Among those evaluating or using the framework, 89% state that OTel compliance is a critical or very important factor when selecting observability solutions. Furthermore, 58% of teams plan to use vendor-sourced OTel distributions in 2026.

Standardizing on open frameworks provides immense strategic value for chief information officers. By decoupling data collection from proprietary analytics tools, organizations gain the flexibility to change backend platforms without undertaking massive re-instrumentation projects. This flexibility prevents vendor lock-in, reduces long-term engineering costs, and ensures the organization remains agile as new technologies emerge.
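The decoupling described here can be sketched in a few lines of Python. This is an illustration of the design principle only and does not use the OpenTelemetry SDK. The `Exporter`, `ConsoleExporter`, `InMemoryExporter`, and `Instrumentation` names are hypothetical.

```python
from typing import Protocol


class Exporter(Protocol):
    """Anything that can ship a batch of telemetry to a backend."""

    def export(self, batch: list[dict]) -> None: ...


class ConsoleExporter:
    """Stand-in backend that prints each event."""

    def export(self, batch: list[dict]) -> None:
        for item in batch:
            print(item)


class InMemoryExporter:
    """Stand-in backend that keeps events in a list."""

    def __init__(self) -> None:
        self.received: list[dict] = []

    def export(self, batch: list[dict]) -> None:
        self.received.extend(batch)


class Instrumentation:
    """Application-side code that records events without knowing the backend."""

    def __init__(self, exporter: Exporter) -> None:
        self._exporter = exporter
        self._buffer: list[dict] = []

    def record(self, name: str, **attributes) -> None:
        self._buffer.append({"name": name, **attributes})

    def flush(self) -> None:
        # Hand the buffered events to whichever backend was configured.
        self._exporter.export(self._buffer)
        self._buffer = []
```

Swapping `ConsoleExporter` for `InMemoryExporter` changes where the data goes without touching any `record` call, which is the property that lets teams change backends without re-instrumenting their applications.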

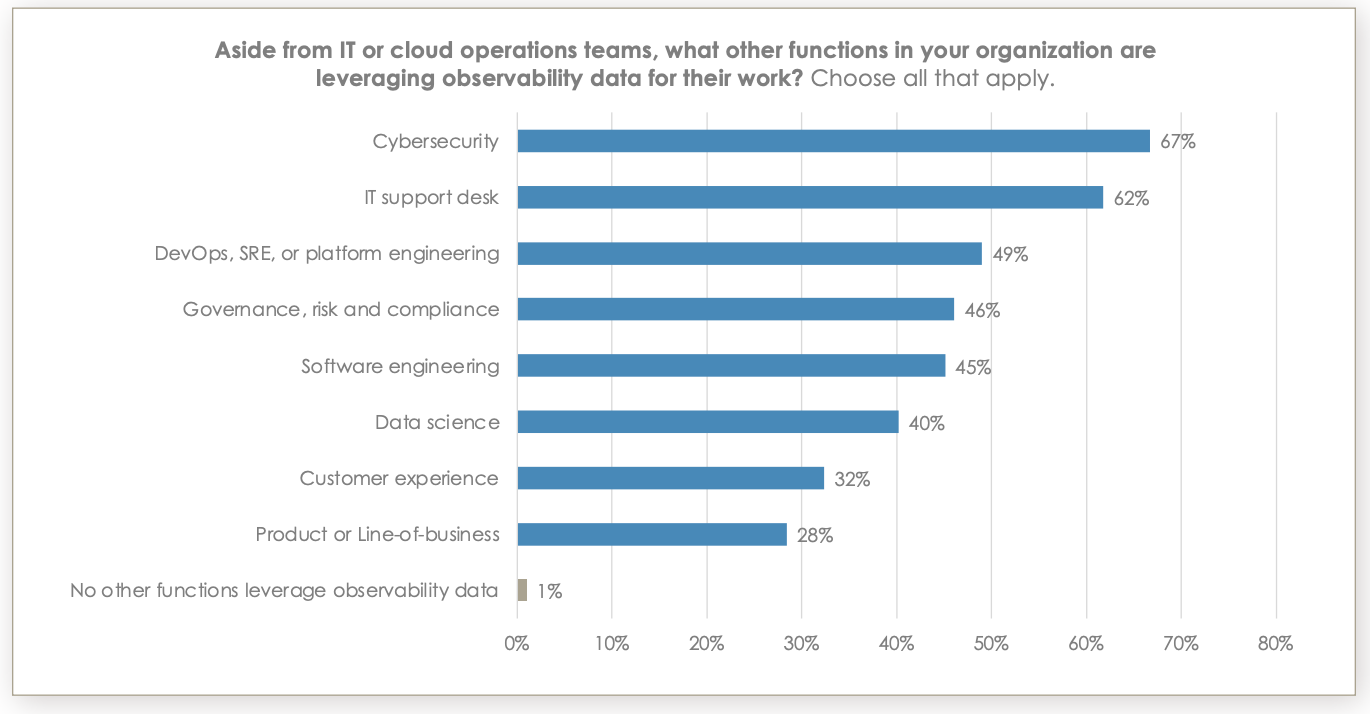

Expanding beyond IT: 67% of cybersecurity teams rely on observability data

The value of unified telemetry data extends far beyond the core engineering and cloud operations teams. In modern financial services companies, observability data acts as a shared source of truth across the enterprise. At 80% of organizations, three or more non-IT teams now actively use this data to inform their specific workflows.

Cybersecurity teams are the most frequent secondary users, with 67% relying on observability platforms to detect and investigate threats. This convergence of security and observability is a natural evolution. The same logs and network traces that indicate a system performance issue can also reveal a sophisticated cyberattack or unauthorized data access.

By breaking down data silos and providing a unified view of the environment, chief information security officers can accelerate threat detection and response. When IT and security teams operate from the same dataset, they reduce the friction of cross-team collaboration. This unified approach strengthens the organization's overall security posture, allowing teams to quickly contain threats before they impact customer trust or regulatory standing.

Best practices for implementing a modern observability strategy

To fully capitalize on the advancements in AI and open standards, financial services leaders must adopt a proactive approach to their observability architecture. Building a resilient and cost-effective practice requires careful planning and continuous refinement. Organizations that successfully navigate this landscape follow several core principles to guide their implementation.

First, leaders should prioritize an open and scalable foundation. Standardizing data collection through open frameworks ensures long-term flexibility. Second, organizations must establish stringent cost governance policies early in the deployment lifecycle. By defining clear rules for data retention and storage tiering, teams can prevent unexpected budget overruns.

To maximize the return on their observability investments, leaders should focus on the following strategies:

Implement unified data platforms that consolidate security, performance, and business metrics.

Establish cross-functional centers of excellence to share insights across IT, security, and compliance teams.

Demand deep explainability features from any GenAI tools introduced into the monitoring ecosystem.

Align all technical performance indicators directly with overarching business outcomes and customer experience metrics.

Building resilience in a complex landscape

The evolution of observability within financial services marks a critical turning point for technology leaders. As system complexity deepens and regulatory scrutiny intensifies, relying on fragmented monitoring tools is no longer a viable strategy. The most successful organizations are transforming their telemetry data into a unified, actionable asset that drives both operational efficiency and business growth.

By standardizing on open frameworks, embracing the power of generative AI, and enforcing strict cost governance, leaders can build architectures that are both highly performant and financially sustainable. This proactive approach ensures that systems remain secure, compliant, and ready to support the next generation of financial innovation.

The future belongs to organizations that view observability not as a reactive troubleshooting mechanism, but as a proactive engine for enterprise intelligence. When technology leaders align their system visibility with their strategic business goals, they build the resilience needed to thrive in a rapidly changing financial landscape.

Download the full Financial Services State of Observability Report today to explore the comprehensive data and discover how Elastic can help unify your digital infrastructure.

The release and timing of any features or functionality described in this post remain at Elastic's sole discretion. Any features or functionality not currently available may not be delivered on time or at all.