Diagnose unavailable nodes

This section provides a list of common symptoms and possible actions that you can take to resolve issues when one or more nodes become unhealthy or unavailable. This guide is particularly useful if you are not shipping your logs and metrics to a dedicated monitoring cluster.

What are the symptoms?

- Full disk on single-node deployment

- Full disk on multiple-nodes deployment

- JVM heap usage exceeds the allowed threshold on master nodes

- CPU usage exceeds the allowed threshold on master nodes

- Some nodes are unavailable and are displayed as missing

What is the impact?

- Only some search results are successful

- Ingesting, updating, and deleting data do not work

- Most Elasticsearch API requests fail

Some actions described here, such as stopping indexing or Machine Learning jobs, are temporary remediations intended to get your cluster into a state where you can make configuration changes to resolve the issue.

For production deployments, we recommend setting up a dedicated monitoring cluster to collect metrics and logs, troubleshooting views, and cluster alerts.

If your issue is not addressed here, then contact Elastic support for help.

AutoOps is a monitoring tool that simplifies cluster management through performance recommendations, resource utilization visibility, and real-time issue detection with resolution paths. Learn more about AutoOps.

Health check

Log in to the Elastic Cloud Console.

Click the Manage link corresponding to the deployment that you want to manage.

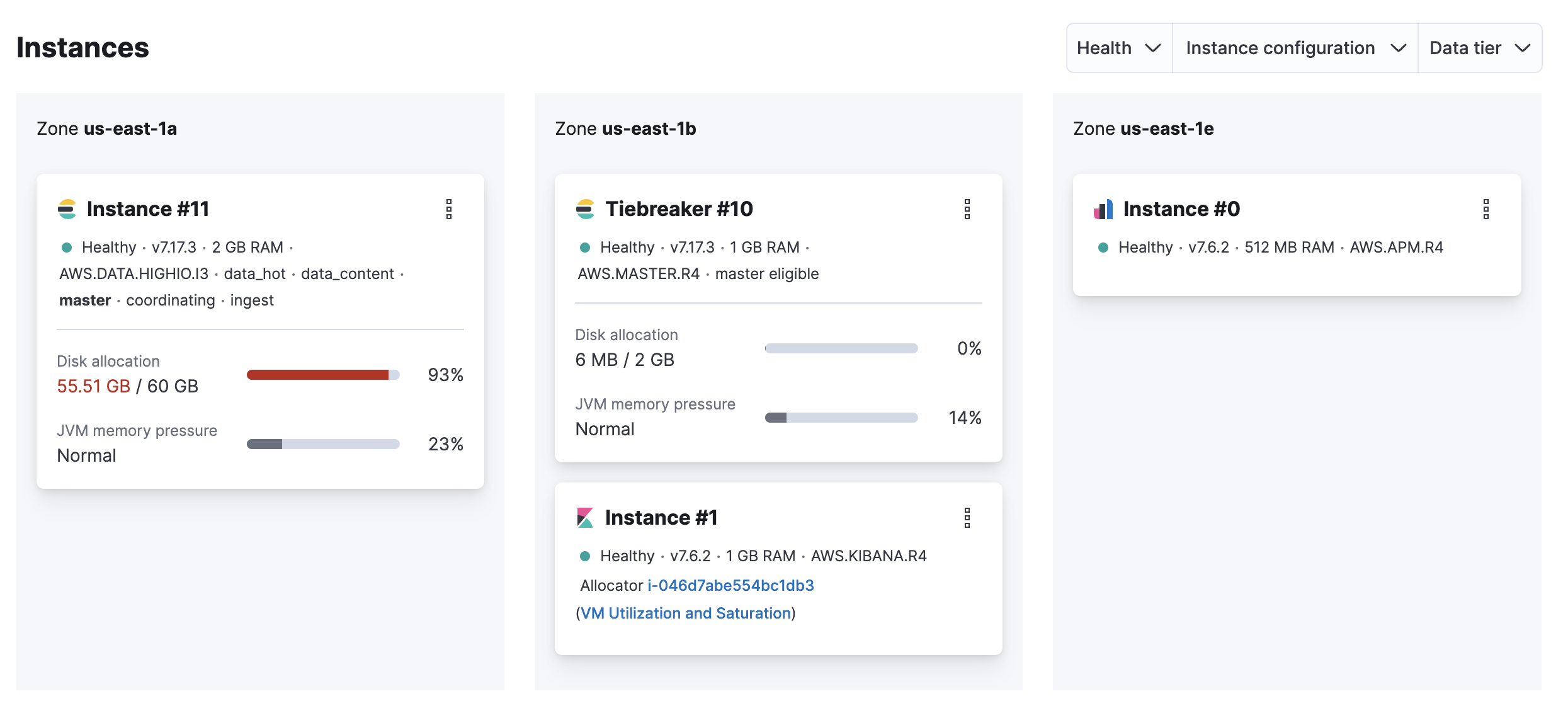

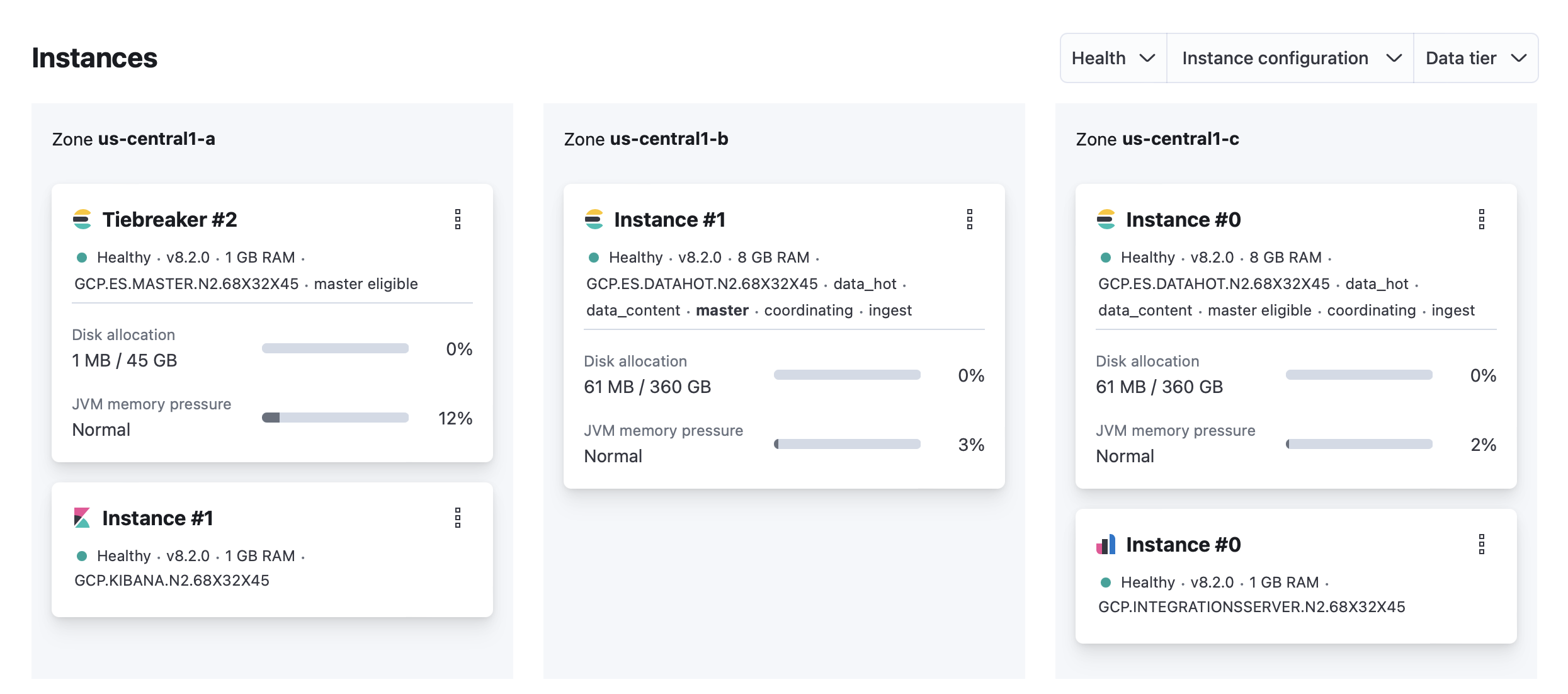

On your deployment page, scroll down to Instances and check if the disk allocation for your Elasticsearch instance is over 90%.
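If the Kibana console or the Elasticsearch API is still reachable, the same check can be approximated from the output of `GET _cat/allocation?format=json`. The following is a minimal sketch, not an official tool: the function name and the sample rows are made up for illustration, and it assumes the response has already been fetched and parsed into a list of dictionaries.

```python
def nodes_over_disk_threshold(allocation_rows, threshold_percent=90):
    """Return (node, percent) pairs whose disk usage exceeds the threshold."""
    flagged = []
    for row in allocation_rows:
        percent = row.get("disk.percent")
        if percent is None:
            # The UNASSIGNED row carries no disk figures, so skip it.
            continue
        if int(percent) > threshold_percent:
            flagged.append((row["node"], int(percent)))
    # Fullest nodes first.
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical sample of a parsed _cat/allocation response.
sample_rows = [
    {"node": "instance-0000000000", "disk.percent": "93"},
    {"node": "instance-0000000001", "disk.percent": "41"},
    {"node": "UNASSIGNED", "disk.percent": None},
]
print(nodes_over_disk_threshold(sample_rows))
```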

Possible cause

- The available storage is insufficient for the amount of ingested data.

Resolution

Delete unused data by running either:

- API calls using the Kibana console, if available

- direct Elasticsearch API calls, when Elasticsearch has an elected quorum.

Alternatively, increase the disk size on your Hot data and Content tier (scale up).

If your Elasticsearch cluster is unhealthy and reports a status of red, then increasing the disk size of your Hot data and Content tier may fail. You might need to delete some data so the configuration can be edited. If you want to increase your disk size without deleting data, then reach out to Elastic support and we will assist you with scaling up.
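To decide what to delete, one option is to list indices by age using `GET _cat/indices?format=json&h=index,creation.date`. The sketch below only proposes candidates and prints the corresponding delete requests; it does not delete anything. The function name, the `keep_newest` parameter, and the sample index names are assumptions made for illustration, and indices whose names start with a dot (system indices and data stream backing indices) are skipped on purpose.

```python
def oldest_indices(index_rows, keep_newest=2):
    """Return user index names, oldest first, excluding the newest ones."""
    # Skip dot-prefixed indices, which are usually system-managed.
    user_rows = [row for row in index_rows if not row["index"].startswith(".")]
    ordered = sorted(user_rows, key=lambda row: int(row["creation.date"]))
    cutoff = max(len(ordered) - keep_newest, 0)
    return [row["index"] for row in ordered[:cutoff]]


# Hypothetical sample of a parsed _cat/indices response.
sample_indices = [
    {"index": "logs-2024.01.03", "creation.date": "1704240000000"},
    {"index": "logs-2024.01.01", "creation.date": "1704067200000"},
    {"index": ".kibana_1", "creation.date": "1700000000000"},
    {"index": "logs-2024.01.02", "creation.date": "1704153600000"},
]

for name in oldest_indices(sample_indices, keep_newest=2):
    # Review each candidate before running the request in the Kibana console.
    print(f"DELETE /{name}")
```

Review the candidates before you delete anything, because age alone does not tell you whether the data is still needed.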

Preventions

Increase the disk size on your Hot data and Content tier (scale up).

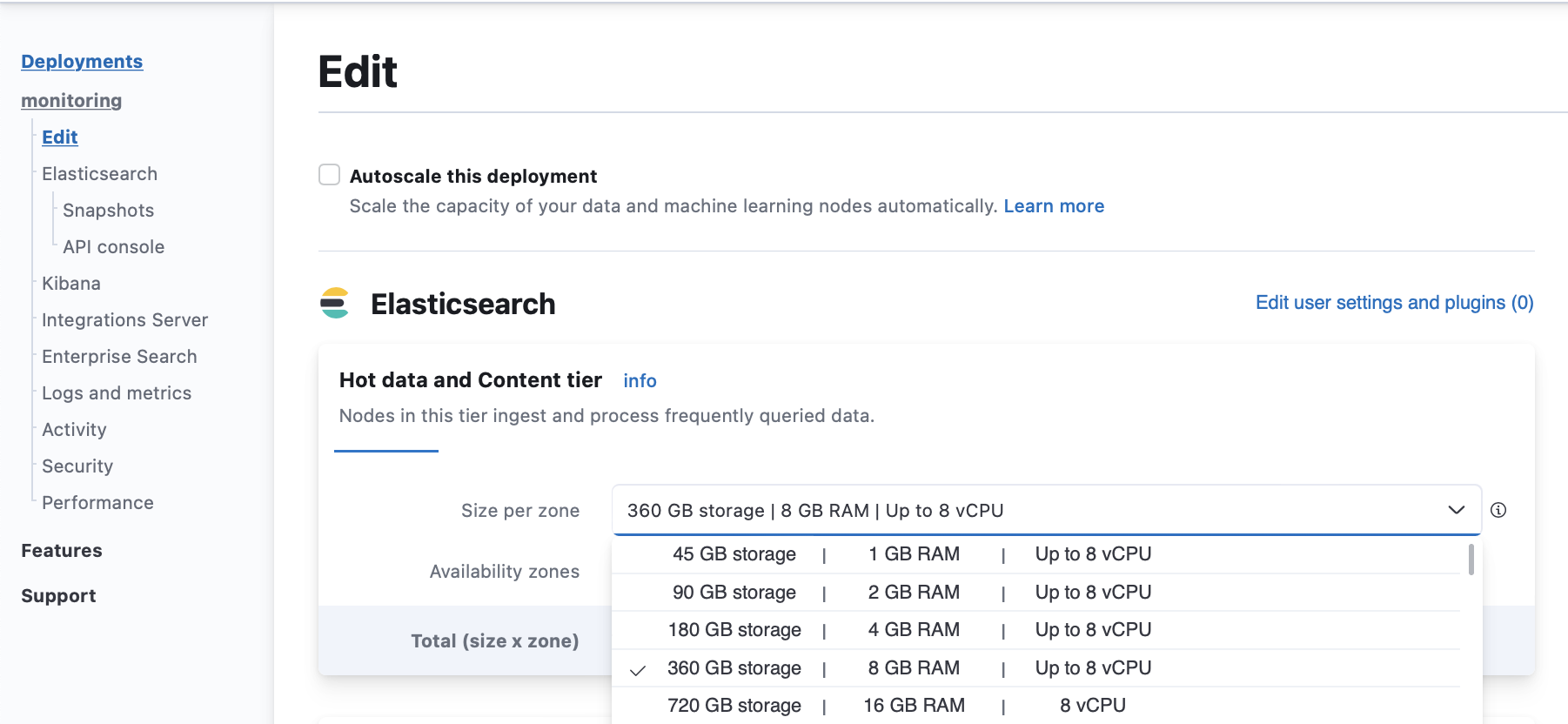

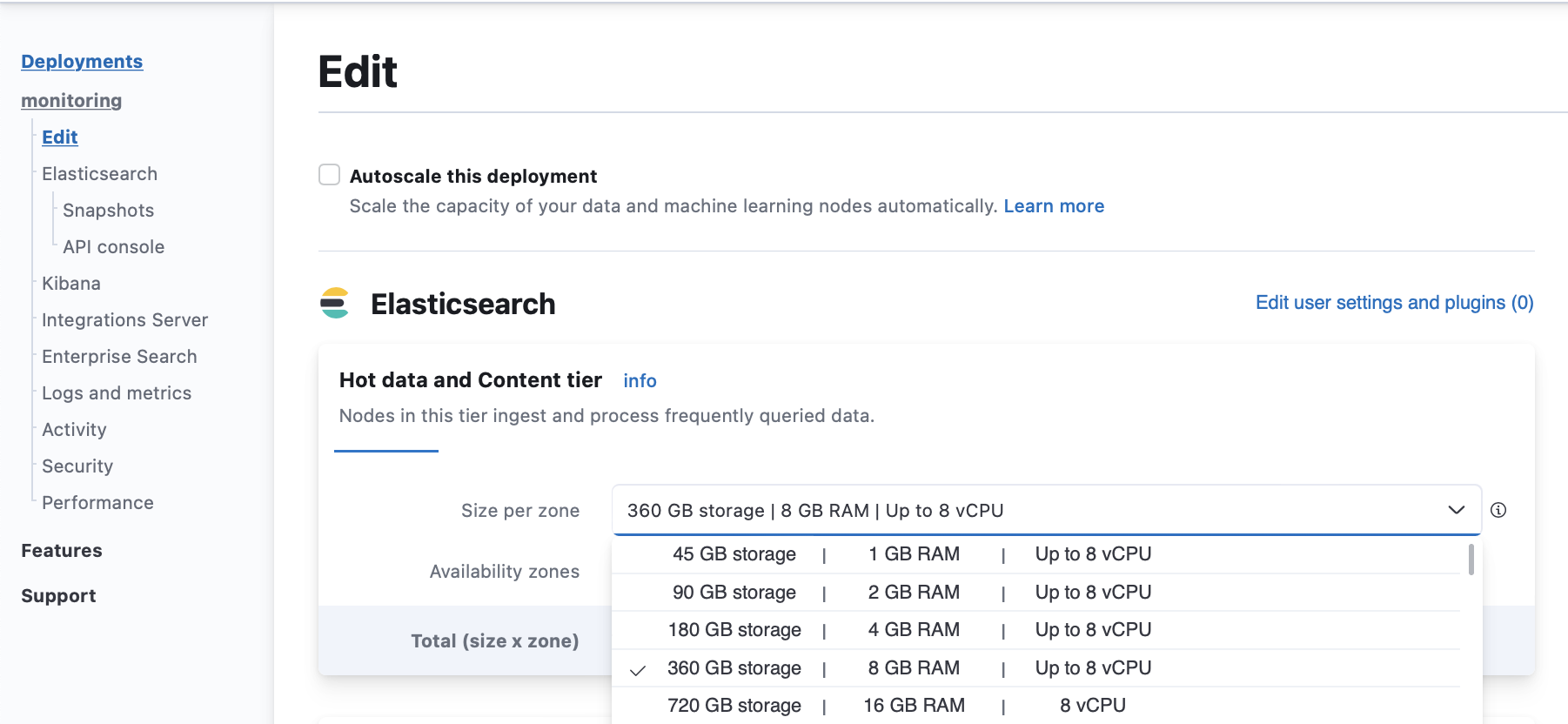

From your deployment menu, go to the Edit page and increase the Size per zone for your Hot data and Content tiers.

Enable autoscaling to grow your cluster automatically when it runs out of space.

Configure ILM policies to automatically delete unused data.

Add nodes to your Elasticsearch cluster and enable data tiers to move older data that you don’t query often to more cost-effective storage.
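As an illustration of the ILM option above, the following sketch builds the request body for a policy that rolls over the write index and later deletes old indices. The policy name `example-logs-policy` and the age values are placeholders, not recommendations; choose values that match your retention requirements.

```python
import json


def delete_after_policy(rollover_max_age="7d", delete_min_age="30d"):
    """Build an ILM policy body with a hot phase and a delete phase."""
    return {
        "policy": {
            "phases": {
                # Roll over to a new index once the current one is old enough.
                "hot": {"actions": {"rollover": {"max_age": rollover_max_age}}},
                # Delete an index this long after it was rolled over.
                "delete": {"min_age": delete_min_age, "actions": {"delete": {}}},
            }
        }
    }


# Print a request that can be pasted into the Kibana console.
print("PUT _ilm/policy/example-logs-policy")
print(json.dumps(delete_after_policy(), indent=2))
```

A policy has no effect until it is referenced from an index template or applied to existing indices.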

Health check

Log in to the Elastic Cloud Console.

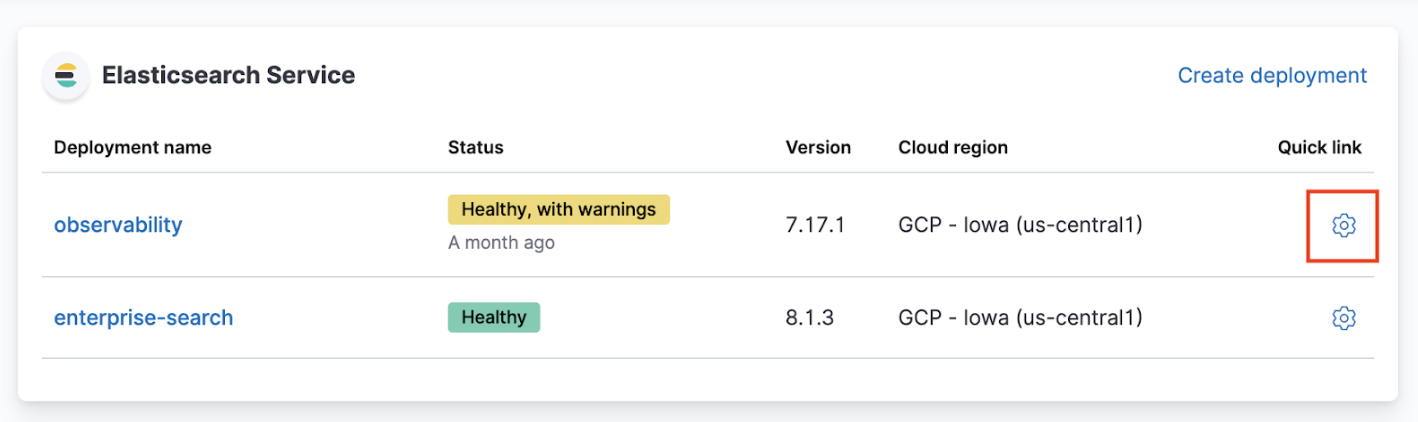

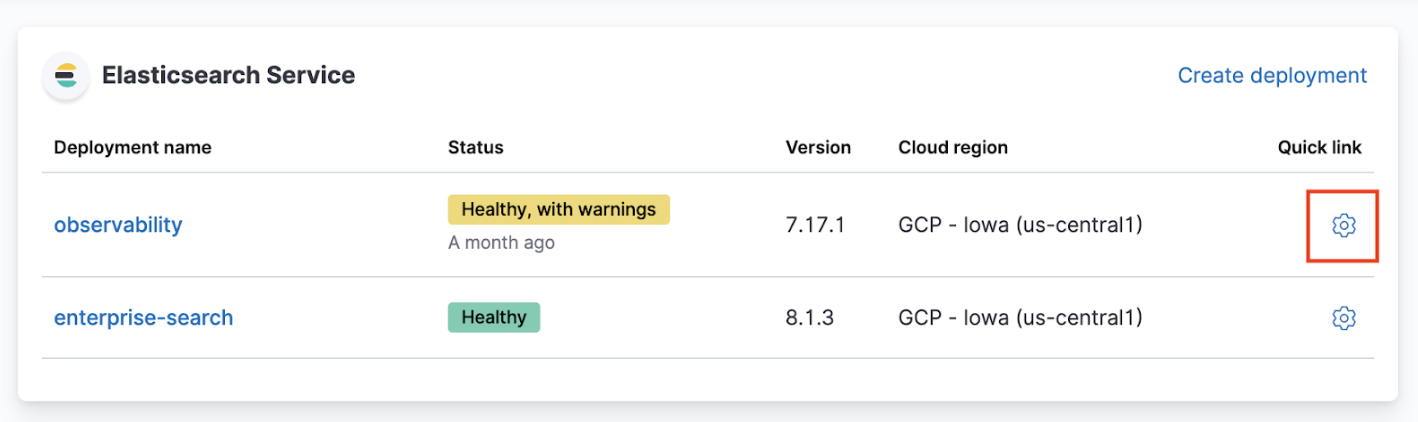

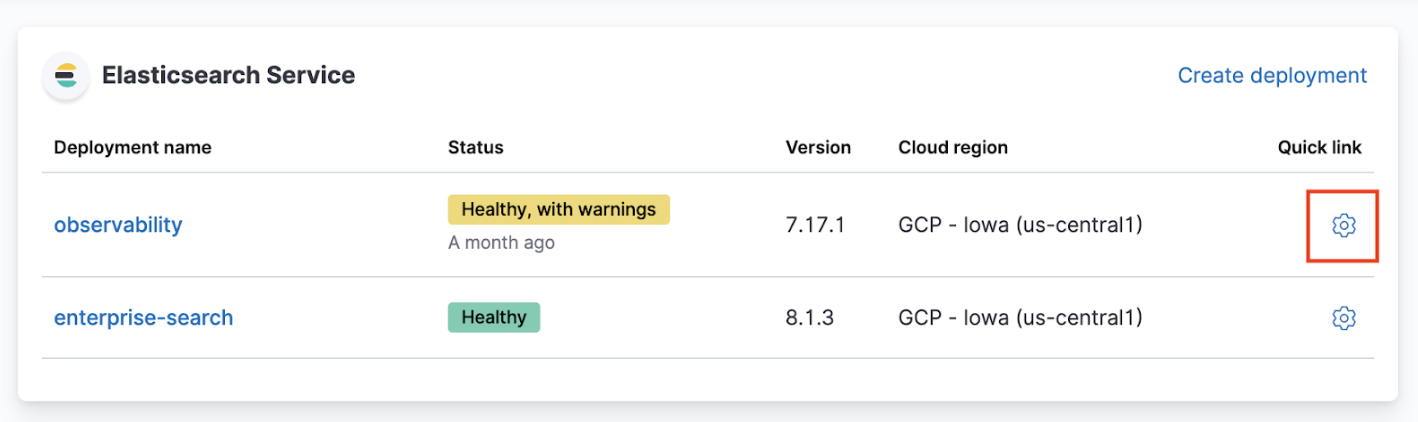

From the Elasticsearch Service panel, click the Quick link icon corresponding to the deployment that you want to manage.

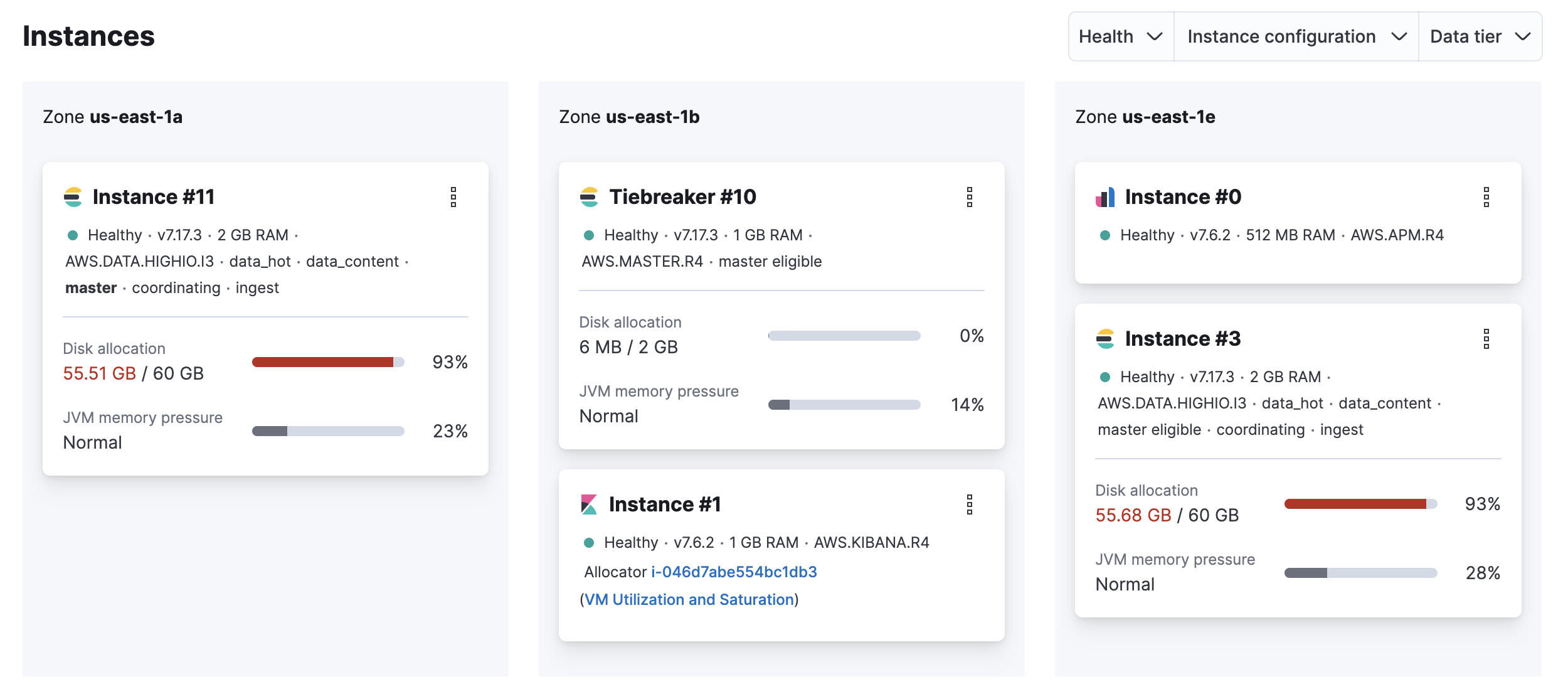

On your deployment page, scroll down to Instances and check if the disk allocation for any of your Elasticsearch instances is over 90%.

Possible cause

- The available storage is insufficient for the amount of ingested data.

Resolution

Delete unused data by running either:

- API calls using the Kibana console, if available

- direct Elasticsearch API calls, when Elasticsearch has an elected quorum.

Alternatively, increase the disk size (scale up).

If your Elasticsearch cluster is unhealthy and reports a status of red, the scale-up configuration change that increases the disk size on the affected data tiers may fail. You might need to delete some data so the configuration can be edited. If you want to increase your disk size without deleting data, then reach out to Elastic support and we will assist you with scaling up.

Preventions

Increase the disk size (scale up).

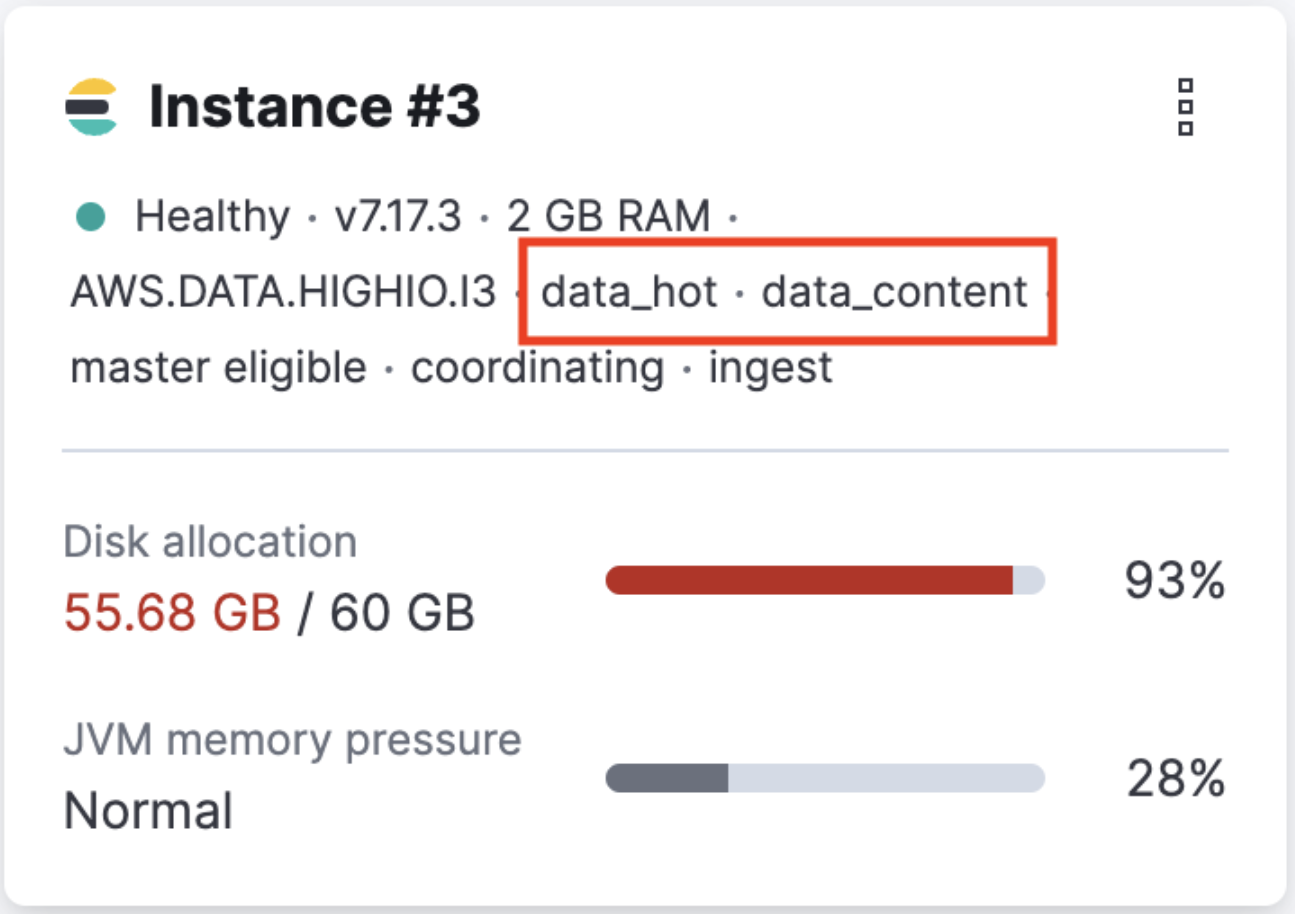

On your deployment page, scroll down to Instances and identify the node attribute of the instances that are running out of disk space.

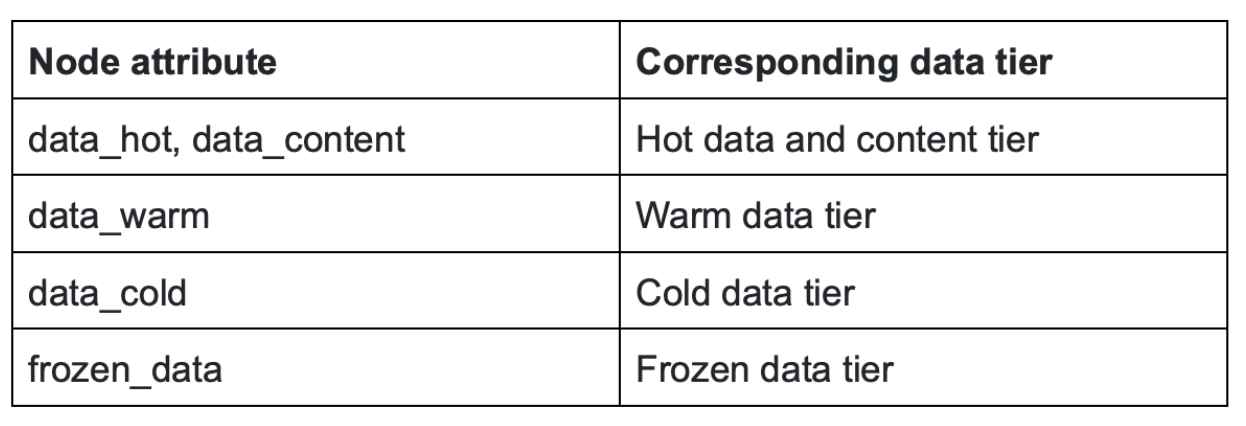

Use the node types identified at step 1 to find out the corresponding data tier.

From your deployment menu, go to the Edit page and increase the Size per zone for the data tiers identified at step 2.

Enable autoscaling to grow your cluster automatically when it runs out of space.

Configure ILM policies to automatically delete unused data.

Enable data tiers to move older data that you don’t query often to more cost-effective storage.

Health check

Log in to the Elastic Cloud Console.

From the Elasticsearch Service panel, click the Quick link icon corresponding to the deployment that you want to manage.

On your deployment page, scroll down to Instances and check if the JVM memory pressure for your Elasticsearch instances is high.
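If the API is reachable, a comparable figure can be derived from `GET _nodes/stats/jvm`. The sketch below assumes that JVM memory pressure corresponds to how full the old-generation memory pool is, and that the response has already been parsed into a dictionary. The function name and sample values are illustrative only.

```python
def jvm_memory_pressure(node_stats):
    """Map each node name to its old-generation pool usage in percent."""
    pressure = {}
    for node in node_stats["nodes"].values():
        old_pool = node["jvm"]["mem"]["pools"]["old"]
        pressure[node["name"]] = round(
            100 * old_pool["used_in_bytes"] / old_pool["max_in_bytes"]
        )
    return pressure


# Hypothetical, heavily trimmed sample of a _nodes/stats/jvm response.
sample_stats = {
    "nodes": {
        "aaa": {
            "name": "instance-0000000000",
            "jvm": {"mem": {"pools": {"old": {
                "used_in_bytes": 805306368, "max_in_bytes": 1073741824}}}},
        },
        "bbb": {
            "name": "instance-0000000001",
            "jvm": {"mem": {"pools": {"old": {
                "used_in_bytes": 268435456, "max_in_bytes": 1073741824}}}},
        },
    }
}
print(jvm_memory_pressure(sample_stats))
```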

Possible causes

The master node is overwhelmed by a large number of snapshots or shards.

External tasks initiated by clients

- Index, search, update

- Frequent template updates due to the Beats configuration

Internal tasks initiated by users

- Machine Learning jobs, watches, monitoring, ingest pipelines

Internal tasks initiated by Elasticsearch

- Nodes joining and leaving due to hardware failures

- Shard allocation due to nodes joining and leaving

- Configuration of ILM policies.

Resolutions

If the master node is overwhelmed by external tasks initiated by clients:

Investigate which clients might be overwhelming the cluster and reduce the request rate or pause ingesting, searching, or updating from the client. If you are using Beats, temporarily stop the Beat that’s overwhelming the cluster to avoid frequent template updates.

If the master node is overwhelmed by internal tasks initiated by users:

- Check cluster-level pending tasks.

- Reduce the number of Machine Learning jobs or watches.

- Change the number of ingest pipelines or processors to use less memory.
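Cluster-level pending tasks are returned by `GET _cluster/pending_tasks`. As a rough sketch of how to read that response, the helper below counts tasks per priority and reports the longest queue time. The helper name and the sample data are made up for illustration.

```python
from collections import Counter


def summarize_pending_tasks(pending):
    """Return (task count per priority, longest time in queue in ms)."""
    tasks = pending.get("tasks", [])
    by_priority = Counter(task["priority"] for task in tasks)
    longest_wait_ms = max(
        (task["time_in_queue_millis"] for task in tasks), default=0
    )
    return dict(by_priority), longest_wait_ms


# Hypothetical sample of a parsed _cluster/pending_tasks response.
sample_pending = {
    "tasks": [
        {"priority": "URGENT", "source": "create-index", "time_in_queue_millis": 86},
        {"priority": "HIGH", "source": "shard-started", "time_in_queue_millis": 842},
        {"priority": "URGENT", "source": "put-mapping", "time_in_queue_millis": 120},
    ]
}
print(summarize_pending_tasks(sample_pending))
```

A queue that keeps growing, or tasks that wait for a long time, suggests that the master node cannot keep up with cluster state updates.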

If the master node is overwhelmed by internal tasks initiated by Elasticsearch:

For nodes joining and leaving, this should resolve itself. If increasing the master node size doesn’t resolve the issue, contact support.

For shard allocation, inspect the progress of shard recovery.

- Make sure `indices.recovery.max_concurrent_operations` is not aggressive, which could cause the master to be unavailable.

- Make sure `indices.recovery.max_bytes_per_sec` is set adequately to avoid impact on ingest and search workload.

Check ILM policies to avoid index rollover and relocate actions that are concurrent and aggressive.
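To see which recovery values are in effect, you can inspect the output of `GET _cluster/settings?include_defaults=true&flat_settings=true`. The sketch below resolves each setting in the usual precedence order (transient, then persistent, then defaults). The function name and sample values are assumptions made for illustration.

```python
RECOVERY_SETTINGS = (
    "indices.recovery.max_bytes_per_sec",
    "indices.recovery.max_concurrent_operations",
)


def effective_settings(cluster_settings, names=RECOVERY_SETTINGS):
    """Return {setting: (value, scope)} using the first scope that sets it."""
    effective = {}
    for name in names:
        for scope in ("transient", "persistent", "defaults"):
            value = cluster_settings.get(scope, {}).get(name)
            if value is not None:
                effective[name] = (value, scope)
                break
    return effective


# Hypothetical sample of a parsed, flattened _cluster/settings response.
sample_settings = {
    "persistent": {"indices.recovery.max_bytes_per_sec": "200mb"},
    "transient": {},
    "defaults": {
        "indices.recovery.max_bytes_per_sec": "40mb",
        "indices.recovery.max_concurrent_operations": "1",
    },
}
for setting, (value, scope) in effective_settings(sample_settings).items():
    print(f"{setting} = {value} (from {scope})")
```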

If the master node is overwhelmed by a large number of snapshots, reduce the number of snapshots in the repo.

If the master node is overwhelmed by a large number of shards, delete unneeded indices and shrink read-only indices to fewer shards. For more information, check Reduce a cluster’s shard count.

Health check

By default, the allowed CPU usage threshold is set at 85%.

Log in to the Elastic Cloud Console.

From the Elasticsearch Service panel, click the Quick link icon corresponding to the deployment that you want to manage.

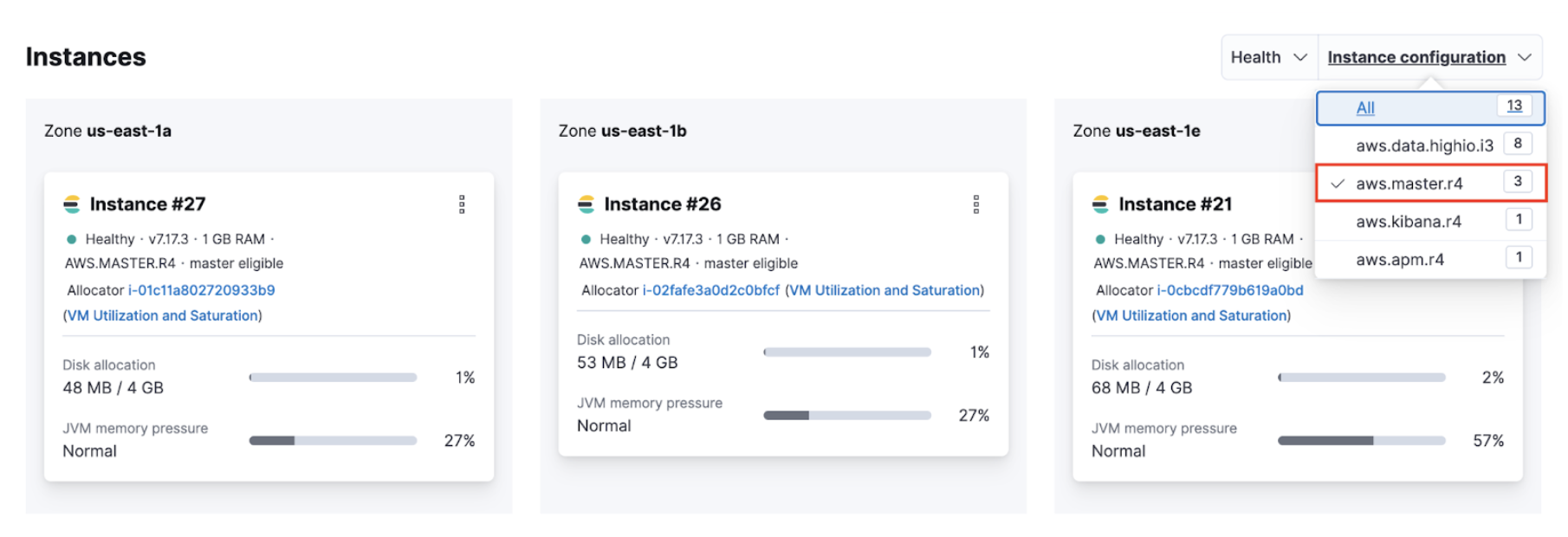

Identify the IDs of your master nodes. On your deployment page, scroll down to Instances and filter your instance configuration by master. The IDs of your master nodes are in the title. In this example, the IDs are 21, 26 and 27:

Note

The name of the instance configuration might differ depending on the cloud provider.

Navigate to the Performance page of your deployment. Check if the CPU usage of your master nodes exceeds 85%. Your master node has the format `instance-<ID>`, where `<ID>` is the ID of the master node.
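If the API is reachable, the CPU check can also be approximated from `GET _nodes/stats/os`. The following is a minimal sketch: it treats any node whose roles include `master` as a master node, and the function name, node names, and sample values are made up for illustration.

```python
def busy_master_nodes(node_stats, threshold_percent=85):
    """Return (name, cpu percent) for master-eligible nodes over the threshold."""
    busy = []
    for node in node_stats["nodes"].values():
        cpu = node["os"]["cpu"]["percent"]
        if "master" in node.get("roles", []) and cpu > threshold_percent:
            busy.append((node["name"], cpu))
    return sorted(busy)


# Hypothetical, heavily trimmed sample of a _nodes/stats/os response.
sample_os_stats = {
    "nodes": {
        "aaa": {"name": "instance-0000000021", "roles": ["master"],
                "os": {"cpu": {"percent": 92}}},
        "bbb": {"name": "instance-0000000026", "roles": ["master"],
                "os": {"cpu": {"percent": 40}}},
        "ccc": {"name": "instance-0000000003", "roles": ["data_hot"],
                "os": {"cpu": {"percent": 95}}},
    }
}
print(busy_master_nodes(sample_os_stats))
```

A single reading is only a snapshot, so the Performance page remains the better view of sustained CPU usage.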

If you use Stack Monitoring, open Kibana from your deployment page and select Stack Monitoring from the menu or the search bar.

Stack Monitoring comes with out-of-the-box rules, but you need to enable them when prompted.

Possible causes

The master node is overwhelmed by a large number of snapshots or shards.

The memory available on the master node is overwhelmed by these tasks:

External tasks initiated by clients

- Index, search, update

- Frequent template updates due to the Beats configuration

Internal tasks initiated by users

- Machine Learning jobs, watches, monitoring, ingest pipelines

Internal tasks initiated by Elasticsearch

- Nodes joining and leaving due to hardware failures

- Shard allocation due to nodes joining and leaving

- Configuration of ILM policies.

Resolutions

Navigate to the Edit page of your deployment and increase the master node size.

Upgrade the cluster to the latest version.

If the master node is overwhelmed by external tasks initiated by clients:

- Reduce the request rate or pause ingesting, searching, or updating from the client.

- Enable ingest and search-based autoscaling.

- Stop Beats to avoid frequent template updates.

If the master node is overwhelmed by internal tasks initiated by users:

- Check cluster-level pending tasks.

- Reduce the number of Machine Learning jobs or watches.

- Change the number of ingest pipelines or processors to use less memory.

If the master node is overwhelmed by internal tasks initiated by Elasticsearch:

For nodes joining and leaving, this should resolve itself. If increasing the master node size doesn’t resolve the issue, contact support.

For shard allocation, inspect the progress of shard recovery. If there’s no progress, contact support.

- Make sure `indices.recovery.max_concurrent_operations` is not aggressive, which could cause the master to be unavailable.

- Make sure `indices.recovery.max_bytes_per_sec` is set adequately to avoid impact on ingest and search workload.

Check ILM policies to avoid index rollover and relocate actions that are concurrent and aggressive.

If the master node is overwhelmed by a large number of snapshots, reduce the number of snapshots in the repo.

If the master node is overwhelmed by a large number of shards, reduce the number of shards on the node. For more information, check Size your shards.
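To see where shards are concentrated, you can count the rows returned by `GET _cat/shards?format=json` per node. The sketch below assumes the response has been parsed into a list of dictionaries. The function name and sample rows are illustrative.

```python
from collections import Counter


def shards_per_node(shard_rows):
    """Return (node, shard count) pairs, busiest node first."""
    # Unassigned shards have no node, so they are left out of the count.
    counts = Counter(row["node"] for row in shard_rows if row.get("node"))
    return counts.most_common()


# Hypothetical sample of a parsed _cat/shards response.
sample_shards = [
    {"index": "logs-1", "shard": "0", "prirep": "p", "node": "instance-0000000000"},
    {"index": "logs-1", "shard": "1", "prirep": "p", "node": "instance-0000000000"},
    {"index": "logs-2", "shard": "0", "prirep": "p", "node": "instance-0000000000"},
    {"index": "logs-2", "shard": "0", "prirep": "r", "node": "instance-0000000001"},
    {"index": "logs-3", "shard": "0", "prirep": "r", "node": None},
]
print(shards_per_node(sample_shards))
```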

Health check

- Use the Metrics inventory to identify unavailable or unhealthy nodes. If the number of available master nodes falls below the required minimum, Elasticsearch is not available.
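The minimum can be thought of as a majority of the master-eligible nodes. The sketch below is a deliberate simplification: Elasticsearch manages its own voting configuration, so treat this only as a rule of thumb for reading the node inventory. The function name is made up for illustration.

```python
def has_master_quorum(master_eligible_total, master_eligible_available):
    """Return True if a majority of master-eligible nodes is available."""
    quorum = master_eligible_total // 2 + 1
    return master_eligible_available >= quorum


# With three master-eligible nodes, losing one is tolerated, losing two is not.
print(has_master_quorum(3, 2))
print(has_master_quorum(3, 1))
```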

Possible causes

- Hardware issue.

- Routing has stopped because of a previous Elasticsearch configuration failure.

- Disk/memory/CPU are saturated.

- The network is saturated or disconnected.

- Nodes are unable to join.

Resolutions

Hardware issue: Any unhealthy hardware detected by the platform is automatically vacated within the hour. If this doesn’t happen, contact support.

Routing stopped: A failed Elasticsearch configuration might stop the nodes routing. Restart the routing manually to bring the node back to health.

Disk/memory/CPU saturated:

- Delete unused data.

- Increase disk size.

- Configure ILM policies.

Note

You can delete unused data by running either:

- API calls using the Kibana console, if available

- direct Elasticsearch API calls, when Elasticsearch has an elected quorum.

Network saturated or disconnected: Contact support.

Nodes unable to join: Fix the Elasticsearch configuration, or contact support.