Elasticsearch: The open source platform for high-performance search, context engineering, and AI

Store structured, unstructured, and vector data. Build AI applications and agents with relevance, real-time analytics, and advanced geospatial queries — delivered through a single unified platform.

More than just search: Get answers, not links

Build experiences beyond the search box with your data, your way.

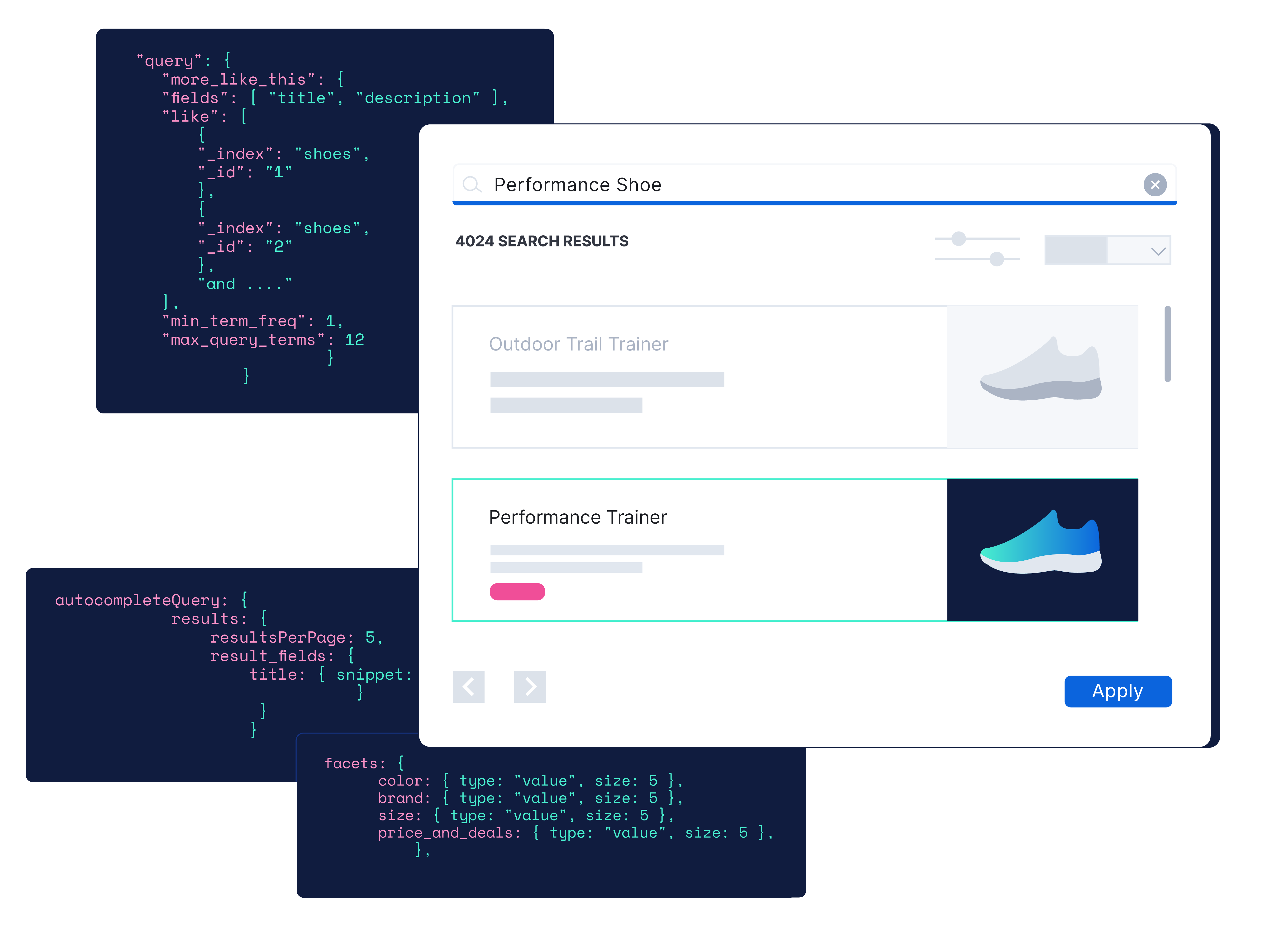

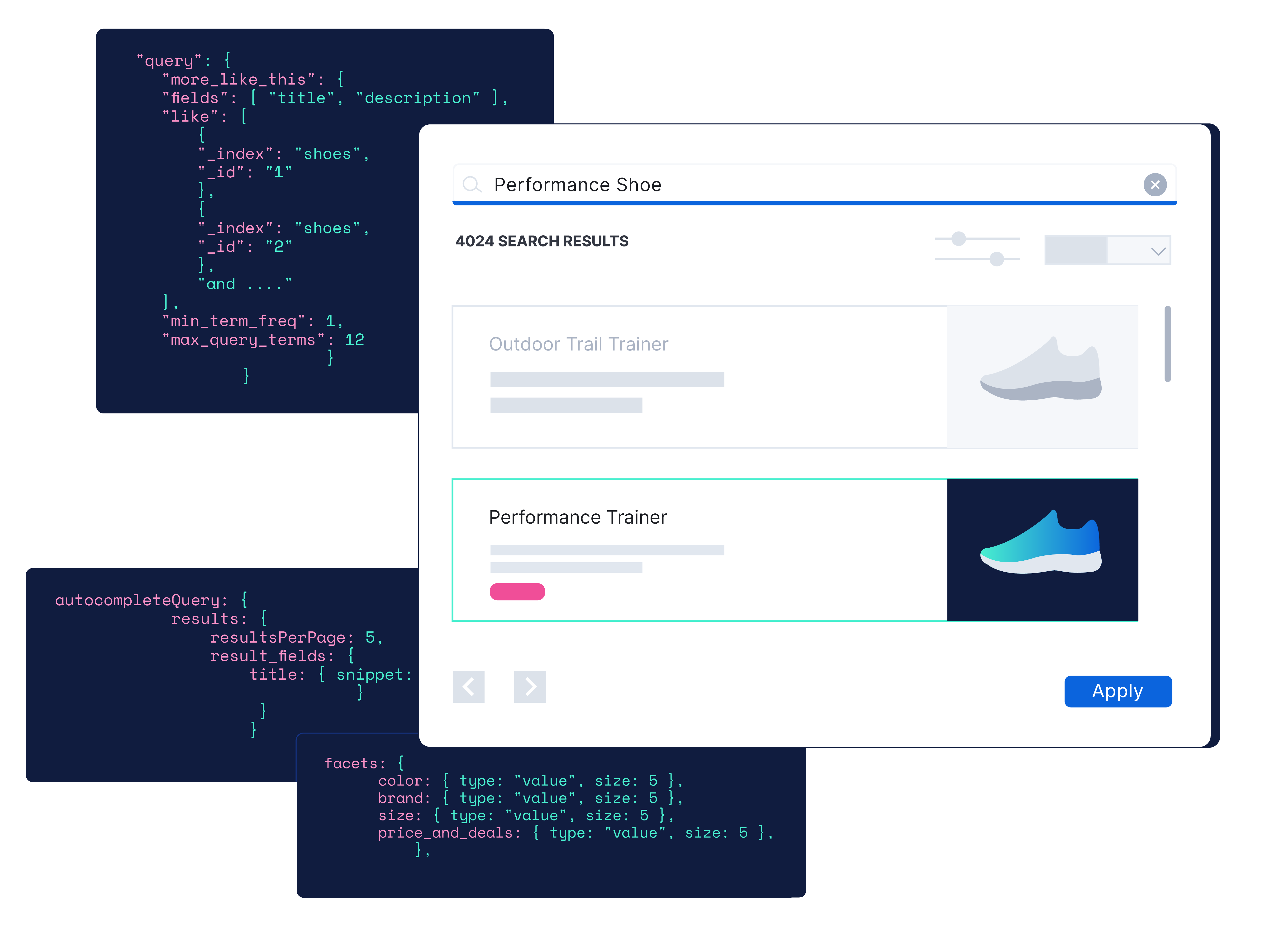

Ecommerce

Control speed and relevance at modern retail scale

Drive product discovery with full control using semantic and vector search, personalization, query rules, and synonyms. Deliver effortless faceted browsing and precise recommendations across structured and unstructured data.

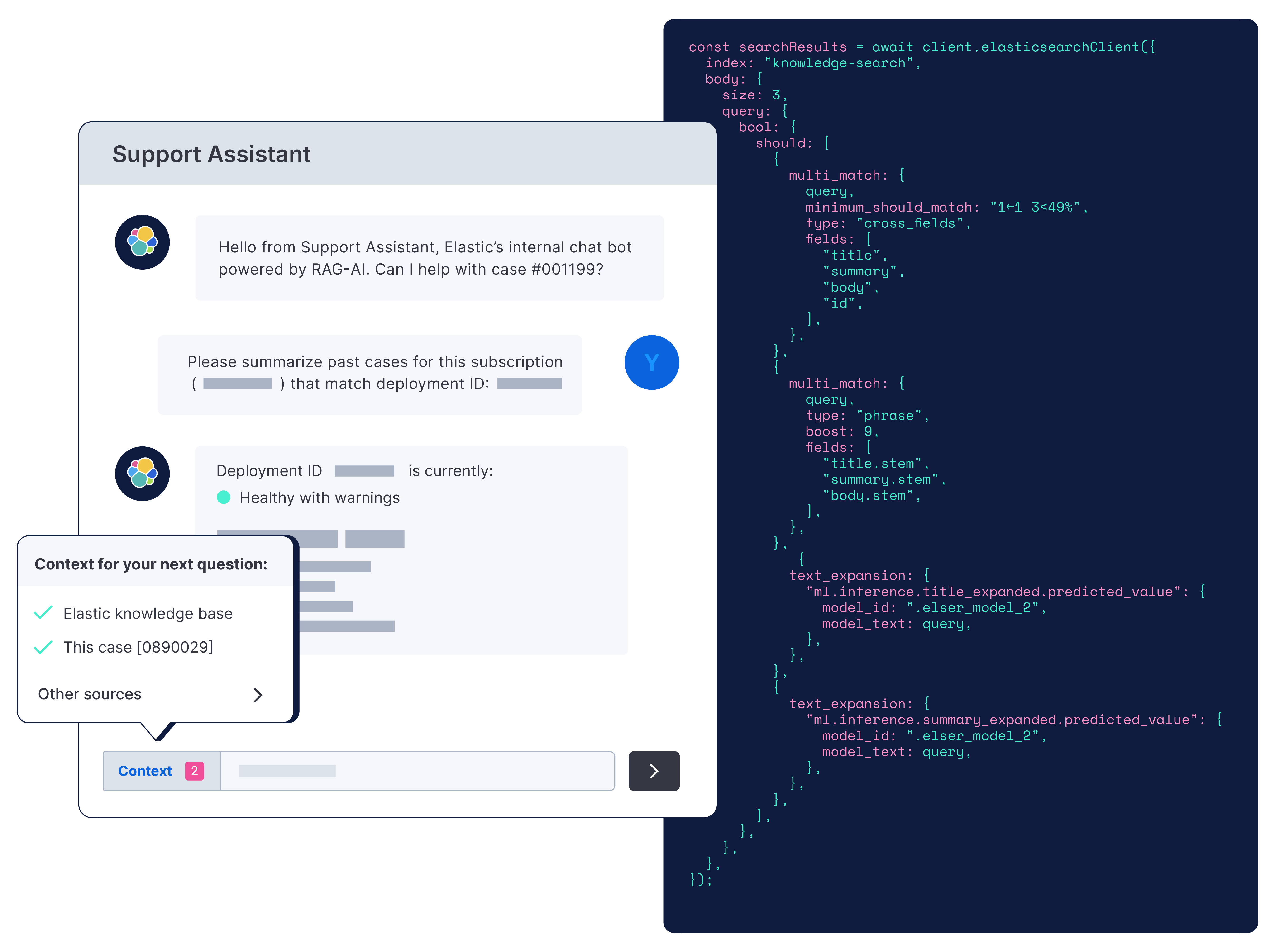

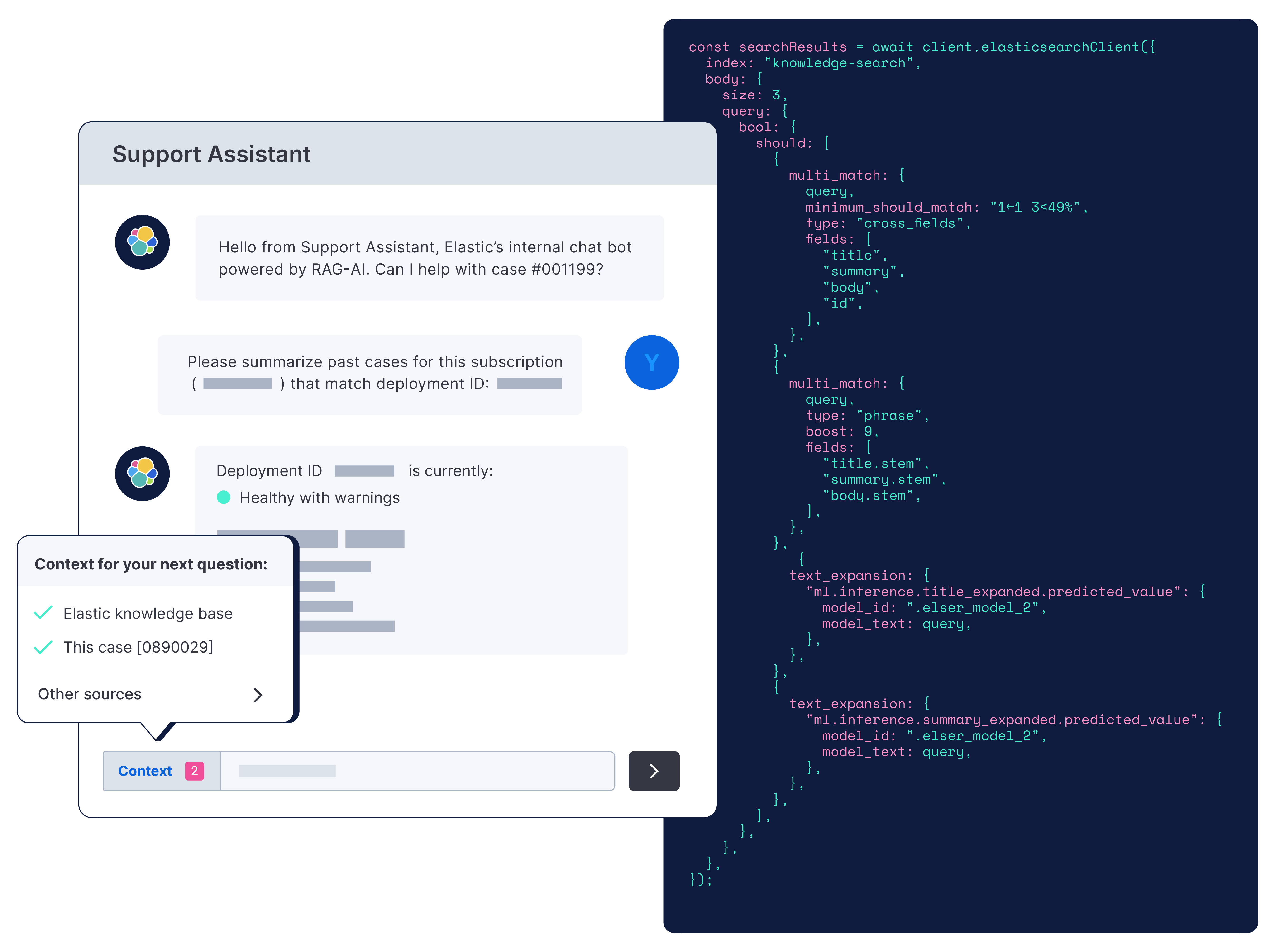

Customer support

Faster answers, greater efficiency

Build apps that deflect cases and drive self-service with semantic search, for on-call internal teams and customer support alike. Surface accurate answers with context from across data silos, secured with RBAC and doc-level controls.

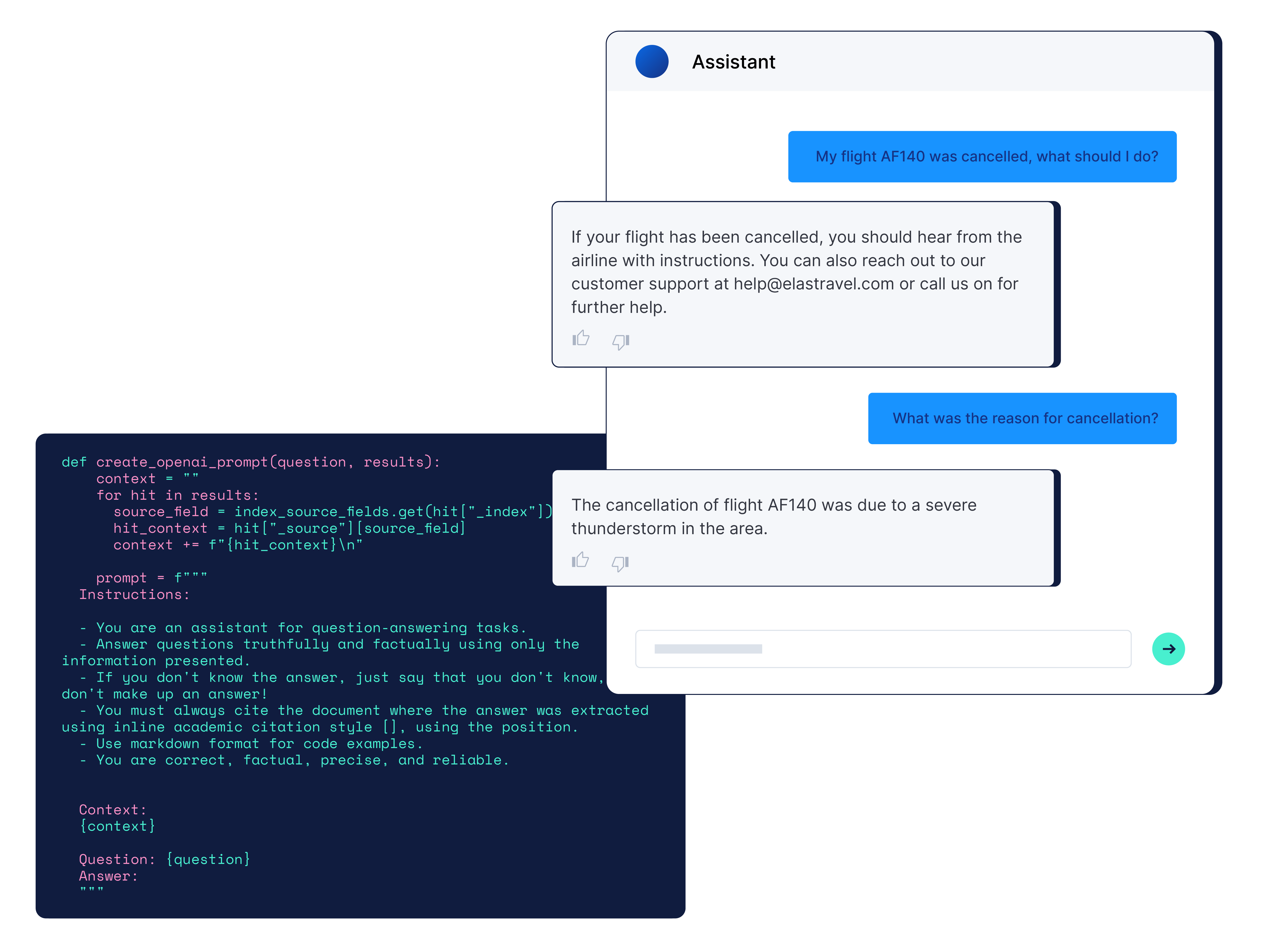

AGENTIC AI

Context shapes relevance — Elasticsearch delivers

You need real-time, domain-specific context from every source and data type to return relevant responses. Build context-aware agents and conversational search experiences with hybrid search, personalization, reranking, and doc-level security across structured data, unstructured data, vectors, and signals.

SEARCH-DRIVEN APPLICATIONS

Smart search, no limits

Every search touchpoint — leveled up with Elasticsearch.

From bare metal to serverless. It's your call.

From a laptop to a hundred‑node cluster, Elasticsearch works the same everywhere. On‑premises, in the cloud, or across clouds — we'll be there.

Elastic Cloud

Built on a new stateless architecture

Hassle-free operations with a fully managed serverless offering — the easiest way to search, monitor, and secure your applications.

Self-Managed

Download Elasticsearch

Install locally to start running Elasticsearch on your machine in just a few steps.

They built it with Elasticsearch

… and shipped fast, relevant, production-ready search.

Customer spotlight

Docusign brings generative AI to customers worldwide.

Customer spotlight

Ernst & Young helps clients mine insights from unstructured data with generative AI.

Customer spotlight

Cypris supports research and development breakthroughs using vector search and RAG.

Frequently asked questions

Is Elasticsearch open source?

Yes, Elasticsearch and Kibana are open source under the AGPL license. Elasticsearch is built on Apache Lucene, and Elastic supports open source projects like OpenTelemetry, Logstash, and Beats. This fosters a community of innovation and collaboration, ensuring Elasticsearch continues to evolve in new and exciting ways. The AGPL license reinforces our open source principles, ensuring security, extensibility, and community-driven progress.

Do I need separate Elastic products for text, vector, and hybrid search?

No. Elastic's BM25 text search algorithm, its scalable vector database, semantic search, and reciprocal rank fusion (RRF) hybrid scoring all come ready to use with Elasticsearch. Elastic even has its own semantic search model, the Elastic Learned Sparse EncodeR (ELSER), that can be used out of the box. Explore Search AI with these interactive hands-on learning modules.
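To make the RRF idea concrete, here is a minimal sketch in plain Python of how reciprocal rank fusion can merge a keyword ranking with a vector ranking. It is an illustration of the general technique, not Elasticsearch's internal implementation. The function name, the document IDs, and the `rank_constant` value of 60 are assumptions chosen for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, rank_constant=60):
    """Fuse several ranked lists of document IDs into one ranked list.

    Each document scores sum(1 / (rank_constant + rank)) over every list
    that contains it, with rank starting at 1.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (rank_constant + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical results from a keyword (BM25) query and a vector query.
bm25_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
for doc_id, score in fused:
    print(f"{doc_id}: {score:.5f}")
```

Documents that rank well in both lists rise to the top, which is why RRF works without having to normalize BM25 scores against vector similarity scores.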

Is Elastic a vector database?

Yes. Elastic is the world’s most used, scalable vector database that lets developers create, store, and search vector embeddings. But that's not all. Elasticsearch also contains everything you need to build outstanding search experiences, including aggregations, filtering and faceting, auto-complete, multiple retrieval methods, and the flexibility to integrate with your own or third-party transformer models.
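As a rough picture of what "search vector embeddings" with filtering means, the sketch below runs an exact nearest-neighbor search with cosine similarity over a tiny in-memory index. Everything here is hypothetical: the `knn_search` helper, the three-dimensional vectors, and the `allowed` filter are stand-ins for illustration. A production vector database would typically use an approximate index rather than scanning every vector.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # Treat zero vectors as dissimilar to everything.
    return dot / (norm_a * norm_b)


def knn_search(index, query_vector, k=2, allowed=None):
    """Return the k most similar documents, optionally pre-filtered by ID."""
    scored = [
        (doc_id, cosine_similarity(query_vector, vector))
        for doc_id, vector in index.items()
        if allowed is None or doc_id in allowed
    ]
    scored.sort(key=lambda item: (-item[1], item[0]))
    return scored[:k]


# Hypothetical embeddings; real ones come from an embedding model.
index = {
    "shoes": [0.9, 0.1, 0.0],
    "boots": [0.8, 0.2, 0.1],
    "laptop": [0.0, 0.1, 0.9],
}

top = knn_search(index, [1.0, 0.0, 0.0], k=2)
filtered = knn_search(index, [1.0, 0.0, 0.0], k=2, allowed={"boots", "laptop"})
```

The `allowed` argument mimics combining a structured filter (for example, a category facet) with vector similarity in one query.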

How does Elasticsearch enable context engineering?

Elasticsearch is built for relevance at scale, which is the foundation of context engineering. It brings together vector, keyword, and structured search with analytics, inference, and observability in a single platform. This makes it easy for developers to store, retrieve, and rank structured and unstructured business data with precision, so agents always get the right context.

With Agent Builder, Elasticsearch takes this further by bringing chat, retrieval, tool creation, and orchestration directly into the platform. Developers can build, test, and scale context-driven agents in minutes using their own data, models, and tools, all supported by Elasticsearch relevance, security, and performance.

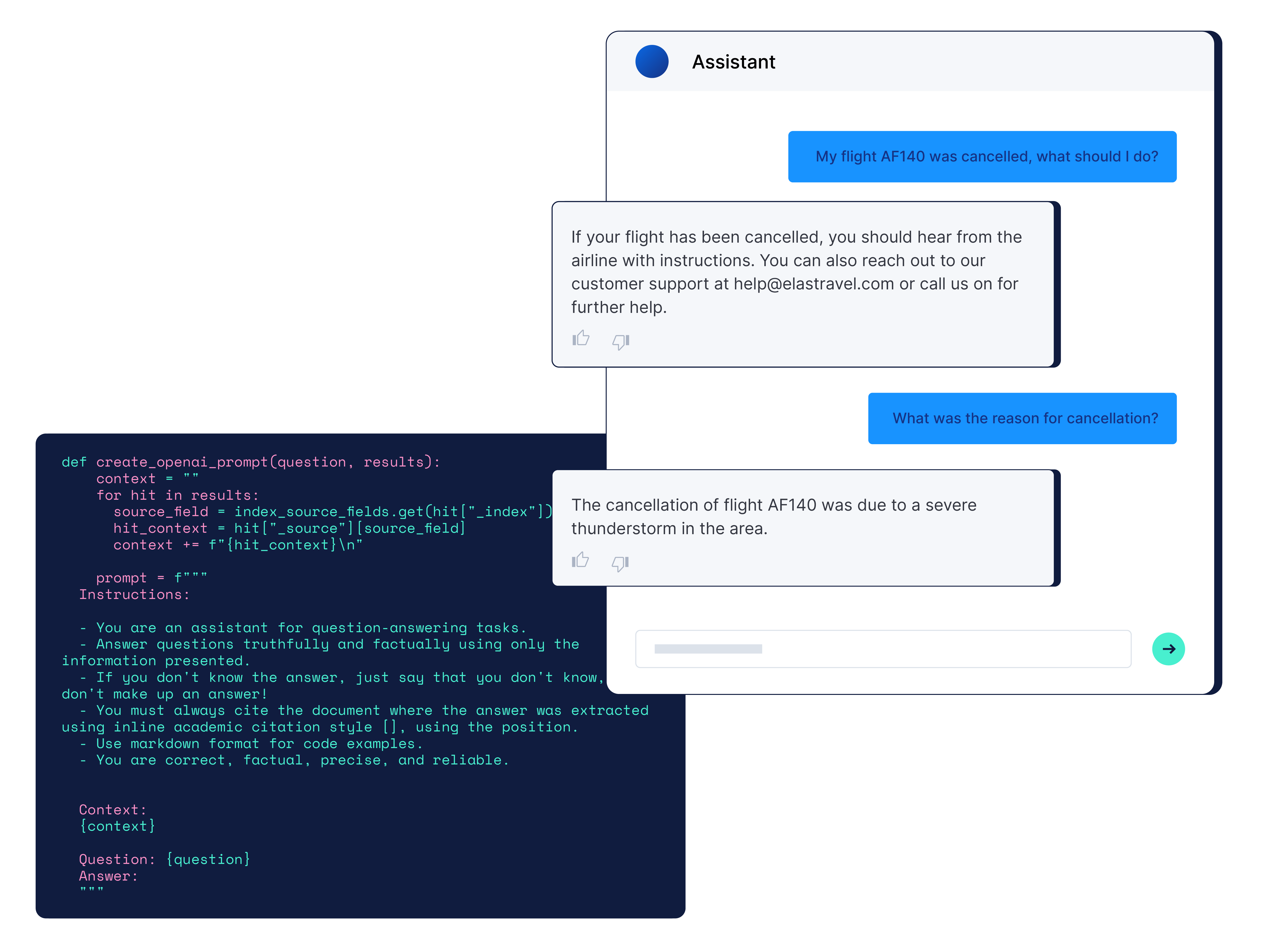

Why do I need a search product if I use a large language model in my app?

You need a search product alongside a large language model because it is a cost- and time-efficient way to get more accurate results from your generative AI experience. Searching over your domain-specific data supplies highly relevant results as additional context, which helps minimize hallucinations and limits the time spent fine-tuning the model. Using retrieval-augmented generation (RAG), Elastic lets you query proprietary data to get more accurate, real-time results while requiring fewer compute and storage resources. Elastic also controls search access with its document-level security.
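The RAG flow described here can be sketched in a few lines: retrieve passages, keep only those the user is allowed to see, and pass the best ones to the model as context. The snippet below is a simplified illustration under stated assumptions. The `build_rag_prompt` function, the hit fields (`text`, `score`, `allowed_roles`), and the sample passages are all hypothetical, and the client-side role check only stands in for access control that a search platform would enforce at query time.

```python
def build_rag_prompt(question, hits, user_roles, max_passages=3):
    """Assemble an LLM prompt from the top passages the user may read."""
    # Stand-in for document-level security: drop hits the user cannot access.
    visible = [hit for hit in hits if hit["allowed_roles"] & user_roles]
    # Keep the highest-scoring passages so the context stays short.
    best = sorted(visible, key=lambda hit: hit["score"], reverse=True)[:max_passages]
    context = "\n".join(
        f"[{number}] {hit['text']}" for number, hit in enumerate(best, start=1)
    )
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


# Hypothetical search hits with per-document access roles.
hits = [
    {"text": "Refunds take 5 days.", "score": 0.90, "allowed_roles": {"support"}},
    {"text": "Internal salary bands.", "score": 0.95, "allowed_roles": {"hr"}},
    {"text": "Refunds go to the original card.", "score": 0.80, "allowed_roles": {"support", "hr"}},
]

prompt = build_rag_prompt("How long do refunds take?", hits, {"support"})
print(prompt)
```

Because the model only sees retrieved, permitted passages, answers can stay grounded in current proprietary data without retraining the model.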

Where can I find code examples for implementing search?

If you're a developer, one of the best places to get technical and practical information about implementing Elastic is Elasticsearch Labs, which features blogs, examples, and tutorials. This resource is created and maintained by the technologists who work at Elastic, for the technologists who use Elastic, to help you learn about the latest in generative AI, vector search, and machine learning research.

What is Search AI Lake?

Elastic's Search AI Lake is optimized for real-time, low-latency applications, making it an ideal architecture for your AI-driven future. It revolutionizes data lakes by offering low-latency querying and the powerful search and AI relevance capabilities of Elasticsearch. Search AI Lake powers a new Elastic Cloud Serverless deployment — removing all operational overhead so your teams can start innovating.