New to Elasticsearch? Join our getting started with Elasticsearch webinar. You can also start a free cloud trial or try Elastic on your machine now.

Part 1 in this blog series established why ecommerce search needs a governance layer between the user's query and the retrieval engine that classifies intent, enforces business constraints, and routes to the appropriate retrieval strategy. The natural next questions are: Who operates that layer, and how fast can they move?

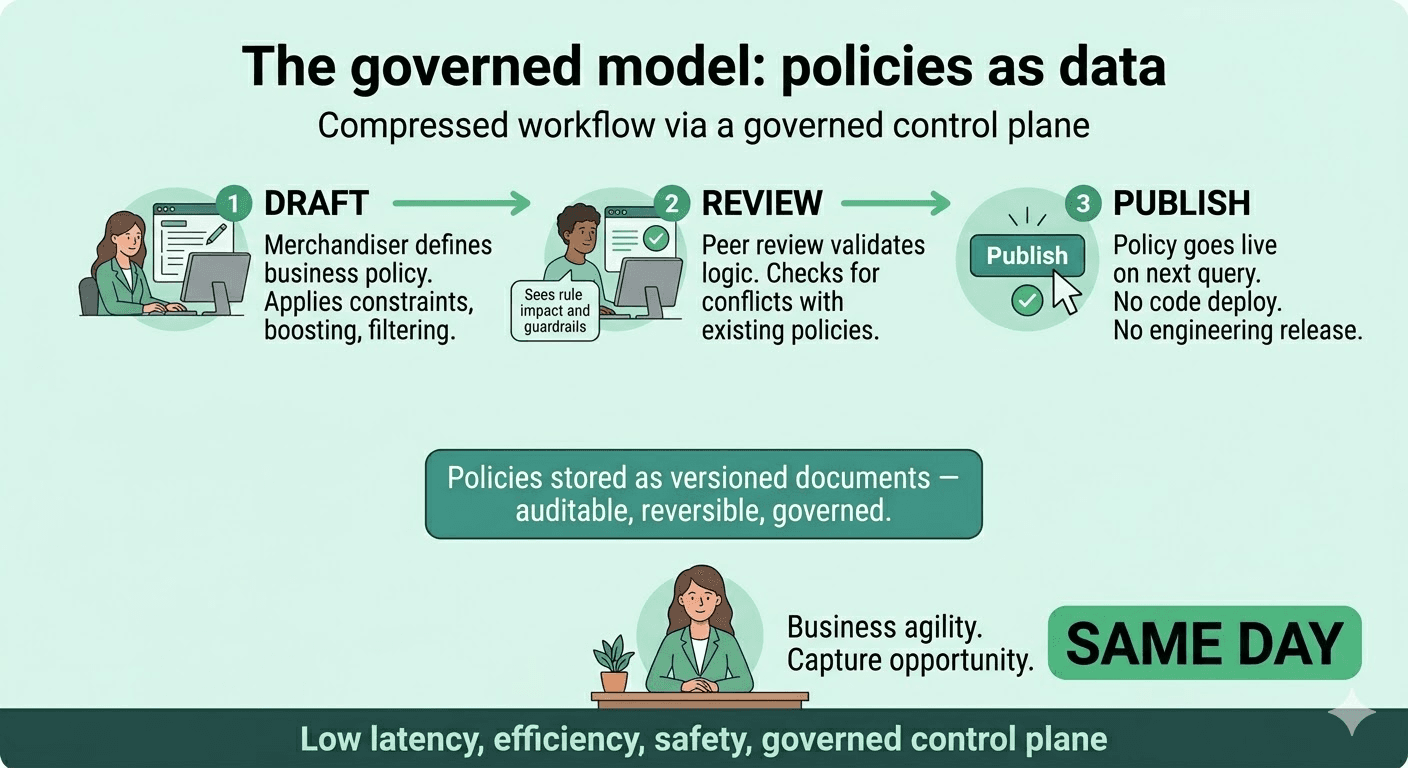

This post answers those questions. A governed control plane doesn't just improve search relevance; it changes the operating model. It moves search behavior changes from engineering deployment cycles to business-driven workflows, without sacrificing safety or accountability.

The scenario that exposes the operating model

Imagine that you’re in the weeks leading up to Christmas, and your merchandising team has identified three urgent changes that must immediately be made to search behavior:

- Campaign launch. Due to an ordering error, there’s an oversupply of in-house branded turkeys. Therefore any query for "turkey" must boost the in-house brand.

- Product recall. A supplier has recalled a product line. Queries that would surface those products shouldn’t be shown.

- Seasonal reinterpretation. Queries for "stocking" are returning women's hosiery and tights. During the holiday season, "stocking" should resolve to Christmas stockings and stocking stuffers. Once the season ends, the policy can be reverted in minutes.

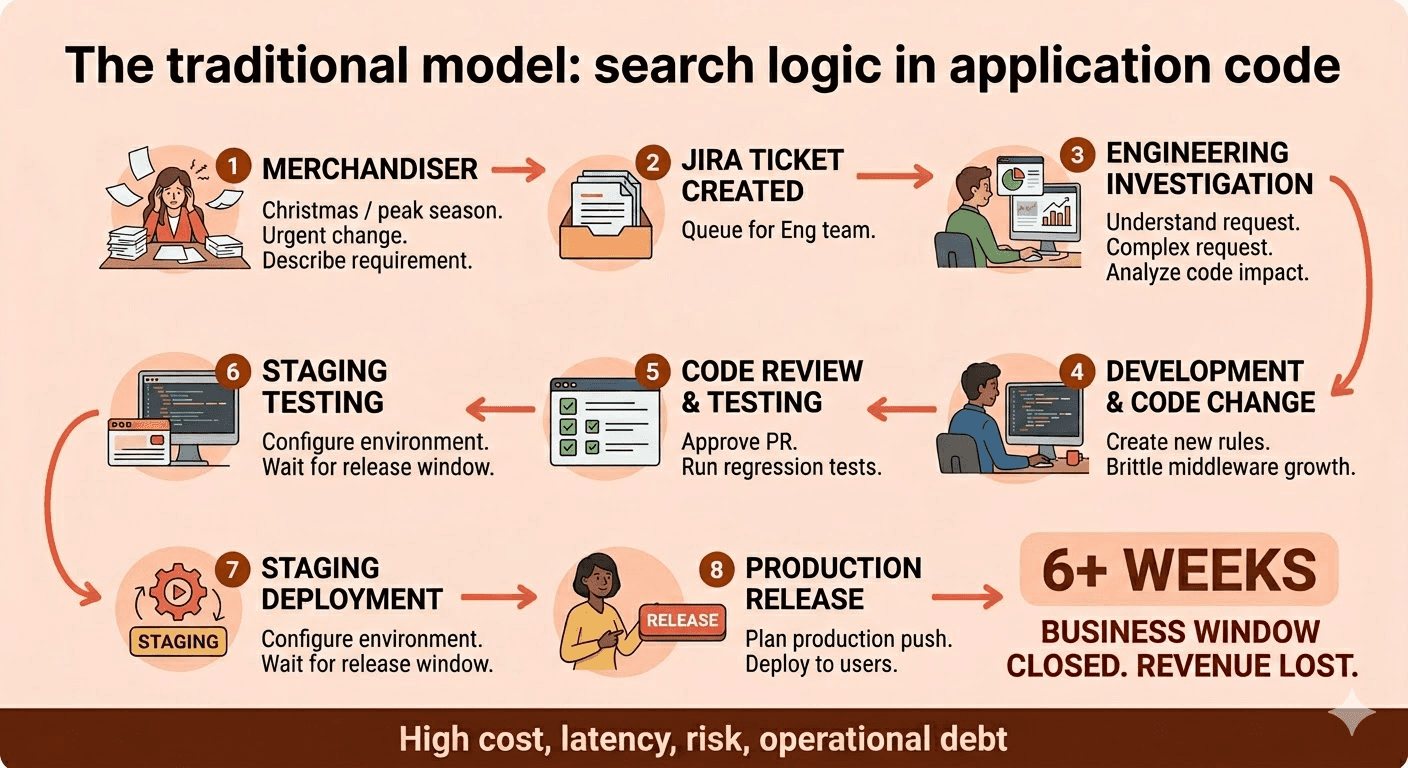

Under the traditional operating model, where search logic is embedded in application code, each of these changes requires an engineering ticket, a code change, a review cycle, a staging deployment, and a production release. In organizations with conservative release processes, that's a timeline measured in weeks, not hours or minutes. The Christmas shopping window closes before engineering can ship the necessary modifications.

The bottleneck isn’t the retrieval engine; it’s the operating model. The core challenge is that business intent cannot be translated into search behavior without engineering acting as a constant intermediary, turning every strategic pivot into a technical ticket.

The anti-pattern: Search logic in application code

Part 1 described how search logic embedded in application code can turn into a "spaghetti" implementation, which creates operational friction. Here’s what that friction looks like at scale. What starts as a few targeted overrides, a filter here, a boost there, grows over time into tens of thousands of lines of if/else branching, regex patterns, and conditional query modifications. This creates problems beyond just technical debt:

This model introduces four systemic frictions that hinder both organizational speed and system scalability:

Coupling. Business strategy changes daily. Application infrastructure should remain highly stable. When both live in the same codebase, a merchandiser's request to boost a seasonal product becomes a deployment risk, and a scoring function refactor can silently break a campaign.

Latency (organizational and computational). A single query behavior change can require a six-week deployment cycle: ticket, investigation, code change, review, staging, release. Furthermore, the application layer lacks any indexing mechanism to efficiently determine which policies apply to a given query, so policy evaluation often adds meaningful latency at query time as the system walks through sequential if/else checks.

Accountability gaps. When results change unexpectedly, nobody can quickly answer why. Was it a synonym update? A scoring change? A new filter added three releases ago? When business logic is distributed across thousands of lines of application code, shipped by different teams across different releases, tracing a relevance change back to its root cause becomes an archaeology project.

Misallocated engineering. This model turns skilled software engineers into full-time relevance mechanics. Instead of building platform capabilities, they spend their cycles translating merchandising requests into code changes and debugging interactions and conflicts between hard-coded business policies.

The paradigm shift: Policies as data

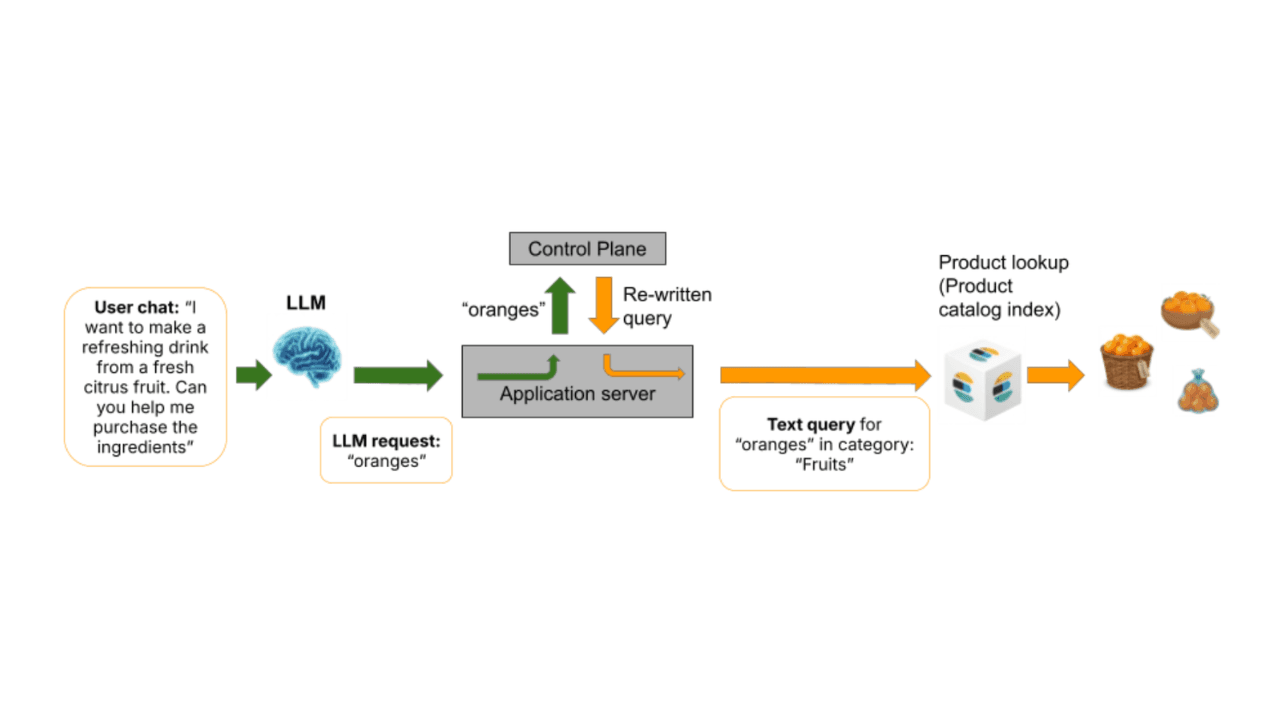

The solution is to decouple business policies from application code entirely. Instead of hard-coding query modifications in middleware, store governed policies as structured documents, each one expressing a discrete business intent, and evaluate them at query time in a dedicated governed control plane layer.

A policy is a first-class data object. It has match criteria (when should this policy fire?), an action (what should it do?), a priority (how does it interact with other policies?), and metadata (a title and a description). The control plane evaluates matching policies, resolves conflicts deterministically, and produces an execution plan including constraints, boosts, and routing decisions that Elasticsearch executes against a product catalog.

For each additional search requirement, the application code doesn't change. The retrieval engine doesn't change. What changes is that business decisions are no longer encoded in code. They live in a policy index as data that can be updated without a deployment.

This changes your org chart, not just your query.

Policies vs. triggers vs. rules

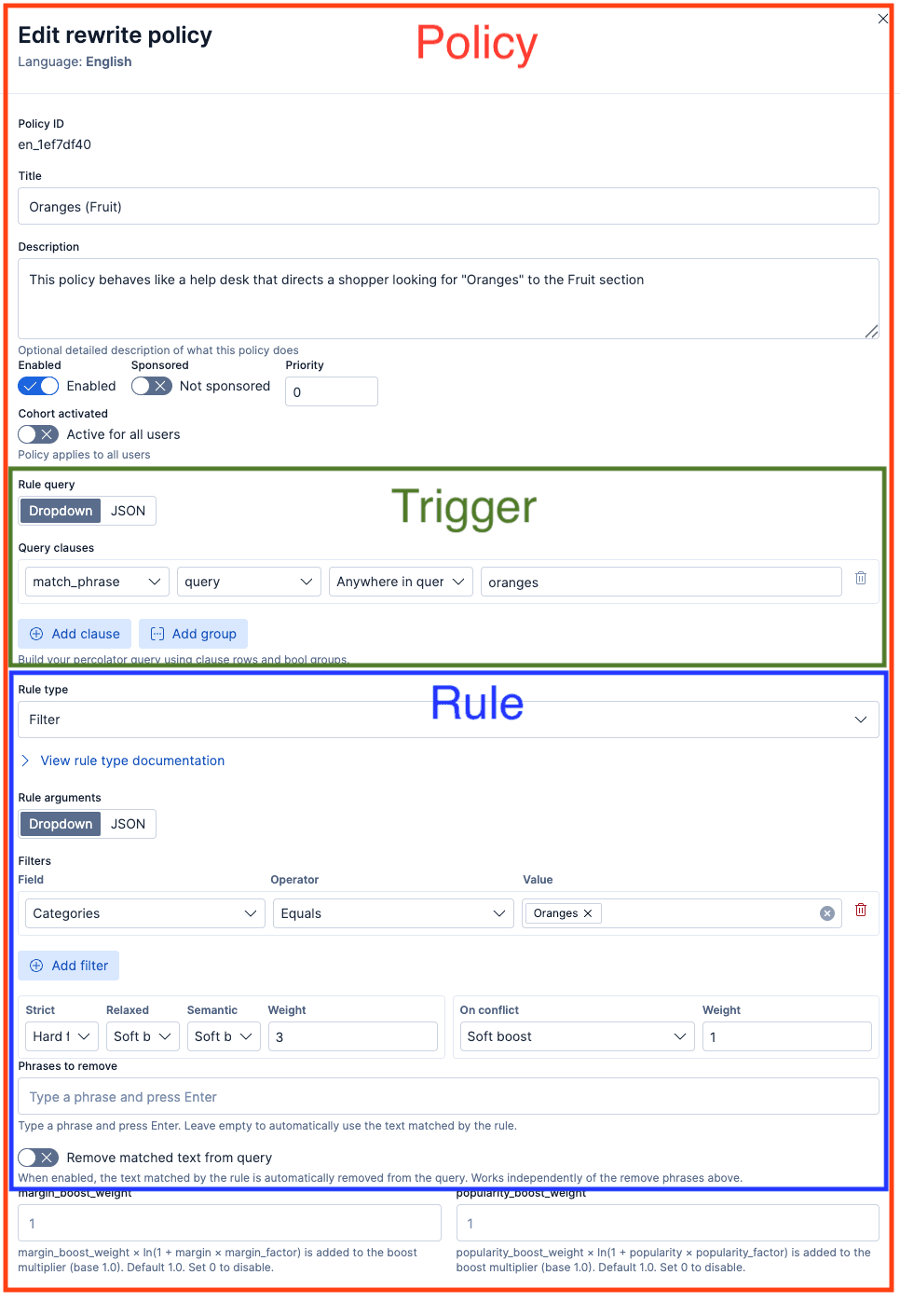

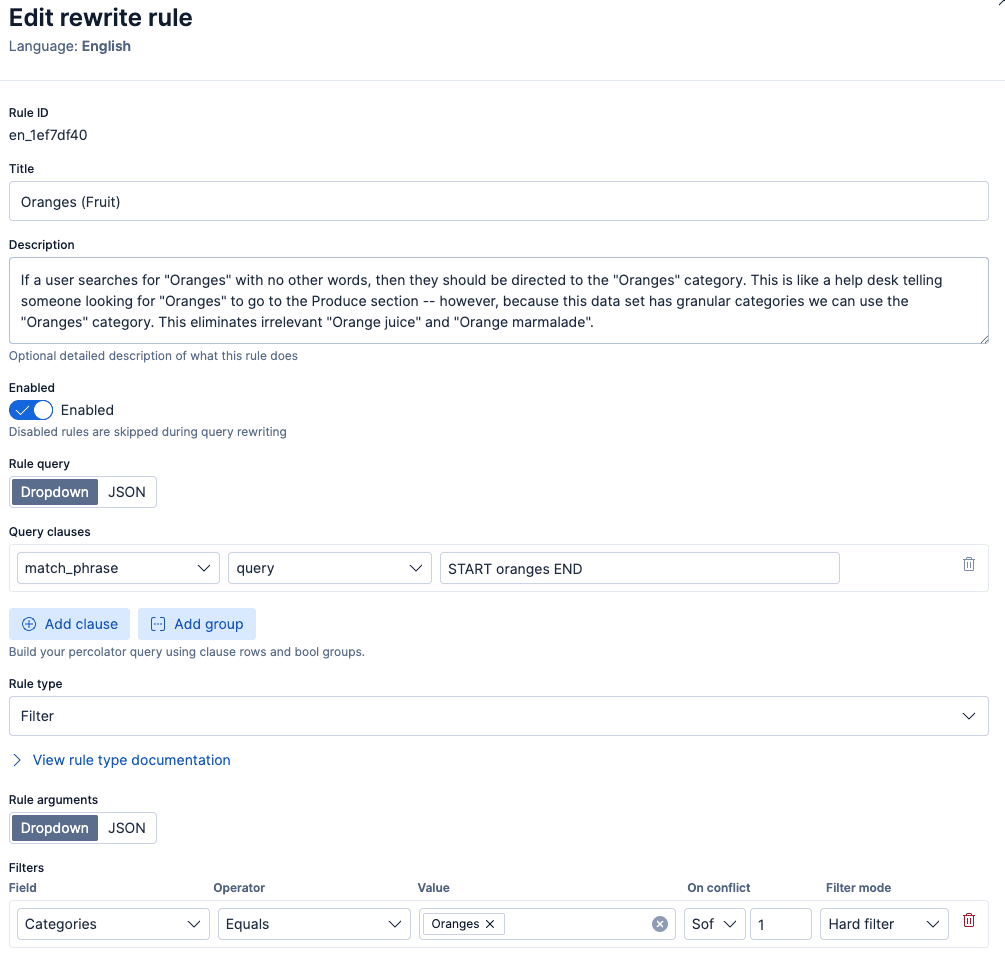

A note on terminology used in this series: a policy refers to this complete governed document, including a trigger (match criteria), rule (action), priority, enabled/disabled, and metadata. A trigger refers to the matching criteria that determines when this policy fires, and a rule refers specifically to the action inside the policy, such as applying a filter or changing the retrieval strategy.

The workflow: Author → Test → Promote

Moving policies out of code and into data opens the door for business-driven search management. But enabling non-technical teams to alter search behavior requires strict operational guardrails. The goal is fast and safe iteration with governance.

To empower non-technical teams to modify search behavior with confidence, we suggest a three-stage workflow: Author, Test, and Promote. Let’s examine the components of this workflow in detail.

Author. A merchandiser creates a policy using structured fields: what the policy should match, what action it should take, and at what priority. The interface guides the business user through what’s expressible.

Elastic Services has built and deployed a governed framework for enterprise ecommerce customers, which has an admin UI that looks as follows:

Test. The policy is validated in a non-production environment where the merchandiser can run representative queries and verify that the policy produces the expected behavior, including how it interacts with other active policies. Because the control plane infrastructure is identical across environments, what works in the test environment will work in production.

Review. Before a policy is promoted to production, it passes through review. Depending on the organization's risk tolerance, this might be a peer review from another merchandiser, an approval from a search lead, or an automated validation that checks for conflicts with existing policies.

Promote. Once approved, the policy is promoted to the production policy index. It takes effect on the next query: no code deployment, no engineering release, no staging build. The entire promotion is a data operation: the same JSON document, moved to a different index.

Disable. If a production policy produces unexpected behavior, it can be disabled immediately without engineering involvement. Disabling removes the policy from query evaluation instantly, without affecting any other policy in the system.

This is the "zero-deploy" promise. It doesn't mean "no process." It means the process operates on policy data, not application code. This distinction compresses the change cycle from weeks to hours or minutes.

Why "zero-deploy" matters for revenue-critical queries

The economics of ecommerce search are asymmetric. A small number of high-volume queries ("milk," "bread," "oranges," "diapers") drive a disproportionate share of revenue. When one of these queries returns unexpected results, the cost is immediate and measurable: Conversion drops, customer complaints spike, and the merchandising team opens an urgent ticket.

Under the traditional model, the response cycle is:

- The merchant notices the problem.

- The merchandiser files a ticket with engineering.

- Engineering investigates, identifies the cause, and writes a fix.

- The fix goes through code review, staging, and release.

- Production is updated.

Depending on the organization, steps 2 through 5 may take weeks. For a revenue-critical query during a peak sales period, that latency costs money.

Under a governed control plane, the response cycle compresses:

- The merchant notices the problem.

- The merchandiser drafts a policy fix (or modifies an existing policy).

- The policy goes through review and is published.

- The fix is live.

The difference isn't just speed. It's ownership. The person closest to the business context (the merchandiser who understands why "oranges" should resolve to produce, not beverages) is the person making the change. Engineering is freed from the daily merchandising loop to focus on the platform. This shift also unlocks something that's nearly impossible under the traditional model: attributing search performance changes to specific business decisions.

Measurability: Which policy moved conversion

When policies are discrete, versioned documents that are stored in an Elasticsearch index, each one becomes independently deployable and therefore its impact can be more easily measured. You can answer questions that are nearly impossible to answer when business logic is scattered across application code:

- Did the "cheap laptops" price threshold policy improve conversion for that query class, or did it suppress it?

- What was the click-through rate impact of the holiday campaign boost?

- When we rolled back the "oranges" category constraint last Thursday, what happened to add-to-cart rates?

This turns search governance into a data-driven discipline. Instead of vague "relevance tuning," where a release contains a dozen changes and nobody can attribute the outcome, you get measurable, attributable impact per policy. Merchandisers can iterate with evidence. Engineers can evaluate whether a policy schema change produced the expected downstream effect. Leadership can see which policies are driving revenue and which are inert.

What this means for each role

For merchandisers and business users

Search behavior becomes something you can directly influence through structured policies without understanding Elasticsearch syntax or scoring algorithms. You can see what policies are triggered for a given query to understand why it produces specific results, and make changes within hours instead of weeks. The same policy mechanism also supports sponsored product placement: A merchandiser can create a boost policy that elevates a product or brand and flags it for a 'Sponsored' indicator in the UI, without requiring engineering involvement or additional infrastructure.

For search engineers

The control plane separates two concerns that are currently entangled: retrieval optimization and business logic. Instead of maintaining tens of thousands of lines of application code that encodes business decisions, you maintain the retrieval engine and the control plane infrastructure. When a merchandiser needs a new campaign boost, they don't need engineering to write it.

This doesn't eliminate engineering involvement. Engineers design the policy schema, maintain the control plane, set guardrails on what policies can express, add new capabilities as required, and handle edge cases that fall outside the policy framework. But the day-to-day operational cadence of modifying query behavior shifts to the people who own the business context.

For site reliability engineers and platform teams

Because policies are structured documents rather than application code, they fit naturally into existing operational workflows. Policies can be stored in version control, reviewed through pull requests, and deployed through the same continuous integration and continuous deployment (CI/CD) pipelines the team already uses. Conflicts between policies are detected and resolved deterministically at query time through the control plane's priority system, not through unpredictable interactions between code branches shipped in different releases.

When something does go wrong, diagnosing the cause is straightforward: Policies are discrete, named, and individually toggleable. A problematic policy can be disabled or deleted immediately without affecting anything else in the system. Compare that to debugging a relevance regression caused by an interaction between a synonym update, a scoring function change, and a new analyzer, all shipped in the same release with no clear attribution.

Beyond manual authoring: Large language model–assisted (LLM-assisted) policy suggestions

The policies described so far are authored by humans (a merchandiser identifying a gap and drafting a fix). But the same governed workflow supports a second mode: LLM-assisted policy suggestion.

An LLM can run offline or in the background, analyzing query logs, identifying patterns where search results underperform, such as queries with high exit rates, low click-through, or frequent reformulations. An LLM can then suggest new policies that enter the same Author → Test → Promote pipeline, where a human evaluates each one before it reaches production.

Governance is the enabler, not the constraint

It might seem counterintuitive: Adding a governance layer makes the system faster to change, not slower. This is the same pattern that works in other domains. CI/CD pipelines don't slow down software delivery; they make it safe to ship frequently. Access control doesn't slow down collaboration; it makes it safe to share broadly.

A governed control plane works the same way. The reason a query behavior change takes six weeks isn't that the code change is complex; it's that nobody is confident enough to ship it faster, because the blast radius is unclear and the rollback path is uncertain.

Governance provides that confidence. When every policy is explicit, every conflict is resolved deterministically, and every change can be instantly disabled and then rolled back (because policies are structured JSON documents that can be version controlled using existing workflows), the cost of iteration drops dramatically. Business teams move at the speed of the market. Engineering focuses on the platform.

From operating model to architecture

The shift from business logic in code to business policies as data is more than a technical refactoring; it's an organizational change that puts relevance ownership with the teams closest to the business context. But it raises an architectural question: How do you evaluate policies at query time without adding latency or turning the control plane itself into a new form of spaghetti?

The next post will dig into exactly that: the design pattern that enables fast, deterministic policy evaluation at query time.

Put governed ecommerce search into practice

The workflow described here, merchandisers authoring, testing, and promoting search policies without engineering deployments, is already available. Elastic Services Engineering designed and built it, and Elastic Services has the skills to deploy it for enterprise ecommerce teams.

If your organization is ready to move from deployment-gated relevance tuning to business-editable search with governance and auditability, we can accelerate your implementation. Contact Elastic Professional Services.

Join the discussion

Have questions about search governance, retrieval strategies, or ecommerce search architecture? Join the broader Elastic community conversation.