
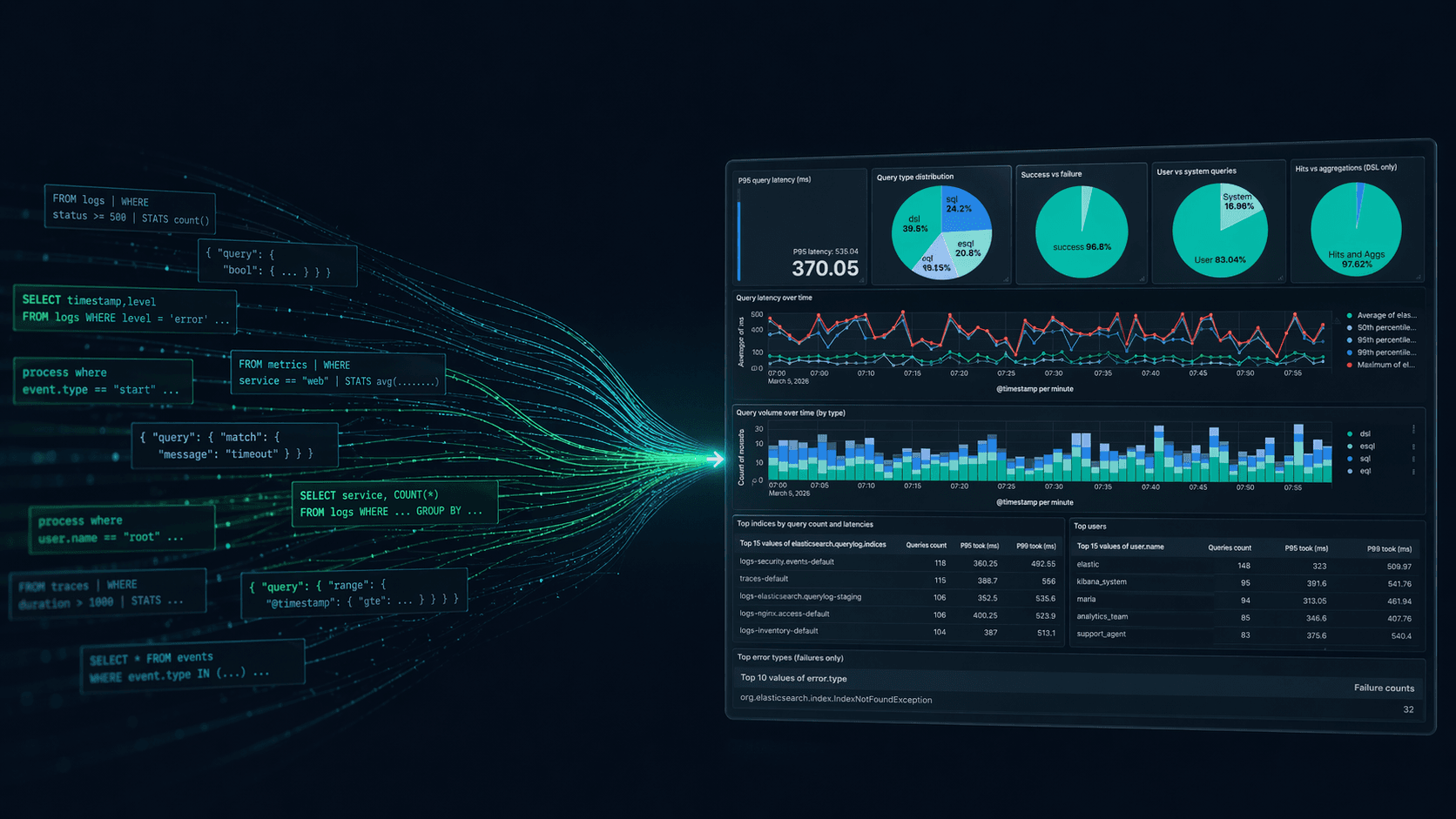

Elasticsearch runtime fields solve the problem of computing values at query time without reindexing. But they come with Painless scripting complexity and performance costs that scale with document count. Elasticsearch Query Language (ES|QL) offers a more powerful alternative with a dedicated execution engine, pipeline processing, and no scripting required. In this article, you’ll learn how to map five common runtime field patterns to their ES|QL equivalents, so you can modernize your queries and understand when each approach makes sense.

Prerequisites

- Elasticsearch 8.15+ (for `::` cast operator support; core ES|QL features are available from 8.11)

Runtime fields versus ES|QL

Runtime fields were introduced in Elasticsearch 7.11 as a way to define fields at query time. Instead of reindexing data, you could write a Painless script that computes values on the fly:
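
As a hedged illustration of that idea, the request below defines a runtime field inline in a search. The index name `my-index` and the field name `day_of_week` are assumptions made for this sketch; it only presumes that the index has a `@timestamp` date field.

```console
# Sketch: compute the weekday name from @timestamp at query time.
# "my-index" and "day_of_week" are hypothetical names.
GET my-index/_search
{
  "runtime_mappings": {
    "day_of_week": {
      "type": "keyword",
      "script": {
        "source": "emit(doc['@timestamp'].value.dayOfWeekEnum.getDisplayName(TextStyle.FULL, Locale.ENGLISH))"
      }
    }
  },
  "fields": ["day_of_week"],
  "_source": false
}
```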

This works, but comes with trade-offs:

- Painless scripting overhead: Every runtime field requires scripting knowledge, and the syntax is Java-like, not query-like.

- Performance cost: Runtime fields are evaluated per document at query time. Elasticsearch treats queries on them as expensive queries, which are rejected when the cluster setting search.allow_expensive_queries is set to false.

- Isolated computation: Each runtime field computes independently. There’s no way to chain transforms or use the output of one field in another within the same query.

ES|QL changes the equation. It has its own execution engine (not translated to Query DSL), runs queries concurrently across nodes, and provides a complete toolkit for field computation: EVAL, GROK, DISSECT, type casting, and pipeline chaining.

Let's see how each runtime field pattern maps to ES|QL.

Setting up the example data

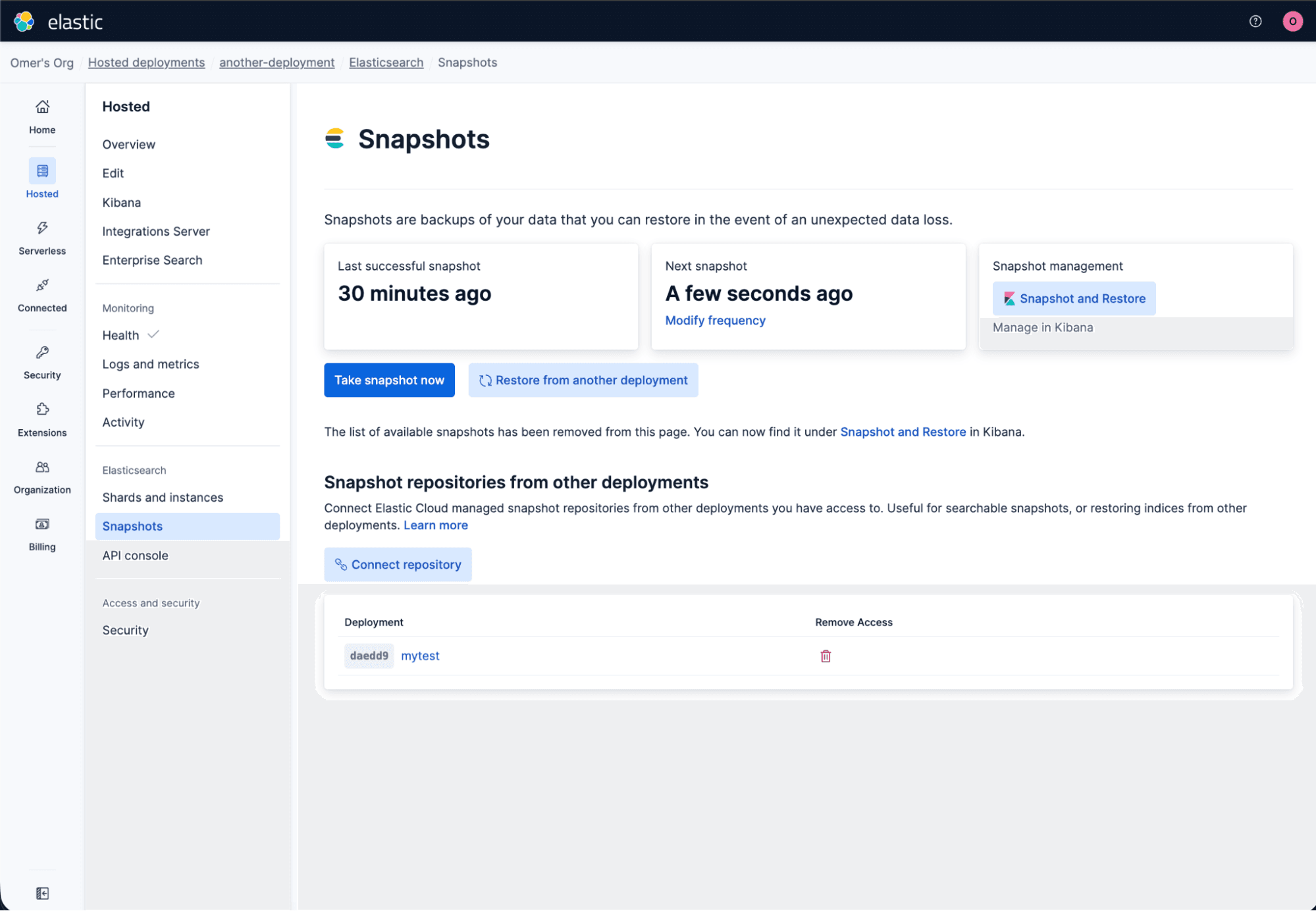

All the code snippets in this article can be executed in the Kibana Dev Tools console.

To follow along, create a sample index with data that exercises all five patterns. This simulates a server logs scenario with mixed field types, raw messages, and some intentional data quality issues:
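
A minimal sketch of such an index might look like the following. The index name `server-logs` and the exact field list (including `message`) are assumptions for illustration. Setting `"dynamic": false` is also an assumption, chosen so that any extra field sent with the documents stays in `_source` without being mapped.

```console
# Sketch: hypothetical server logs index.
# response_time is deliberately mapped as keyword even though it holds numbers.
PUT server-logs
{
  "mappings": {
    "dynamic": false,
    "properties": {
      "@timestamp":    { "type": "date" },
      "host":          { "type": "keyword" },
      "port":          { "type": "integer" },
      "region":        { "type": "keyword" },
      "response_time": { "type": "keyword" },
      "message":       { "type": "keyword" }
    }
  }
}
```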

Now index some sample documents:
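
One possible set of documents is sketched below. It assumes a hypothetical index named `server-logs` with `host`, `port`, `region`, `response_time`, and `message` fields. All values, the `key=value` message layout, and the extra `trace_id` field are made up for illustration.

```console
# Sketch: four illustrative log documents (all values are invented).
# The third document carries a non-numeric response_time, the fourth an upper-case region.
POST server-logs/_bulk?refresh=true
{ "index": {} }
{ "@timestamp": "2024-06-01T10:00:00Z", "host": "web-01", "port": 8080, "region": "us-east", "response_time": "120", "trace_id": "a1b2", "message": "INFO GET /api/users duration=120" }
{ "index": {} }
{ "@timestamp": "2024-06-01T10:01:00Z", "host": "web-02", "port": 8080, "region": "eu-west", "response_time": "2300", "trace_id": "c3d4", "message": "ERROR POST /api/orders duration=2300" }
{ "index": {} }
{ "@timestamp": "2024-06-01T10:02:00Z", "host": "web-03", "port": 9090, "region": "us-east", "response_time": "not_available", "trace_id": "e5f6", "message": "WARN GET /api/health duration=45" }
{ "index": {} }
{ "@timestamp": "2024-06-01T10:03:00Z", "host": "web-01", "port": 8443, "region": "US-EAST", "response_time": "1850", "trace_id": "g7h8", "message": "ERROR GET /api/payments duration=1850" }
```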

Notice that response_time is stored as a keyword (a common real-world mistake), and the last document has "US-EAST" instead of "us-east" (a data quality issue we’ll fix later).

Pattern 1: Field concatenation

A common runtime field use case is combining two fields into one. For example, creating a host:port identifier.

The runtime field approach

You can define it inline at query time. This scopes the field to a single search request and avoids modifying the mapping, but you still need Painless scripting:
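
A sketch of the query-time variant, assuming a hypothetical `server-logs` index in which `host` is a keyword and `port` is an integer (the index name and mappings are assumptions):

```console
# Sketch: build a host:port identifier for this search request only.
GET server-logs/_search
{
  "runtime_mappings": {
    "endpoint": {
      "type": "keyword",
      "script": {
        "source": "emit(doc['host'].value + ':' + doc['port'].value)"
      }
    }
  },
  "fields": ["endpoint"],
  "_source": false
}
```

This short script assumes every document has both fields; a production script would first check `doc['host'].size()` and `doc['port'].size()`.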

The ES|QL approach

You can run ES|QL queries using the _query API endpoint:
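
A minimal sketch of the equivalent request, assuming the same hypothetical `server-logs` index with a keyword `host` and an integer `port`. Because `CONCAT` takes string arguments, the port is converted with `TO_STRING` first.

```console
# Sketch: the same host:port identifier, computed with EVAL and CONCAT.
POST _query
{
  "query": """
    FROM server-logs
    | EVAL endpoint = CONCAT(host, ":", TO_STRING(port))
    | KEEP host, port, endpoint
    | LIMIT 10
  """
}
```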

Response:

CONCAT accepts two or more arguments and always returns a keyword.

Note: For brevity, the remaining ES|QL examples in this article show just the query. Wrap them in POST _query { "query": "..." } to run them in Kibana Dev Tools.

When to use

If you need endpoint to persist across all queries and be available in Kibana dashboards, use a mapping-level runtime field. If you need it for a single search request within Query DSL, use a query-time runtime field. If you need it for ad-hoc analysis or exploratory work, ES|QL is simpler.

Pattern 2: Data extraction from unstructured text

Extracting structured data from raw log messages is another classic runtime field pattern.

The runtime field approach

Painless uses Java's regex Matcher class:
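
A sketch of that approach, assuming a hypothetical `server-logs` index whose `message` field is a keyword holding lines such as `ERROR GET /api/orders duration=2300`. The index, the message layout, and the `duration_ms` field name are illustrative assumptions.

```console
# Sketch: pull the numeric duration out of the raw message with a Painless regex.
GET server-logs/_search
{
  "runtime_mappings": {
    "duration_ms": {
      "type": "long",
      "script": {
        "source": """
          // Nothing to parse when the document has no message
          if (doc['message'].size() == 0) { return; }
          // Regex literals are written between slashes in Painless
          Matcher m = /duration=(\d+)/.matcher(doc['message'].value);
          if (m.find()) {
            emit(Long.parseLong(m.group(1)));
          }
        """
      }
    }
  },
  "fields": ["duration_ms"],
  "_source": false
}
```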

This is verbose. You need to know Painless regex syntax, handle the Matcher object, and call emit() correctly.

The ES|QL approach: GROK

ES|QL provides two purpose-built commands for text extraction. GROK uses regex-based patterns:
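
A hedged sketch of a GROK query, assuming a hypothetical `server-logs` index whose `message` values look like `ERROR GET /api/orders duration=2300` (the index name, message layout, and extracted column names are assumptions):

```esql
// Sketch: extract four columns from the raw message in one command
FROM server-logs
| GROK message "%{LOGLEVEL:level} %{WORD:method} %{URIPATH:path} duration=%{NUMBER:duration}"
| KEEP message, level, method, path, duration
| LIMIT 10
```

Written this way, `duration` comes out as a keyword column, so it still needs a conversion before any numeric comparison.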

Response:

GROK uses the %{SYNTAX:SEMANTIC} pattern format and extracts multiple fields in a single, readable command.

The ES|QL approach: DISSECT

For structured data with consistent delimiters, DISSECT is faster because it doesn’t use regular expressions:
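
A sketch of the DISSECT version, under the same assumptions: a hypothetical `server-logs` index whose `message` values follow the fixed layout `LEVEL METHOD PATH duration=N`.

```esql
// Sketch: split the message on literal delimiters (spaces and "duration=")
FROM server-logs
| DISSECT message "%{level} %{method} %{path} duration=%{duration}"
| KEEP message, level, method, path, duration
| LIMIT 10
```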

The syntax is nearly identical to GROK, but DISSECT works by splitting on delimiters rather than matching regex patterns. This makes it faster for data that follows a consistent format.

When to use GROK vs DISSECT

Use DISSECT when your data has a predictable structure (same delimiters, same field order). Use GROK when you need regex flexibility, for example when fields may be optional or formats vary.

Pattern 3: Dynamic type conversion

When a field is mapped as keyword but contains numeric data (a surprisingly common scenario), runtime fields can cast it at query time.

The runtime field approach

You need to handle parsing exceptions manually. If Long.parseLong fails on an unexpected value, the script throws an error.
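
The manual handling described above can be sketched as follows, assuming a hypothetical `server-logs` index where `response_time` is a keyword (the index name and the `response_ms` field name are assumptions for this sketch):

```console
# Sketch: cast a keyword to long at query time, skipping values that do not parse.
GET server-logs/_search
{
  "runtime_mappings": {
    "response_ms": {
      "type": "long",
      "script": {
        "source": """
          if (doc['response_time'].size() == 0) { return; }
          try {
            emit(Long.parseLong(doc['response_time'].value));
          } catch (NumberFormatException e) {
            // Emit nothing for values such as "not_available"
          }
        """
      }
    }
  },
  "query": {
    "range": { "response_ms": { "gt": 500 } }
  },
  "fields": ["response_ms"]
}
```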

The ES|QL approach

ES|QL provides explicit conversion functions and a shorthand cast operator:
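
A minimal sketch using a conversion function, assuming a hypothetical `server-logs` index with a keyword `response_time` field:

```esql
// Sketch: convert the keyword to a long with an explicit function
FROM server-logs
| EVAL response_ms = TO_LONG(response_time)
| KEEP host, response_time, response_ms
```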

Or with the :: cast operator (available since 8.15):
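
The same sketch with the cast operator, again assuming a hypothetical `server-logs` index with a keyword `response_time` field:

```esql
// Sketch: shorthand cast, equivalent to TO_LONG(response_time)
FROM server-logs
| EVAL response_ms = response_time::long
| KEEP host, response_time, response_ms
```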

Response:

Both produce the same result. The key difference from Painless: Failed conversions return null instead of throwing exceptions. The document with "not_available" simply gets null for response_ms, and ES|QL emits a warning.

Common conversion functions include:

| Function | Converts to |
|---|---|
| `TO_LONG()` | Long integer |
| `TO_INTEGER()` | Integer |
| `TO_DOUBLE()` | Double |
| `TO_DATETIME()` | Date |
| `TO_BOOLEAN()` | Boolean |
| `TO_IP()` | IP address |
| `TO_VERSION()` | Version |

The :: operator works with all these types (for example, field::double, field::datetime).

When to use

ES|QL's graceful null handling makes it safer for dirty data. Runtime fields with Painless give you fine-grained control over error handling but require more code. For type conversion specifically, ES|QL is almost always the better choice.

Pattern 4: Dynamic runtime mapping

Runtime fields support "dynamic": "runtime" in mappings, which prevents mapping explosion by creating all new fields as runtime fields instead of indexed fields:
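
A hedged sketch of such a mapping follows. The index name `server-logs-dynamic` and the `session_id` field are hypothetical and used only for illustration.

```console
# Sketch: unknown fields become runtime fields instead of indexed fields.
PUT server-logs-dynamic
{
  "mappings": {
    "dynamic": "runtime",
    "properties": {
      "@timestamp": { "type": "date" }
    }
  }
}

# session_id is not declared above, so it is added to the mapping as a runtime field
POST server-logs-dynamic/_doc
{
  "@timestamp": "2024-06-01T10:00:00Z",
  "session_id": "abc-123"
}

# Inspect the mapping to see the "runtime" section
GET server-logs-dynamic/_mapping
```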

Any new field sent to this index becomes a runtime field automatically. This is useful when you ingest semi-structured data with unpredictable field names.

Where ES|QL fits

ES|QL provides query-time flexibility, but it still needs fields to be visible in the mapping. This is where runtime fields and ES|QL complement each other rather than compete.

If a field exists in _source but isn’t mapped, ES|QL cannot access it directly. The current workaround is to define a runtime field to make the unmapped field visible:
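
A sketch of that workaround, assuming a hypothetical `server-logs` index whose documents carry a `trace_id` value in `_source` that the mapping does not declare (for instance because the index uses `"dynamic": false`). Both names are assumptions.

```console
# Sketch: a runtime field without a script reads the same-named value from _source.
PUT server-logs/_mapping
{
  "runtime": {
    "trace_id": { "type": "keyword" }
  }
}
```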

Once defined, ES|QL can query it:
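
For example, assuming a hypothetical `server-logs` index in which `trace_id` has been declared as a keyword runtime field (both names are assumptions):

```esql
// Sketch: the runtime field behaves like any other column
FROM server-logs
| WHERE trace_id IS NOT NULL
| KEEP @timestamp, host, trace_id
| LIMIT 10
```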

This is one scenario where runtime fields remain essential. They act as a bridge, making unmapped data accessible to ES|QL.

Pattern 5: Field shadowing for error correction

Runtime fields can shadow (override) indexed fields by defining a runtime field with the same name as an existing field. This is useful for correcting data without reindexing.

The runtime field approach

Remember our data quality issue, where region has inconsistent casing ("US-EAST" versus "us-east")?
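
One way to sketch the fix, assuming a hypothetical `server-logs` index with an indexed keyword field named `region`. The script reads the original value from `_source`, which is how the shadowed field's stored value stays reachable.

```console
# Sketch: shadow the indexed region field with a lower-cased runtime version.
PUT server-logs/_mapping
{
  "runtime": {
    "region": {
      "type": "keyword",
      "script": {
        "source": """
          def raw = params._source['region'];
          // Leave the field empty when the document has no region
          if (raw != null) {
            emit(raw.toLowerCase());
          }
        """
      }
    }
  }
}
```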

This overrides the indexed region field for all queries. Every search, aggregation, and Kibana visualization will see the lowercase version.

The ES|QL approach

When you use EVAL with an existing column name, ES|QL drops the original column and replaces it with the computed value. This is the exact equivalent of field shadowing, but scoped to the current query.
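
A minimal sketch of that override, assuming a hypothetical `server-logs` index with a keyword `region` field whose casing is inconsistent:

```esql
// Sketch: reuse the column name so the normalized value replaces the original
FROM server-logs
| EVAL region = TO_LOWER(region)
| STATS requests = COUNT(*) BY region
```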

You can also chain multiple corrections in a pipeline:
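
A hedged sketch of such a chain, assuming a hypothetical `server-logs` index with keyword fields `region` and `response_time`. The `TRIM` step is an extra illustrative correction, not one the sample data strictly requires.

```esql
// Sketch: each EVAL sees the output of the previous step
FROM server-logs
| EVAL region = TRIM(region)
| EVAL region = TO_LOWER(region)
| EVAL response_ms = TO_LONG(response_time)
| KEEP host, region, response_time, response_ms
```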

When to use

If the correction should apply to all queries and Kibana dashboards, use runtime field shadowing. If you need to correct data for a specific analysis, ES|QL is more flexible since you can apply different transformations in different queries without modifying the mapping.

The ES|QL pipeline advantage: Going beyond runtime fields

This is where ES|QL fundamentally surpasses runtime fields. Runtime fields are isolated: each one computes independently, and you cannot use the output of one runtime field as input for another in the same query.

ES|QL pipelines chain transforms. Here’s a single query that combines multiple patterns:
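
The query below is a sketch of such a pipeline. It assumes a hypothetical `server-logs` index whose `message` values look like `ERROR GET /api/orders duration=2300`; the 1000 ms threshold and the output column names are also illustrative assumptions.

```esql
// Sketch: extract, convert, normalize, filter, and aggregate in one pass
FROM server-logs
| GROK message "%{LOGLEVEL:level} %{WORD:method} %{URIPATH:path} duration=%{NUMBER:duration}"
| EVAL duration_ms = duration::long
| EVAL region = TO_LOWER(region)
| WHERE level == "ERROR" AND duration_ms > 1000
| STATS error_count = COUNT(*), avg_duration_ms = AVG(duration_ms) BY region
```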

This single query:

- Extracts fields from raw text (`GROK`).

- Converts the duration to a number (`EVAL` with cast).

- Normalizes region casing (`EVAL` with `TO_LOWER`).

- Filters for errors with high duration (`WHERE`).

- Aggregates by region (`STATS`).

To achieve the same result with runtime fields, you would need to define at least three separate runtime fields (for extraction, conversion, and normalization) and then write a Query DSL query with filters and aggregations. The ES|QL version is a single, readable pipeline.

You can even use expressions directly inside aggregations:
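
A sketch of that shortcut, assuming a hypothetical `server-logs` index with keyword fields `response_time` and `region` (the output column names are assumptions):

```esql
// Sketch: convert and normalize inline, without separate EVAL steps
FROM server-logs
| STATS avg_response_ms = AVG(TO_LONG(response_time)) BY region_normalized = TO_LOWER(region)
```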

Conclusion

What we covered:

- ES|QL provides a full toolkit (`EVAL`, `GROK`, `DISSECT`, type casting with `::`) that replaces most runtime field patterns without any Painless scripting.

- Failed type conversions in ES|QL return `null` instead of throwing exceptions, making it safer for real-world data.

- Pipeline processing (chaining `GROK` into `EVAL` into `WHERE` into `STATS`) goes beyond what runtime fields can do in isolation.

- Runtime fields remain valuable for persistent computed fields, field shadowing across all queries, and as a bridge for unmapped data in ES|QL.

One important caveat: Both runtime fields and ES|QL compute values at query time, which means they pay the cost on every query. If you find yourself applying the same transformation repeatedly (type corrections, field extraction, data normalization), consider using ingest pipelines to fix the data at index time instead. Ingest pipelines let you parse, enrich, and transform documents before they’re stored, so queries can work with clean, properly typed fields directly. Runtime fields and ES|QL are great for exploration and ad-hoc analysis, but for production workloads, indexing the right data from the start is almost always the better choice.
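
As a hedged sketch of that index-time alternative, the pipeline below applies the same kind of extraction, conversion, and normalization before documents are stored. The pipeline name `server-logs-normalize`, the `message` layout, and the field names are assumptions for illustration, and any field the pipeline adds still has to be mapped to be searchable.

```console
# Sketch: parse, convert, and normalize at ingest time instead of at query time.
PUT _ingest/pipeline/server-logs-normalize
{
  "description": "Illustrative pipeline: extract fields, cast duration, lower-case region",
  "processors": [
    { "dissect":   { "field": "message", "pattern": "%{level} %{method} %{path} duration=%{duration}", "ignore_failure": true } },
    { "convert":   { "field": "duration", "type": "long", "ignore_missing": true } },
    { "lowercase": { "field": "region", "ignore_missing": true } }
  ]
}

# Try the pipeline on a sample document without indexing anything
POST _ingest/pipeline/server-logs-normalize/_simulate
{
  "docs": [
    { "_source": { "region": "US-EAST", "message": "ERROR GET /api/payments duration=1850" } }
  ]
}
```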

The key takeaway: Runtime fields aren’t deprecated, and they aren’t going away. But for most query-time computation patterns, ES|QL offers a simpler, more powerful, and more performant approach. And when the transformation is known up front, an ingest pipeline is the most efficient option of all.