jina-embeddings-v5-omni brings text, images, video, and audio together in a single Elasticsearch index. Extending the best-in-class jina-embeddings-v5-text models, the v5-omni suite adds visual and audio encoding through an innovative architecture that keeps the text backbone identical and delivers state-of-the-art performance in a very compact embedding model.

You can now create high-performance semantic embeddings for text, images, videos, and audio recordings, spanning nearly 100 languages, and use them for classification, clustering, measuring semantic similarity, and indexing for information retrieval. If your data lives in PDF files, recordings, and videos alongside text, you no longer need a separate pipeline for each.

The jina-embeddings-v5-omni family is the most compact embedding model currently on the market with support for images, speech, print, and video. It offers:

- State-of-the-art text embeddings from jina-embeddings-v5-text for retrieval, analysis, and AI agent applications.

- Embeddings that lead their size category for visual semantic similarity, visual understanding, and image retrieval. jina-embeddings-v5-omni-small has the best performance on image benchmarks of any model in the one-billion (10⁹) parameter class and outperforms our own earlier jina-clip-v2. Only a few models with three to 30 times as many parameters can beat it.

- State-of-the-art embeddings for multilingual visual understanding and retrieval that outperform models up to 20 times larger.

- Audio embeddings among the best in their size category: only models with at least twice as many parameters achieve better results on standard benchmarks.

- Video support, especially for locating objects and events in footage.

This has applications across information retrieval, document processing, and data analysis. jina-embeddings-v5-omni opens up the information locked away in different media silos and makes it available for retrieval, analysis, and use by AI agents. Information contained in audio and video recordings, PDF files, scans of printed pages, and infographics becomes just as valuable as digitized text in your data ecosystem.

Like jina-embeddings-v5-text, these models come in two sizes: small and nano. Both models extend their text-only counterparts with additional modules that accept audio and video input. Users can select modules at load time. In addition, the task-specific extensions (semantic similarity, classification, clustering, and information retrieval) are implemented as compact low-rank adapters (LoRA) and are all loaded together, so users can choose among them at inference time.

Both models are very compact. jina-embeddings-v5-omni-small can run on conventional GPU-equipped servers, and jina-embeddings-v5-omni-nano is small enough to run on commodity hardware. This represents a large potential saving in compute costs and makes licensed on-premises installation and edge processing possible, which reduces latency and increases your control over your own data.

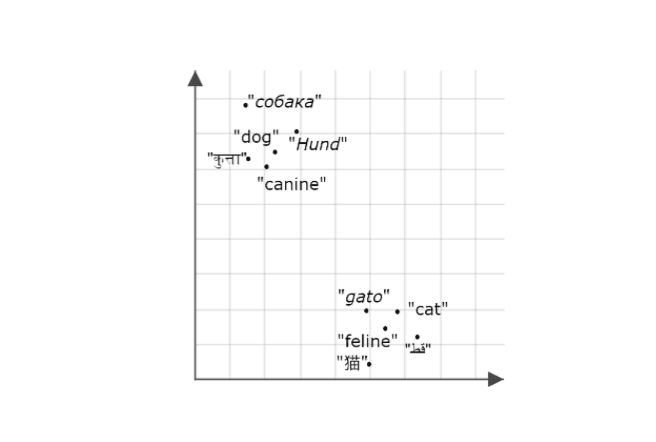

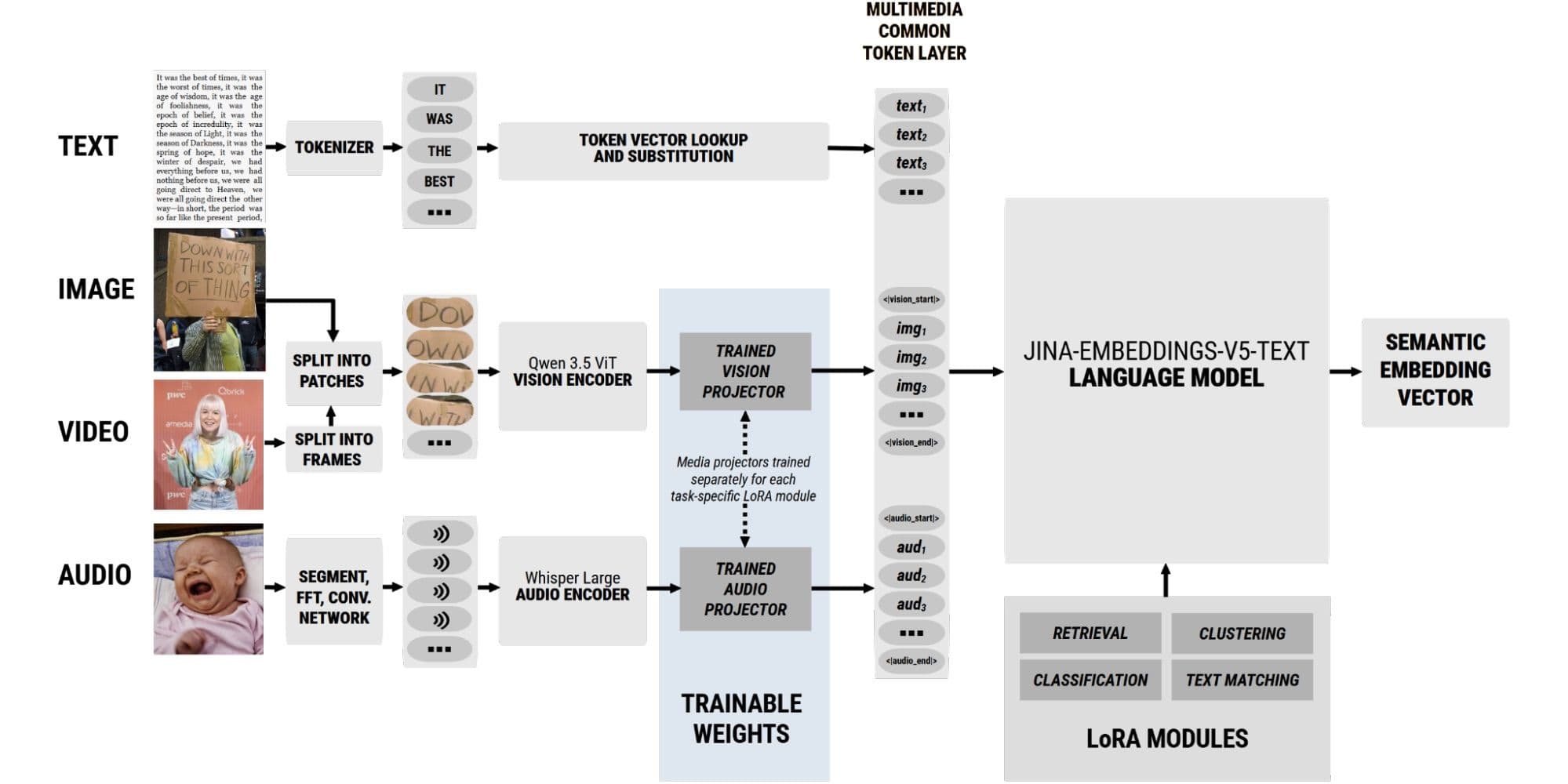

The v5-omni suite uses innovative model design and machine learning techniques to build new embedding models out of already trained ones, without having to retrain them. We use encoders from pretrained, language-aligned models for audio and video media as input preprocessors for our existing jina-embeddings-v5-text models. The resulting models generate embeddings for images and audio recordings that are semantically compatible with the embeddings they generate for text.

The v5-omni models produce text embeddings identical to those of jina-embeddings-v5-text (that is, jina-embeddings-v5-omni-small matches jina-embeddings-v5-text-small, and jina-embeddings-v5-omni-nano matches jina-embeddings-v5-text-nano), so you can extend existing text retrieval repositories to multimedia applications without rebuilding your indices.

The integrated encoders derive from open-source projects. For images and video, we used encoders from the Qwen3.5 models:

- For jina-embeddings-v5-omni-nano, the fine-tuned SigLIP2 Base encoder from Qwen3.5-0.8B.

- For jina-embeddings-v5-omni-small, the fine-tuned SigLIP2 So400m encoder from Qwen3.5-2B.

For audio support, we added the Whisper-large-v3 encoder, extracted from Qwen2.5-Omni-7B, to both the small and the nano versions.

We connected these media-specific encoders to the text-processing backbone through trained multimodal projectors. These projectors translate the encoders' native outputs into input embeddings compatible with jina-embeddings-v5-text. The only newly trained parts of the jina-embeddings-v5-omni models are the weights in those projectors.

A schematic of the jina-embeddings-v5-omni models. Only the cross-media projectors are newly trained.

This architecture means that we only need to train the projectors between models: approximately 5.5 million parameters for jina-embeddings-v5-omni-small and fewer than 3.5 million for jina-embeddings-v5-omni-nano, for each of the four LoRA adapters. This approach minimizes the additional training needed to connect different embedding models, and it takes advantage of the specialized training of each one to create a modular, extremely compact, high-performance embedding suite.

Selected model properties

Input/output

| Model name | Input context window size | Embedding size |
|---|---|---|
| jina-embeddings-v5-omni-small | 32,768 tokens* | 1024 dims (minimum: 32) |
| jina-embeddings-v5-omni-nano | 8,192 tokens* | 768 dims (minimum: 32) |

* See Using jina-embeddings-v5-omni below for more information on how non-text media are tokenized.

Size

| Model name | Total size |
|---|---|
| jina-embeddings-v5-omni-small (text-only base model + 4 LoRA adapters) | 700M parameters |
| with image/video support (SigLIP2 So400m encoder extracted from Qwen3.5-2B) | 1,006M parameters |
| with audio support (Whisper-large-v3 encoder extracted from Qwen2.5-Omni-7B) | 1,354M parameters |
| with both | 1,660M parameters |
| LoRA adapters (each) | 20M parameters |
| jina-embeddings-v5-omni-nano (text-only base model + 4 LoRA adapters) | 266M parameters |
| with image/video support (SigLIP2 Base encoder extracted from Qwen3.5-0.8B) | 354M parameters |
| with audio support (Whisper-large-v3 encoder extracted from Qwen2.5-Omni-7B) | 916M parameters |
| with both | 1,004M parameters |
| LoRA adapters (each) | 7M parameters |
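Because modules can be selected at load time, it can help to see what each optional module adds. The following is a minimal sketch that only subtracts the text-only total from the totals in the size table. The names `TOTALS_M` and `module_sizes` are illustrative, and the derived figures are simple differences between table rows, not officially published module sizes.

```python
# Totals in millions of parameters, copied from the size table.
TOTALS_M = {
    "small": {"text": 700, "text+vision": 1006, "text+audio": 1354, "all": 1660},
    "nano": {"text": 266, "text+vision": 354, "text+audio": 916, "all": 1004},
}


def module_sizes(totals):
    """Derive what each optional module adds on top of the text-only base."""
    return {
        "vision": totals["text+vision"] - totals["text"],
        "audio": totals["text+audio"] - totals["text"],
    }


for name, totals in TOTALS_M.items():
    sizes = module_sizes(totals)
    combined = totals["text"] + sizes["vision"] + sizes["audio"]
    # The "both" row should equal base + vision + audio.
    print(name, sizes, "consistent with table:", combined == totals["all"])
```

For both models the derived module sizes add up to the "both" row, so the table is internally consistent.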


Task-specific training

The jina-embeddings-v5-omni family supports the same task-specific LoRA adapters as jina-embeddings-v5-text:

| Task | Example uses |
|---|---|
| Retrieval | Information retrieval, on its own or combined with other retrieval and candidate-ranking techniques. With the v5-omni models, you can retrieve audio, video, and images in a single query from a single index. |
| Clustering | Topic discovery and automatic topic organization across all media. |
| Classification | Categorization, sentiment analysis, and related tasks. |
| Semantic similarity | Deduplication of data across media, recommendation systems, related media, finding texts that match speech, identifying translations, and similar tasks. |

The output embeddings depend on the task category selected. For example, you should not use retrieval-oriented embeddings for clustering, or semantic similarity embeddings for classification.

Multimedia, multimodal, multilingual, multifunctional

To show what jina-embeddings-v5-omni can do, let's take the famous opening passages of two novels and measure their semantic similarity:

A Tale of Two Cities (Charles Dickens)

Pride and Prejudice (Jane Austen)

Using jina-embeddings-v5-omni-small with its semantic similarity adapter, these texts have a similarity of 0.5329.
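As a reference point for how a score like this is typically computed, here is a minimal sketch of cosine similarity between two embedding vectors. It assumes the similarity scores in this post are cosine similarities, which the text does not state explicitly. The vectors below are toy values, not real model output.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


# Toy 4-dimensional "embeddings" (hypothetical values for illustration only).
text_a = [0.2, 0.7, 0.1, 0.4]
text_b = [0.3, 0.5, 0.0, 0.6]
print(round(cosine_similarity(text_a, text_b), 4))
```

Scores near 1 indicate vectors that point in nearly the same direction, and scores near 0 indicate unrelated directions.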

That number doesn't mean much without something to compare it to, so let's compare these two texts with their French translations using the same model and adapter:

Semantic similarity scores for texts in different languages

| | A Tale of Two Cities (English) | Pride and Prejudice (English) |
|---|---|---|
| A Tale of Two Cities (French) (Paris et Londres en 1783, tr. H. Loreau) | 0.9095 | 0.5074 |
| Pride and Prejudice (French) (Orgueil et Préjugés, tr. Leconte et Pressoir) | 0.4826 | 0.8784 |

Each text is far more similar to its own translation than to the other text, whether in the same language or a different one. This reflects the very high performance of the multilingual semantic embeddings of jina-embeddings-v5-text-small, which are included unchanged in jina-embeddings-v5-omni-small.

Adding multimedia support in jina-embeddings-v5-omni means we can extend this experiment to other kinds of data. For example, we obtained scans of the first pages of both novels from old print editions:

Figure 2: A Tale of Two Cities, undated 19th-century edition, and Pride and Prejudice, 1903 Macmillan edition.

Let's compare both texts with the scans, again using the semantic similarity adapter:

Semantic similarity scores between texts and images

| | A Tale of Two Cities (scan) | Pride and Prejudice (scan) |
|---|---|---|
| A Tale of Two Cities (text) | 0.7336 | 0.4891 |
| Pride and Prejudice (text) | 0.4804 | 0.7213 |

The semantic similarity scores strongly favor the text that matches the content of each image.

We can also compare the texts with a screenshot of a social media post and a meme that reference those texts, using the same configuration:

Figure 3: A tweet by Elon Musk referencing A Tale of Two Cities, and a meme referencing the famous opening of Pride and Prejudice.

Semantic similarity scores between texts and images

| | A Tale of Two Cities | Pride and Prejudice |
|---|---|---|
| Musk tweet (image) | 0.7156 | 0.4912 |
| "Keep calm" meme (image) | 0.4555 | 0.6244 |

We can do the same with speech. We obtained recordings of readings of both texts, in English and in French:

- A Tale of Two Cities (English audio from Librivox).

- A Tale of Two Cities (French audio generated by OmniVoice AI).

- Pride and Prejudice (English audio from Librivox).

- Pride and Prejudice (French audio generated by OmniVoice AI).

Semantic similarity scores between texts and audio in different languages

| | A Tale of Two Cities (English audio) | A Tale of Two Cities (French audio) | Pride and Prejudice (English audio) | Pride and Prejudice (French audio) |
|---|---|---|---|---|
| A Tale of Two Cities (English text) | 0.3816 | 0.3106 | 0.1607 | 0.1774 |
| A Tale of Two Cities (French text) | 0.3528 | 0.3253 | 0.1598 | 0.1721 |
| Pride and Prejudice (English text) | 0.1910 | 0.1682 | 0.3511 | 0.3398 |
| Pride and Prejudice (French text) | 0.1667 | 0.1474 | 0.3018 | 0.3702 |

This multilingual, multimedia capability extends to information retrieval.

The retrieval adapters of the jina-embeddings-v5-omni models implement asymmetric retrieval. This means they embed queries differently from the documents that are the targets of retrieval. Multimodal searches therefore always have a direction, with queries in one medium and documents in another, and the score changes if the two are swapped.

The tables below show retrieval scores for text, audio, and page-scan images of A Tale of Two Cities and Pride and Prejudice when the text of A Tale of Two Cities (in English) is encoded as the query:

Text to text

| Document | Retrieval score |
|---|---|
| A Tale of Two Cities (French text excerpt) | 0.7597 |
| Pride and Prejudice (English text excerpt) | 0.1482 |
| Pride and Prejudice (French text excerpt) | 0.0523 |

Text to image

| Document | Retrieval score |
|---|---|
| A Tale of Two Cities (English page scan) | 0.5517 |
| A Tale of Two Cities (French page scan) | 0.3576 |
| Pride and Prejudice (English page scan) | 0.1917 |

Text to audio

| Document | Retrieval score |
|---|---|
| A Tale of Two Cities (English audio) | 0.3277 |
| A Tale of Two Cities (French audio) | 0.1980 |
| Pride and Prejudice (English audio) | 0.1419 |
| Pride and Prejudice (French audio) | 0.1759 |

Users can also run the search in the other direction, that is, perform audio-to-text and image-to-text searches.

Below are the scores using the English audio of A Tale of Two Cities as the query and several texts as documents:

Audio to text

| Document | Retrieval score |
|---|---|
| A Tale of Two Cities (English text excerpt) | 0.3352 |
| A Tale of Two Cities (French text excerpt) | 0.2650 |
| Pride and Prejudice (English text excerpt) | 0.1626 |
| Pride and Prejudice (French text excerpt) | 0.1385 |

And the scores using a scan of page one of A Tale of Two Cities (in English) as the query:

Image to text

| Document | Retrieval score |
|---|---|
| A Tale of Two Cities (English text excerpt) | 0.5304 |
| A Tale of Two Cities (French text excerpt) | 0.4845 |
| Pride and Prejudice (English text excerpt) | 0.1467 |
| Pride and Prejudice (French text excerpt) | 0.0761 |

Video search

The video indexing and search capabilities of jina-embeddings-v5-omni bring new functionality to Elasticsearch databases, but they are subject to many of the same caveats that apply to text. Generating a single embedding for a long film is like embedding a very long novel: detailed information gets swamped, and the resulting embedding will be a good match for many irrelevant queries.

If you embed the full text of "Lord of the Rings" (about 500,000 words), it is likely to be a good match for most queries, no matter what you are looking for. Likewise, if you index a two-hour Hollywood film, you will get many irrelevant matches, and details will be lost entirely. jina-embeddings-v5-omni works best with short clips.

For this example, we downloaded the trailer for the 1961 film Breakfast at Tiffany's, which is only 158 seconds long and is in the public domain. You can watch the trailer on the Internet Archive.

Figure 4: The theatrical poster for Breakfast at Tiffany's.

We used PySceneDetect to split the trailer into 28 individual scenes, with durations ranging from 1.877 seconds (45 frames) to 18.393 seconds (441 frames). Scene detection is not perfect, but it offers an adequate mechanism for splitting the video into smaller segments that are easier to search. We then generated document embeddings for each of the 28 segments using jina-embeddings-v5-omni-small, so that we could check whether text queries would find specific items in the video.

For example, the query "cat" returned the following clips as the top three results. The only scene with a cat comes first, with a score of 0.1634:

The next-highest match, with a score of 0.1237, scores much lower:

You can also search for actions. If you query with the text "kiss", the top four matches all contain kisses:

See clip 3. Its score is 0.2864.

Scores for the second (0.2494), third (0.2099), and fourth (0.2068) matches, respectively.

And you can search for text shown in the video, such as "Buddy Ebsen", which appears only once. jina-embeddings-v5-omni-small easily identifies it as the best match with a score of 0.3885, considerably higher than the next-best match:

Visual document retrieval

Jina AI's multimodal embedding models are leaders in visual document processing and at the forefront of multilingual visual document processing. This means handling image data that contains text, figures, and structured information. Important data often comes in the form of printed scans, PDF files, diagrams, technical drawings, screenshots, images, infographics, and the like. These kinds of images are often mechanically composed or computer generated. They usually cannot be reduced to text without loss of meaning, and they are a poor fit for computer vision models designed for photographs of natural scenes.

jina-embeddings-v5-omni embeddings capture information about the things in an image, the text printed on them, and the relationships between the two. Visual document retrieval makes it possible to index rich images that contain both objects and relevant text, and to do so across different languages.

As an example, we will use four product images from different e-commerce websites:

Now, let's see how jina-embeddings-v5-omni-small scores these four images for the query "ramen noodles":

| Campbell's chunky chicken soup (Canadian packaging) | Kraft Dinner (Canadian packaging) | Maruchan fresh miso-flavored ramen (Japanese packaging) | Birkel Spaghetti (German packaging) |
|---|---|---|---|
| 0.0872 | 0.0711 | 0.1123 | 0.0886 |

It easily finds the Japanese match.

Now, let's try a query for "マカロニチーズ" (macaroni and cheese in Japanese):

| Campbell's chunky chicken soup (Canadian packaging) | Kraft Dinner (Canadian packaging) | Maruchan fresh miso-flavored ramen (Japanese packaging) | Birkel Spaghetti (German packaging) |
|---|---|---|---|
| 0.2207 | 0.3487 | 0.2760 | 0.2674 |

It finds the correct match just as easily as it does for an English query.

jina-embeddings-v5-omni also excels at interpreting information-rich images such as charts. To see this in action, look at these two bar charts:

Two charts: Chart 1 on the left, on the global burden of disease, and Chart 2 on the right, on the life expectancy of dog breeds.

Let's see how well they match two possible text questions, each related to one of the charts but not both, using jina-embeddings-v5-omni-small for retrieval:

| Text question | Chart 1 | Chart 2 |
|---|---|---|
| "What are some of the most common medical problems for older people?" | 0.2787 | 0.1099 |
| "How long do dogs live?" | 0.1350 | 0.3564 |

You can also reverse the search, using images as queries to find texts. The table below shows target documents taken from the abstracts of scientific articles on related topics, and their retrieval scores when the chart images are used as queries:

| | Text 1 | Text 2 |
|---|---|---|
| | The health of populations living in extreme poverty has long been a central focus of global development efforts, and it remains a priority in the era of the Sustainable Development Goals. However, there has been no systematic attempt to quantify the magnitude and causes of the burden in this specific population for nearly two decades. We estimated cause-specific disease rates in the world's poorest billion people and compared these rates with those of high-income populations. | The companion dog is one of the most phenotypically diverse species. Variability between breeds extends not only to morphology and aspects of behavior, but also to longevity. Despite this, few studies have been devoted to assessing variation in life expectancy between breeds or the potential for phylogenetic characterization of longevity. |
| Chart 1 | 0.2377 | 0.1357 |
| Chart 2 | 0.0673 | 0.3576 |

Features

Truncatable embeddings

We trained the jina-embeddings-v5-text base models that underpin jina-embeddings-v5-omni with Matryoshka Representation Learning, so you can truncate both the text and the multimedia embeddings from these models.

By default, jina-embeddings-v5-omni-small generates embeddings with 1024 dimensions, which take 2 KB to store at 16-bit precision. jina-embeddings-v5-omni-nano embeddings have 768 dimensions and take about 1.5 KB. You can reduce these embeddings to as few as 32 dimensions (64 bytes). This costs some accuracy, but in exchange you get a large improvement in processing speed and a reduction in resource costs. In general, each halving of the embedding size reduces accuracy by about 2%, down to 128 dimensions. Below that level, accuracy falls much faster.
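The storage figures above follow directly from the dimension count. The sketch below reproduces that arithmetic and shows one common way to truncate a Matryoshka-style embedding: keep the leading dimensions and rescale to unit length. The re-normalization step is an assumption based on common practice, not something this post specifies, and the function names are illustrative.

```python
import math

BYTES_PER_VALUE = 2  # 16-bit precision, as stated above


def storage_bytes(dims):
    """Bytes needed to store one embedding at 16-bit precision."""
    return dims * BYTES_PER_VALUE


def truncate(embedding, dims):
    """Keep the first `dims` values and rescale to unit length.

    Assumption: re-normalizing after truncation, which is common practice
    when the truncated vectors are compared with cosine or dot product.
    """
    head = list(embedding[:dims])
    norm = math.sqrt(sum(x * x for x in head))
    return [x / norm for x in head] if norm else head


for dims in (1024, 768, 128, 32):
    print(dims, "dims ->", storage_bytes(dims), "bytes")
```

Running the loop gives 2048 bytes (2 KB) for 1024 dimensions, 1536 bytes (1.5 KB) for 768, and 64 bytes for 32, matching the figures in the text.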

Truncatable embeddings let users choose the best balance of accuracy, speed, and cost for their own use cases.

Quantization

The jina-embeddings-v5-omni family also inherits robust performance under quantization from its jina-embeddings-v5-text base. Quantization further increases speed and reduces compute and storage costs by storing lower-precision numbers. We trained the models to work with Elasticsearch's Better Binary Quantization (BBQ) and deliver performance nearly identical to that of unquantized embeddings. On the retrieval benchmarks of the Massive Text Embedding Benchmark (MTEB), binarization reduces performance by less than 3% compared with full 16-bit values, while saving 93% of the space and significantly increasing processing and retrieval speed.
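To make the space saving concrete: going from 16 bits to 1 bit per dimension keeps 1/16 of the storage, a saving of 93.75%, in line with the figure above. The sketch below illustrates plain sign binarization with Hamming-distance comparison. It is a simplified illustration and an assumption on our part, not Elasticsearch's BBQ algorithm, which is a more sophisticated scheme. All function names are hypothetical.

```python
def binarize(embedding):
    """One bit per dimension: 1 if the value is positive, else 0."""
    return [1 if x > 0 else 0 for x in embedding]


def pack_bits(bits):
    """Pack 0/1 values into bytes, eight dimensions per byte."""
    out = bytearray((len(bits) + 7) // 8)
    for i, bit in enumerate(bits):
        if bit:
            out[i // 8] |= 1 << (7 - i % 8)
    return bytes(out)


def hamming_distance(a, b):
    """Number of differing bits between two equal-length byte strings."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


def space_saved(bits_full=16, bits_quantized=1):
    """Fraction of storage saved per dimension."""
    return 1 - bits_quantized / bits_full


packed = pack_bits(binarize([0.5, -0.1] * 512))  # toy 1024-dim vector
print(len(packed), "bytes, saving", space_saved())
```

A 1024-dimension vector packs into 128 bytes instead of 2048.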

Cross-language performance

The extensive multilingual training of jina-embeddings-v5-text carries over to jina-embeddings-v5-omni, with nearly 100 languages in the pretraining of jina-embeddings-v5-text-small and 15 major global languages in that of jina-embeddings-v5-text-nano. For audio, the Whisper-large-v3 model has approximately 100 languages in its training data, and the Qwen-modified SigLIP2 vision models integrated into jina-embeddings-v5-omni-small and -nano were trained on data from 201 distinct languages and dialects.

Benchmark performance

Text

The jina-embeddings-v5-omni models are identical to the jina-embeddings-v5-text models when used for text only. They are the top performers on the MMTEB benchmark suite in their respective size categories for semantic text embeddings.

Figure 5: Size and scores of jina-embeddings-v5-omni on text benchmarks, compared with competing models. The sizes cited exclude the loadable extensions for other media.

Visual semantic similarity

On standard visual semantic similarity benchmarks, the jina-embeddings-v5-omni models show by far the best performance among publicly available open-weight models of comparable size. jina-embeddings-v5-omni-small is beaten only by a model three times larger, and jina-embeddings-v5-omni-nano is beaten only by jina-embeddings-v5-omni-small and by models 10 to 25 times larger.

Figure 6: Average visual semantic similarity benchmark scores for jina-embeddings-v5-omni-small, jina-embeddings-v5-omni-nano, and comparable models, along with their sizes including vision extensions.

Visual document retrieval

jina-embeddings-v5-omni-small is competitive with models of three and seven billion parameters while staying under one billion parameters. jina-embeddings-v5-omni-nano is equally notable for its size and outperforms models ten to sixty times larger.

Figure 7: Average ViDoRe scores for visual document retrieval on six benchmarks: DocVQA, InfoVQA, ShiftProj, SynAI, Tabfquad, and TatDQA.

Audio retrieval

On the standard MAEB (Massive Audio Embedding Benchmark) audio retrieval benchmarks, both jina-embeddings-v5-omni-small and jina-embeddings-v5-omni-nano rank among the top performers. Only very large models, more than three times the size of jina-embeddings-v5-omni-small, score higher.

Figure 8: Average score for various models on the MAEB audio retrieval benchmarks.

Although LAION's larger_clap_general model beats the score of jina-embeddings-v5-omni-nano with fewer parameters, it is an audio-only model with none of the additional multimodal features of the v5-omni suite.

Video

For video, jina-embeddings-v5-omni-small excels at finding the place in a video that matches a text query. Charades-STA and MomentSeeker are the standard benchmarks for this task, and the charts below show that jina-embeddings-v5-omni-small scores highest among comparable open-weight models despite being much smaller.

Figure 9: Charades-STA scores for various models, along with their sizes.

Figure 10: MomentSeeker scores for various models, along with their sizes.

We also compared jina-embeddings-v5-omni-small with ByteDance's Seed 1.6, a closed-weight model with an undisclosed number of parameters. Our model far outperforms Seed 1.6 on the Charades-STA benchmark and nearly matches it on MomentSeeker.

| Model | Charades-STA score | MomentSeeker score |
|---|---|---|
| seed-1.6-embedding | 29.30 | 59.30 |
| jina-embeddings-v5-omni-small | 55.57 | 58.93 |

Strengths and limitations

The jina-embeddings-v5-omni models expand users' ability to index, search, and analyze digitized information in several ways, in particular:

- Multilingual speech retrieval from text queries.

- PDF, scan, and visual document search.

- Temporal localization in video, that is, identifying the parts of a video that match natural-language text descriptions.

- Audio genre classification, including musical genres.

- Image classification based on scene information and object identification.

Performance is more limited in some other areas. It may be possible to use jina-embeddings-v5-omni for these tasks, but we did not train for them and the results may be poor.

We are actively working on improving our technology in these areas:

- Finding specific videos from natural-language descriptions.

- Image-to-image semantic similarity and retrieval.

- Intent classification in speech, such as recognizing spoken commands.

- Processing mixed-media inputs, that is, images with accompanying text, or combined audio, images, and text.

Using jina-embeddings-v5-omni

This model suite accepts input through three entry points: text, audio, and images and video together. jina-embeddings-v5-omni runs inside a framework that converts a wide range of standard formats and performs other preprocessing.

We process images using the same NaFlex approach as the initial SigLIP2 release. If the input has fewer than 262,144 pixels (equivalent to 512x512), it is scaled up until it exceeds that minimum. If it has more than 3,072,000 pixels, it is scaled down until it falls below that maximum. The conversion ensures that both the height and the width of the image are multiples of 14 pixels, distorting the aspect ratio as little as possible to achieve this. The result is divided into tiles of 28x28 pixels, so the total number of tiles is the number of 28x28 squares needed to cover the image. Each tile is treated as a single token at inference time, and each image input is accompanied by special start and end tokens that delimit a single image.
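The resizing and tiling rules above can be turned into a rough token estimate. The following is a simplified sketch under stated assumptions: it scales the image uniformly into the allowed pixel range, skips the rounding of each side to a multiple of 14 pixels, and counts exactly two special tokens (one start, one end). The function names are illustrative and this is not the actual preprocessor.

```python
import math

MIN_PIXELS = 262_144    # lower bound stated above (512 x 512)
MAX_PIXELS = 3_072_000  # upper bound stated above
TILE = 28               # tile edge in pixels


def resize_to_budget(width, height):
    """Scale uniformly so the pixel count lands inside the allowed range."""
    pixels = width * height
    if pixels < MIN_PIXELS:
        scale = math.sqrt(MIN_PIXELS / pixels)
    elif pixels > MAX_PIXELS:
        scale = math.sqrt(MAX_PIXELS / pixels)
    else:
        scale = 1.0
    return width * scale, height * scale


def image_tokens(width, height):
    """Estimate tokens: 28x28 tiles needed to cover the image, plus 2.

    Assumptions: multiple-of-14 rounding is ignored, and there is exactly
    one start token and one end token per image.
    """
    w, h = resize_to_budget(width, height)
    tiles = math.ceil(w / TILE) * math.ceil(h / TILE)
    return tiles + 2


print(image_tokens(512, 512))    # image already at the minimum size
print(image_tokens(4000, 3000))  # large photo, scaled down first
```

Because large images are scaled down before tiling, the token count per image is bounded, which keeps a single image well inside the context windows listed earlier.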

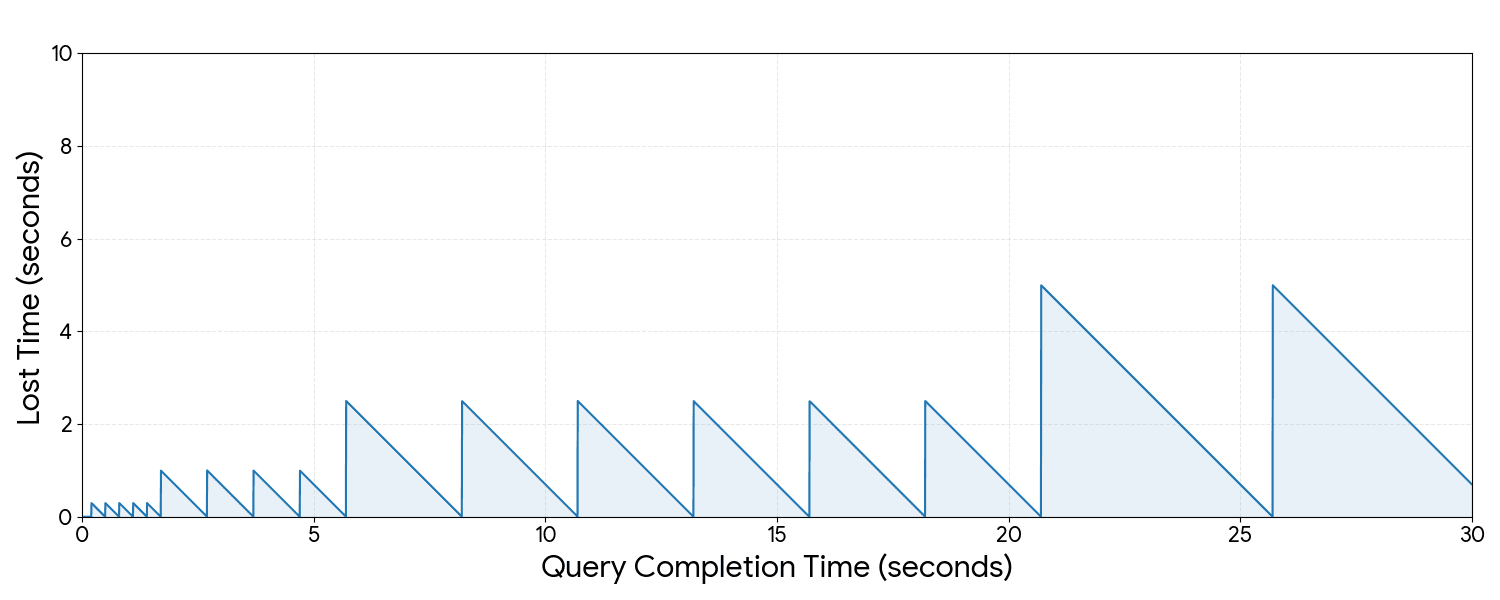

Omni prompt

The jina-embeddings-v5-omni models change the resolution of video in the same way as for images (see above) and extract up to 32 frames from the video. If the video has more than 32 frames (which is likely, since standard formats usually run at 24 frames per second or more), the extracted frames are evenly spaced. Then, for every two frames, the video preprocessor generates a set of tokens equal to the number of 28x28 squares needed to cover the video.

Figure 11: jina-embeddings-v5-omni extracts 32 evenly spaced frames from the video. If you have a long video, this means a lot will be lost.

For more details on video preprocessing, see the SigLIP2 technical documentation.
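The frame sampling and token count described above can be sketched as follows. The exact spacing scheme is an assumption (first and last frame included, indices rounded to the nearest frame), as is rounding up when the number of frames is odd. The sketch also assumes the frame dimensions passed in have already been resized. Function names are illustrative.

```python
import math

MAX_FRAMES = 32  # maximum number of frames extracted, as stated above
TILE = 28        # tile edge in pixels


def sample_frame_indices(total_frames, max_frames=MAX_FRAMES):
    """Pick up to `max_frames` evenly spaced frame indices.

    Assumption: endpoints are included and positions are rounded.
    """
    if total_frames <= max_frames:
        return list(range(total_frames))
    step = (total_frames - 1) / (max_frames - 1)
    return [round(i * step) for i in range(max_frames)]


def video_tokens(width, height, total_frames):
    """Estimate tokens: one set of tiles for every two sampled frames."""
    frames = len(sample_frame_indices(total_frames))
    tiles = math.ceil(width / TILE) * math.ceil(height / TILE)
    return math.ceil(frames / 2) * tiles


# The longest scene in the trailer example has 441 frames.
indices = sample_frame_indices(441)
print(len(indices), indices[:4], indices[-1])
print(video_tokens(448, 448, 441))
```

For the 441-frame scene, only about one frame in 14 is kept, which illustrates why short clips work better than long videos.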

Audio tokenization follows the approach built into Qwen-2.5-Omni. Audio files longer than 30 seconds are cut into 30-second segments, then resampled to 16 kHz and transformed into a 128-channel Mel spectrogram. Every 40 ms counts as a single token, so each 30-second segment is handled as 750 tokens (one token per 40 ms of audio), plus special start and end tokens that delimit a single sample.

For more details on audio preprocessing, see the Qwen-2.5-Omni technical report.
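The audio token arithmetic can be checked with a short sketch. It assumes that the final, partial segment is not padded out to 30 seconds, which the text does not specify, and it leaves out the special start and end tokens. The function name is illustrative.

```python
import math

SEGMENT_SECONDS = 30  # segment length stated above
MS_PER_TOKEN = 40     # one token per 40 ms of audio


def segment_token_counts(duration_seconds):
    """Audio tokens per segment for a recording of the given length.

    Assumptions: the last partial segment is not padded to 30 seconds,
    and special start/end tokens are not counted.
    """
    counts = []
    remaining = duration_seconds
    while remaining > 0:
        chunk = min(remaining, SEGMENT_SECONDS)
        counts.append(math.ceil(chunk * 1000 / MS_PER_TOKEN))
        remaining -= chunk
    return counts


print(segment_token_counts(30))   # one full segment
print(segment_token_counts(158))  # e.g. a 158-second recording
```

A full 30-second segment yields 750 tokens, so a multi-minute recording produces several thousand tokens before any special tokens are added.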

Availability

Getting started

To use jina-embeddings-v5-omni for text, you can embed using the semantic_text field, just as with jina-embeddings-v5-text. Just set the inference_id to .jina-embeddings-v5-omni-small or .jina-embeddings-v5-omni-nano. See the reference guide for instructions.

To embed other media with jina-embeddings-v5-omni, you have to use the inference API. For example:

For jina-embeddings-v5-omni-nano, change the POST URI to _inference/embedding/.jina-embeddings-v5-omni-nano.

To encode documents in other formats, or to generate embeddings for classification or clustering, you must create an inference endpoint with the jinaai service.

For queries, use the query builder as in the example below. Replace the inference_id value with .jina-embeddings-v5-omni-nano to use the nano model instead of small.

See the query builder documentation for more information.

To use BBQ with jina-embeddings-v5-omni, follow the instructions for BBQ indexing.

More information

To learn more about jina-embeddings-v5-omni, see the technical report and the model page on the Jina AI website. The jina-embeddings-v5-omni collection page on Hugging Face also contains technical information and instructions for downloading and running these models locally. The jina-embeddings-v5-omni models can be downloaded under a CC-BY-NC-4.0 license, so you can try them freely, but for commercial use, contact Elastic sales.