
When managing Elasticsearch indices, you may need to verify that all documents present in one index also exist in another, for example after a reindex operation, a migration, or a data pipeline run. Elasticsearch doesn't provide a built-in "diff" command for this, so you have to build the comparison yourself. The right approach depends on one key question: Are your document IDs stable between the two indices?

The problem

Imagine you have two indices, index-a (source) and index-b (target), and you want to find all documents that exist in index-a but are missing from index-b.

A naive approach, querying both indices and comparing results in memory, won't scale. Elasticsearch is designed to handle millions of documents, and loading them all at once isn’t practical.

There are two scenarios:

- IDs are stable: Both indices use the same _id for the same document (for example, emp_no as the document ID). This is the easy case.
- IDs are generated: Documents were ingested through different pipelines that assigned random or sequential IDs. You can't compare by _id; you need to match on content.

Let's walk through both.

Step 0 — A lighter CLI for Elasticsearch

All the examples in this post use escli, a small Rust command line interface (CLI) that wraps the Elasticsearch REST API. It reads your cluster URL and credentials from environment variables, so you don’t have to repeat authentication headers on every command.

To see why that matters, here's a typical _search call with raw curl:

With escli, the same request becomes:

The credentials live in a .env file that escli sources automatically — no -H "Authorization: ..." on every call, no risk of leaking secrets in shell history. The request body is passed via stdin (<<<), which makes it easy to pipe in multi-line JSON built dynamically with jq.

Step 1 — Count documents in both indices

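Before doing a full scan, get a quick count of each index. If the counts match, the indices are likely in sync, and you can usually skip the scan. Equal counts don't prove that the same documents are present, so treat this as a cheap first check rather than a guarantee.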

The _count API returns:

If the counts differ, proceed to the full comparison.
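As a rough illustration of this first check, the sketch below compares two _count responses. It assumes the responses have already been fetched and parsed into dictionaries; the function name count_gap is hypothetical, and the sample numbers are the ones used in the performance section of this post.

```python
def count_gap(source_count: dict, target_count: dict) -> int:
    """Return how many more documents the source reports than the target.

    Both arguments are parsed _count responses, which carry the total
    under the "count" key.
    """
    return source_count["count"] - target_count["count"]


# Sample responses shaped like _count output (shard details are illustrative).
index_a = {"count": 1000000, "_shards": {"total": 1, "successful": 1, "skipped": 0, "failed": 0}}
index_b = {"count": 950406, "_shards": {"total": 1, "successful": 1, "skipped": 0, "failed": 0}}

gap = count_gap(index_a, index_b)
if gap != 0:
    print(f"Counts differ by {gap}; run the full comparison.")
```

A gap of zero only says the totals agree, which is why the later steps still matter when you need certainty.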

Step 2 — When IDs mean something: Use op_type=create

If both indices use the same _id for the same document, for example, because you indexed documents using a functional business key like emp_no rather than a generated UUID, you can find and fix missing documents in a single _reindex call.

Why functional IDs matter

Using a meaningful field as _id (instead of a random UUID) is a best practice when the data has a natural key. It means:

- The same document always gets the same _id, regardless of which pipeline ingested it.
- You can easily update or delete documents by ID.
- You can use op_type=create to skip documents that already exist in the target.
- No client-side scanning or comparison is needed.

The op_type=create trick

_reindex with op_type=create tries to create each document from the source in the target. If a document with the same _id already exists, Elasticsearch reports it as a version_conflict and moves on. It doesn’t overwrite the existing document. Setting conflicts=proceed tells the API to continue instead of aborting on the first conflict.

The response tells you exactly what happened:

- created: Documents that were missing from index-b and have now been added.
- version_conflicts: Documents that already existed in index-b and were left untouched.
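For reference, here is a minimal sketch of the request body such a _reindex call takes and of how the two counters can be read back. The helper names build_reindex_body and summarize_reindex are hypothetical, and the sample response only contains the two fields discussed here, with values taken from the 1M-document demo.

```python
import json


def build_reindex_body(source_index: str, target_index: str) -> dict:
    """Body for POST /_reindex that only creates documents missing from the target."""
    return {
        # Keep going when a document already exists instead of aborting.
        "conflicts": "proceed",
        "source": {"index": source_index},
        # op_type=create refuses to overwrite an existing _id.
        "dest": {"index": target_index, "op_type": "create"},
    }


def summarize_reindex(response: dict) -> dict:
    """Reduce a _reindex response to the two counters that matter here."""
    return {
        "added": response.get("created", 0),
        "already_present": response.get("version_conflicts", 0),
    }


print(json.dumps(build_reindex_body("index-a", "index-b"), indent=2))

# Illustrative response fragment, not captured from a real cluster.
sample_response = {"created": 49594, "version_conflicts": 950406}
print(summarize_reindex(sample_response))
```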

No scanning, no client-side comparison, no intermediate file. Everything happens server-side in about six seconds on a 1M-document dataset.

Step 3 — When IDs are not stable: Business-key comparison

Sometimes you can't rely on _id. A document pipeline that generates IDs at ingestion time will assign a different _id each time the same record is processed. If index-a and index-b were populated by two such pipelines, the same employee record might have _id: "abc123" in one index and _id: "xyz789" in the other, even though the underlying data is identical.

In this case, you need to match documents by content rather than by ID. The key is to identify a set of fields that together form a unique business key.

For an employee dataset, a reasonable business key is (first_name, last_name, birth_date). A document in index-a is "missing" from index-b if no document in index-b has the same combination of those three fields.

3a — Scan the source with PIT + search_after

Open a point in time (PIT) on the source index to get a consistent snapshot, and then paginate through it, fetching only the business-key fields:

The sort key _shard_doc is the most efficient sort for full-index pagination: it uses the internal Lucene document order with no overhead. Repeat with search_after until the response contains zero hits. Always close the PIT when done:
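The scan loop, including the PIT cleanup, might look like the following sketch. It is not the demo's script: the send callable is a hypothetical stand-in for whatever HTTP client you use (it takes a method, a path, and an optional JSON body, and returns the parsed response), and the page size and keep_alive values are arbitrary assumptions.

```python
from typing import Callable, Iterator, Optional

# Hypothetical transport: (method, path, body) -> parsed JSON response.
Send = Callable[[str, str, Optional[dict]], dict]

KEY_FIELDS = ["first_name", "last_name", "birth_date"]


def build_page_body(pit_id: str, search_after: Optional[list] = None, size: int = 1000) -> dict:
    """Search body for one page of the PIT scan, fetching only the key fields."""
    body = {
        "size": size,
        "_source": KEY_FIELDS,
        "pit": {"id": pit_id, "keep_alive": "1m"},
        "sort": [{"_shard_doc": "asc"}],
    }
    if search_after is not None:
        body["search_after"] = search_after
    return body


def scan_source(send: Send, index: str, size: int = 1000) -> Iterator[list]:
    """Yield pages of hits from the index until a page comes back empty."""
    pit_id = send("POST", f"/{index}/_pit?keep_alive=1m", None)["id"]
    try:
        search_after = None
        while True:
            # A PIT search names no index in the path; the PIT carries it.
            response = send("POST", "/_search", build_page_body(pit_id, search_after, size))
            hits = response["hits"]["hits"]
            if not hits:
                break
            yield hits
            # The PIT id can change between pages, so reuse the latest one.
            pit_id = response.get("pit_id", pit_id)
            search_after = hits[-1]["sort"]
    finally:
        # Close the PIT even if the caller stops early or an error occurs.
        send("DELETE", "/_pit", {"id": pit_id})
```

Passing the transport in as a parameter keeps the pagination logic testable without a running cluster.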

3b — Check each page against the target via _msearch

For each page of source documents, build one _msearch request with one subquery per document. Each subquery uses a bool/must on the three business-key fields and requests size: 0, because we only need to know whether a match exists, not the document itself.

The response contains one entry per subquery, in the same order:

total.value == 0 means no document in index-b matches that business key; the document is missing. Collect the corresponding _id from the source page.

Note on .keyword subfields: term queries require exact (keyword) matching. The first_name and last_name fields must have a .keyword subfield in the index mapping. The demo's mapping.json includes this.
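To make the page check concrete, here is a sketch that builds the newline-delimited _msearch body for one page and picks out the source IDs whose subquery found nothing. The function names are hypothetical, and using a term query on birth_date as well as on the two .keyword subfields is an assumption about how the three fields are matched.

```python
import json


def build_msearch_body(hits: list, target_index: str) -> str:
    """Build an NDJSON _msearch body with one existence check per source hit."""
    lines = []
    for hit in hits:
        src = hit["_source"]
        # Header line: which index this subquery targets.
        lines.append(json.dumps({"index": target_index}))
        # Body line: size 0, since only the match count is needed.
        lines.append(json.dumps({
            "size": 0,
            "query": {"bool": {"must": [
                {"term": {"first_name.keyword": src["first_name"]}},
                {"term": {"last_name.keyword": src["last_name"]}},
                {"term": {"birth_date": src["birth_date"]}},
            ]}},
        }))
    # The _msearch API requires the body to end with a newline.
    return "\n".join(lines) + "\n"


def missing_ids(hits: list, msearch_response: dict) -> list:
    """Return source _ids whose subquery matched no document in the target."""
    return [
        hit["_id"]
        for hit, result in zip(hits, msearch_response["responses"])
        if result["hits"]["total"]["value"] == 0
    ]
```

This sketch assumes every subquery succeeds; a production script would also check each entry in responses for an error before reading its hit count.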

3c — Speed it up with split-by-date

If the business key includes a date field, you can partition the source into date slices and run each slice as an independent job. Each slice opens its own PIT with a range filter on birth_date, runs its own msearch loop, and writes its results to a separate file. The parent script launches all slices in parallel and aggregates the results when they’re all done.

Depending on your use case, you might partition by a different field instead; for example, if you have a team field, you could run one slice per team. The key is to find a field that splits the data into reasonably even chunks that can be processed in parallel.
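The slicing itself can be sketched as follows. This is an illustration rather than the demo's script: the date bounds in the usage lines are made up, compare_slice is a hypothetical callback that runs the PIT scan and _msearch loop for one slice and returns the missing IDs, and threads stand in for the separate jobs the post describes.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import date, timedelta


def date_slices(start: date, end: date, n: int) -> list:
    """Split the half-open range [start, end) into n contiguous slices."""
    total_days = (end - start).days
    bounds = [start + timedelta(days=total_days * i // n) for i in range(n + 1)]
    return list(zip(bounds[:-1], bounds[1:]))


def slice_filter(lo: date, hi: date) -> dict:
    """Range filter restricting a scan to one slice of birth_date."""
    return {"range": {"birth_date": {"gte": lo.isoformat(), "lt": hi.isoformat()}}}


def run_slices(slices: list, compare_slice) -> list:
    """Run compare_slice(lo, hi) for every slice in parallel and merge the results."""
    with ThreadPoolExecutor(max_workers=len(slices)) as pool:
        per_slice = pool.map(lambda s: compare_slice(*s), slices)
        return [doc_id for ids in per_slice for doc_id in ids]


# Hypothetical bounds; pick them from the actual range of your date field.
slices = date_slices(date(1950, 1, 1), date(2000, 1, 1), 5)
for lo, hi in slices:
    print(slice_filter(lo, hi))
```

Half-open ranges (gte on the lower bound, lt on the upper) keep a document on a slice boundary from being scanned twice.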

Performance on a 1M dataset

To validate the approaches, the demo generates 1,000,000 documents in index-a and deliberately skips ~5% in index-b (49,594 missing documents), and then runs the full compare → reindex cycle.

Results on a MacBook M3 Pro:

Comparison (compare-indices.sh):

| Strategy | Compare | Reindex | Total | How it works |
|---|---|---|---|---|
| op_type | | 6s | 6s | Full _reindex server-side, skips existing |
| business-key | 1m 38s | 4s | 1m 42s | PIT scan + _msearch by business key |
| split-by-date | 32s | 4s | 36s | Same as business-key, 5 slices in parallel |

The op_type=create approach is fastest because everything is server-side and requires no client-side scanning. The split-by-date strategy cuts the business-key total from 1m 42s down to 36s through parallelism (the compare phase alone drops from 1m 38s to 32s): not bad for a comparison across two 1M-document indices.

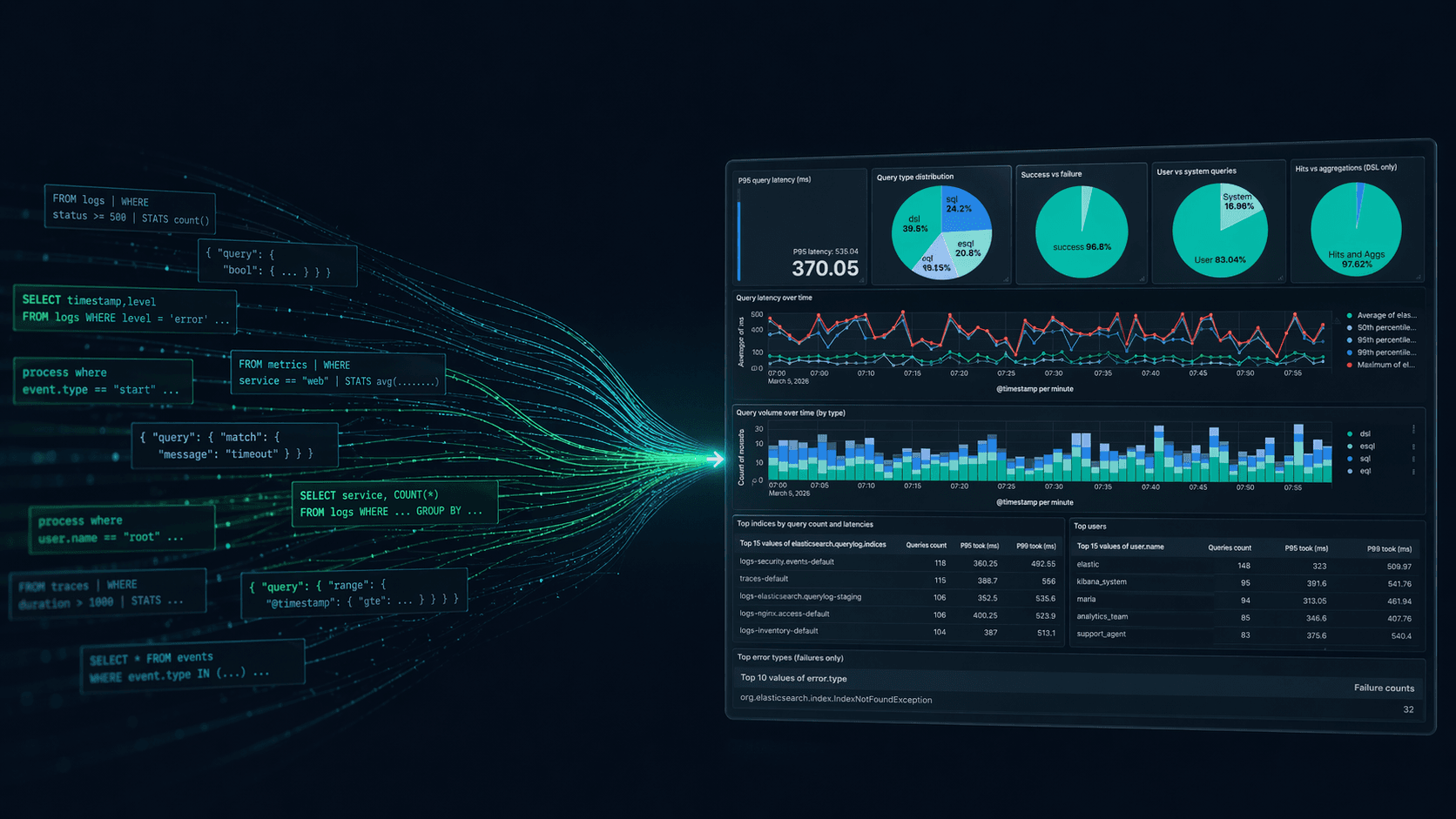

Decision tree
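One way to read the choice between the three strategies in this post is as a small function. This is a sketch of the reasoning above, not a reproduction of the original diagram; the function and parameter names are hypothetical, and the returned labels are the strategy names from the results table.

```python
def choose_strategy(ids_are_stable: bool, has_partition_field: bool) -> str:
    """Pick a comparison strategy from the two questions this post asks."""
    if ids_are_stable:
        # Same _id in both indices: let _reindex fill the gaps server-side.
        return "op_type"
    if has_partition_field:
        # Unstable IDs, but a date (or similar) field allows parallel slices.
        return "split-by-date"
    # Unstable IDs and nothing to partition on: single PIT scan + _msearch.
    return "business-key"


print(choose_strategy(ids_are_stable=False, has_partition_field=True))
```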

Conclusion

Elasticsearch doesn't offer a native index diff command, but the right strategy depends on your data model:

- Use functional _ids (a natural business key like emp_no) whenever possible. It unlocks the simplest and fastest approach: _reindex with op_type=create finds and fills gaps in one server-side call.
- When IDs are unstable, match by business key using PIT + _msearch. Partition by a field and run slices in parallel to recover most of the performance.

If you find yourself doing this regularly, consider computing a hash of your business key fields and using it as _id at ingestion time. You get the best of both worlds: stable IDs and efficient lookups.
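A hashed _id of that kind could be derived as in the sketch below. The choice of SHA-256, the separator character, and the function name business_key_id are all assumptions for illustration; any stable hash applied the same way in every pipeline would serve.

```python
import hashlib


def business_key_id(first_name: str, last_name: str, birth_date: str) -> str:
    """Derive a deterministic document _id from the business-key fields."""
    # Join with a separator that is unlikely to appear in the data, so that
    # ("ab", "c") and ("a", "bc") do not collapse to the same key.
    key = "\x1f".join([first_name, last_name, birth_date])
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


doc = {"first_name": "Ada", "last_name": "Lovelace", "birth_date": "1815-12-10"}
doc_id = business_key_id(doc["first_name"], doc["last_name"], doc["birth_date"])
print(doc_id)
```

Because the same record always produces the same _id, two independently populated indices become comparable with the op_type=create approach from Step 2.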

The complete demo, including dataset generation, comparison scripts, and reindex scripts, is available at https://github.com/dadoonet/blog-compare-indices/.