Agent Builder is available now GA. Get started with an Elastic Cloud Trial, and check out the documentation for Agent Builder here.

When querying Elasticsearch through an AI assistant, you need facts: index names, field mappings, Elasticsearch Query Language (ES|QL) queries, case IDs, sentiment scores. But current large language model (LLM) interfaces wrap every response in conversational padding:

"Of course! I'd be happy to help you..."

"This should give you a good overview..."

"Feel free to let me know if you need anything else!"

This isn't just annoying; it's expensive. Every token costs money and adds latency. For production Elasticsearch queries, that overhead compounds fast. In this post, we introduce elastic-caveman and share the results of a controlled experiment across eight live Model Context Protocol (MCP) scenarios against an Elasticsearch cluster. The findings: 63.6% average token reduction, 817 tokens saved, and zero loss of technical accuracy.

Enter elastic-caveman

elastic-caveman tests a simple hypothesis: Strip AI responses to pure signal, and measure the impact. The approach:

- Normal mode: Full conversational AI with greetings, explanations, and sign-offs.

- Caveman mode: Raw data with minimal structural labels only.

We tested both modes against a live Elasticsearch instance using MCP with real support ticket and Salesforce case data across eight production scenarios.

Results: 64% token reduction, zero accuracy loss

Here's what we found across eight live MCP tool calls: The Elastic-Caveman initiative has successfully optimized AI response size without compromising quality or functionality.

| Metric | Result |

|---|---|

| Scenarios tested | 8 |

| Success rate | 88% |

| Token reduction | 63.6% average |

| Total normal tokens | 1,284 |

| Total Caveman tokens | 467 |

| Tokens saved | 817 |

| Max reduction (single scenario) | 91.5% |

Key preservations (0% loss):

- Technical accuracy

- API paths

- ES|QL syntax

- Field names

The critical finding: Every field name, case ID, ES|QL query, account name, and sentiment score was preserved exactly. Not approximately. Exactly.

Real examples: Before and after

Example 1. List indices: 87% reduction

User: Show me my indices

Normal mode (107 tokens):

Caveman mode (14 tokens):

Saved: 93 tokens (86.9%)

Example 2. Generate ES|QL query: 75% reduction

User: Show me open critical tickets grouped by product area

Normal mode (208 tokens):

[followed by the actual query, plus 150+ tokens of step-by-step explanation]

Caveman mode (52 tokens):

Saved: 156 tokens (75.0%). ES|QL syntax is character-for-character identical in both modes.

Example 3. Search recent support tickets: 35% reduction

User: Show me 5 recent support tickets

Caveman mode (143 tokens):

What gets removed vs. what stays

When we clean up the output, we strip out conversational filler, like “Of course! I’d be happy to help you…”, “This should give you a good overview…”, or “Would you like me to help you prioritize these?”, and we keep every piece of factual content, such as ES|QL snippets, like FROM support-tickets WHERE status = "Open"; field names like sentiment_score, product_area, and resolution_hours; and index names, like support-tickets and salesforce-cases. We also preserve concrete identifiers and business entities, such as case IDs CASE-0012 and CASE-0002; account names, like Pinnacle Financial and United Oil Gas Corp; along with all numeric values, for example, a sentiment_score of -0.94, counts like 47 duplicates, durations such as 18 days, or metrics like 27.0 average hours, so the edited text is tightly focused on query syntax, entities, and numbers while discarding only the polite scaffolding.

Results varied by operation type:

| Query type | Token reduction | Why |

|---|---|---|

| Metadata listings | 85–92% | Small payload, maximum filler in normal mode |

| ES|QL generation | 70–75% | Query is identical; explanation is eliminated |

| Data-heavy searches | 35–40% | Actual data dominates, leaving less room for fluff |

Complete evaluation breakdown

Token savings by query type across all eight scenarios against live MCP data:

| Scenario | Normal tokens | Caveman tokens | Reduction | Tokens saved | MCP tool |

|---|---|---|---|---|---|

| T1: List all streams | 118 | 10 | 91.5% | 108 | platform.streams.list_streams |

| T2: List indices | 107 | 14 | 86.9% | 93 | platform.core.list_indices |

| T3: Get index mapping | 143 | 40 | 72.0% | 103 | platform.core.get_index_mapping |

| T4: Generate ES|QL query | 208 | 52 | 75.0% | 156 | platform.core.generate_esql |

| T5: Execute ES|QL aggregation | 149 | 44 | 70.5% | 105 | platform.core.execute_esql |

| T6: Search recent tickets | 221 | 143 | 35.3% | 78 | platform.core.search |

| T7: Search escalated cases | 198 | 128 | 35.4% | 70 | platform.core.search |

| T8: ES|QL stats by priority | 140 | 36 | 74.3% | 104 | platform.core.execute_esql |

| TOTALS | 1,284 | 467 | 63.6% | 817 |

Technical accuracy verification:

| Accuracy check | Result | Details |

|---|---|---|

| ES|QL syntax preserved | PASS | FROM, WHERE, STATS, SORT, LIMIT identical |

| Field names preserved | PASS | account_id, sentiment_score, product_area verbatim |

| Index names preserved | PASS | support-tickets, salesforce-cases unchanged |

| Case IDs preserved | PASS | CASE-0012, CASE-0002 exact |

| Account names preserved | PASS | Pinnacle Financial, United Oil Gas Corp exact |

| Numeric values preserved | PASS | Sentiment scores -0.94, -0.88; days open 18, 7 exact |

| Priority/status labels | PASS | Critical, Escalated, Open verbatim |

| Null values preserved | PASS | null for low priority resolution hours retained |

| Error messages preserved | PASS | Tool validation errors quoted verbatim |

Zero information loss. 64% fewer tokens.

Why this matters for Elastic users

For teams building AI assistants on Elasticsearch, 64% token reduction means 64% savings on output costs at scale, faster streaming responses, and more context window space for actual data rather than fillers. When you're debugging an ES|QL query at 2 a.m., you don't need an AI telling you it's delighted to help; you just need the query response!

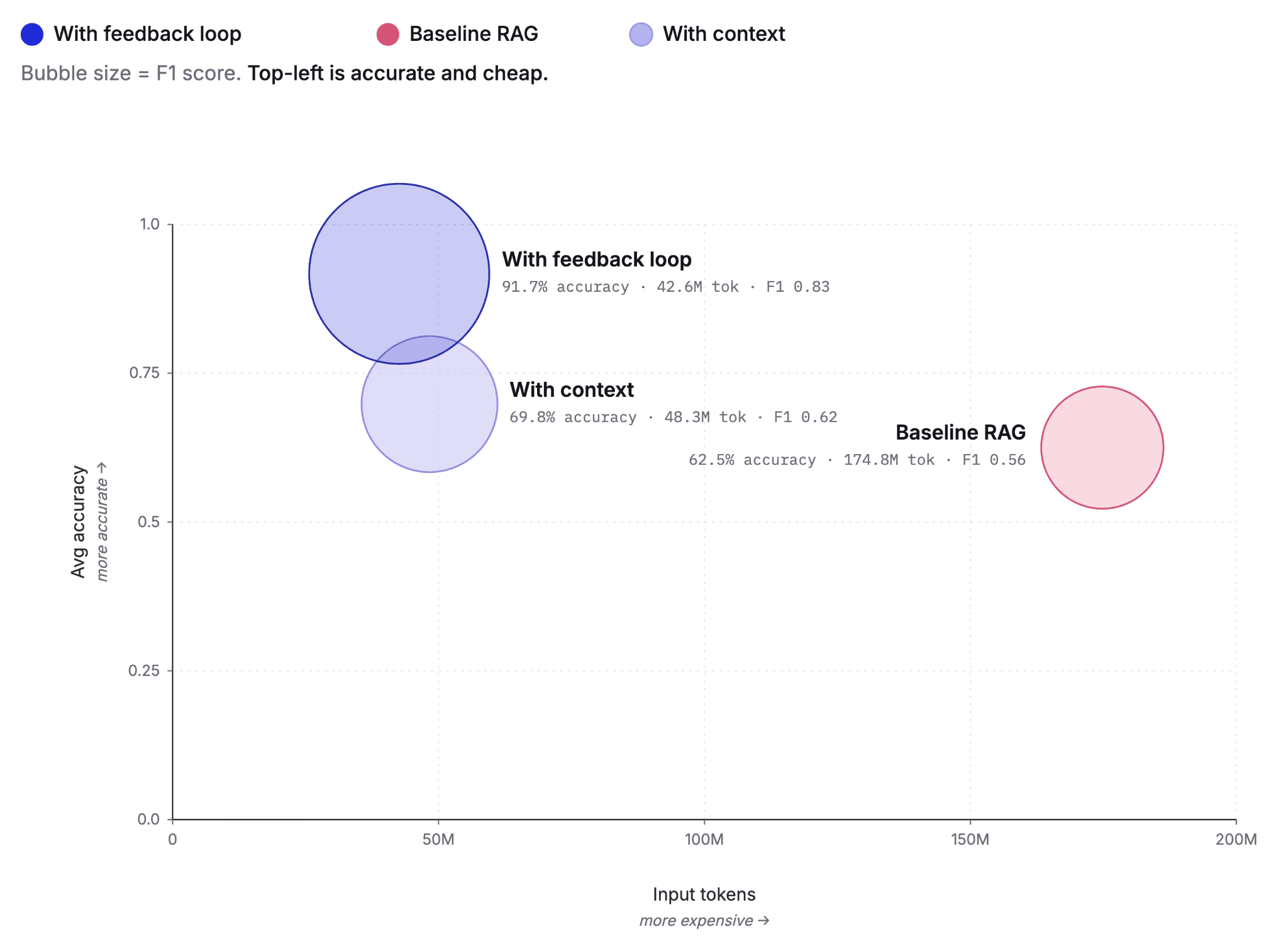

The bigger picture: Rethinking AI interfaces

This experiment reveals something fundamental: Conversational AI interfaces optimize for the wrong metric. They optimize for sounding human when users often just want accurate data, fast.

For technical workflows, especially data queries, there's a strong case for mode-switching:

- Conversational mode: When exploring or learning.

- Caveman mode: When you know what you want and need it now.

The Elastic MCP server makes this possible by returning structured, accurate responses that work in both modes without modification.

How elastic-caveman works

elastic-caveman is an Agent Skill, that is, a markdown file with YAML front matter that any compatible AI agent reads and follows. No runtime. No binary. No API calls. Just instructions that reshape how your agent talks when working with Elasticsearch.

Install with:

Supported agents: Claude Code, Cursor, Codex, Windsurf, GitHub Copilot, Gemini CLI, Roo

Trigger with:/elastic-caveman

Disable with:"normal mode" or "verbose"

Live in action

We tested elastic-caveman with the Claude model to measure its impact on token usage and cost:

- With elastic-caveman: Token usage was 368 tokens (in) and 1.6k tokens (out), resulting in a cost of $0.11.

- Without elastic-caveman: Token usage was 367 tokens (in) and 1.8k tokens (out), resulting in a cost of $0.12.

Prompt: Get me the critical support tickets from the support-tickets index in kibana for Pinnacle Financial

This test demonstrates the efficiency of elastic-caveman.

What's next

Caveman mode is just the beginning. Consider dynamic mode switching: Flip between concise and conversational mid-session. Or a hybrid approach: Lean on success, explanatory on errors. Or custom verbosity levels for teams that want something in between. The goal isn't to make AI assistants robotic; it's to give users control over the signal-to-noise ratio.

Try it yourself

Test caveman mode with your Elasticsearch data:

- Set up the Elastic MCP server.

- Install elastic-caveman.

- Run queries in both normal and caveman modes.

- Compare token counts and accuracy.

Full evaluation methodology and scripts available in the GitHub repo.

The bottom line

Across eight real scenarios with live Elasticsearch data, elastic-caveman delivered 64% average token reduction with zero accuracy loss and 100% preservation of ES|QL syntax, field names, and technical values. Sometimes the best AI response isn't the chattiest one. Sometimes you just need the data; and with elastic-caveman, you can get it 64% faster. Ready to optimize your Elasticsearch AI workflows? Check out Elasticsearch Labs for more tutorials, integrations, and research on building with Elasticsearch and AI, or start building with Elasticsearch today.

Want to optimize your Elasticsearch AI workflows? Check out Elasticsearch Labs for more tutorials, integrations, and research on building with Elasticsearch and AI. Ready to try it yourself? Start building with Elasticsearch today.