Scaling Elastic Agent on Kubernetes

For more information on how to deploy Elastic Agent on Kubernetes, review these pages:

This document summarizes key factors and best practices for using Elastic Observability to monitor Kubernetes infrastructure at scale. Users need to consider several parameters and adjust the Elastic Stack accordingly. The following elements are affected as the size of the Kubernetes cluster increases:

- The amount of metrics being collected from several Kubernetes endpoints

- The resources that Elastic Agent needs to cope with the high CPU and memory demands of its internal processing

- The Elasticsearch resources needed due to the higher rate of metric ingestion

- The response times of dashboard visualizations, as more data is requested for a given time window

The document is divided into two main sections:

Kubernetes observability is based on the Elastic Kubernetes integration, which collects metrics from several components:

Per node:

- kubelet

- controller-manager

- scheduler

- proxy

Cluster-wide (metrics that are unique for the whole cluster):

- kube-state-metrics

- apiserver

The controller manager and scheduler data streams are enabled only on the specific nodes where those components actually run, based on autodiscovery rules.

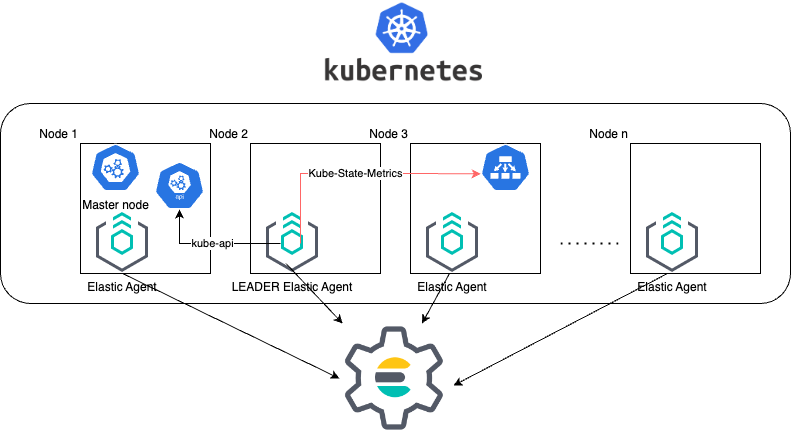

The default manifest deploys Elastic Agent as a DaemonSet, which results in an Elastic Agent being deployed on every node of the Kubernetes cluster.

Additionally, by default one agent is elected as leader (for more information, see Kubernetes LeaderElection Provider). The Elastic Agent Pod that holds the leadership lock is responsible for collecting the cluster-wide metrics in addition to its node's metrics.

The diagram above shows how Elastic Agent collects and sends metrics to Elasticsearch. Because the leader Agent is also responsible for collecting cluster-level metrics, it requires additional resources.

The DaemonSet deployment approach with leader election simplifies the installation of Elastic Agent, because fewer Kubernetes resources are defined in the manifest and only a single Agent policy is needed for all Agents. Hence it is the default supported method for managed Elastic Agent installation.

The resourcing of your Pods and their scheduling priority (see the Scheduling priority section) are two topics that might be affected as the Kubernetes cluster size increases. The increasing demand for resources might leave the Elastic Agents of your cluster under-resourced.

Based on our tests, we advise configuring only the limits section of the resources section in the manifest. This way, the requests settings of the resources fall back to the limits specified. The limits are the upper bound for your microservice process: the process can operate with fewer resources, while Kubernetes is prevented from assigning more, which protects against possible resource exhaustion.

    resources:
      limits:
        cpu: "1500m"
        memory: "800Mi"

Based on our Elastic Agent Scaling tests, the following table provides guidelines to adjust Elastic Agent limits on different Kubernetes sizes:

Sample Elastic Agent Configurations:

| No of Pods in K8s Cluster | Leader Agent Resources | Rest of Agents |
|---|---|---|
| 1000 | cpu: "1500m", memory: "800Mi" | cpu: "300m", memory: "600Mi" |
| 3000 | cpu: "2000m", memory: "1500Mi" | cpu: "400m", memory: "800Mi" |
| 5000 | cpu: "3000m", memory: "2500Mi" | cpu: "500m", memory: "900Mi" |
| 10000 | cpu: "3000m", memory: "3600Mi" | cpu: "700m", memory: "1000Mi" |

The above tests were performed with Elastic Agent version 8.7 and a scraping period of 10 seconds (the period setting of the Kubernetes integration). These numbers are only indicators and should be validated for each Kubernetes environment and amount of workloads.

Although the DaemonSet installation is simple, it cannot accommodate agent resource requirements that vary depending on the collected metrics. Appropriate resource assignment at large scale requires more granular installation methods.

The Elastic Agent deployment is split into groups as follows:

- A dedicated Elastic Agent deployment of a single Agent for collecting cluster-wide metrics from the apiserver

- Node-level Elastic Agents (no leader Agent) in a DaemonSet

- kube-state-metrics shards and Elastic Agents in the StatefulSet defined in the kube-state-metrics autosharding manifest

Each of these groups of Elastic Agents will have its own policy specific to its function and can be resourced independently in the appropriate manifest to accommodate its specific resource requirements.
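As a rough illustration of the first group, the dedicated Agent could be declared as a single-replica Deployment with its own resource limits. This is a minimal sketch and not the official manifest: the names, labels, service account, image tag, and limit values are all assumptions that need to be replaced with the ones from your environment.

```yaml
# Hypothetical Deployment for the single Agent that collects cluster-wide
# apiserver metrics. Names, image tag, and limits are illustrative only.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: elastic-agent-apiserver          # hypothetical name
  namespace: kube-system
spec:
  replicas: 1                            # one Agent for the cluster-wide metrics
  selector:
    matchLabels:
      app: elastic-agent-apiserver
  template:
    metadata:
      labels:
        app: elastic-agent-apiserver
    spec:
      serviceAccountName: elastic-agent  # assumed to exist with the required RBAC
      containers:
        - name: elastic-agent
          image: docker.elastic.co/beats/elastic-agent:8.8.0  # assumed version
          # Fleet enrollment or standalone configuration omitted for brevity.
          resources:
            limits:                      # sized independently of the DaemonSet Agents
              cpu: "500m"                # illustrative value
              memory: "700Mi"            # illustrative value
```

Because this group has its own manifest, its limits can be tuned without touching the node-level DaemonSet.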

Resource assignment requirements led us to alternative installation methods.

The main suggestion for large-scale clusters is to install Elastic Agent as a side container along with the kube-state-metrics shard. The installation is explained in detail in Elastic Agent with Kustomize in Autosharding.

The following alternative configuration methods have been verified:

- With hostNetwork: false
  - Elastic Agent as a side container within the KSM shard pod
  - For non-leader Elastic Agent deployments that collect per KSM shard
- With taints/tolerations to isolate the Elastic Agent DaemonSet pods from the rest of the deployments
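The two options can be sketched as pod template fragments. The taint key and value below are hypothetical: they assume that the relevant nodes were tainted beforehand with a matching key, value, and effect.

```yaml
# Fragment of the KSM shard pod template that carries the Agent side container
# (not a complete manifest).
spec:
  template:
    spec:
      hostNetwork: false
---
# Fragment of the Elastic Agent DaemonSet pod template (not a complete manifest).
spec:
  template:
    spec:
      tolerations:
        - key: "dedicated"           # hypothetical taint key
          operator: "Equal"
          value: "elastic-agent"     # hypothetical taint value
          effect: "NoSchedule"       # must match the effect of the node taint
```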

You can find more information in the document called Elastic Agent Manifests in order to support Kube-State-Metrics Sharding.

Based on our Elastic Agent scaling tests, the following table aims to help users configure their KSM sharding as the Kubernetes cluster scales:

| No of Pods in K8s Cluster | No of KSM Shards | Agent Resources |
|---|---|---|
| 1000 | No sharding (can be handled with default KSM config) | limits: memory: 700Mi, cpu: 500m |
| 3000 | 4 Shards | limits: memory: 1400Mi, cpu: 1500m |
| 5000 | 6 Shards | limits: memory: 1400Mi, cpu: 1500m |
| 10000 | 8 Shards | limits: memory: 1400Mi, cpu: 1500m |

The tests above were performed with Elastic Agent version 8.8 with TSDB enabled and a scraping period of 10 seconds (for the Kubernetes integration). These numbers are only indicators and should be validated for each Kubernetes policy configuration, along with the applications that the Kubernetes cluster might include.
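To make the sharding guidance concrete, the 3000-pod row of the table could translate into a StatefulSet along the following lines. This is a sketch under stated assumptions, not the manifest from the autosharding document: the object names, labels, service account, and image tags are hypothetical, and the Agent's own configuration is omitted. The --pod and --pod-namespace arguments are the kube-state-metrics options for automated sharding in a StatefulSet.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kube-state-metrics
  namespace: kube-system
spec:
  serviceName: kube-state-metrics
  replicas: 4                            # 4 shards, per the 3000-pod row
  selector:
    matchLabels:
      app.kubernetes.io/name: kube-state-metrics
  template:
    metadata:
      labels:
        app.kubernetes.io/name: kube-state-metrics
    spec:
      hostNetwork: false
      serviceAccountName: kube-state-metrics   # assumed; RBAC objects omitted
      containers:
        - name: kube-state-metrics
          image: registry.k8s.io/kube-state-metrics/kube-state-metrics:v2.9.2  # assumed version
          args:
            # Automated sharding: each replica derives its shard from its pod name.
            - --pod=$(POD_NAME)
            - --pod-namespace=$(POD_NAMESPACE)
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
        - name: elastic-agent            # side container scraping the local shard
          image: docker.elastic.co/beats/elastic-agent:8.8.0  # assumed version
          resources:
            limits:                      # values from the 3000-pod row
              cpu: "1500m"
              memory: "1400Mi"
```

Scaling to the 5000-pod or 10000-pod rows would then mostly be a matter of raising replicas to 6 or 8.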

Tests were run with up to 10K pods per cluster. Scaling to a larger number of pods might require additional configuration on the Kubernetes side and from cloud providers, but the basic idea of installing Elastic Agent while horizontally scaling KSM remains the same.

Setting a low priority for Elastic Agent compared to other pods might also result in Elastic Agent being in Pending state. The scheduler tries to preempt (evict) lower priority Pods to make scheduling of the higher priority pending Pods possible.

To prioritize the agent installation ahead of the rest of the application microservices, PriorityClasses are suggested.
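A PriorityClass and its use in the Agent pod template might look like the following sketch. The class name and the priority value are hypothetical and should be chosen relative to the priorities already used in your cluster.

```yaml
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: elastic-agent-priority       # hypothetical name
value: 1000000                       # illustrative; a higher value means higher priority
globalDefault: false                 # only pods that reference the class are affected
description: "Priority class for Elastic Agent pods."
---
# Fragment of the Elastic Agent pod template referencing the class above.
spec:
  template:
    spec:
      priorityClassName: elastic-agent-priority
```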

The policy configuration of the Kubernetes package can heavily affect the amount of metrics collected and finally ingested. Consider the following factors to make your collection and ingestion lighter:

- Scraping period of Kubernetes endpoints

- Disabling log collection

- Keep audit logs disabled

- Disable events dataset

- Disable Kubernetes control plane datasets in cloud-managed Kubernetes instances (for more information, see the Run Elastic Agent on GKE managed by Fleet, Run Elastic Agent on Amazon EKS managed by Fleet, and Run Elastic Agent on Azure AKS managed by Fleet pages)
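For Fleet-managed Agents these settings are changed in the integration policy in Kibana. For standalone Agents, the scraping period is set per stream in the Agent configuration. The fragment below is a sketch that assumes the layout of a standalone kubernetes/metrics input; the input id and the 30s value are illustrative only.

```yaml
# Fragment of a standalone Elastic Agent configuration (assumed layout).
inputs:
  - id: kubernetes/metrics-kubelet       # hypothetical input id
    type: kubernetes/metrics
    use_output: default
    streams:
      - data_stream:
          type: metrics
          dataset: kubernetes.pod
        metricsets:
          - pod
        hosts:
          - "https://${env.NODE_NAME}:10250"
        period: 30s                      # illustrative; a longer period means fewer documents
```

Data streams that are not needed, such as events or audit logs, can simply be left out of the inputs.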

The Dashboard Guidelines document provides guidance on how to implement your dashboards and is constantly updated to track the needs of Observability at scale.

User experience regarding dashboard response times is also affected by the size of the data being requested. As dashboards can contain multiple visualizations, the general recommendation is to split visualizations and group them according to frequency of access. Fewer visualizations per dashboard tend to improve user experience.

A new environment variable, ELASTIC_NETINFO: false, has been introduced to globally disable the indexing of the host.ip and host.mac fields in your Kubernetes integration. For more information, see Environment variables.

Setting this to false is recommended for large scale setups where the host.ip and host.mac fields' index size increases. The number of IPs and MAC addresses reported increases significantly as a Kubernetes cluster grows. This leads to considerably increased indexing time, as well as the need for extra storage and additional overhead for visualization rendering.
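In a Kubernetes manifest, the variable would typically be set in the env section of the Elastic Agent container. The fragment below is a minimal sketch; the container name is an assumption.

```yaml
# Fragment of the Elastic Agent pod template (not a complete manifest).
containers:
  - name: elastic-agent              # assumed container name
    env:
      - name: ELASTIC_NETINFO
        value: "false"               # quoted: env values must be strings
```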

The configuration of the Elastic Stack needs to be taken into consideration in large-scale deployments. For Elastic Cloud deployments, the choice of the Elastic Cloud hardware profile is important.

For heavy processing and high ingestion rates, the CPU-optimized profile is recommended.

After the Elastic Agent deployment, we need to verify that the Agent services are healthy and not restarting (stability), and that the collection of metrics continues at the expected rate (latency).

For stability:

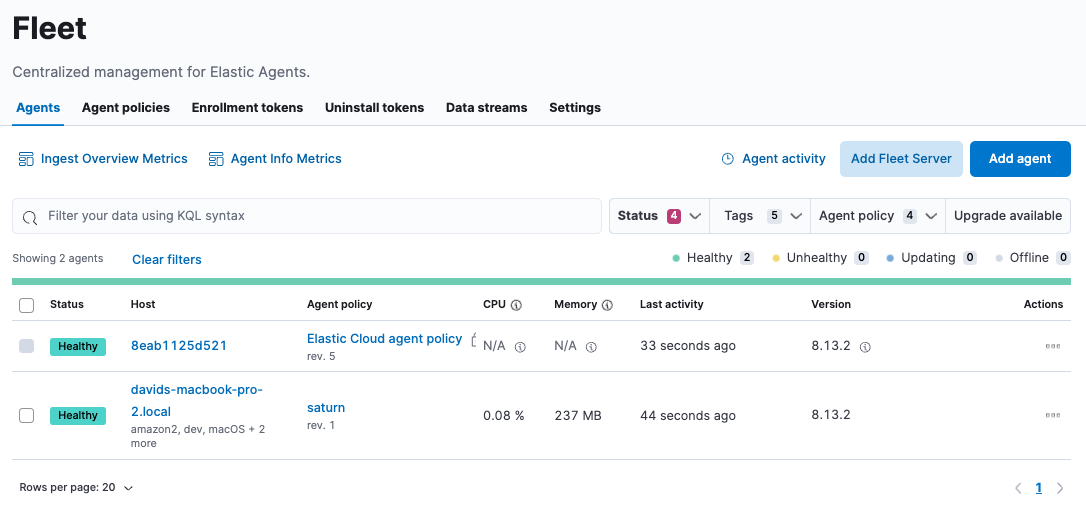

If Elastic Agent is configured as managed, you can observe the agent status in Kibana under Fleet > Agents.

Additionally, you can verify the process status with the following commands:

    kubectl get pods -A | grep elastic
    kube-system   elastic-agent-ltzkf   1/1   Running   0   25h
    kube-system   elastic-agent-qw6f4   1/1   Running   0   25h
    kube-system   elastic-agent-wvmpj   1/1   Running   0   25h

Find the leader agent:

    kubectl get leases -n kube-system | grep elastic
    NAME                           HOLDER                                     AGE
    elastic-agent-cluster-leader   elastic-agent-leader-elastic-agent-qw6f4   25h

Exec into the leader agent and verify the process status:

    kubectl exec -ti -n kube-system elastic-agent-qw6f4 -- bash
    root@gke-gke-scaling-gizas-te-default-pool-6689889a-sz02:/usr/share/elastic-agent# ./elastic-agent status
    State: HEALTHY
    Message: Running
    Fleet State: HEALTHY
    Fleet Message: (no message)
    Components:
      * kubernetes/metrics (HEALTHY)
        Healthy: communicating with pid '42423'
      * filestream (HEALTHY)
        Healthy: communicating with pid '42431'
      * filestream (HEALTHY)
        Healthy: communicating with pid '42443'
      * beat/metrics (HEALTHY)
        Healthy: communicating with pid '42453'
      * http/metrics (HEALTHY)
        Healthy: communicating with pid '42462'

As the Kubernetes cluster grows, a common problem is that agent processes restart because of a lack of CPU or memory resources. In the agent logs, you can look for state changes like the following:

kubectl logs -n kube-system elastic-agent-qw6f4 | grep "kubernetes/metrics"

[output truncated ...]

(HEALTHY->STOPPED): Suppressing FAILED state due to restart for '46554' exited with code '-1'","log":{"source":"elastic-agent"},"component":{"id":"kubernetes/metrics-default","state":"STOPPED"},"unit":{"id":"kubernetes/metrics-default-kubernetes/metrics-kube-state-metrics-c6180794-70ce-4c0d-b775-b251571b6d78","type":"input","state":"STOPPED","old_state":"HEALTHY"},"ecs.version":"1.6.0"}

{"log.level":"info","@timestamp":"2023-04-03T09:33:38.919Z","log.origin":{"file.name":"coordinator/coordinator.go","file.line":861},"message":"Unit state changed kubernetes/metrics-default-kubernetes/metrics-kube-apiserver-c6180794-70ce-4c0d-b775-b251571b6d78 (HEALTHY->STOPPED): Suppressing FAILED state due to restart for '46554' exited with code '-1'","log":{"source":"elastic-agent"}

You can check the current resource consumption by running the kubectl top pod command and identify whether the agents are close to the limits you have specified in your manifest.

    kubectl top pod -n kube-system | grep elastic
    NAME                  CPU(cores)   MEMORY(bytes)
    elastic-agent-ltzkf   30m          354Mi
    elastic-agent-qw6f4   67m          467Mi
    elastic-agent-wvmpj   27m          357Mi

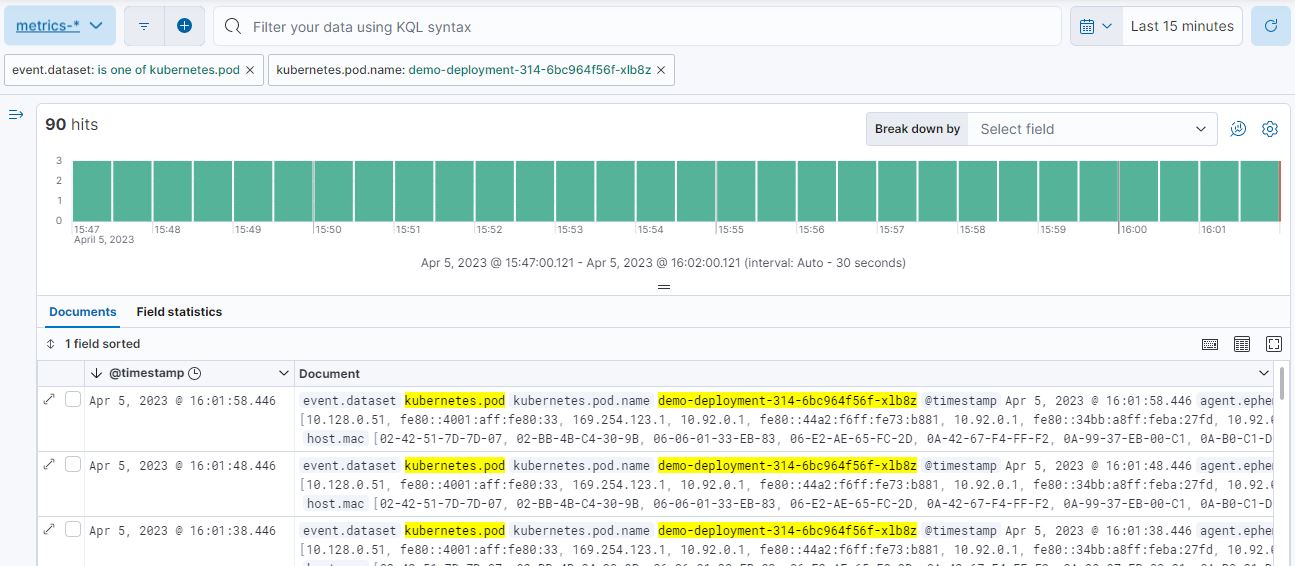

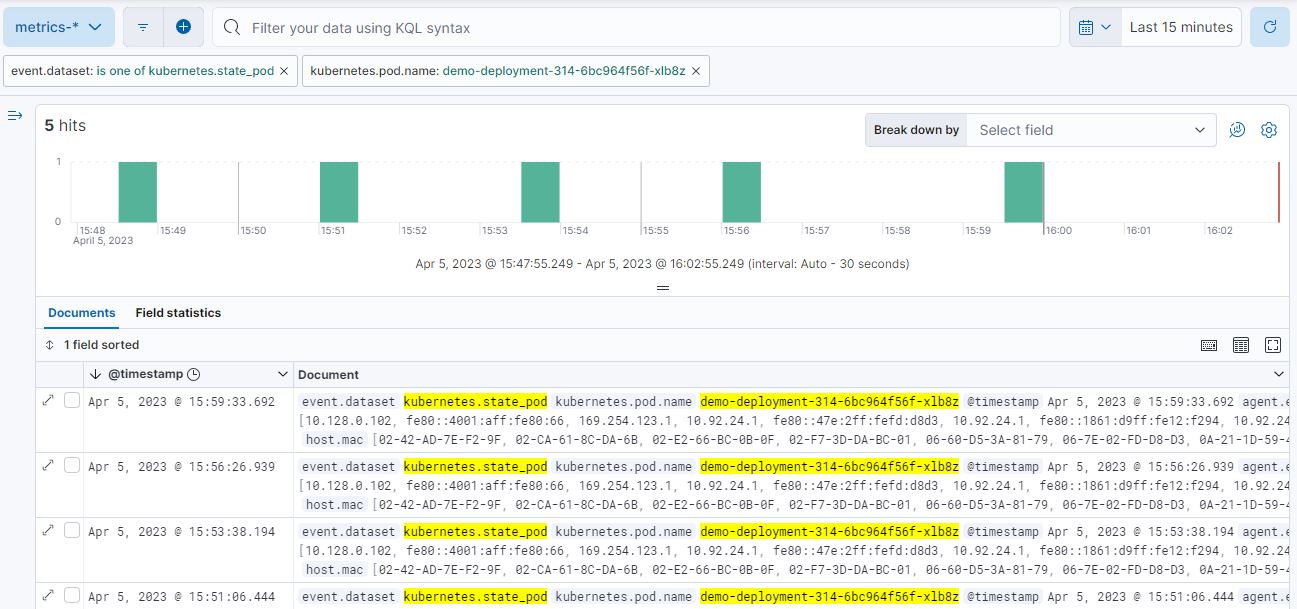

Kibana Discover can be used to identify how frequently your metrics are being ingested.

Filter for Pod dataset:

Filter for State_Pod dataset

Identify how many events have been sent to Elasticsearch:

    kubectl logs -n kube-system elastic-agent-h24hh -f | grep -i state_pod
    [output truncated ...]
    "state_pod":{"events":2936,"success":2936}

The number of events denotes the number of documents that should be shown in the Kibana Discover page.

For example, in a cluster with 798 pods, 798 documents should be shown in each block of ingestion in Kibana.

In some cases, Elasticsearch might not be able to cope with the rate at which data is being ingested. To verify the resource utilization, installing an Elastic Stack monitoring cluster is advised.

Additionally, in Elastic Cloud deployments you can navigate to Manage Deployment > Deployments > Monitoring > Performance. The corresponding dashboards for CPU Usage, Index Response Times, and Memory Pressure can reveal possible problems and indicate whether vertical scaling of the Elastic Stack resources is needed.