Overview

Analyzing the past and present

The machine learning features automate the analysis of time-series data by creating accurate baselines of normal behavior in the data and identifying anomalous patterns in that data. You can submit your data for analysis in batches or continuously in real-time datafeeds.

Proprietary machine learning algorithms detect the following circumstances, score them, and link them with statistically significant influencers in the data:

- Anomalies related to temporal deviations in values, counts, or frequencies

- Statistical rarity

- Unusual behaviors for a member of a population

Automated periodicity detection and quick adaptation to changing data ensure that you don’t need to specify algorithms, models, or other data science-related configurations in order to get the benefits of machine learning.

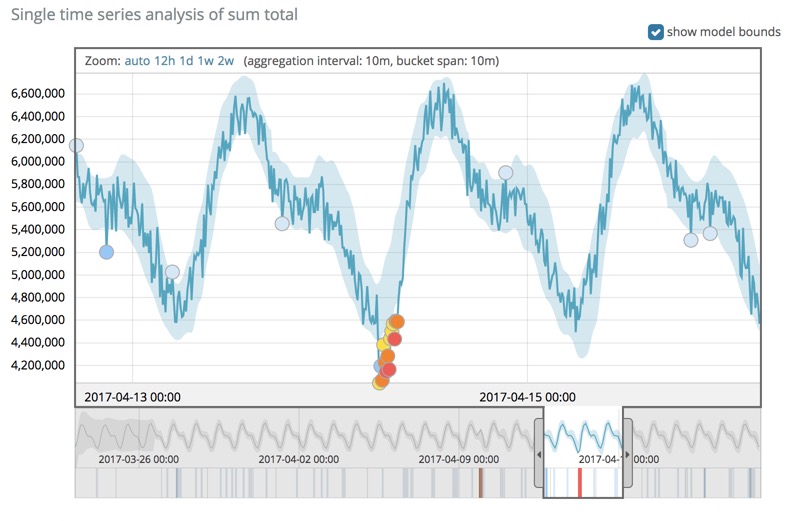

You can view the machine learning results in Kibana where, for example, charts illustrate the actual data values, the bounds for the expected values, and the anomalies that occur outside these bounds.
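The idea of a baseline with expected bounds can be illustrated with a deliberately simple sketch. It is not the proprietary algorithm the machine learning features use; it assumes a rolling mean and standard deviation as a stand-in baseline, and the function name `flag_anomalies` and its parameters are hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=5, k=3.0):
    """Return indices of values outside mean +/- k * stdev of the preceding window.

    Illustrative stand-in for a learned baseline, not the product's model.
    """
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        center = mean(history)
        spread = stdev(history)
        lower, upper = center - k * spread, center + k * spread
        # A point is anomalous when it falls outside the expected bounds.
        if not (lower <= values[i] <= upper):
            anomalies.append(i)
    return anomalies


series = [10, 11, 9, 10, 12, 11, 10, 50, 11, 10]
print(flag_anomalies(series))  # [7]
```

A fixed window like this cannot model periodicity or adapt to changing data, which are the parts the machine learning features handle automatically.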

Forecasting the future

After the machine learning features create baselines of normal behavior for your data, you can use that information to extrapolate future behavior.

You can use a forecast to estimate a time series value at a specific future date. For example, you might want to determine how many users you can expect to visit your website next Sunday at 0900.

You can also use it to estimate the probability of a time series value occurring at a future date. For example, you might want to determine how likely it is that your disk utilization will reach 100% before the end of next week.

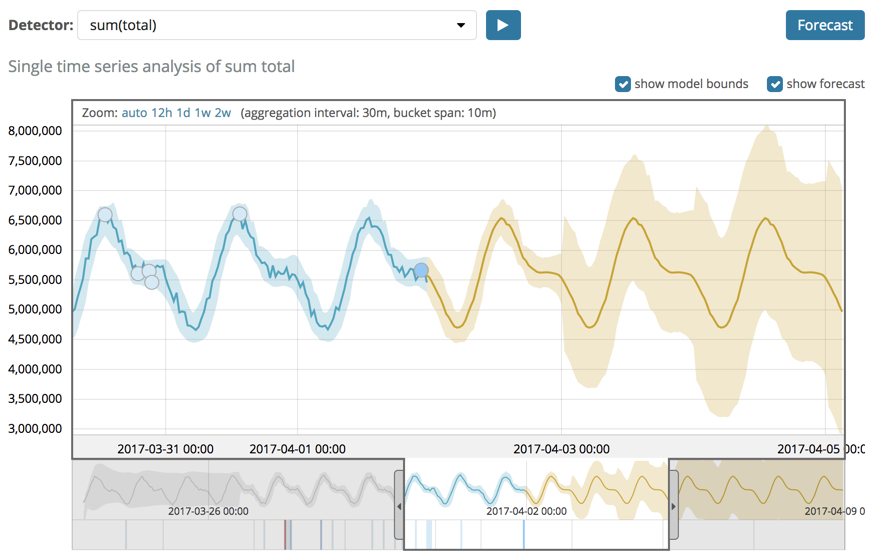

Each forecast has a unique ID, which you can use to distinguish between forecasts that you created at different times. You can create a forecast by using the forecast anomaly detection jobs API or by using Kibana. For example:

The yellow line in the chart represents the predicted data values. The shaded yellow area represents the bounds for the predicted values, which also gives an indication of the confidence of the predictions.

When you create a forecast, you specify its duration, which indicates how far the forecast extends beyond the last record that was processed. By default, the duration is 1 day. Typically, the farther into the future you forecast, the lower the confidence levels become (that is to say, the bounds widen). Eventually, if the confidence levels are too low, the forecast stops.
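To see why bounds tend to widen with the forecast horizon, consider a minimal sketch that assumes a naive random-walk model, in which uncertainty grows with the square root of the number of steps ahead. This is an assumption chosen for illustration, not the model the machine learning features use; `random_walk_bounds` and its parameters are hypothetical names.

```python
import math


def random_walk_bounds(last_value, step_std, horizon, z=1.96):
    """Return (lower, upper) bounds for steps 1..horizon of a random-walk forecast.

    The point forecast stays at last_value; only the bounds change.
    """
    bounds = []
    for h in range(1, horizon + 1):
        # Forecast variance accumulates per step, so the std grows as sqrt(h).
        half_width = z * step_std * math.sqrt(h)
        bounds.append((last_value - half_width, last_value + half_width))
    return bounds


for step, (lower, upper) in enumerate(random_walk_bounds(100.0, 2.0, 4), start=1):
    print(step, round(lower, 2), round(upper, 2))
```

Each later step prints a wider interval than the one before it, which mirrors the widening shaded area in a forecast chart.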

You can also optionally specify when the forecast expires. By default, it expires in 14 days and is deleted automatically thereafter. You can specify a different expiration period by using the `expires_in` parameter in the forecast anomaly detection jobs API.
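As a rough sketch, a forecast request that sets both the duration and the expiration might be assembled as follows. The helper `build_forecast_request` and the job ID `web-traffic` are hypothetical. The defaults mirror the 1 day and 14 days described above, written in Elasticsearch time-unit notation. The sketch only builds the request; sending it to your cluster (with whatever host and authentication you use) is left out.

```python
import json


def build_forecast_request(job_id, duration="1d", expires_in="14d"):
    """Build the method, path, and JSON body for a forecast request.

    Nothing is sent; the caller decides how to reach the cluster.
    """
    path = f"/_ml/anomaly_detectors/{job_id}/_forecast"
    body = json.dumps({"duration": duration, "expires_in": expires_in})
    return "POST", path, body


# Hypothetical job ID; forecast 3 days ahead and keep the result for 30 days.
method, path, body = build_forecast_request("web-traffic", duration="3d", expires_in="30d")
print(method, path)
print(body)
```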

There are some limitations that affect your ability to create a forecast:

- You can generate only three forecasts concurrently. There is no limit to the number of forecasts that you retain. Existing forecasts are not overwritten when you create new forecasts. Rather, they are automatically deleted when they expire.

- If you use an `over_field_name` property in your anomaly detection job (that is to say, it’s a population job), you cannot create a forecast.

- If you use any of the following analytical functions in your anomaly detection job, you cannot create a forecast:

  - `lat_long`

  - `rare` and `freq_rare`

  - `time_of_day` and `time_of_week`

  For more information about any of these functions, see Function reference.

- Forecasts run concurrently with real-time machine learning analysis. That is to say, machine learning analysis does not stop while forecasts are generated. Forecasts can have an impact on anomaly detection jobs, however, especially in terms of memory usage. For this reason, forecasts run only if the model memory status is acceptable.

- The anomaly detection job must be open when you create a forecast. Otherwise, an error occurs.

- If there is insufficient data to generate any meaningful predictions, an error occurs. In general, forecasts that are created early in the learning phase of the data analysis are less accurate.
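Some of these limitations can be checked on the client side before you request a forecast. The following sketch assumes that the job configuration is available as a dictionary with detectors under `analysis_config.detectors` and that an open job reports the state `opened`. The helper `forecast_blockers` is hypothetical, and it does not cover the concurrency, memory, or insufficient-data conditions, which only the cluster can evaluate.

```python
# Functions that the limitations above list as incompatible with forecasting.
UNSUPPORTED_FUNCTIONS = {"lat_long", "rare", "freq_rare", "time_of_day", "time_of_week"}


def forecast_blockers(job_config, job_state):
    """Return a list of reasons why a forecast cannot be created (empty if none found)."""
    reasons = []
    if job_state != "opened":  # assumed state name for an open job
        reasons.append("job is not open")
    detectors = job_config.get("analysis_config", {}).get("detectors", [])
    for index, detector in enumerate(detectors):
        if detector.get("over_field_name"):
            reasons.append(f"detector {index} uses over_field_name (population job)")
        function = detector.get("function")
        if function in UNSUPPORTED_FUNCTIONS:
            reasons.append(f"detector {index} uses unsupported function {function}")
    return reasons


# Hypothetical job configuration with one forecastable and one blocked detector.
job = {
    "analysis_config": {
        "detectors": [
            {"function": "mean", "field_name": "responsetime"},
            {"function": "rare", "by_field_name": "status"},
        ]
    }
}
print(forecast_blockers(job, "closed"))
```

An empty list means none of the statically checkable limitations applies; the request can still fail for the reasons that depend on cluster state.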